- Creating Custom Runtimes with Spark3, Python 3.9 o...

Created on 04-21-2023 12:00 PM - edited on 04-21-2026 12:16 AM by GrazittiAPI

Introduction:

Cloudera Data Engineering (CDE) is a robust and flexible platform for managing Data Engineering workloads. The CDE service lets you manage workload configurations, such as choosing the Spark version to use, orchestrate your DE pipelines with Airflow, and automate pipelines remotely from the command line through a rich API. At times, however, the standard CDE configurations cannot be used, for either of the following reasons:

- The workload requires a highly customized set of packages and libraries, pinned to specific versions, that must be baked together and that differs from other workloads in the CDE service.

- Package installation requires additional access (e.g. root access) that the default CDE runtime does not provide.

Such requirements are addressed by building custom runtimes and pushing them as Docker images to an external Docker registry. The CDE service pulls these images from the remote registry and creates the context by setting up the software packages and versions required to run such “special” workloads.

This article provides a step-by-step guide to creating a custom runtime image and then pulling it from a custom Docker registry to run a sample CDE workload.

Runtime Configuration / Requirement:

- Spark 3.x

- Python 3.9

- dbt (https://www.getdbt.com/)

Cloudera Documentation

Pre-requisites

Before getting started, make sure that you have the following prerequisites set up:

- Access to the Cloudera Data Engineering (CDE) service and a Virtual Cluster (VC), including read and write access to the VC (reference)

- Access to the Cloudera Data Engineering (CDE) service using the command-line tool (reference)

- Docker client installed on a local machine/laptop.

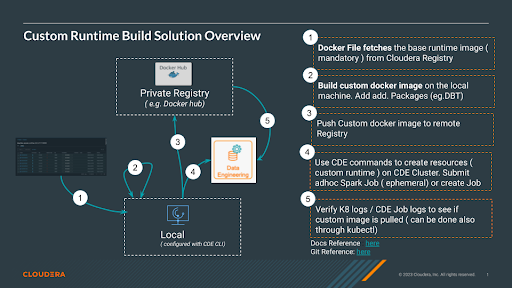

Solution Overview

The figure above describes the high-level steps of the custom runtime build solution. You must start from a Cloudera-provided runtime image as the first step in building your custom Docker image. Access to the Cloudera Docker registry requires a license, so contact your Cloudera account administrator to obtain it. Refer here for some details on the process if you have a private cloud CDP installation.

Step 0: Clone the sample code from git

On the local machine where the Docker client is installed, create a folder, clone the sample code into it, and change to the newly created directory:

$ git clone git@github.com:SuperEllipse/CDE-runtime.git

$ cd CDE-runtime

Steps 1 & 2: Fetch the image and build a custom docker image

In this step, we fetch the base image from the Cloudera repository and then customize it to include the packages and Python version we require.

Note: This requires access to the Cloudera repository. Contact your Cloudera account team to enable this if you do not have access. Once access to the repository is established, you should be able to build this Dockerfile into a Docker image. Change the Dockerfile to use your own user name, i.e. replace vishrajagopalan with <<my-user-name >>.

Name: Dockerfile

FROM container.repository.cloudera.com/cloudera/dex/dex-spark-runtime-3.2.3-7.2.15.8:1.20.0-b15

USER root

RUN groupadd -r vishrajagopalan && useradd -r -g vishrajagopalan vishrajagopalan

RUN yum install ${YUM_OPTIONS} gcc openssl-devel libffi-devel bzip2-devel wget python39 python39-devel && yum clean all && rm -rf /var/cache/yum

RUN update-alternatives --remove-all python

RUN update-alternatives --install /usr/bin/python3 python3 /usr/bin/python3.9 1

RUN rm /usr/bin/python3

RUN ln -s /usr/bin/python3.9 /usr/bin/python3

RUN yum -y install python39-pip

RUN /usr/bin/python3.9 -m pip install --upgrade pip

RUN /usr/bin/python3.9 -m pip install pandas==2.0.0 impyla==0.18.0 dbt-core==1.3.1 dbt-impala==1.3.1 dbt-hive==1.3.1 "confluent-kafka[avro,json,protobuf]==1.9.2"

ENV PYTHONPATH="${PYTHONPATH}:/usr/local/lib64/python3.9/site-packages:/usr/local/lib/python3.9/site-packages"

#RUN echo $PYTHONPATH

RUN dbt --version

RUN /usr/bin/python3.9 -c "import pandas; from impala.dbapi import connect"

USER vishrajagopalan
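The Dockerfile ends by checking that dbt runs and that pandas and impyla import cleanly. The same idea can be sketched as a small standalone Python script to run inside the built container. This is only an illustration: the script, the function names, and the mapping from pip package names to import names (impyla to impala, dbt-core to dbt, confluent-kafka to confluent_kafka) are assumptions, not part of the sample repository.

```python
import importlib.util
import sys

# Top-level import names for the pip packages installed in the Dockerfile.
# Assumed mapping: impyla -> impala, dbt-core -> dbt, confluent-kafka -> confluent_kafka.
REQUIRED_MODULES = ["pandas", "impala", "dbt", "confluent_kafka"]


def missing_modules(names):
    """Return the module names the current interpreter cannot locate."""
    return [name for name in names if importlib.util.find_spec(name) is None]


def python_is_at_least(major, minor):
    """True when the running interpreter is at least the given version."""
    return sys.version_info >= (major, minor)


if __name__ == "__main__":
    print("Python", sys.version.split()[0])
    print("Python >= 3.9:", python_is_at_least(3, 9))
    print("Missing modules:", missing_modules(REQUIRED_MODULES))
```

An empty "Missing modules" list together with a Python version of 3.9 or later suggests the image matches the runtime requirements listed at the top of this article.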

Steps To Build Dockerfile:

Execute the following steps in sequence to build and push your Docker image.

- Recommended changes: I have used my username to tag the Docker image (the -t option of the docker build command tags an image). You can use any tag name you like, but I recommend using the <<my-user-name >> you used earlier.

- The very first docker build could take up to 20 minutes, depending on network bandwidth. Subsequent builds will be much faster because Docker caches the layers and rebuilds only those that have changed.

docker build --network=host -t vishrajagopalan/dex-spark-runtime-3.2.3-7.2.15.8:1.20.0-b15-custom . -f Dockerfile
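The tag in the build command follows a simple pattern: your user name, the base image name, and the base image tag with a -custom suffix. As a rough sketch of that naming convention, the Python helper below derives the tag from the base image reference and prints the matching build command. The helper names are hypothetical and the suffix is just the one used in this article.

```python
import shlex

BASE_IMAGE = "container.repository.cloudera.com/cloudera/dex/dex-spark-runtime-3.2.3-7.2.15.8:1.20.0-b15"


def custom_image_tag(base_image, user, suffix="-custom"):
    """Derive '<user>/<image-name>:<base-tag><suffix>' from the base image reference."""
    name_and_tag = base_image.rsplit("/", 1)[-1]  # drop registry and repository path
    name, _, tag = name_and_tag.partition(":")
    if not tag:
        raise ValueError("base image reference has no tag: " + base_image)
    return f"{user}/{name}:{tag}{suffix}"


def docker_build_command(tag, dockerfile="Dockerfile", context="."):
    """Argument list equivalent to the docker build command used in this article."""
    return ["docker", "build", "--network=host", "-t", tag, context, "-f", dockerfile]


tag = custom_image_tag(BASE_IMAGE, "vishrajagopalan")
print(shlex.join(docker_build_command(tag)))
```

With the values above, the printed line is the same docker build command shown in this section. Swapping in your own user name yields the tag to reuse in the push and CDE resource steps.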

Step 3: Push docker image to remote Registry

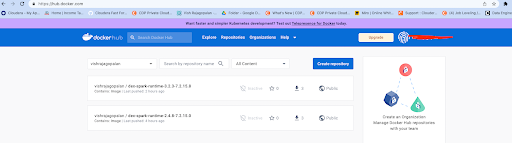

- Before you upload a Docker image, you need to log in to the Docker registry you intend to use. I used Docker Hub and logged in to it before pushing, which requires an existing Docker Hub account. The accompanying image shows how you could use the public Docker Hub (hub.docker.com) to store your image. The details vary with the Docker registry you plan to use, but being able to log in to it is critical for pushing the custom image you built above. After logging in, execute the docker push command shown below, changing the tag (i.e. replace vishrajagopalan with the tag name you want to use).

- Execute the command below only after you have successfully logged in to the registry; otherwise the push will fail.

docker push vishrajagopalan/dex-spark-runtime-3.2.3-7.2.15.8:1.20.0-b15-custom
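The ordering constraint (log in first, push only if the login succeeded) can be sketched in Python as follows. This is a minimal illustration rather than the author's procedure: the function names are hypothetical, and it assumes the docker CLI is on the PATH and that the password is supplied through docker login's --password-stdin option.

```python
import getpass
import subprocess


def docker_login_command(username, registry=None):
    """Build 'docker login' arguments; the password is read from stdin."""
    cmd = ["docker", "login", "--username", username, "--password-stdin"]
    if registry:
        cmd.append(registry)  # omit to log in to the default registry (Docker Hub)
    return cmd


def docker_push_command(tag):
    """Build the 'docker push' arguments for a previously built image tag."""
    return ["docker", "push", tag]


def login_and_push(username, tag, registry=None):
    """Log in, then push. check=True raises if login fails, so push never runs."""
    password = getpass.getpass("Registry password: ")
    subprocess.run(docker_login_command(username, registry),
                   input=password, text=True, check=True)
    subprocess.run(docker_push_command(tag), check=True)
```

Calling login_and_push("vishrajagopalan", "vishrajagopalan/dex-spark-runtime-3.2.3-7.2.15.8:1.20.0-b15-custom") would prompt for the password and then run the push command from this step.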

- After the above command completes successfully, you should be able to see the pushed Docker image in your Docker registry. Here I can see that my image, with the tag used above, has been pushed to Docker Hub.

- Once the Docker image has been pushed to a remote registry, we need to enable our CDE cluster to pull it. Note: you need to have the CDE command-line tool set up with the endpoint of the cluster on which you want to run the commands/jobs below. Refer to the Additional References section for help on this topic.

- Run the credential command below and enter the password to your Docker registry. This creates a Docker secret that CDE saves in the Kubernetes cluster for use during workload execution.

Steps 4 & 5: CDE Job runs with the custom runtime image

- Create a CDE credential that saves the Docker login information as a secret. You will be prompted for your password after you enter this command. If you use a private registry, replace hub.docker.com with its URL.

cde credential create --name docker-creds --type docker-basic --docker-server hub.docker.com --docker-username vishrajagopalan

- Run the command below to create a CDE resource of type custom-runtime-image that refers to the runtime image to be used.

cde resource create --name dex-spark-runtime-custom --image vishrajagopalan/dex-spark-runtime-3.2.3-7.2.15.8:1.20.0-b15-custom --image-engine spark3 --type custom-runtime-image
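The two commands above differ between users only in the registry server, the user name, and the image tag. As a sketch, the Python helpers below assemble them from those values using only the flags shown in this article. The function names are hypothetical. The commands are printed rather than executed because the credential command prompts for a password.

```python
import shlex


def cde_credential_command(name, server, username):
    """'cde credential create' arguments for a docker-basic credential."""
    return ["cde", "credential", "create", "--name", name, "--type", "docker-basic",
            "--docker-server", server, "--docker-username", username]


def cde_runtime_resource_command(name, image, engine="spark3"):
    """'cde resource create' arguments for a custom-runtime-image resource."""
    return ["cde", "resource", "create", "--name", name, "--image", image,
            "--image-engine", engine, "--type", "custom-runtime-image"]


user = "vishrajagopalan"  # replace with your own registry user name
image = user + "/dex-spark-runtime-3.2.3-7.2.15.8:1.20.0-b15-custom"

print(shlex.join(cde_credential_command("docker-creds", "hub.docker.com", user)))
print(shlex.join(cde_runtime_resource_command("dex-spark-runtime-custom", image)))
```

Keeping the resource name (dex-spark-runtime-custom here) in one place helps, because the same name is passed later to --runtime-image-resource-name.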

- Run the command below to execute the sample Spark script provided in the GitHub repo. This runs a Spark job in an ephemeral context, without saving any resources in the CDE cluster. If you have not cloned the GitHub repo, you can instead copy the sample Spark code into an editor (refer to the section at the end called Sample Spark Code).

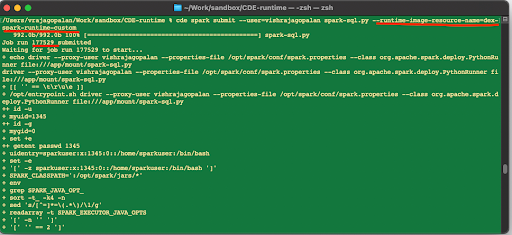

cde spark submit --user=vishrajagopalan spark-sql.py --runtime-image-resource-name=dex-spark-runtime-custom

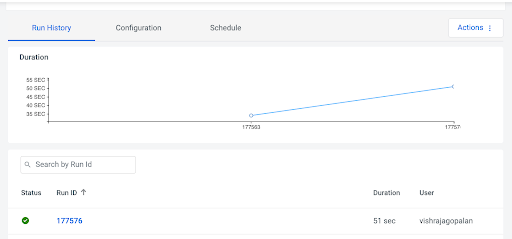

- On submission, note the job ID as shown below; you can use it to look up the run details in the CDE user interface.

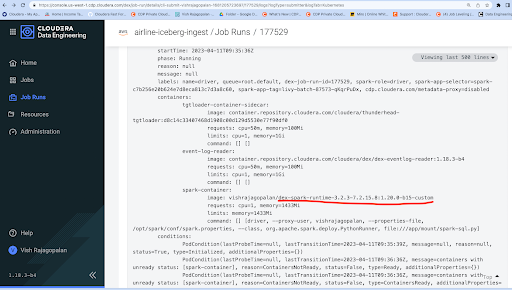

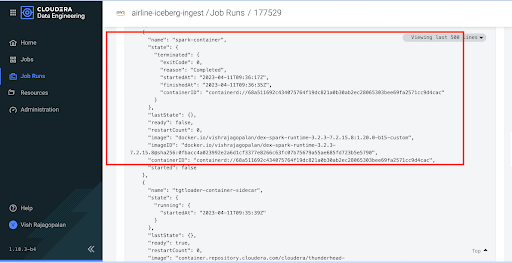

- We need to validate that CDE has indeed pulled the Docker image to run as a container. To do so, check the Spark Submitter Kubernetes logs and the job logs (see the accompanying images). Look for the image tag you used when building the image rather than the one shown here.
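If you save the submitter or job log text to a local file, the check for the image tag can be sketched as a small search. This is only an illustration: the script, its function name, and its command-line arguments are hypothetical, and it assumes nothing about the log format beyond the tag appearing somewhere on a line.

```python
import sys


def lines_mentioning(log_text, image_tag):
    """Return the stripped log lines that contain the given image tag."""
    return [line.strip() for line in log_text.splitlines() if image_tag in line]


if __name__ == "__main__" and len(sys.argv) == 3:
    # Usage (hypothetical script name): python find_image.py <saved-log-file> <image-tag>
    with open(sys.argv[1], encoding="utf-8") as handle:
        for line in lines_mentioning(handle.read(), sys.argv[2]):
            print(line)
```

No output means the tag was not found in that log, in which case it is worth checking that the job was submitted with the intended runtime image resource.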

A new job for scheduled Runs

- As an alternative to ad hoc job runs, we can create a new job and schedule a job run. In the code example below, you may need to change the path of the spark-sql.py file to its location on your local machine, and the user name to your CDP account name.

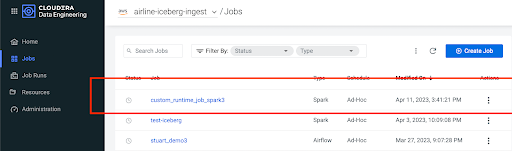

- The images show the execution of the job in the CDE user interface:

cde resource create --name sparkfiles-resource

cde resource upload --name sparkfiles-resource --local-path $HOME/Work/sandbox/CDE-runtime/spark-sql.py

cde job create --name custom_runtime_job_spark3 --type spark --mount-1-resource dex-spark-runtime-custom --mount-2-resource sparkfiles-resource --application-file spark-sql.py --user vishrajagopalan --runtime-image-resource-name dex-spark-runtime-custom

cde job run --name custom_runtime_job_spark3
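These commands must run in order: the files resource has to exist before the upload, and the job has to exist before it can be run. A minimal sketch of that sequencing in Python is shown below, using only the cde flags that appear in this article. The function names and the dry_run switch are hypothetical. By default the commands are only printed.

```python
import os
import shlex
import subprocess


def scheduled_job_commands(job_name, local_script, files_resource, runtime_resource, user):
    """Return the four cde commands from this section, in the order they must run."""
    script_name = os.path.basename(local_script)  # name of the file inside the resource
    return [
        ["cde", "resource", "create", "--name", files_resource],
        ["cde", "resource", "upload", "--name", files_resource,
         "--local-path", local_script],
        ["cde", "job", "create", "--name", job_name, "--type", "spark",
         "--mount-1-resource", runtime_resource,
         "--mount-2-resource", files_resource,
         "--application-file", script_name,
         "--user", user,
         "--runtime-image-resource-name", runtime_resource],
        ["cde", "job", "run", "--name", job_name],
    ]


def run_all(commands, dry_run=True):
    """Print each command; execute it unless dry_run is set. Stops at the first failure."""
    for cmd in commands:
        print(shlex.join(cmd))
        if not dry_run:
            subprocess.run(cmd, check=True)


commands = scheduled_job_commands(
    job_name="custom_runtime_job_spark3",
    local_script=os.path.expanduser("~/Work/sandbox/CDE-runtime/spark-sql.py"),
    files_resource="sparkfiles-resource",
    runtime_resource="dex-spark-runtime-custom",
    user="vishrajagopalan",
)
run_all(commands)  # dry run: prints the commands without executing them
```

Calling run_all(commands, dry_run=False) would execute the commands with the cde CLI, assuming it is installed and configured for your virtual cluster.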

Summary

This article demonstrates the steps needed to build a custom runtime image, push the image to a Docker registry, and use this custom runtime in a CDE workload. While this example uses Docker Hub, you can also use your organization's private registry for this purpose.

Additional References

- Creating Custom Runtime help doc: here

- Creating and Updating Docker Tokens in CDE: here

- Using CLI API to automate access to CDE: here

- Paul de Fusco’s CDE Tour: here

Sample Spark Code

Filename: spark-sql.py

- Copy this sample Spark code into your favorite editor and save it as spark-sql.py. You need this only if you have not cloned the GitHub repo.

import sys

from pyspark.sql import SparkSession
from pyspark.sql.types import Row, StructField, StructType, StringType, IntegerType

spark = SparkSession.builder.appName("PythonSQL").getOrCreate()

# A list of Rows. Infer schema from the first row, create a DataFrame and print the schema
rows = [Row(name="John", age=19), Row(name="Smith", age=23), Row(name="Sarah", age=18)]
some_df = spark.createDataFrame(rows)
some_df.printSchema()

# A list of tuples
tuples = [("John", 19), ("Smith", 23), ("Sarah", 18)]

# Schema with two fields - person_name and person_age
schema = StructType([StructField("person_name", StringType(), False),
                     StructField("person_age", IntegerType(), False)])

# Create a DataFrame by applying the schema to the list of tuples and print the schema
another_df = spark.createDataFrame(tuples, schema)
another_df.printSchema()

# Print the first column (person_name) of every row
for each in another_df.collect():
    print(each[0])

# Confirm which Python version the custom runtime is using
print("Python Version")
print(sys.version)

spark.stop()