Cloudera Community > Support > Community Articles

Customizing Atlas (Part1): Model governance, trace...


Created on 12-12-2018 05:08 PM, edited on 04-21-2026 06:26 AM by GrazittiAPI

Problem Statement: Model governance

Data science and model building are prevalent activities that bring new and innovative value to enterprises. The more prevalent this activity becomes, the more problematic model governance becomes. Model governance typically centers on these questions:

- What models were deployed? When? Where?

- Which serialized object was deployed?

- Was the model deployed to a microservice, a Spark context, or something else?

- What version was deployed?

- How do I trace the deployed model to its concrete details: the code, its training data, owner, Read.me overview, etc.?

Apache Atlas is the central tool for organizing, searching and accessing metadata about data assets and processes on your Hadoop platform. Its REST API can push metadata from anywhere, so Atlas can also represent metadata for assets that live off your Hadoop cluster.

Atlas lets you define your own types of objects and inherit from existing out-of-the-box types. This lets you store whatever metadata you want and tie it into Atlas's powerful search, classification and taxonomy framework.

In this article I show how to create a custom Model object (more specifically, a 'type') so that you govern model deployments the same way you govern the rest of your data processes and assets with Atlas. This custom Model type lets you answer all of the above questions for any model you deploy. And it does so at scale, even as your data science or complex Spark transformation models explode in number and you transform your business to enter the new data era.

In a subsequent article I integrate the Atlas work developed here into a larger model deployment framework: https://community.hortonworks.com/articles/229515/generalized-model-deployment-framework-with-apache...

A very brief primer on Atlas: types, entities, attributes, lineage and search

Core Idea

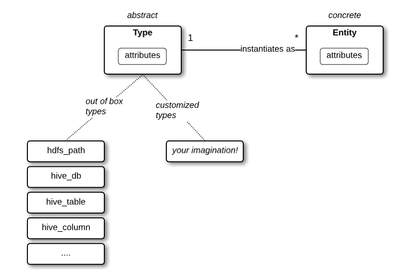

The diagram below represents the core concepts of Atlas: types, entities and attributes. (Let's save the ideas of classification and taxonomy for another day.)

A type is an abstract representation of an asset. A type has a name and attributes that hold metadata about that asset. Entities are concrete instances of a type. For example, hive_table is a type that represents any Hive table in general. When you create an actual Hive table, a new hive_table entity is created in Atlas, with attributes like table name, owner, create time, columns, external vs. managed, etc.

Atlas comes out of the box with many types, and services like Hive have hooks into Atlas that automatically create and modify entities. You can also create your own types (via the Atlas UI or REST API). After that, you are in charge of instantiating entities, which is easy to do via the REST API called from your job scheduler, deploy script or both.

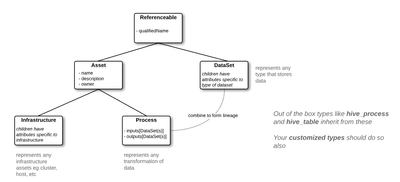

System Specific Types and Inheritance

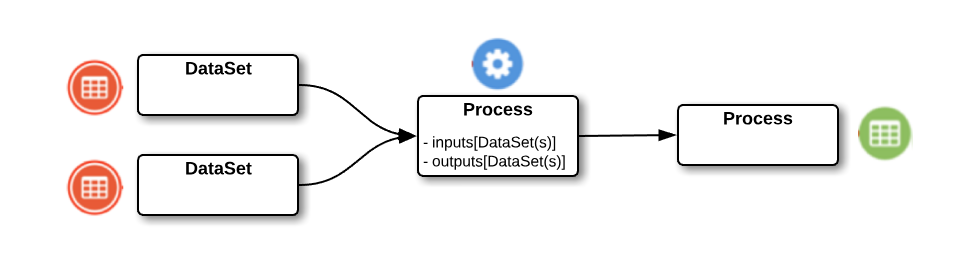

Atlas types are organized around the inheritance model below. Out-of-the-box types like hive_table inherit from it, and the customized types you create should too.

The most commonly used parent types in Atlas are DataSet (which represents any type and level of stored data) and Process (which represents transformation of data).

Lineage

Notice that Process has one attribute holding an array of input DataSets and another holding an array of output DataSets. This is how a Process links the data it reads to the new data it produces, creating lineage, as shown below.
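The same lineage can also be read back over the REST API. Below is a minimal sketch, assuming Atlas is reachable over plain HTTP on port 21000 as in the scripts in this article. The helper names `lineage_url` and `get_lineage` are hypothetical; the `/api/atlas/v2/lineage/{guid}` endpoint and its `direction` (INPUT, OUTPUT or BOTH) and `depth` query parameters belong to the Atlas v2 REST API.

```shell
#!/bin/bash
# Hypothetical helper: print the Atlas v2 lineage URL for an entity guid.
# Assumes plain HTTP on port 21000, as in the scripts in this article.
# Usage: lineage_url <atlas-host> <guid> [INPUT|OUTPUT|BOTH] [depth]
lineage_url() {
  local host=$1 guid=$2 direction=${3:-BOTH} depth=${4:-3}
  case "$direction" in
    INPUT|OUTPUT|BOTH) ;;
    *) echo "direction must be INPUT, OUTPUT or BOTH" >&2; return 1 ;;
  esac
  printf 'http://%s:21000/api/atlas/v2/lineage/%s?direction=%s&depth=%s\n' \
    "$host" "$guid" "$direction" "$depth"
}

# Hypothetical helper: fetch the lineage graph (JSON) of an entity.
# Usage: get_lineage <user:password> <atlas-host> <guid> [direction] [depth]
get_lineage() {
  local creds=$1 url
  url=$(lineage_url "$2" "$3" "${4:-BOTH}" "${5:-3}") || return 1
  curl -s -u "$creds" "$url"
}
```

For example, `get_lineage admin:secret atlas.example.com <guid> INPUT 2` would request the upstream graph two hops deep (the credentials and host are placeholders).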

Search

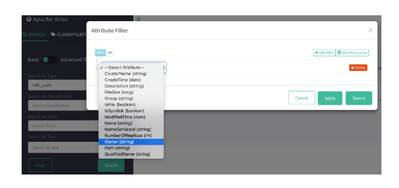

Now that Atlas is filled with types, entities and lineages ... how do you make sense of it all?

Atlas has extremely powerful search constructs that let you find entities by attribute values (you can assemble AND/OR constructs among the attributes of a type, using operators such as equals and contains). And of course, anything performed in the UI can also be done through the REST API.
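As a sketch of such an attribute search through the REST API, the function below builds a request body for the Atlas v2 basic-search endpoint (`POST /api/atlas/v2/search/basic`), combining equality criteria with AND on attributes of the 'model' type defined later in this article. The helper names are hypothetical, plain HTTP on port 21000 is assumed, and attribute values are assumed to contain no double quotes or backslashes.

```shell
#!/bin/bash
# Hypothetical helper: print a basic-search request body that finds 'model'
# entities matching ALL of the given attribute=value pairs.
# Assumes names/values contain no double quotes or backslashes.
# Usage: model_search_payload <attr> <value> [<attr> <value> ...]
model_search_payload() {
  if [ "$#" -eq 0 ] || [ $(( $# % 2 )) -ne 0 ]; then
    echo "expected attribute/value pairs" >&2
    return 1
  fi
  local criteria="" sep=""
  while [ "$#" -gt 0 ]; do
    criteria+="${sep}{\"attributeName\": \"$1\", \"operator\": \"=\", \"attributeValue\": \"$2\"}"
    sep=", "
    shift 2
  done
  printf '{"typeName": "model", "excludeDeletedEntities": true, "limit": 25, "offset": 0, "entityFilters": {"condition": "AND", "criterion": [%s]}}\n' "$criteria"
}

# Hypothetical helper: POST the payload to the Atlas v2 basic-search endpoint.
# Usage: search_models <user:password> <atlas-host> <attr> <value> [...]
search_models() {
  local creds=$1 host=$2 payload
  shift 2
  payload=$(model_search_payload "$@") || return 1
  curl -s -u "$creds" -H "Content-Type: application/json" -X POST \
    "http://${host}:21000/api/atlas/v2/search/basic" -d "$payload"
}
```

Setting `"condition"` to `"OR"`, or using the `contains` operator instead of `=`, gives the other constructs mentioned above.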

Customizing Atlas for model governance

My approach: I first review a customized Model type and then show how to implement it. Implementation comes in two steps: (1) create the custom Model type, and then (2) instantiate it with Model entities as they are deployed in your environment.

I make a distinction between models that are (a) deployed on Hadoop in a data pipeline processing architecture (e.g. complex Spark transformation or data engineering models) and (b) deployed in a microservices or Machine Learning environment. In the first case data lineage makes sense (there is a clear input, transformation, output pipeline), whereas in the second it does not (it is more of a request-response model with high-throughput requests).

I also show the implementation first as hard-coded examples and then as an operational example where values are set dynamically at deploy time. In a subsequent article I implement the customized Model type in a fully automated model deployment and governance framework.

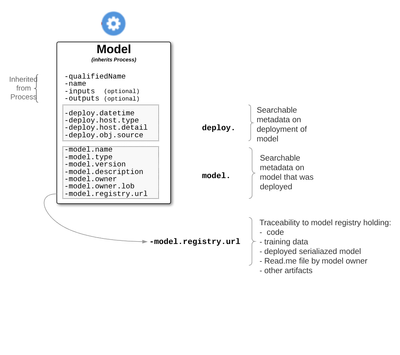

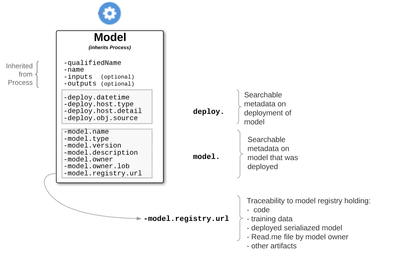

Customized Atlas Type: Model

The customized model type is shown in the diagram below. You can of course exclude attributes shown here or include new ones as appropriate for your needs.

Key features are:

- deploy.: attributes starting with deploy describe metadata about the model deployment runtime

  - deploy.datetime: the date and time the model was deployed

  - deploy.host.type: type of hosting environment for the deployed model (e.g. microservice, hadoop)

  - deploy.host.detail: specifically where the model was deployed (e.g. microservice endpoint, Hadoop cluster)

  - deploy.obj.source: location of the serialized model that was deployed

- model.: attributes describing the model that was deployed (mostly self-explanatory)

  - model.registry.url: provides traceability to model details; points to the model registry holding model artifacts including code, training data, Read.me by owner, etc.
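Two of these values follow a convention in this article's examples: deploy.datetime is written like `2018-12-05_15:26:41EST`, and the entity name and qualifiedName are `<model.name>-v<model.version>` and `model:<name>@<deploy.host.detail>`. Here is a small sketch of hypothetical helpers that produce them at deploy time, assuming that convention; the zone abbreviation comes from `date`'s `%Z` and therefore depends on the host's `TZ` setting.

```shell
#!/bin/bash
# Hypothetical helper: deploy timestamp in the style of this article's examples,
# e.g. 2018-12-05_15:26:41EST (the %Z part depends on the host's TZ setting).
deploy_datetime() {
  date '+%Y-%m-%d_%H:%M:%S%Z'
}

# Hypothetical helper: entity name following the examples' convention,
# <model.name>-v<model.version>
model_entity_name() {
  printf '%s-v%s\n' "$1" "$2"
}

# Hypothetical helper: qualifiedName following the examples' convention,
# model:<model.name>-v<model.version>@<deploy.host.detail>
# Usage: model_qualified_name <model.name> <model.version> <deploy.host.detail>
model_qualified_name() {
  printf 'model:%s@%s\n' "$(model_entity_name "$1" "$2")" "$3"
}
```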

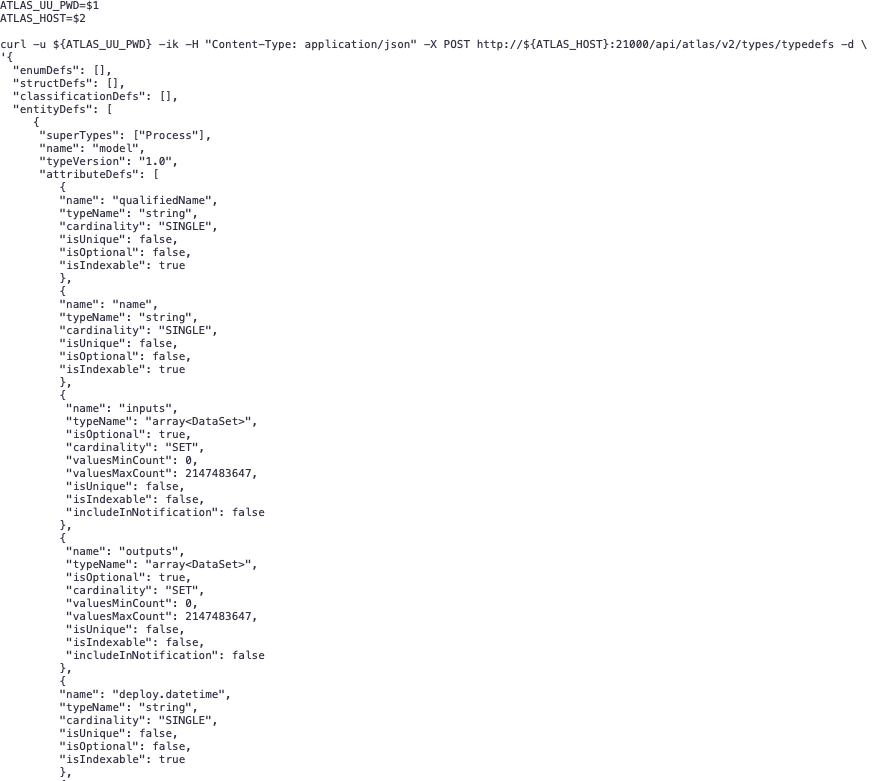

Step 1: Create customized model type (one-time operation)

Use the REST API by running the curl command below with its JSON payload.

```bash
#!/bin/bash
# Creates the custom 'model' entity type (run once).
set -euo pipefail

ATLAS_UU_PWD=${1:?usage: $0 <user:password> <atlas-host>}   # Atlas credentials as user:password
ATLAS_HOST=${2:?usage: $0 <user:password> <atlas-host>}     # Atlas server host (default port 21000)

# superTypes Process: 'model' inherits qualifiedName, name, inputs and outputs;
# the deploy.* and model.* attributes are the custom additions.
curl -u "${ATLAS_UU_PWD}" -ik -H "Content-Type: application/json" -X POST "http://${ATLAS_HOST}:21000/api/atlas/v2/types/typedefs" -d '{
  "enumDefs": [],
  "structDefs": [],
  "classificationDefs": [],
  "entityDefs": [
    {
      "superTypes": ["Process"],
      "name": "model",
      "typeVersion": "1.0",
      "attributeDefs": [
        {"name": "qualifiedName", "typeName": "string", "cardinality": "SINGLE", "isUnique": false, "isOptional": false, "isIndexable": true},
        {"name": "name", "typeName": "string", "cardinality": "SINGLE", "isUnique": false, "isOptional": false, "isIndexable": true},
        {"name": "inputs", "typeName": "array<DataSet>", "isOptional": true, "cardinality": "SET", "valuesMinCount": 0, "valuesMaxCount": 2147483647, "isUnique": false, "isIndexable": false, "includeInNotification": false},
        {"name": "outputs", "typeName": "array<DataSet>", "isOptional": true, "cardinality": "SET", "valuesMinCount": 0, "valuesMaxCount": 2147483647, "isUnique": false, "isIndexable": false, "includeInNotification": false},
        {"name": "deploy.datetime", "typeName": "string", "cardinality": "SINGLE", "isUnique": false, "isOptional": false, "isIndexable": true},
        {"name": "deploy.host.type", "typeName": "string", "cardinality": "SINGLE", "isUnique": false, "isOptional": false, "isIndexable": true},
        {"name": "deploy.host.detail", "typeName": "string", "cardinality": "SINGLE", "isUnique": false, "isOptional": false, "isIndexable": true},
        {"name": "deploy.obj.source", "typeName": "string", "cardinality": "SINGLE", "isUnique": false, "isOptional": false, "isIndexable": true},
        {"name": "model.name", "typeName": "string", "cardinality": "SINGLE", "isUnique": false, "isOptional": false, "isIndexable": true},
        {"name": "model.version", "typeName": "string", "cardinality": "SINGLE", "isUnique": false, "isOptional": false, "isIndexable": true},
        {"name": "model.type", "typeName": "string", "cardinality": "SINGLE", "isUnique": false, "isOptional": false, "isIndexable": true},
        {"name": "model.description", "typeName": "string", "cardinality": "SINGLE", "isUnique": false, "isOptional": false, "isIndexable": true},
        {"name": "model.owner", "typeName": "string", "cardinality": "SINGLE", "isUnique": false, "isOptional": false, "isIndexable": true},
        {"name": "model.owner.lob", "typeName": "string", "cardinality": "SINGLE", "isUnique": false, "isOptional": false, "isIndexable": true},
        {"name": "model.registry.url", "typeName": "string", "cardinality": "SINGLE", "isUnique": false, "isOptional": false, "isIndexable": true}
      ]
    }
  ]
}'
```

Notice that we are (a) using the superType 'Process', (b) naming the type 'model', and (c) declaring the new attributes in the same attributeDefs construct as the ones inherited through Process (qualifiedName, name, inputs, outputs).

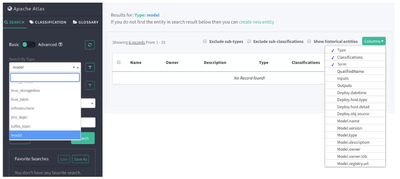

Step 1 result

When we go to the Atlas UI we see the 'model' type listed with the other types, and we see the customized attribute fields in the Columns drop-down.
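Because Step 1 is a one-time operation, a deploy script may want to confirm that the type already exists before trying to create it again. Below is a minimal sketch using the Atlas v2 `GET /api/atlas/v2/types/typedef/name/{name}` endpoint. The helper names are hypothetical, plain HTTP on port 21000 is assumed, and so is the status mapping: 200 for a defined type and 404 for an unknown one.

```shell
#!/bin/bash
# Hypothetical helper: print only the HTTP status code of a typedef lookup.
# Usage: typedef_status <user:password> <atlas-host> <type-name>
typedef_status() {
  curl -s -o /dev/null -w '%{http_code}' -u "$1" \
    "http://$2:21000/api/atlas/v2/types/typedef/name/$3"
}

# Hypothetical helper: return 0 if the type exists, 1 if Atlas reports it
# unknown (assumed to be HTTP 404), 2 on any other response.
# Usage: model_type_exists <user:password> <atlas-host> [type-name]
model_type_exists() {
  local status
  status=$(typedef_status "$1" "$2" "${3:-model}")
  case "$status" in
    200) return 0 ;;
    404) return 1 ;;
    *) echo "unexpected HTTP status from Atlas: ${status}" >&2; return 2 ;;
  esac
}
```

Distinguishing "unknown type" (1) from "unexpected response" (2) keeps a script from re-running the type creation just because Atlas was unreachable.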

Step 2: Create model entity (each time you deploy model or new model version)

Example 1: With lineage (for clear input/process/output processing of data)

Notice that two DataSets (type 'hdfs_path') are inputs to the model and one is an output, each identified by its Atlas guid.

```bash
#!/bin/bash
# Creates one 'model' entity with lineage: two hdfs_path inputs, one hdfs_path output.
set -euo pipefail

ATLAS_UU_PWD=${1:?usage: $0 <user:password> <atlas-host>}   # Atlas credentials as user:password
ATLAS_HOST=${2:?usage: $0 <user:password> <atlas-host>}     # Atlas server host (default port 21000)

curl -u "${ATLAS_UU_PWD}" -ik -H "Content-Type: application/json" -X POST "http://${ATLAS_HOST}:21000/api/atlas/v2/entity/bulk" -d '{
  "entities": [
    {
      "typeName": "model",
      "attributes": {
        "qualifiedName": "model:disease-risk-HAIL-v2.8@ProdCluster",
        "name": "disease-risk-HAIL-v2.8",
        "deploy.datetime": "2018-12-05_15:26:41EST",
        "deploy.host.type": "hadoop",
        "deploy.host.detail": "ProdCluster",
        "deploy.obj.source": "hdfs://prod0.genomicscompany.com/model-registry/genomics/disease-risk-HAIL-v2.8/Docker",
        "model.name": "disease-risk-HAIL",
        "model.type": "Spark HAIL",
        "model.version": "2.8",
        "model.description": "disease risk prediction for sequenced blood sample",
        "model.owner": "Srinivas Kumar",
        "model.owner.lob": "genomic analytics group",
        "model.registry.url": "hdfs://prod0.genomicscompany.com/model-registry/genomics/disease-risk-HAIL-v2.8",
        "inputs": [
          {"guid": "cf90bb6a-c946-48c8-aaff-a3b132a36620", "typeName": "hdfs_path"},
          {"guid": "70d35ffc-5c64-4ec1-8c86-110b5bade70d", "typeName": "hdfs_path"}
        ],
        "outputs": [{"guid": "caab7a23-6b30-4c66-98f1-b2319841150e", "typeName": "hdfs_path"}]
      }
    }
  ]
}'
```
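The guids in Example 1 must belong to hdfs_path entities already registered in Atlas. One way to find such a guid is to look the entity up by its unique qualifiedName through the Atlas v2 `GET /api/atlas/v2/entity/uniqueAttribute/type/{typeName}` endpoint with an `attr:qualifiedName=` query parameter. Below is a minimal sketch with hypothetical helper names, assuming plain HTTP on port 21000; the qualifiedName is percent-encoded because it typically contains characters such as `:`, `/` and `@`.

```shell
#!/bin/bash
# Hypothetical helper: percent-encode a string for use as a URL query value
# (unreserved characters A-Z a-z 0-9 . _ ~ - are left as-is).
urlencode() {
  local LC_ALL=C s=$1 out="" c i
  for (( i = 0; i < ${#s}; i++ )); do
    c=${s:i:1}
    case "$c" in
      [A-Za-z0-9._~-]) out+=$c ;;
      *) printf -v c '%%%02X' "'$c"; out+=$c ;;
    esac
  done
  printf '%s\n' "$out"
}

# Hypothetical helper: Atlas v2 URL that looks up an hdfs_path entity by its
# unique qualifiedName (assumes plain HTTP on port 21000).
hdfs_path_lookup_url() {
  printf 'http://%s:21000/api/atlas/v2/entity/uniqueAttribute/type/hdfs_path?attr:qualifiedName=%s\n' \
    "$1" "$(urlencode "$2")"
}

# Hypothetical helper: print the entity JSON for one hdfs_path.
# Usage: lookup_hdfs_path <user:password> <atlas-host> <qualifiedName>
lookup_hdfs_path() {
  curl -s -u "$1" "$(hdfs_path_lookup_url "$2" "$3")"
}
```

The response wraps the entity in an `entity` object, whose `guid` field is the value to place in the inputs or outputs array.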

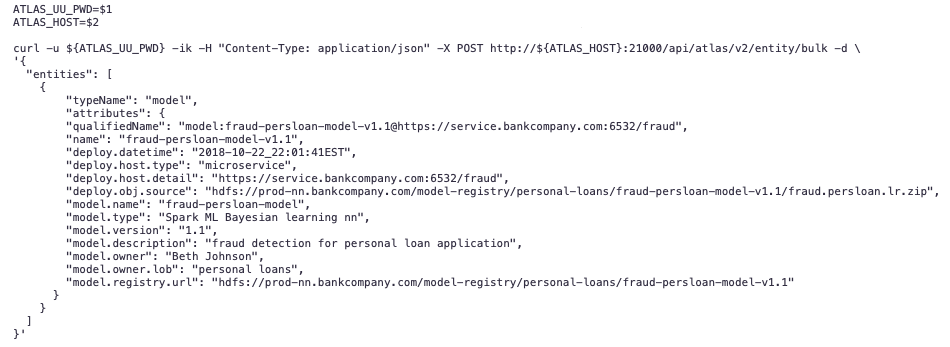

Example 2: No lineage (for request-response types of model, e.g. microservices or ML scoring)

Similar to the above, but with no inputs or outputs specified.

```bash
#!/bin/bash
# Creates one 'model' entity without lineage (no inputs/outputs).
set -euo pipefail

ATLAS_UU_PWD=${1:?usage: $0 <user:password> <atlas-host>}   # Atlas credentials as user:password
ATLAS_HOST=${2:?usage: $0 <user:password> <atlas-host>}     # Atlas server host (default port 21000)

curl -u "${ATLAS_UU_PWD}" -ik -H "Content-Type: application/json" -X POST "http://${ATLAS_HOST}:21000/api/atlas/v2/entity/bulk" -d '{
  "entities": [
    {
      "typeName": "model",
      "attributes": {
        "qualifiedName": "model:fraud-persloan-model-v1.1@https://service.bankcompany.com:6532/fraud",
        "name": "fraud-persloan-model-v1.1",
        "deploy.datetime": "2018-10-22_22:01:41EST",
        "deploy.host.type": "microservice",
        "deploy.host.detail": "https://service.bankcompany.com:6532/fraud",
        "deploy.obj.source": "hdfs://prod-nn.bankcompany.com/model-registry/personal-loans/fraud-persloan-model-v1.1/fraud.persloan.lr.zip",
        "model.name": "fraud-persloan-model",
        "model.type": "Spark ML Bayesian learning nn",
        "model.version": "1.1",
        "model.description": "fraud detection for personal loan application",
        "model.owner": "Beth Johnson",
        "model.owner.lob": "personal loans",
        "model.registry.url": "hdfs://prod-nn.bankcompany.com/model-registry/personal-loans/fraud-persloan-model-v1.1"
      }
    }
  ]
}'
```
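The only structural difference between the two examples is whether inputs and outputs are populated. A deploy script that serves both kinds of models could build those arrays from whatever guids it has, in the same `{"guid": ..., "typeName": ...}` form as Example 1, and leave the attributes out for request-response models. Below is a sketch with a hypothetical helper name; it also assumes that the guids of existing entities are lowercase UUIDs like those in Example 1.

```shell
#!/bin/bash
# Hypothetical helper: print a JSON array of Atlas object references for the
# "inputs" or "outputs" attribute; with no guids it prints [].
# Assumes guids of existing entities are lowercase UUIDs, as in Example 1.
# Usage: object_id_array <typeName> [guid ...]
object_id_array() {
  local type_name=$1 out="" sep="" guid
  shift
  local uuid_re='^[0-9a-f]{8}-[0-9a-f]{4}-[0-9a-f]{4}-[0-9a-f]{4}-[0-9a-f]{12}$'
  for guid in "$@"; do
    if [[ ! $guid =~ $uuid_re ]]; then
      echo "not a guid: ${guid}" >&2
      return 1
    fi
    out+="${sep}{\"guid\": \"${guid}\", \"typeName\": \"${type_name}\"}"
    sep=", "
  done
  printf '[%s]\n' "$out"
}
```

Inside a single-quoted payload like Example 1's, `"inputs": '"$(object_id_array hdfs_path "$IN_GUID_1" "$IN_GUID_2")"',` would splice the array in (`IN_GUID_1` and `IN_GUID_2` are hypothetical variables).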

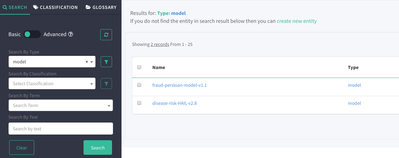

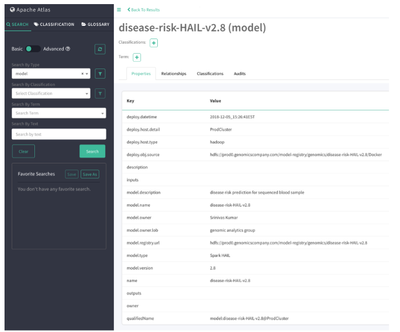

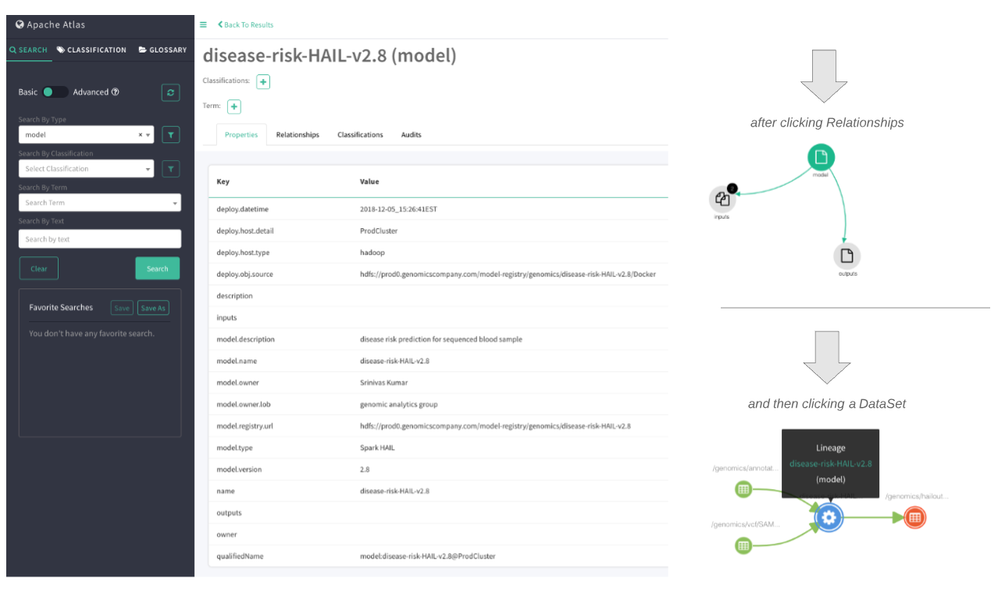

Step 2 result

Now we can search the 'model' type in the UI and see the results (below).

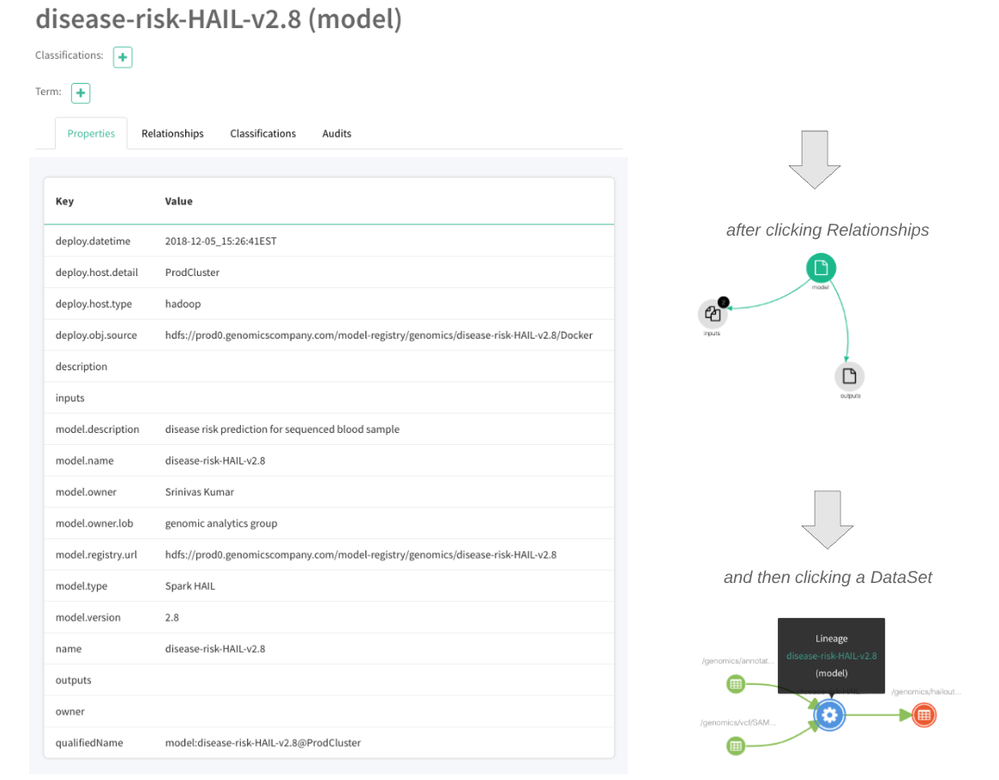

When we click on 'disease-risk-HAIL-v2.8' we see its attribute values. Clicking Relationships shows the image on the left; clicking a DataSet in the relationship then shows the lineage on the right (below).

For models deployed with no input or output values, the result is similar to the above, but neither 'Relationships' nor 'Lineage' is created.

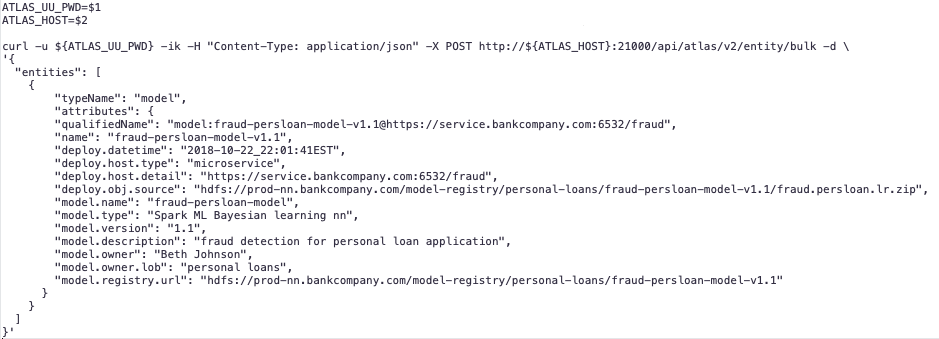

A Note on Operationalizing

The above entity creation was done using hardcoded values. However, in an operational environment these values will be created dynamically for each model deployment (entity creation). In this case the values are gathered by the orchestrator or deploy script, or both, and passed to the curl command. It will look something like this.

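```bash
#!/bin/bash
# Creates a 'model' entity from values supplied at deploy time (e.g. by an orchestrator).
# Arguments:
#   $1  user:password       $2  Atlas host          $3  entity name (e.g. <model.name>-v<version>)
#   $4  deploy.datetime     $5  deploy.host.type    $6  deploy.host.detail
#   $7  deploy.obj.source   $8  model.name          $9  model.type
#   $10 model.version       $11 model.description   $12 model.owner
#   $13 model.owner.lob     $14 model.registry.url
set -euo pipefail
if [ "$#" -ne 14 ]; then
  echo "usage: $0 <14 arguments, see header>" >&2
  exit 1
fi

ATLAS_UU_PWD=$1
ATLAS_HOST=$2

curl -u "${ATLAS_UU_PWD}" -ik -H "Content-Type: application/json" -X POST "http://${ATLAS_HOST}:21000/api/atlas/v2/entity/bulk" -d '{
  "entities": [
    {
      "typeName": "model",
      "attributes": {
        "qualifiedName": "model:'"${3}"'@'"${6}"'",
        "name": "'"${3}"'",
        "deploy.datetime": "'"${4}"'",
        "deploy.host.type": "'"${5}"'",
        "deploy.host.detail": "'"${6}"'",
        "deploy.obj.source": "'"${7}"'",
        "model.name": "'"${8}"'",
        "model.type": "'"${9}"'",
        "model.version": "'"${10}"'",
        "model.description": "'"${11}"'",
        "model.owner": "'"${12}"'",
        "model.owner.lob": "'"${13}"'",
        "model.registry.url": "'"${14}"'"
      }
    }
  ]
}'
```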

Notice the careful use of single and double quotes around each shell variable above. The single quotes close and then reopen the single-quoted JSON string around each variable, and the double quotes around the variable prevent word splitting, so values containing spaces stay intact.
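Those quotes protect spaces, but a value that itself contains a double quote, a backslash or a newline (a model description, say) would still produce invalid JSON. Below is a minimal sketch of a hypothetical `json_escape` helper that escapes those characters, assuming bash. It does not escape other control characters.

```shell
#!/bin/bash
# Hypothetical helper: escape a value for use inside a JSON string literal.
# Handles backslash, double quote, newline, carriage return and tab only;
# other control characters are passed through unescaped.
json_escape() {
  local s=$1 out="" c i
  for (( i = 0; i < ${#s}; i++ )); do
    c=${s:i:1}
    case "$c" in
      '\') out+='\\' ;;
      '"') out+='\"' ;;
      $'\n') out+='\n' ;;
      $'\r') out+='\r' ;;
      $'\t') out+='\t' ;;
      *) out+=$c ;;
    esac
  done
  printf '%s' "$out"
}
```

In the script above, `"'"${11}"'"` would then become `"'"$(json_escape "${11}")"'"`, and likewise for the other arguments.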

Summary: What have we accomplished?

We have:

- centralized the governance of data science models (and any complex Spark or other code) in the same Atlas metadata framework we use for the rest of our deployed systems

- leveraged Atlas search against model attributes to make sense of these model deployments

- traced a model's deployment to its concrete artifacts (code, training data, serialized deploy object, model owner Read.me file, etc.) stored in a model registry

Data science ... you have now been formally governed along with the rest of the data world 🙂

Crank out more models ... we'll take care of the rest!

References

- Atlas Overview

- Using Apache Atlas on HDP3.0

- Apache Atlas

- Atlas Type System

- Atlas REST API

- Misc HCC articles

  - https://community.hortonworks.com/articles/136800/atlas-entitytag-attribute-based-searches.html

  - https://community.hortonworks.com/articles/58932/understanding-taxonomy-in-apache-atlas.html

  - https://community.hortonworks.com/articles/136784/intro-to-apache-atlas-tags-and-lineage.html

  - https://community.hortonworks.com/articles/36121/using-apache-atlas-to-view-data-lineage.html

  - https://community.hortonworks.com/articles/63468/atlas-rest-api-search-techniques.html (uses v1 of the API)

  - https://community.hortonworks.com/articles/229220/adding-atlas-classification-tags-during-data-inges...

- Implementing the ideas here into a model deployment framework: https://community.hortonworks.com/articles/229515/generalized-model-deployment-framework-with-apache...

Acknowledgements

Appreciation to the Hortonworks Data Governance and Data Science SME groups for their feedback on this idea. Particular appreciation to @Ian B and @Willie Engelbrecht for their deep attention and interest.

Created on 12-11-2024 02:19 AM


I don't understand what the benefit of doing it this way is. As far as I know, when creating a table in Hive, a new entity of type hive_table is automatically created in Atlas. This happens automatically, in contrast to your manual approach. Am I misunderstanding something? Could you please explain it to me?