Falcon Hive Integration


Created on 06-08-2016 01:43 AM - edited 08-17-2019 12:10 PM

I am sharing this again with the configurations and prerequisites that were missing from the example above.

This was done using HDP version 2.3.4 on both the source and target clusters.

Prerequisites:

Source and target clusters should be up and running with the required services.

The HDP stack services required on both clusters are HDFS, YARN, Falcon, Hive, Oozie, Pig, Tez and ZooKeeper.

Staging and working directories should be present on HDFS and owned by the falcon user.

To create them, run the commands below on both the source and target clusters as the falcon user.

[falcon@src1 ~]$ hdfs dfs -mkdir /apps/falcon/staging /apps/falcon/working
[falcon@src1 ~]$ hdfs dfs -chmod 777 /apps/falcon/staging
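The directory setup can also be written so that it is safe to rerun. The following is only a sketch under stated assumptions: `ensure_falcon_dirs` is a hypothetical helper name, the `hdfs` client is assumed to be on the PATH, and the 755 mode on the working directory comes from the Falcon entity specification rather than from the commands above.

```shell
# Hypothetical helper: create Falcon's staging and working directories
# only when they are missing, then set their permissions.
# Defaults match the paths used in the cluster entities in this article.
ensure_falcon_dirs() {
  staging="${1:-/apps/falcon/staging}"
  working="${2:-/apps/falcon/working}"
  for dir in "$staging" "$working"; do
    # `hdfs dfs -test -d` exits 0 when the directory already exists.
    if ! hdfs dfs -test -d "$dir"; then
      hdfs dfs -mkdir -p "$dir" || return 1
    fi
  done
  # Staging is world-writable, as in the article.
  hdfs dfs -chmod 777 "$staging" || return 1
  # Assumption: 755 on working, per the Falcon entity specification.
  hdfs dfs -chmod 755 "$working" || return 1
  # Print owner and mode of both directories for a visual check.
  hdfs dfs -ls -d "$staging" "$working"
}
```

Running `ensure_falcon_dirs` with no arguments as the falcon user applies it to the paths used in this article.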

Create the source and target cluster entities using the Falcon CLI as shown below:

For source cluster:

falcon entity -type cluster -submit -file source-cluster.xml

source-cluster.xml:

<?xml version="1.0" encoding="UTF-8" standalone="yes"?>
<cluster name="source" description="primary" colo="primary" xmlns="uri:falcon:cluster:0.1">
    <tags>EntityType=Cluster</tags>
    <interfaces>
        <interface type="readonly" endpoint="hdfs://src-nameNode:8020" version="2.2.0"/>
        <interface type="write" endpoint="hdfs://src-nameNode:8020" version="2.2.0"/>
        <interface type="execute" endpoint="src-resourceManager:8050" version="2.2.0"/>
        <interface type="workflow" endpoint="http://src-oozieServer:11000/oozie/" version="4.0.0"/>
        <interface type="messaging" endpoint="tcp://src-falconServer:61616?daemon=true" version="5.1.6"/>
        <interface type="registry" endpoint="thrift://src-hiveMetaServer:9083" version="1.2.1" />
    </interfaces>
    <locations>
        <location name="staging" path="/apps/falcon/staging"/>
        <location name="temp" path="/tmp"/>
        <location name="working" path="/apps/falcon/working"/>
    </locations>
    <ACL owner="ambari-qa" group="users" permission="0x755"/>
</cluster>

For target cluster:

falcon entity -type cluster -submit -file target-cluster.xml

target-cluster.xml:

<?xml version="1.0" encoding="UTF-8" standalone="yes"?>
<cluster name="target" description="target" colo="backup" xmlns="uri:falcon:cluster:0.1">
    <tags>EntityType=Cluster</tags>
    <interfaces>
        <interface type="readonly" endpoint="hdfs://tgt-nameNode:8020" version="2.2.0"/>
        <interface type="write" endpoint="hdfs://tgt-nameNode:8020" version="2.2.0"/>
        <interface type="execute" endpoint="tgt-resourceManager:8050" version="2.2.0"/>
        <interface type="workflow" endpoint="http://tgt-oozieServer:11000/oozie/" version="4.0.0"/>
        <interface type="messaging" endpoint="tcp://tgt-falconServer:61616?daemon=true" version="5.1.6"/>
        <interface type="registry" endpoint="thrift://tgt-hiveMetaServer:9083" version="1.2.1" />
    </interfaces>
    <locations>
        <location name="staging" path="/apps/falcon/staging"/>
        <location name="temp" path="/tmp"/>
        <location name="working" path="/apps/falcon/working"/>
    </locations>
    <ACL owner="ambari-qa" group="users" permission="0x755"/>
</cluster>
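Before moving on, it can help to confirm that both cluster entities were accepted. The sketch below wraps `falcon entity -type cluster -list`; `check_cluster_entities` is a hypothetical helper name, and it assumes only that the listing contains each entity name as a separate word, since the exact output format may differ between Falcon versions.

```shell
# Hypothetical helper: report whether each named cluster entity
# appears in Falcon's cluster listing.
check_cluster_entities() {
  listing="$(falcon entity -type cluster -list)" || return 1
  missing=0
  for name in "$@"; do
    # Assumption: the entity name appears as a whole word in the listing.
    if printf '%s\n' "$listing" | grep -qw -- "$name"; then
      echo "found: $name"
    else
      echo "missing: $name"
      missing=1
    fi
  done
  # Non-zero exit status when at least one entity was not found.
  return "$missing"
}
```

For the entities in this article the call would be `check_cluster_entities source target`.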

Create the source database and table, and insert some data:

Run on the source cluster's Hive:

create database landing_db;
use landing_db;
CREATE TABLE summary_table(id int, value string) PARTITIONED BY (ds string);
----------------------------------------------------------------------------------------
ALTER TABLE summary_table ADD PARTITION (ds = '2014-01');
ALTER TABLE summary_table ADD PARTITION (ds = '2014-02');
ALTER TABLE summary_table ADD PARTITION (ds = '2014-03');
----------------------------------------------------------------------------------------
insert into summary_table PARTITION(ds) values (1,'abc1',"2014-01");
insert into summary_table PARTITION(ds) values (2,'abc2',"2014-02");
insert into summary_table PARTITION(ds) values (3,'abc3',"2014-03");
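To confirm that the source table has the partitions the feed will replicate, the partition list can be checked from the shell. This is a minimal sketch, assuming the `hive` CLI is available on the node and that `SHOW PARTITIONS` prints one `ds=...` value per line; `list_partitions` and `expect_partitions` are hypothetical helper names.

```shell
# Hypothetical helper: print the partitions Hive reports for db.table,
# one per line. -S runs the CLI in silent mode, -e runs the given statement.
list_partitions() {
  hive -S -e "SHOW PARTITIONS $1.$2;"
}

# Hypothetical helper: check that every expected partition spec is present.
# Usage: expect_partitions <db> <table> <spec>...
expect_partitions() {
  db="$1"; table="$2"; shift 2
  found="$(list_partitions "$db" "$table")" || return 1
  for spec in "$@"; do
    # -x requires the whole line to match the partition spec.
    if ! printf '%s\n' "$found" | grep -qx -- "$spec"; then
      echo "missing partition: $spec"
      return 1
    fi
  done
  echo "all partitions present"
}
```

For the table above the call would be `expect_partitions landing_db summary_table ds=2014-01 ds=2014-02 ds=2014-03`.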

Create the target database and table.

Run on the target cluster's Hive:

create database archive_db;
use archive_db;
CREATE TABLE summary_archive_table(id int, value string) PARTITIONED BY (ds string);

Submit feed entity (do not schedule):

falcon entity -type feed -submit -file replication-feed.xml

replication-feed.xml:

<?xml version="1.0" encoding="UTF-8"?>
<feed description="Monthly Analytics Summary" name="replication-feed" xmlns="uri:falcon:feed:0.1">
    <tags>EntityType=Feed</tags>
    <frequency>months(1)</frequency>
    <clusters>
        <cluster name="source" type="source">
            <validity start="2014-01-01T00:00Z" end="2015-03-31T00:00Z"/>
            <retention limit="months(36)" action="delete"/>
        </cluster>
        <cluster name="target" type="target">
            <validity start="2014-01-01T00:00Z" end="2015-03-31T00:00Z"/>
            <retention limit="months(180)" action="delete"/>
            <table uri="catalog:archive_db:summary_archive_table#ds=${YEAR}-${MONTH}" />
        </cluster>
    </clusters>
    <table uri="catalog:landing_db:summary_table#ds=${YEAR}-${MONTH}" />
    <ACL owner="falcon" />
    <schema location="hcat" provider="hcat"/>
</feed>

The example feed entity above demonstrates the following:

- Cross-cluster replication of a Data Set

- The native use of a Hive/HCatalog table in Falcon

- The definition of a separate retention policy for the source and target tables in replication.
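The `ds=${YEAR}-${MONTH}` part of the table URI is a template that is filled in per feed instance. Falcon performs this substitution itself; the sketch below only illustrates the resulting partition names, assuming one instance per month. `monthly_partitions` is a hypothetical helper name, and both of its arguments are treated as inclusive `YYYY-MM` bounds chosen for this illustration.

```shell
# Hypothetical helper: print the partition spec for each month from
# the first YYYY-MM argument to the second, both inclusive.
monthly_partitions() {
  year="${1%-*}"; month="${1#*-}"
  end_year="${2%-*}"; end_month="${2#*-}"
  # Strip a leading zero so "08" and "09" are not parsed as octal.
  month="${month#0}"; end_month="${end_month#0}"
  while [ "$year" -lt "$end_year" ] ||
        { [ "$year" -eq "$end_year" ] && [ "$month" -le "$end_month" ]; }; do
    printf 'ds=%04d-%02d\n' "$year" "$month"
    month=$((month + 1))
    # Roll over into January of the next year.
    if [ "$month" -gt 12 ]; then
      month=1
      year=$((year + 1))
    fi
  done
}
```

Calling `monthly_partitions 2014-01 2014-03` prints `ds=2014-01`, `ds=2014-02` and `ds=2014-03`, which are the three partitions created on the source table.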

Make sure every Oozie server that Falcon talks to has the Hadoop configurations of the other cluster set in oozie-site.xml.

For example, in my case I added the property below to the Oozie configuration on my target cluster:

<property>
    <name>oozie.service.HadoopAccessorService.hadoop.configurations</name>
    <value>*=/etc/hadoop/conf,src-namenode:8020=/etc/src_hadoop/conf,src-resourceManager:8050=/etc/src_hadoop/conf</value>
    <description>Comma separated AUTHORITY=HADOOP_CONF_DIR, where AUTHORITY is the HOST:PORT of the Hadoop service (JobTracker, HDFS). The wildcard '*' configuration is used when there is no exact match for an authority. The HADOOP_CONF_DIR contains the relevant Hadoop *-site.xml files. If the path is relative is looked within the Oozie configuration directory; though the path can be absolute (i.e. to point to Hadoop client conf/ directories in the local filesystem.</description>
</property>

Here /etc/src_hadoop/conf holds the configuration files (/etc/hadoop/conf) copied from the source cluster to the target cluster's Oozie server.
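One way to do that copy is with `scp`, run on the target cluster's Oozie server. This is only a sketch: `copy_source_conf` is a hypothetical helper name, `src-gateway` in the usage note is a placeholder for any source cluster host that has /etc/hadoop/conf, and SSH access from the Oozie server to that host is assumed.

```shell
# Hypothetical helper: copy the source cluster's Hadoop client
# configuration onto the local (target Oozie) host.
# Usage: copy_source_conf <source-host> [destination-dir]
copy_source_conf() {
  src_host="$1"
  dest="${2:-/etc/src_hadoop/conf}"
  # Create the parent directory; scp creates the final directory itself
  # when it does not exist yet.
  mkdir -p "$(dirname "$dest")" || return 1
  scp -r "$src_host:/etc/hadoop/conf" "$dest"
}
```

A call such as `copy_source_conf src-gateway` fills /etc/src_hadoop/conf. If the destination directory already exists, `scp -r` places the copy in a `conf` subdirectory inside it, so it is simplest to start without one.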

Also ensure that from your target cluster, oozie can submit jobs in source cluster.

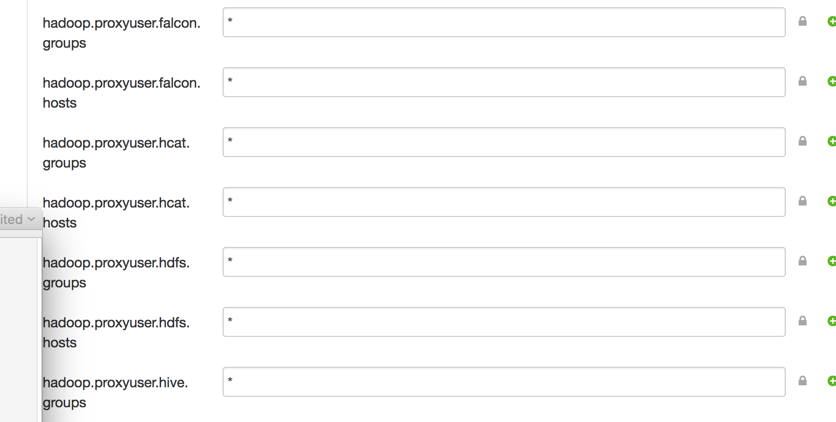

This can be done by setting below property:

Finally, schedule the feed as shown below, which submits an Oozie coordinator on the target cluster.

falcon entity -type feed -schedule -name replication-feed
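Once the feed is scheduled, its state and its replication instances can be checked from the Falcon CLI. The helpers below are a sketch with hypothetical names (`feed_is_running`, `replication_instances`); they assume that the entity status output contains the word RUNNING for a scheduled feed, which may vary by Falcon version.

```shell
# Hypothetical helper: succeed when Falcon reports the feed as running.
# Assumption: the status output contains "RUNNING" for a scheduled feed.
feed_is_running() {
  falcon entity -type feed -name "$1" -status | grep -q 'RUNNING'
}

# Hypothetical helper: show instance status for a time window.
# Usage: replication_instances <feed> <start> <end>
# Times use the same format as the feed validity, e.g. 2014-01-01T00:00Z.
replication_instances() {
  falcon instance -type feed -name "$1" -status -start "$2" -end "$3"
}
```

For this article's feed, `feed_is_running replication-feed && replication_instances replication-feed 2014-01-01T00:00Z 2014-04-01T00:00Z` would list the instances covering the three partitions created earlier.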

Created on 06-15-2016 03:13 AM


Nice one @Rahul Pathak 🙂

Created on 05-03-2017 04:21 PM


Very good article Rahul. Quick question: Does the table have to be partitioned? I'm trying to replicate a non-partitioned table with UI and I'm getting an exception.

default/FalconWebException:FalconException:java.net.URISyntaxException:Partition Details are missing.

How can I replicate this table using the UI?