Cloudera Community: Community Articles

Garbage Collection Pauses in Namenode and Datanode

Created on 09-05-2023 03:33 PM - edited on 04-20-2026 11:49 PM by GrazittiAPI

High-Level Problem Explanation

The primary garbage collection (GC) challenge typically arises when the heap configuration of the Namenode or Datanode is inadequate. GC pauses can trigger Namenode crashes, failovers, and performance bottlenecks. In larger clusters, the Namenode may also stop responding while transitioning to another Namenode.

Detailed Technical Explanation

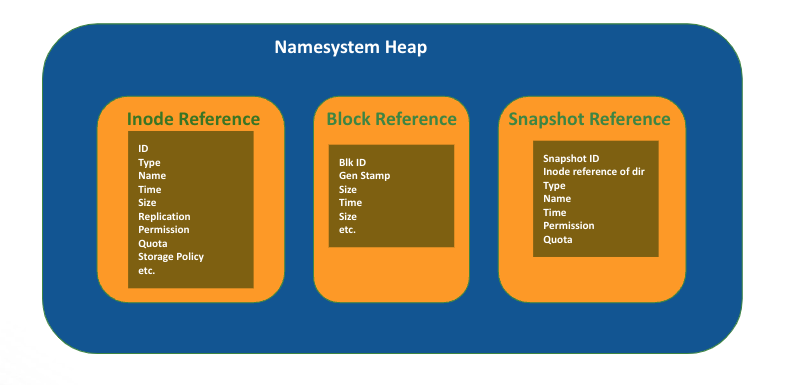

The Namenode comprises three layers of management:

- Inode Management

- Block Management

- Snapshot Management

All of these layers are held in the Namenode's in-memory heap, so any substantial change to them can increase heap usage.

Similarly, the Datanode also maintains block mapping information in its memory.

Example Of Inode Reference

<inode>

<id>26890</id>

<type>DIRECTORY</type>

<name>training</name>

<mtime>1660512737451</mtime>

<permission>hdfs:supergroup:0755</permission>

<nsquota>-1</nsquota><dsquota>-1</dsquota>

</inode>

<inode>

<id>26893</id>

<type>FILE</type>

<name>file1</name>

<replication>3</replication>

<mtime>1660512801514</mtime>

<atime>1660512797820</atime>

<preferredBlockSize>134217728</preferredBlockSize>

<permission>hdfs:supergroup:0644</permission>

<storagePolicyId>0</storagePolicyId>

</inode>

Example Of Block Reference

<blocks>

<block><id>1073751671</id><genstamp>10862</genstamp><numBytes>134217728</numBytes></block>

<block><id>1073751672</id><genstamp>10863</genstamp><numBytes>134217728</numBytes></block>

<block><id>1073751673</id><genstamp>10864</genstamp><numBytes>134217728</numBytes></block>

<block><id>1073751674</id><genstamp>10865</genstamp><numBytes>134217728</numBytes></block>

<block><id>1073751675</id><genstamp>10866</genstamp><numBytes>134217728</numBytes></block>

<block><id>1073751676</id><genstamp>10867</genstamp><numBytes>134217728</numBytes></block>

<block><id>1073751677</id><genstamp>10868</genstamp><numBytes>116452794</numBytes></block>

</blocks>

Example Of Snapshot Reference

<SnapshotSection>

<snapshotCounter>1</snapshotCounter>

<numSnapshots>1</numSnapshots>

<snapshottableDir>

<dir>26890</dir>

</snapshottableDir>

<snapshot>

<id>0</id>

<root>

<id>26890</id>

<type>DIRECTORY</type>

<name>snap1</name>

<mtime>1660513260644</mtime>

<permission>hdfs:supergroup:0755</permission>

<nsquota>-1</nsquota>

<dsquota>-1</dsquota>

</root>

</snapshot>

</SnapshotSection>
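Because inodes and blocks are what occupy the heap, it can help to count them from an XML dump like the fragments above. The following is a minimal sketch, not a definitive tool: the `<sample>` root element and the nesting of `<blocks>` inside a FILE inode are assumptions made for illustration, and the `summarize` helper is hypothetical.

```python
import xml.etree.ElementTree as ET
from collections import Counter

# Hypothetical sample: fragments like those above, wrapped in a made-up
# <sample> root so the text parses as a single XML document.
SAMPLE = """<sample>
  <inode><id>26890</id><type>DIRECTORY</type><name>training</name></inode>
  <inode><id>26893</id><type>FILE</type><name>file1</name>
    <blocks>
      <block><id>1073751671</id><genstamp>10862</genstamp><numBytes>134217728</numBytes></block>
      <block><id>1073751677</id><genstamp>10868</genstamp><numBytes>116452794</numBytes></block>
    </blocks>
  </inode>
</sample>"""


def summarize(xml_text):
    """Count inodes by type and total the blocks found anywhere in the XML."""
    root = ET.fromstring(xml_text)
    # iter() walks the whole tree, so the exact nesting depth does not matter.
    inode_types = Counter(
        inode.findtext("type", default="UNKNOWN") for inode in root.iter("inode")
    )
    blocks = list(root.iter("block"))
    total_bytes = sum(int(b.findtext("numBytes", default="0")) for b in blocks)
    return {
        "inodes_by_type": dict(inode_types),
        "block_count": len(blocks),
        "block_bytes": total_bytes,
    }


summary = summarize(SAMPLE)
print(summary)
```

For a real, multi-gigabyte dump, loading the whole document with `ET.fromstring` would itself be memory-hungry; a streaming parser such as `ET.iterparse` would likely be a better fit.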

High heap usage can be caused by several factors, including:

- Inadequate heap size for the current dataset.

- Lack of proper heap tuning configurations.

- Not setting "-XX:CMSInitiatingOccupancyFraction", which leaves CMS at its default threshold of about 92%, so concurrent collection starts only when the old generation is nearly full.

- Gradual growth in the number of inodes/files over time.

- Sudden spikes in HDFS data volumes.

- Sudden changes in HDFS snapshot activity.

- Job executions generating millions of small files.

- System slowness, including CPU or IO bottlenecks.

Symptoms

- Namenode failure and Namenode transitions

- Namenode logs showing JVM pause warnings. Any pause exceeding 10 seconds is a concern.

WARN [JvmPauseMonitor] util.JvmPauseMonitor: Detected pause in JVM or host machine (eg GC): pause of approximately 143694ms

GC pool 'ParNew' had collection(s): count=1 time=0ms

GC pool 'ConcurrentMarkSweep' had collection(s): count=2 time=143973ms

- Occasionally, pauses are caused not by GC but by system overhead, such as CPU spikes, IO contention, slow disk performance, or kernel delays. In that case, the Namenode log contains the phrase "No GCs detected".

WARN [JvmPauseMonitor] util.JvmPauseMonitor: Detected pause in JVM or host machine (eg GC): pause of approximately 83241ms

No GCs detected

- Do not misinterpret the FSNamesystem lock message. The root cause is actually the GC pause that occurred just before this event.

INFO org.apache.hadoop.hdfs.server.namenode.FSNamesystem:

Number of suppressed read-lock reports: 0

Longest read-lock held at xxxx for 143973ms via java.lang.Thread.getStackTrace(Thread.java:1559)

org.apache.hadoop.util.StringUtils.getStackTrace(StringUtils.java:1058)

org.apache.hadoop.hdfs.server.namenode.FSNamesystemLock.readUnlock(FSNamesystemLock.java:187)

org.apache.hadoop.hdfs.server.namenode.FSNamesystem.readUnlock(FSNamesystem.java:1684)

org.apache.hadoop.hdfs.server.namenode.ContentSummaryComputationContext.yield(ContentSummaryComputationContext.java:134)

..
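The distinction between GC-induced pauses and host-induced pauses can be checked mechanically. The sketch below is illustrative only: it assumes log lines shaped like the excerpts above, and the `parse_pauses` and `classify` helpers are hypothetical names. The 10-second threshold comes from the symptom list.

```python
import re

PAUSE_RE = re.compile(
    r"Detected pause in JVM or host machine \(eg GC\): pause of approximately (\d+)ms"
)
GC_POOL_RE = re.compile(r"GC pool '([^']+)' had collection\(s\): count=(\d+) time=(\d+)ms")

# Sample lines copied from the excerpts above.
LOG = """WARN [JvmPauseMonitor] util.JvmPauseMonitor: Detected pause in JVM or host machine (eg GC): pause of approximately 143694ms
GC pool 'ParNew' had collection(s): count=1 time=0ms
GC pool 'ConcurrentMarkSweep' had collection(s): count=2 time=143973ms
WARN [JvmPauseMonitor] util.JvmPauseMonitor: Detected pause in JVM or host machine (eg GC): pause of approximately 83241ms
No GCs detected"""


def parse_pauses(lines):
    """Return one dict per pause warning, with any GC pool times that follow it."""
    events = []
    current = None
    for line in lines:
        pause = PAUSE_RE.search(line)
        if pause:
            current = {"pause_ms": int(pause.group(1)), "gc_pools": {}, "no_gc": False}
            events.append(current)
            continue
        if current is None:
            continue  # ignore lines that appear before the first warning
        pool = GC_POOL_RE.search(line)
        if pool:
            current["gc_pools"][pool.group(1)] = int(pool.group(3))
        elif "No GCs detected" in line:
            current["no_gc"] = True
    return events


def classify(event, concern_ms=10_000):
    """Label a pause as harmless, GC-induced, or host-induced."""
    if event["pause_ms"] <= concern_ms:
        return "ok"
    if event["no_gc"]:
        return "non-GC pause (check CPU, IO, disk, kernel)"
    return "GC pause"


events = parse_pauses(LOG.splitlines())
for event in events:
    print(event["pause_ms"], classify(event))
```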

Relevant Data to Check

- To gain insight into usage patterns, collect heap histograms regularly. If you have already enabled -XX:+PrintClassHistogramAfterFullGC and -XX:+PrintClassHistogramBeforeFullGC in the Namenode JVM options, there is no need for additional histogram collection.

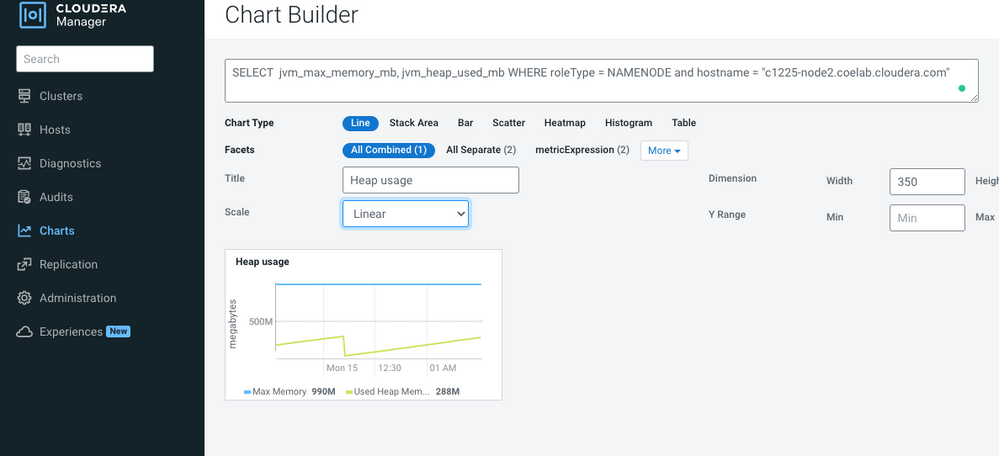

- Metrics:

Cloudera Manager -> Charts -> Chart Builder ->

SELECT jvm_gc_rate WHERE roleType = NAMENODE and hostname = "<problematic Namenode hostname>"

SELECT jvm_max_memory_mb, jvm_heap_used_mb WHERE roleType = NAMENODE and hostname = "<problematic Namenode hostname>"

- Minor pauses are generally tolerable, but when a pause exceeds the configured "ha.health-monitor.rpc-timeout.ms" timeout, the risk of a Namenode crash increases, particularly if the Namenode writes edits right after the GC pause. Heap usage reaching 85% of the total heap can also lead to issues. Even short pauses of 2-5 seconds can pose problems if they occur frequently.
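The two thresholds described above (pause length versus the health-monitor RPC timeout, and heap usage versus 85% of the total heap) can be turned into a simple check. This is a minimal sketch: the function name and finding labels are hypothetical, and the 45000 ms timeout used in the example is an assumed value, so read the actual setting from your cluster configuration.

```python
def assess_namenode_gc_risk(pause_ms, heap_used_mb, heap_max_mb,
                            rpc_timeout_ms, heap_warn_ratio=0.85):
    """Return a list of risk labels for one observed pause and heap sample."""
    findings = []
    # A pause longer than the health-monitor RPC timeout raises the risk of
    # a Namenode crash or failover.
    if pause_ms > rpc_timeout_ms:
        findings.append("pause_exceeds_rpc_timeout")
    # Heap usage at or above the warning ratio (85% by default) is a concern.
    if heap_max_mb > 0 and heap_used_mb / heap_max_mb >= heap_warn_ratio:
        findings.append("heap_usage_high")
    return findings


# Example: the 143694 ms pause from the log excerpt, with 45 GB used of a
# 50 GB heap. The 45000 ms timeout is an assumption for illustration.
risks = assess_namenode_gc_risk(
    pause_ms=143694,
    heap_used_mb=45 * 1024,
    heap_max_mb=50 * 1024,
    rpc_timeout_ms=45000,
)
print(risks)
```

The values for `heap_used_mb` and `heap_max_mb` map directly to the `jvm_heap_used_mb` and `jvm_max_memory_mb` metrics from the chart queries.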

Resolution

- To adjust the heap size effectively, consider the current system load and anticipated future workloads. Given the diverse range of customer use cases, our standard guidelines may not always align perfectly with each scenario. Factors like differing file lengths, snapshot utilization, encryption zones, and more can influence requirements.

- A more reliable approach is to analyze the current number of files, directories, and blocks together with the existing heap utilization. For instance, if you have 30 million files and directories, 40 million blocks, and 200 snapshots, and your current heap usage is 45GB out of a total heap size of 50GB, you can estimate the additional heap required for a 50% load increase as current_heap_usage * additional_load_percentage / 100. In this case, 45GB * 50 / 100 = 22.5GB, so you would need roughly 23GB of additional heap to accommodate the increased load.

- You can find the current heap usage in the Namenode web UI or in Cloudera Manager (CM) charts with the query: SELECT jvm_max_memory_mb, jvm_heap_used_mb WHERE roleType = NAMENODE and hostname = "<problematic Namenode hostname>"

- To ensure smooth operation, it's wise to allocate an additional 10-15% of heap beyond the total configured heap. If Sentry is configured, consider adding a 10-20% buffer.

- It's crucial to maintain the total count of files and directories in the cluster below 350 million. Namenode performance tends to decline after surpassing this threshold. We generally recommend not exceeding 300 million files per cluster.

- Cross-reference this guidance with the official CDP heap sizing documentation.

- If G1 garbage collection is configured for the Namenode and there is no notable improvement in Namenode GC performance, consider using CMS. Note: in our internal testing, we observed no significant Namenode performance improvement with G1, but we encourage you to run your own tests.

- For Datanode performance, ensure that the Java heap is set to 1GB per 1 million blocks. Additionally, verify that all Datanodes are balanced, with a roughly equal distribution of blocks. Avoid scenarios where one node holds a disproportionately high number of blocks compared to others.
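The sizing arithmetic in this section can be sketched as follows. The helper names are hypothetical. Combining the additional heap with a 10% buffer into a single suggested total is one possible reading of the guidance rather than an official formula, and the 1 GB minimum for the Datanode is likewise an assumption.

```python
import math


def additional_namenode_heap_gb(heap_used_gb, load_increase_pct):
    """Extra heap for a load increase: current usage * percentage / 100."""
    return heap_used_gb * load_increase_pct / 100


def suggested_namenode_heap_gb(heap_total_gb, heap_used_gb, load_increase_pct,
                               buffer_pct=10):
    """Assumed interpretation: (current total + additional heap) plus a buffer."""
    extra = additional_namenode_heap_gb(heap_used_gb, load_increase_pct)
    return math.ceil((heap_total_gb + extra) * (1 + buffer_pct / 100))


def datanode_heap_gb(block_count):
    """1 GB of heap per 1 million blocks, rounded up (1 GB minimum assumed)."""
    return max(1, math.ceil(block_count / 1_000_000))


# Worked example from the text: 45 GB used of a 50 GB heap, 50% more load.
extra = additional_namenode_heap_gb(45, 50)
print(extra, math.ceil(extra))                 # additional heap, exact and rounded up
print(suggested_namenode_heap_gb(50, 45, 50))  # total including a 10% buffer
print(datanode_heap_gb(2_500_000))             # heap for a Datanode with 2.5M blocks
```

If Sentry is configured, passing a `buffer_pct` between 10 and 20 would reflect the larger buffer mentioned above.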