Community Articles

- Cloudera Community

- Support

- Community Articles

- Hive ACID Merge by Example

- Subscribe to RSS Feed

- Mark as New

- Mark as Read

- Bookmark

- Subscribe

- Printer Friendly Page

- Report Inappropriate Content

- Subscribe to RSS Feed

- Mark as New

- Mark as Read

- Bookmark

- Subscribe

- Printer Friendly Page

- Report Inappropriate Content

Created on 04-21-2017 12:18 PM - edited 08-17-2019 01:17 PM

With the release of HDP 2.6 comes the Hive ACID Merge feature. This is long sought after feature for people who want to batch update table content in ACID manner. This tutorial will walk you through step-by-step how to use this new feature with some really simple dataset. The example is done on HDP 2.6.0.3 installation.

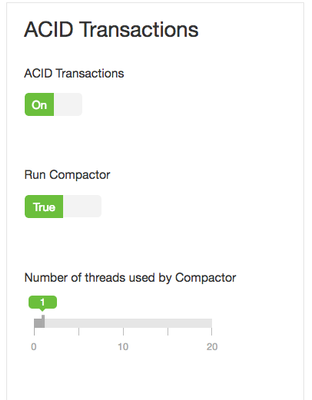

First, ACID in Hive need to be turned on in Ambari UI

Then we will create 2 tables, one as the target of merge and one as the source of merge. Please note that the target table must be bucketed, set as transaction enabled and stored in orc format.

CREATE DATABASE merge_data;

CREATE TABLE merge_data.transactions(

ID int,

TranValue string,

last_update_user string)

PARTITIONED BY (tran_date string)

CLUSTERED BY (ID) into 5 buckets

STORED AS ORC TBLPROPERTIES ('transactional'='true');

CREATE TABLE merge_data.merge_source(

ID int,

TranValue string,

tran_date string)

STORED AS ORC;

Then we will populate the target and source table with some data.

INSERT INTO merge_data.transactions PARTITION (tran_date) VALUES (1, 'value_01', 'creation', '20170410'), (2, 'value_02', 'creation', '20170410'), (3, 'value_03', 'creation', '20170410'), (4, 'value_04', 'creation', '20170410'), (5, 'value_05', 'creation', '20170413'), (6, 'value_06', 'creation', '20170413'), (7, 'value_07', 'creation', '20170413'), (8, 'value_08', 'creation', '20170413'), (9, 'value_09', 'creation', '20170413'), (10, 'value_10','creation', '20170413'); INSERT INTO merge_data.merge_source VALUES (1, 'value_01', '20170410'), (4, NULL, '20170410'), (7, 'value_77777', '20170413'), (8, NULL, '20170413'), (8, 'value_08', '20170415'), (11, 'value_11', '20170415');

When we examine the 2 tables, we would expect after the merge, row 1 should be left untouched, row 4 should be deleted (we imply a business rule here: NULL value indicates deletion), row 7 will be update and row 11 will be insertion for new value.

The more interesting use case is with row 8, where it involves move the row from one partition to another. Merge currently does not support change of partition value on the fly. This need to happen as delete in the old partition and insert in the new partition. In real world use case, you need to construct the source table base on this critiria.

Then we will create the merge statement as the following. Please note that not all 3 WHEN of the merge statements need to exist, it is fine to have only 2 or even 1 of the WHEN statement. We labeled the data with different last_update_user. For more details on Hive Merge, please refer to Hive document

MERGE INTO merge_data.transactions AS T USING merge_data.merge_source AS S ON T.ID = S.ID and T.tran_date = S.tran_date WHEN MATCHED AND (T.TranValue != S.TranValue AND S.TranValue IS NOT NULL) THEN UPDATE SET TranValue = S.TranValue, last_update_user = 'merge_update' WHEN MATCHED AND S.TranValue IS NULL THEN DELETE WHEN NOT MATCHED THEN INSERT VALUES (S.ID, S.TranValue, 'merge_insert', S.tran_date);

As part of the update clause, the set value statement should not contain the target table decorator "T.", otherwise you will get SQL compile error.

Once the merge finished, re-examining the data shows the data is merged just as expected

row 1 wasn't changed ; row 4 was deleted; row 7 was updated and row 11 was inserted. And row 8, as we see, got moved to a new partition.

SELECT * FROM merge_data.transactions order by ID; +----+-----------------------+------------------------------+-----------------------+ | id | transactions.tranvalue| transactions.last_update_user| transactions.tran_date| +----+-----------------------+------------------------------+-----------------------+ | 1 | value_01 | creation | 20170410 | | 2 | value_02 | creation | 20170410 | | 3 | value_03 | creation | 20170410 | | 5 | value_05 | creation | 20170413 | | 6 | value_06 | creation | 20170413 | | 7 | value_77777 | merge_update | 20170413 | | 8 | value_08 | merge_insert | 20170415 | | 9 | value_09 | creation | 20170413 | | 10 | value_10 | creation | 20170413 | | 11 | value_11 | merge_insert | 20170415 | +----+-----------------------+------------------------------+-----------------------+

This simple example provides instructions on how to use ACID merge with HDP 2.6 or later. Of course the real world use case would be much more complicated comparing to this oversimplified example, but they all follow the same principles. And the beauty behind this is, you don't need to create a ETL process to accomplish this any more.

Created on 01-09-2018 06:45 AM

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Report Inappropriate Content

Hi Qi Wang, Whether this MERGE feature can handle for very high volume ( X TeraBytes ) of data?

Created on 08-20-2018 12:57 PM

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Report Inappropriate Content

Please let me know why below merge command is throwing the error message.

-------- merge command ----

MERGE INTO merge_data.transactions AS T USING merge_data.merge_source AS S ON T.ID = S.ID WHEN MATCHED THEN UPDATE SET TranValue = S.TranValue, last_update_user = 'merge_update' WHEN NOT MATCHED THEN INSERT VALUES (S.ID, S.TranValue, 'merge_insert', S.tran_date);

------ Error ----

Error: Failure while running task:java.lang.RuntimeException: java.lang.RuntimeException: Hive Runtime Error while closing operators: org.apache.hadoop.hive.ql.metadata.HiveException: org.apache.hadoop.hive.ql.metadata.HiveException: Error evaluating cardinality_violation(_col0,_col1)

Caused by: java.lang.RuntimeException: Hive Runtime Error while closing operators: org.apache.hadoop.hive.ql.metadata.HiveException: org.apache.hadoop.hive.ql.metadata.HiveException: Error evaluating cardinality_violation(_col0,_col1)

Caused by: java.lang.RuntimeException: Cardinality Violation in Merge statement: [61, 3, 0],20170415

Created on 08-22-2018 05:55 AM

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Report Inappropriate Content

very nice article. thank you for sharing.

Created on 08-22-2018 05:57 AM

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Report Inappropriate Content

great article

Created on 10-16-2018 11:50 PM

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Report Inappropriate Content

Is it possible to batch insert into a table that contains a field of type array<string>. What is the syntax for that?

Created on 07-17-2022 04:09 AM

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Report Inappropriate Content

Hi,

Can i use CTE in Hive script? Thanks.