Community Articles

- Cloudera Community

- Support

- Community Articles

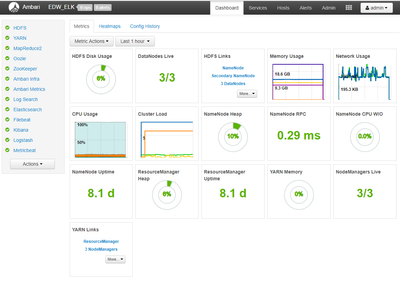

How To Install ELK Stack (6.3.2) in Ambari

Created on 08-30-2018 03:07 PM - edited 09-16-2022 01:44 AM

Hortonworks HCP uses an Elasticsearch Mpack for version 5.x of Elasticsearch and Kibana.

I have taken this and expanded it to include version 6.3.2 of ElasticSearch, Logstash, Kibana, FileBeat, and MetricBeat.

My cluster is 6 nodes.

Elasticsearch is installed on nodes 4, 5, and 6 (node 4 is the master; nodes 5 and 6 are data nodes).

Logstash is Installed on Node 3.

FileBeat & MetricBeat are installed on all 6 nodes.

Kibana is installed on Node 4.

The rest of the cluster is configured normally for a minimal Install.

Downloads

Original HCP Mpack

elasticsearch-mpack-1600-7.tar.gz

My MPack 6.3.0 - Version Change + Logstash + Beats

elasticsearch-mpack-2500-9.tar.gz

My Mpack 6.3.2 - Version Change + Logstash (multi-node & jvm settings config) + sudo non root capabilities

elasticsearch-mpack-2600-9.tar.gz

Installation Steps

1. Deliver Management Pack tar.gz to local filesystem on Ambari-Server

Upload the tar.gz to HDFS through the Files View.

Then download it to the Ambari Server node (/home/root).

2. Install Management Pack

sudo ambari-server install-mpack --mpack=/home/root/elasticsearch_mpack-2.6.0.0-9.tar.gz --verbose

To uninstall: sudo ambari-server uninstall-mpack --mpack-name=elasticsearch-ambari.mpack

3. Restart Ambari Server

sudo ambari-server restart

4. Use Ambari Add Service to Install ELK Stack Components

The Install Wizard requires the following settings:

ES_URL:

example: http://node4.hostname.com:9200

KIBANA_URL:

example: http://node4.hostname.com:5000

LOGSTASH_URL:

example: http://node3.hostname.com:5044

ElasticSearch Zen Discovery Hosts:

example: [ node4.hostname.com, node5.hostname.com, node6.hostname.com ]
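Before typing these values into the wizard, it can help to sanity-check them. The following is only a minimal sketch: the function names `check_service_url` and `format_zen_hosts` are hypothetical helpers, not part of Ambari or the Mpack, and the host names are the example values from above.

```python
from urllib.parse import urlparse


def check_service_url(url):
    """Return (host, port) for an http(s) URL, or raise ValueError."""
    parsed = urlparse(url)
    if parsed.scheme not in ("http", "https"):
        raise ValueError("expected an http(s) URL, got: %r" % url)
    if not parsed.hostname or parsed.port is None:
        raise ValueError("URL needs a host and an explicit port: %r" % url)
    return parsed.hostname, parsed.port


def format_zen_hosts(hosts):
    """Format host names in the bracketed list style used in the example."""
    cleaned = [h.strip() for h in hosts if h.strip()]
    if not cleaned:
        raise ValueError("at least one discovery host is required")
    return "[ " + ", ".join(cleaned) + " ]"


if __name__ == "__main__":
    # Example values copied from the settings listed above.
    settings = {
        "ES_URL": "http://node4.hostname.com:9200",
        "KIBANA_URL": "http://node4.hostname.com:5000",
        "LOGSTASH_URL": "http://node3.hostname.com:5044",
    }
    for name, url in settings.items():
        print(name, check_service_url(url))
    print(format_zen_hosts(
        ["node4.hostname.com", "node5.hostname.com", "node6.hostname.com"]))
```

The check only validates the shape of each value; it does not confirm that anything is listening on those ports.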

Configuration

After installation, Ambari manages the configuration of all components, including the Logstash pipeline (input, filters, and output) and the FileBeat and MetricBeat configuration files.

- Elasticsearch configuration should work out of the box without any changes other than Zen Discovery Hosts.

- Logstash is set up with a beats input, a filter for FileBeat data, and an Elasticsearch output.

- FileBeat is set up to send its output to Logstash.

- MetricBeat is set up to send metrics directly to Elasticsearch.
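Once the services are started, one way to confirm that the Elasticsearch nodes have found each other is to query the cluster health endpoint (`/_cluster/health`) at the ES_URL. The sketch below is an assumption about how you might do that from Python; `fetch_health` and `summarize_health` are hypothetical helper names, and the sample response values are illustrative only.

```python
import json
from urllib.request import urlopen


def summarize_health(health):
    """Turn a parsed _cluster/health response into a one-line summary."""
    status = health.get("status", "unknown")
    nodes = health.get("number_of_nodes", 0)
    data_nodes = health.get("number_of_data_nodes", 0)
    return "status=%s nodes=%s data_nodes=%s" % (status, nodes, data_nodes)


def fetch_health(es_url, timeout=10):
    """GET <es_url>/_cluster/health and return the decoded JSON body."""
    url = es_url.rstrip("/") + "/_cluster/health"
    with urlopen(url, timeout=timeout) as resp:
        return json.loads(resp.read().decode("utf-8"))


if __name__ == "__main__":
    # Illustrative sample response, not output captured from a real cluster.
    sample = {"status": "green", "number_of_nodes": 3, "number_of_data_nodes": 2}
    print(summarize_health(sample))
    # Against a live cluster (host name taken from the ES_URL example):
    # print(summarize_health(fetch_health("http://node4.hostname.com:9200")))
```

A node count lower than expected usually points back at the Zen Discovery Hosts setting.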

Summary

This Elasticsearch Mpack is a good example of how to create your own custom stack, using a Management Pack to define services not normally found in an Ambari cluster. Ambari administrators who want to understand how to create their own Management Pack should take some time to diff the Mpacks attached to this article. Creating custom services controlled via Ambari is fairly easy if you mimic the folder structure, make the necessary XML file changes, and adjust the Python package scripts accordingly.
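If you extract two of the Mpack archives into separate folders, the comparison suggested above can be sketched with Python's standard `filecmp` module. This is only one way to do it; `diff_trees` is a hypothetical helper name, and the directory paths you pass in depend on where you unpacked the archives.

```python
import filecmp
import os


def diff_trees(left, right):
    """Return a sorted list of (kind, relative_path) differences.

    kind is "removed" (only in left), "added" (only in right) or "changed".
    Note: dircmp uses a shallow comparison (type, size, mtime) first and
    only reads file contents when those signatures differ.
    """
    changes = []

    def walk(cmp, prefix):
        for name in cmp.left_only:
            changes.append(("removed", os.path.join(prefix, name)))
        for name in cmp.right_only:
            changes.append(("added", os.path.join(prefix, name)))
        for name in cmp.diff_files:
            changes.append(("changed", os.path.join(prefix, name)))
        # Recurse into directories that exist on both sides.
        for name, sub in cmp.subdirs.items():
            walk(sub, os.path.join(prefix, name))

    walk(filecmp.dircmp(left, right), "")
    return sorted(changes)
```

Calling `diff_trees(old_dir, new_dir)` on the extracted original HCP Mpack and one of the later versions gives a quick list of which XML files, templates, and scripts were added or modified.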

Created on 09-01-2018 09:06 PM

@Steve Matison

Thank you for posting the ES Mpack. Would you be able to share how to build our own custom Mpack? Maybe in a follow-on blog.

Cheers

Amit

Created on 09-02-2018 04:03 PM

@Amit Nandi Sure, I will work on an article on how to make an Mpack.

Created on 02-23-2019 03:06 PM

Today I am working on an ELK Mpack for HDP 3.0+ and HDF 3.0+. To pick this back up, I have started on another documentation journey to record my progress. Starting with this post and https://cwiki.apache.org/confluence/display/AMBARI/Quick+Start+Guide I was able to get a base cluster up with the ELK stack above. I will be creating another article on getting this to work on the new HDP stacks, as well as the changes needed for the most recent version of the ELK stack.

Created on 12-31-2019 03:55 AM

When I try to install ELK on my cluster (HDP 3.0, HDF 3.2, and Ambari 2.7.1) according to these steps, I get this error:

Traceback (most recent call last):

File "/usr/sbin/ambari-server.py", line 1060, in <module>

mainBody()

File "/usr/sbin/ambari-server.py", line 1030, in mainBody

main(options, args, parser)

File "/usr/sbin/ambari-server.py", line 980, in main

action_obj.execute()

File "/usr/sbin/ambari-server.py", line 79, in execute

self.fn(*self.args, **self.kwargs)

File "/usr/lib/ambari-server/lib/ambari_server/setupMpacks.py", line 900, in install_mpack

(mpack_metadata, mpack_name, mpack_version, mpack_staging_dir, mpack_archive_path) = _install_mpack(options, replay_mode)

File "/usr/lib/ambari-server/lib/ambari_server/setupMpacks.py", line 700, in _install_mpack

tmp_root_dir = expand_mpack(tmp_archive_path)

File "/usr/lib/ambari-server/lib/ambari_server/setupMpacks.py", line 151, in expand_mpack

archive_root_dir = get_archive_root_dir(archive_path)

File "/usr/lib/ambari-server/lib/resource_management/libraries/functions/tar_archive.py", line 82, in get_archive_root_dir

with closing(tarfile.open(archive, mode(archive))) as tar:

File "/usr/lib64/python2.7/tarfile.py", line 1678, in open

return func(name, filemode, fileobj, **kwargs)

File "/usr/lib64/python2.7/tarfile.py", line 1729, in gzopen

raise ReadError("not a gzip file")

tarfile.ReadError: not a gzip file

Does anyone know the cause of this error?

Created on 12-31-2019 04:25 AM

@mervezeybel This error occurs when the install-mpack command is sent to Ambari without a valid mpack file. I am not sure what full command you gave, but I have seen this happen before when the URL I used was wrong. A couple of things you can do:

Try to wget the url to a local file, then adjust command to use the local file.

If the mpack url you are using is still not working, try to get it again from my GitHub directly:

https://github.com/steven-dfheinz/dfhz_elk_mpack

There is a newer version there as well. Make sure you use the correct /raw/ link if you are using the GitHub links (see the sample below). If you take the /blob/ link straight from the page, you will get the same error you have now (not a gzip file).

ambari-server install-mpack --mpack=https://github.com/steven-dfheinz/dfhz_elk_mpack/raw/master/elasticsearch_mpack-3.4.0.0-0.tar.gz --verbose
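If you download the file locally first, a quick check along these lines can catch the problem before running install-mpack. This is a minimal sketch, not part of Ambari: `looks_like_gzip`, `check_mpack_archive`, and `github_raw_url` are hypothetical helper names, and the /blob/ to /raw/ rewrite assumes the usual GitHub URL layout shown in the sample command.

```python
import tarfile

GZIP_MAGIC = b"\x1f\x8b"  # first two bytes of every gzip stream


def looks_like_gzip(path):
    """Return True if the file starts with the gzip magic bytes."""
    with open(path, "rb") as fh:
        return fh.read(2) == GZIP_MAGIC


def check_mpack_archive(path):
    """Return "ok" or a short description of what is wrong with the file."""
    if not looks_like_gzip(path):
        return "not a gzip file (a saved /blob/ HTML page is a common cause)"
    if not tarfile.is_tarfile(path):
        return "gzip data, but not a readable tar archive"
    return "ok"


def github_raw_url(url):
    """Rewrite a GitHub /blob/ page URL to the matching /raw/ download URL."""
    return url.replace("/blob/", "/raw/", 1)


if __name__ == "__main__":
    blob = ("https://github.com/steven-dfheinz/dfhz_elk_mpack/blob/master/"
            "elasticsearch_mpack-3.4.0.0-0.tar.gz")
    print(github_raw_url(blob))
```

Running `check_mpack_archive` on the downloaded file should return "ok" before you pass that path to `--mpack=`.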

Created on 12-31-2019 06:11 AM

Thank you @stevenmatison. I was able to install it properly from https://github.com/steven-dfheinz/dfhz_elk_mpack.

Created on 09-17-2021 03:19 AM

I tried to install elasticsearch-6.4.2 on my cluster (HDP 3.1, Ambari 2.7.3). The installation completed successfully, but the service could not start, and I encountered this error:

Traceback (most recent call last):

File "/var/lib/ambari-agent/cache/stacks/HDP/3.1/services/ELASTICSEARCH/package/scripts/es_master.py", line 168, in <module>

ESMaster().execute()

File "/usr/lib/ambari-agent/lib/resource_management/libraries/script/script.py", line 352, in execute

method(env)

File "/usr/lib/ambari-agent/lib/resource_management/libraries/script/script.py", line 1011, in restart

self.start(env)

File "/var/lib/ambari-agent/cache/stacks/HDP/3.1/services/ELASTICSEARCH/package/scripts/es_master.py", line 153, in start

self.configure(env)

File "/var/lib/ambari-agent/cache/stacks/HDP/3.1/services/ELASTICSEARCH/package/scripts/es_master.py", line 86, in configure

group=params.es_group

File "/usr/lib/ambari-agent/lib/resource_management/core/base.py", line 166, in __init__

self.env.run()

File "/usr/lib/ambari-agent/lib/resource_management/core/environment.py", line 160, in run

self.run_action(resource, action)

File "/usr/lib/ambari-agent/lib/resource_management/core/environment.py", line 124, in run_action

provider_action()

File "/usr/lib/ambari-agent/lib/resource_management/core/providers/system.py", line 123, in action_create

content = self._get_content()

File "/usr/lib/ambari-agent/lib/resource_management/core/providers/system.py", line 160, in _get_content

return content()

File "/usr/lib/ambari-agent/lib/resource_management/core/source.py", line 52, in __call__

return self.get_content()

File "/usr/lib/ambari-agent/lib/resource_management/core/source.py", line 144, in get_content

rendered = self.template.render(self.context)

File "/usr/lib/ambari-agent/lib/ambari_jinja2/environment.py", line 891, in render

return self.environment.handle_exception(exc_info, True)

File "/var/lib/ambari-agent/cache/stacks/HDP/3.1/services/ELASTICSEARCH/package/templates/elasticsearch.master.yml.j2", line 93, in top-level template code

action.destructive_requires_name: {{action_destructive_requires_name}}

File "/usr/lib/ambari-agent/lib/resource_management/libraries/script/config_dictionary.py", line 73, in __getattr__

raise Fail("Configuration parameter '" + self.name + "' was not found in configurations dictionary!")

resource_management.core.exceptions.Fail: Configuration parameter 'hostname' was not found in configurations dictionary!

I modified the discovery.zen.ping.unicast.hosts property in elastic-config.xml and the hostname property in elasticsearch-env.xml. However, the service still could not start and the same error occurred. Do you have any idea?