How to Mask Columns in Hive with Atlas and Ranger

Created on 09-07-2017 01:58 PM - edited 08-17-2019 11:17 AM

Short Description:

A quick tutorial on how to mask columns in Hive for regulatory purposes.

Abstract:

This tutorial covers how to apply tags to Atlas entities and then leverage tagging policies in Ranger to mask Personally Identifiable Information (PII). Atlas serves as a common metadata store designed to exchange metadata both within and outside of the Hadoop stack. It features a simple user interface and a REST API for ease of access and integration. The Atlas-Ranger paradigm unites data classification with policy enforcement. Figures serve as visual aids, with the steps listed as bullet points between them.

Note:

This tutorial assumes the user has successfully installed Ranger, enabled Ranger audit to Solr, installed Atlas, installed Hive, configured Atlas to work with Hive, and configured Atlas to work with Ranger. It also assumes the user has dummy data for development purposes. For detailed instructions on how to accomplish these steps, please review our HDP development documentation: HDP Developer Guide: Data Governance.

Tools:

You can create the dummy data we will use in this tutorial via the following commands. Ensure you have the proper user privileges to write files to your local environment and copy files into HDFS.

Statement for creating the employee table

create table employee (ssn string, name string, location string) row format delimited fields terminated by ',' stored as textfile;

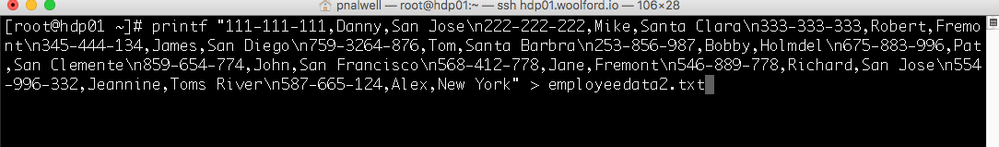

Statement for creating our dummy data

printf "111-111-111,Danny,San Jose\n222-222-222,Mike,Santa Clara\n333-333-333,Robert,Fremont\n345-444-134,James,San Diego\n759-3264-876,Tom,Santa Barbara\n253-856-987,Bobby,Holmdel\n675-883-996,Pat,San Clemente\n859-654-774,John,San Francisco\n568-412-778,Jane,Fremont\n546-889-778,Richard,San Jose\n554-996-332,Jeannine,Toms River\n587-665-124,Alex,New York" > employeedata.txt

Statement for transferring the data to the Hive warehouse

hdfs dfs -copyFromLocal employeedata.txt /apps/hive/warehouse/employee
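Before copying the file into HDFS, it can help to confirm that every record splits into the three fields the employee table expects. The following is a minimal sketch, not part of the original tutorial; the function name check_employee_data is hypothetical. It counts records with awk because the printf statement above does not end with a newline, so `wc -l` would report one line fewer than the number of records.

```shell
# Hypothetical helper: report the record count and the number of records
# that do not have exactly three comma-separated fields (ssn, name, location).
check_employee_data() {
  local data_file="$1"
  local row_count bad_rows

  # awk counts the final record even when the file lacks a trailing newline.
  row_count=$(awk 'END { print NR }' "$data_file")

  # "bad + 0" forces a numeric 0 when no malformed record was seen.
  bad_rows=$(awk -F',' 'NF != 3 { bad++ } END { print bad + 0 }' "$data_file")

  echo "rows=$row_count malformed=$bad_rows"
}
```

Calling `check_employee_data employeedata.txt` on the file generated above should report twelve rows and no malformed records; any other result suggests the printf statement was altered when it was copied.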

How to Mask Columns in Hive with Atlas and Ranger

Step 1: Creating our Hive table and populating data

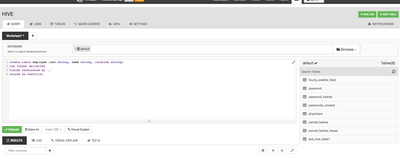

- Log into Ambari and navigate to the Hive UI. Submit the create table statement from the tools above. Figure 1

Figure 1: The Hive UI and create employee table statement

- Generate our dummy data. Figure 2

Figure 2: The CLI command to generate our dummy data

- Transfer our dummy data to the Hive warehouse and our employee table. Figure 3

Figure 3: The HDFS command to transfer our data to the Hive warehouse

- Ensure our data was properly populated into our Hive table via a select * from employee; SQL command. Figure 4

Figure 4: The result of "select * from employee" on our Hive table
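The verification in Step 1 can also be run from a terminal instead of the Hive UI. This is a minimal sketch that assumes HiveServer2 is reachable through Beeline; the JDBC URL, the user name, and the function name run_hive are assumptions, not values from the tutorial.

```shell
# Assumed connection details; adjust for your cluster.
HIVE_JDBC_URL="${HIVE_JDBC_URL:-jdbc:hive2://localhost:10000/default}"
HIVE_USER="${HIVE_USER:-admin}"

# Hypothetical wrapper: run a single HiveQL statement through Beeline.
run_hive() {
  beeline -u "$HIVE_JDBC_URL" -n "$HIVE_USER" -e "$1"
}

# Example calls (not executed here):
#   run_hive "select * from employee;"
#   run_hive "select count(*) from employee;"
```

If the count differs from the number of records in employeedata.txt, check that the file landed in the table's warehouse directory.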

Step 2: Creating a tag in the Atlas UI

- Now that we have a table with PII, we can create a tag in Atlas and leverage the event-related metadata that was generated when we created our Hive table.

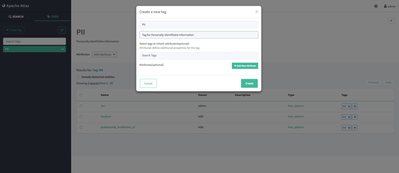

- Log into Atlas UI and navigate to tags. Click the create tag button. Let's create a tag called PII and give it a description. Figure 1

Figure 1: The Atlas UI and the Creation of a Tag

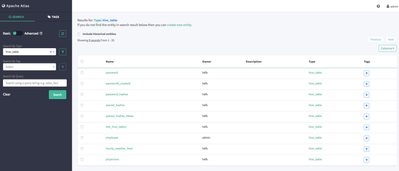

- Now that we have our PII tag, we can append it to an Atlas entity. In this case we want to search for hive_table, which is an entity type Atlas supports out of the box. Once we've found our entity, we can then search for the columns we wish to tag and subsequently mask. Figure 2

Figure 2: Navigating to our hive_table entities and searching for the employee table

- In this case, let's select the employee table, which contains an ssn column. After we've clicked on the table we wish to work with, we can navigate to our column of choice, ssn. Figure 3

Figure 3: Employee hive_table entity and subsequent columns

- Once we've clicked on the ssn column (listed under the columns key with the value ssn), we can add a tag via the + button underneath the name of the Atlas hive_column entity. Figure 4

- Let's select PII, the tag we previously created, and click add. After we've tagged the ssn (hive_column) entity, its tags key will show a blue PII tag to let the user know a tag has been applied to the entity.

Figure 4: Adding a tag via the tagging + button under ssn(hive_column)

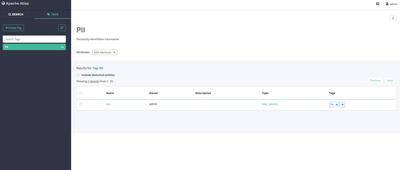

- We can now navigate back to the tags page of our UI and see that our PII tag has been applied to the ssn column. Figure 5

- This navigation and classification paradigm allows the user to mask multiple columns across a variety of datasets. This is just a simple example, but the same approach extends to other access conventions: for example, the dev_group only has access to website_user_tables, the data_science_group only has access to specific tables, and the admin_group can grant create, update, and alter SQL commands to users or groups that should have those privileges and grant only select to anyone who is not allowed to alter data.

Figure 5: The assignment of our PII tag to the employee.ssn column
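The tagging in Step 2 can also be done through the Atlas REST API mentioned in the abstract. The sketch below is hedged: it assumes the Atlas v2 endpoints for type definitions and entity classifications, and the host, port, credentials, and function names are placeholders rather than values from this tutorial. The column GUID is passed in by hand; it appears in the browser URL when the ssn entity is open in the Atlas UI.

```shell
# Assumed connection details; adjust for your cluster.
ATLAS_URL="${ATLAS_URL:-http://localhost:21000}"
ATLAS_AUTH="${ATLAS_AUTH:-admin:admin}"

# Build the JSON body that defines a tag (classification) named $1 with
# description $2. Inputs containing double quotes are not escaped here.
classification_def_json() {
  printf '{"classificationDefs":[{"name":"%s","description":"%s","superTypes":[],"attributeDefs":[]}]}' "$1" "$2"
}

# Create the tag in Atlas (equivalent to the "create tag" button).
create_tag() {
  classification_def_json "$1" "$2" |
    curl -s -u "$ATLAS_AUTH" -H 'Content-Type: application/json' \
         -X POST -d @- "$ATLAS_URL/api/atlas/v2/types/typedefs"
}

# Attach tag $2 to the entity whose GUID is $1 (for example the ssn column).
tag_entity() {
  printf '[{"typeName":"%s"}]' "$2" |
    curl -s -u "$ATLAS_AUTH" -H 'Content-Type: application/json' \
         -X POST -d @- "$ATLAS_URL/api/atlas/v2/entity/guid/$1/classifications"
}
```

A typical sequence would be `create_tag PII "Personally Identifiable Information"` followed by `tag_entity <guid-of-ssn-column> PII`. Scripting the tagging this way is mainly useful when many columns across many tables need the same classification.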

Step 3: Creating a Tag Based Policy in Ranger

- Now that we've created a table in Hive and assigned a tag in Atlas, we can create a tag based policy in Ranger.

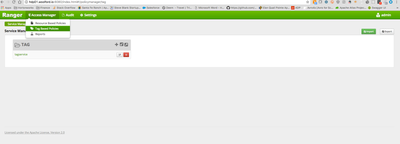

- Log into the Ranger UI and navigate to access manager >> tag based policies. Figure 1

Figure 1: Accessing the Ranger Tag Based Policies

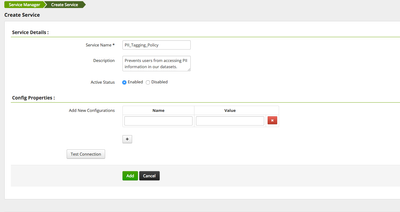

- Click the + button to create a new tag policy. Let's name ours PII_Tagging_Policy, add a description, and click Add. Figure 2

Figure 2: Creating a new tagging Service called PII_Tagging_Policy

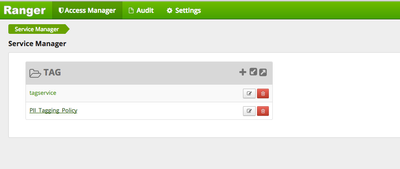

- After adding the new tag based policy, navigate back to the tag based policies service manager and click the tagging policy we just created. There are two tabs within our tagging policy: Access and Masking. Figure 3

Figure 3: Navigating to the Tagging Policy we just created

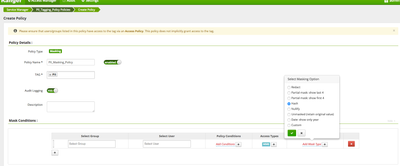

- Since we want to mask the columns we've tagged with PII in Atlas, we are going to create a masking policy. Navigate to the Masking tab and select Add New Policy. Figure 4

Figure 4: Adding a new masking policy within our PII_Tagging_Policy

- Let's name our new policy PII_Masking_Policy, and select the Atlas Tag we previously created.

- Be sure to select the user or group you want to apply this tagging policy to. In this case, I am applying the policy to a single user, admin. Our mask conditions will be for Hive, and we will select a Hash mask.

- This is where Ranger integrates with Atlas and applies a hashing policy to the column entities we selected within Atlas. The Ranger tag policy will follow our PII tag across all of our Hive datasets, and we can now manage how our policy is applied via Atlas. Figure 5

- Be sure to include the relevant user or group you'd like to apply this policy to. In this case, I chose the user admin.

Figure 5: Applying our Atlas Tag and Ranger Masking Policy

- We still need to ensure Ranger applies this policy to our Hive resource, so let's navigate to access manager >> resource based policies and edit our hive service.

- In this case, we're editing the woolford_hdp_hive service. Click the pencil icon next to the service based policy name. Figure 6

- If you do not have a hive service, you can create a new service and apply the proper settings.

Figure 6: Navigating to the resource based policies page and the hive service folder

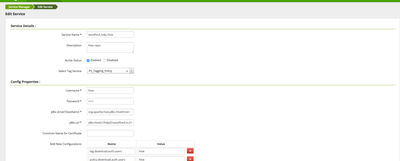

- Edit the hive service by selecting the Ranger tag policy we just created, "PII_Tagging_Policy", from the drop-down menu next to the Select Tag Service property. Figure 7

- Save your changes.

Figure 7: Applying our Ranger tagging policy to the Hive Resource
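The masking policy from Step 3 can be expressed as JSON and submitted to Ranger's public REST API rather than created in the UI. Treat the following as a sketch under assumptions: the endpoint path, the `hive:select` access type, and the `hive:MASK_HASH` mask type reflect how Ranger commonly represents tag-based Hive masking policies, but they should be checked against your Ranger version (exporting the policy built in the UI is one way to compare). The host, port, credentials, and function names are placeholders.

```shell
# Assumed connection details; adjust for your cluster.
RANGER_URL="${RANGER_URL:-http://localhost:6080}"
RANGER_AUTH="${RANGER_AUTH:-admin:admin}"

# Build a tag-based masking policy.
# Args: tag service name, policy name, Atlas tag, user to mask for.
masking_policy_json() {
  cat <<EOF
{
  "service": "$1",
  "name": "$2",
  "policyType": 1,
  "resources": {"tag": {"values": ["$3"], "isExcludes": false, "isRecursive": false}},
  "dataMaskPolicyItems": [{
    "accesses": [{"type": "hive:select", "isAllowed": true}],
    "users": ["$4"],
    "dataMaskInfo": {"dataMaskType": "hive:MASK_HASH"}
  }]
}
EOF
}

# Submit the policy to Ranger (assumed public v2 policy endpoint).
create_masking_policy() {
  masking_policy_json "$@" |
    curl -s -u "$RANGER_AUTH" -H 'Content-Type: application/json' \
         -X POST -d @- "$RANGER_URL/service/public/v2/api/policy"
}
```

With the names used in this tutorial, the call would be `create_masking_policy PII_Tagging_Policy PII_Masking_Policy PII admin`. The Hive service still has to reference the tag service, as described in the steps above, before the mask takes effect.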

Step 4: Verifying our Users cannot access PII

- Now that we've created a tag, applied that tag to an Atlas entity (our Hive column ssn from the employee table), created a Ranger tagging policy that leverages that tag and masks the information within the tagged column, and applied that tagging policy to our Hive service, we can validate our policy from the Hive UI.

- Navigate to the Hive UI and fire off a "select * from employee;" query.

- You will now see a mask over the ssn column. Figure 1

Figure 1: The Hive UI and our masked ssn column; notice the hash over our PII data
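The visual check above can be turned into a scripted one. This minimal sketch assumes the raw dummy values look like digits separated by two dashes, as in the data generated earlier; the function name all_masked is hypothetical. It reads one ssn value per line and succeeds only if none of them still looks like a raw value.

```shell
# Hypothetical check: succeed only when no input line matches the raw
# digits-dash-digits-dash-digits shape of the dummy ssn values.
# Note: empty input also succeeds, so confirm the query returned rows.
all_masked() {
  ! grep -Eq '^[0-9]+-[0-9]+-[0-9]+$'
}

# Example (not executed here), run as the user the masking policy targets;
# the JDBC URL and user name are assumptions:
#   beeline -u "jdbc:hive2://localhost:10000/default" -n admin \
#     --silent=true --showHeader=false --outputformat=tsv2 \
#     -e "select ssn from employee;" | all_masked && echo "ssn is masked"
```

Running the same query as a user outside the policy should make the check fail, which confirms that the mask is tied to the users or groups selected in the policy.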

Conclusion:

Congratulations! You're now able to implement a tagging policy that masks Personally Identifiable Information so your organization can comply with regulatory guidelines. You can also perform a number of other tasks that are very similar to the policy you've just developed. For a full listing of features, please refer to the developer guide above or post questions to the Hortonworks Community Connection. Suggestions for other tutorials are also highly encouraged.

Created on 02-23-2018 11:58 AM

I am having a problem masking fields in views.

I create a view of the table employee:

create view employee_v as select * from employee;

Then I tag the field ssn as PII in the view. When selecting from the view employee_v, the ssn field is not masked. (Masking in the table works.)

Is this a known issue or am I doing something wrong?

I am running HDP 2.6.2.

Created on 02-23-2018 07:44 PM

This is something that should be supported: http://atlas.apache.org/Bridge-Hive.html

Let me try and replicate.

Created on 08-28-2019 04:59 PM

Hi Palwell

Does the same solution work for Impala/HBase databases, tables, and columns as well as Hive?

Is there any relevant documentation you could point me to?

regards

Tag

Created on 05-22-2020 09:45 AM

We have created a new Support Video based on this topic: