How to address JVM OutOfMemory errors in NiFi

Created on 02-23-2017 02:25 PM - edited 08-17-2019 02:10 PM

NiFi works with FlowFiles. Every FlowFile consists of two parts: FlowFile content and FlowFile attributes. While a FlowFile's content lives on disk in the content repository, NiFi holds the "majority" of FlowFile attribute data in the configured JVM heap memory space. I say "majority" because NiFi swaps attributes to disk on any queue that contains more than 20,000 FlowFiles (the default, which can be changed in nifi.properties).

Once your NiFi is reporting OutOfMemory (OOM) errors, there is no corrective action other than restarting NiFi. If no changes are made to your NiFi configuration or dataflow, you are sure to encounter this issue again.

The default configuration for JVM heap in NiFi is only 512 MB. This value is set in the bootstrap.conf file.

    # JVM memory settings
    java.arg.2=-Xms512m
    java.arg.3=-Xmx512m
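As a rough illustration, the Python sketch below reads the text of a bootstrap.conf and reports the heap flags it finds. It is not part of NiFi. The function name `parse_heap_settings` is hypothetical, and the sketch assumes the heap flags appear as `java.arg.N=-Xms...` and `java.arg.N=-Xmx...` entries, as in the default settings shown above.

```python
import re

def parse_heap_settings(conf_text):
    """Return heap sizes in bytes, keyed by 'Xms' / 'Xmx', found in bootstrap.conf text."""
    units = {"k": 1024, "m": 1024 ** 2, "g": 1024 ** 3}
    settings = {}
    for line in conf_text.splitlines():
        line = line.strip()
        # Skip comments and anything that is not a key=value pair.
        if line.startswith("#") or "=" not in line:
            continue
        key, value = line.split("=", 1)
        if not key.strip().startswith("java.arg."):
            continue
        match = re.fullmatch(r"-(Xms|Xmx)(\d+)([kKmMgG]?)", value.strip())
        if match:
            flag, number, unit = match.groups()
            # A missing unit suffix means the value is already in bytes.
            settings[flag] = int(number) * units.get(unit.lower(), 1)
    return settings

sample = """# JVM memory settings
java.arg.2=-Xms512m
java.arg.3=-Xmx512m
"""

heap = parse_heap_settings(sample)
print(heap["Xmx"] // 1024 ** 2, "MB max heap")
```

Run against a real file by passing in the file's contents; the path to bootstrap.conf depends on your installation.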

While the defaults may work for some dataflows, they are going to be undersized for others. Simply increasing these values until you stop seeing OOM errors should not be your immediate go-to solution. Very large heap sizes can have adverse effects on your dataflow as well. Garbage collection takes much longer to run with very large heap sizes, and while garbage collection occurs, it is essentially a stop-the-world event. This amounts to a dataflow stoppage for the length of time it takes the collection to complete. I am not saying that you should never set large heap sizes, because sometimes that is really necessary; however, you should evaluate all other options first.

NiFi and FlowFile attribute swapping:

NiFi already has a built-in mechanism to help reduce the overall heap footprint. The mechanism swaps FlowFile attributes to disk when a given connection's queue exceeds the configured threshold. These settings are found in the nifi.properties file:

    nifi.swap.manager.implementation=org.apache.nifi.controller.FileSystemSwapManager
    nifi.queue.swap.threshold=20000
    nifi.swap.in.period=5 sec
    nifi.swap.in.threads=1
    nifi.swap.out.period=5 sec
    nifi.swap.out.threads=4

Swapping, however, will not help if your dataflow is so large that queues are high everywhere but still have not exceeded the threshold for swapping. Keep in mind that any time you decrease the swap threshold, more swapping can occur, which may reduce throughput. So here are some other things to check for.
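To make the point about queues that stay just under the threshold concrete, here is a minimal Python sketch of the arithmetic. The function names, the attribute count, and the average attribute size are all hypothetical inputs chosen for illustration. The sketch also makes two simplifying assumptions: a queue over the threshold keeps roughly the threshold's worth of FlowFiles in heap, and JVM object overhead is ignored, so real usage will be higher.

```python
def heap_resident_flowfiles(queue_sizes, swap_threshold=20000):
    """Count FlowFiles whose attributes stay in heap, and queues that would swap."""
    resident = 0
    swapping_queues = 0
    for size in queue_sizes:
        if size > swap_threshold:
            # Simplification: treat everything above the threshold as swapped out.
            swapping_queues += 1
            resident += swap_threshold
        else:
            resident += size
    return resident, swapping_queues

def estimate_attribute_heap_mb(flowfiles, attrs_per_flowfile, avg_bytes_per_attr):
    """Very rough lower bound on attribute heap usage, in MB."""
    return flowfiles * attrs_per_flowfile * avg_bytes_per_attr / (1024 * 1024)

# Hypothetical flow: 50 connections, each holding 19,000 queued FlowFiles.
queues = [19000] * 50
resident, swapping_queues = heap_resident_flowfiles(queues)
print(resident, "FlowFiles in heap;", swapping_queues, "queues swapping")

# Hypothetical attribute profile: 10 attributes of about 100 bytes each.
print(round(estimate_attribute_heap_mb(resident, 10, 100)), "MB of attribute data")
```

In this made-up flow no queue ever swaps, yet the attribute data alone is already well beyond the default 512 MB heap.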

Some common reasons for running out of heap memory include:

1. High-volume dataflow with lots of FlowFiles active at any given time across your dataflow. (Increase the configured NiFi heap size in bootstrap.conf to resolve.)

2. Creating a large number of attributes on every FlowFile. More attributes means more heap usage per FlowFile.

Avoid creating unused or unnecessary attributes on FlowFiles. (Increase the configured NiFi heap size in bootstrap.conf to resolve and/or reduce the configured swap threshold.)

3. Writing large values to FlowFile attributes. Extracting large amounts of content and writing it to an attribute on a FlowFile will result in high heap usage. Try to avoid creating large attributes when possible. (Increase the configured NiFi heap size in bootstrap.conf to resolve and/or reduce the configured swap threshold.)

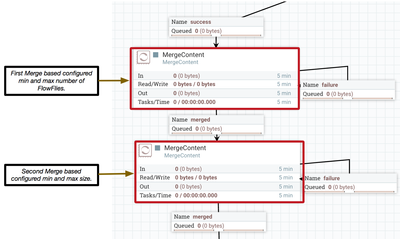

4. Using the MergeContent processor to merge a very large number of FlowFiles. NiFi cannot merge FlowFiles that are swapped, so the attributes of all these FlowFiles must be in heap when the merge occurs. If merging a very large number of FlowFiles is needed, try using two MergeContent processors in series with one another. Have the first merge a maximum of 10,000 FlowFiles and the second then merge those 10,000-FlowFile bundles into even larger bundles. (Increasing the configured NiFi heap size in bootstrap.conf can also help.)

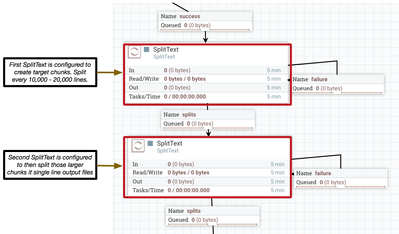

5. Using the SplitText processor to split one file into a very large number of FlowFiles. Swapping of a large connection queue will not occur until after the queue has exceeded the swapping threshold, and the SplitText processor creates all the split FlowFiles before committing them to the success relationship. This is most commonly seen when SplitText is used to split a large incoming FlowFile by every line. It is possible to run out of heap memory before all the splits can be created. Try using two SplitText processors in series. Have the first split the incoming FlowFiles into large chunks and the second split them down even further. (Increasing the configured NiFi heap size in bootstrap.conf can also help.)
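The benefit of chaining two MergeContent or two SplitText processors can be sketched with simple arithmetic. The Python below is only an illustration: `two_stage_plan` is a hypothetical helper, the input numbers are made up, and it assumes the largest batch of FlowFiles a single processor step has to hold in heap is what matters.

```python
def two_stage_plan(total_items, chunk_size):
    """Compare the largest in-heap batch for one-stage vs two-stage processing."""
    if total_items < 0 or chunk_size <= 0:
        raise ValueError("total_items must be >= 0 and chunk_size must be > 0")
    # Number of intermediate bundles (merge) or chunks (split), rounded up.
    intermediate = (total_items + chunk_size - 1) // chunk_size
    # One stage handles up to chunk_size items per batch; the other handles
    # the intermediate bundles or chunks.
    largest_two_stage_batch = max(intermediate, min(total_items, chunk_size))
    return {
        "single_stage_batch": total_items,
        "intermediate_count": intermediate,
        "largest_two_stage_batch": largest_two_stage_batch,
    }

# Hypothetical example: 1,000,000 lines (or FlowFiles), staged in groups of 10,000.
plan = two_stage_plan(1_000_000, 10_000)
print(plan)
```

With these numbers, a single processor would need to track 1,000,000 FlowFiles at once, while the two-stage layout never handles more than 10,000 in one step.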

Note: There are additional processors that can be used for splitting and joining large numbers of FlowFiles, and the same approach should be followed for those as well. I only commented specifically on MergeContent and SplitText because they are the ones most commonly used to deal with very large numbers of FlowFiles.

Created on 02-24-2017 02:48 PM

Thanks for writing this up @Matt Clarke - very helpful. Do you have a rule of thumb for a maximum heap size? Is there a limit where garbage collection will surely cause more problems than any gains from further increases in heap?