Community Articles

- Cloudera Community

- Support

- Community Articles

- How to use Hive testbench to perform benchmarks an...

- Subscribe to RSS Feed

- Mark as New

- Mark as Read

- Bookmark

- Subscribe

- Printer Friendly Page

- Report Inappropriate Content

- Subscribe to RSS Feed

- Mark as New

- Mark as Read

- Bookmark

- Subscribe

- Printer Friendly Page

- Report Inappropriate Content

Created on 09-01-2016 01:26 AM - edited 08-17-2019 10:25 AM

The hive testbench consists of a data generator and a standard set of queries typically used for benchmarking hive performance. This article describes how to generate data and run a query in using beeline and Hive 2.0 with and without LLAP. It also shows how to use explain to see the difference in query plans.

If you don't have a cluster already configured for LLAP, you can provision one in AWS using Hortonworks Cloud. See this article for instructions on how to provision a 2.5 tech preview with LLAP enabled.

1. Log into the master node in you cluster where Hive is installed. If you used Hortonworks Cloud to create your instance, locate the node with a name ending in master. The ssh command is shown next to the master instance. If you are logging in from a linux host, click on the icon to the right of the ssh command to select the command text and copy the command. In the linux shell, change to the directory containing your AWS key .pem file and the run the copied command.

If you are logging in from Windows, consult the AWS user guide for instructions on how to log in using putty with the user name cloudbreak and authenticating with the key file.

2. Sudo to the hdfs user to begin generating data. Change to the home directory for the hdfs user:

sudo -u hdfs -s cd /home/hdfs

3. Download the testbench utilities from Github and unzip them:

wget https://github.com/hortonworks/hive-testbench/archive/hive14.zip unzip hive14.zip

4. Open the load_partitioned.sql file in an editor:

vi hive-testbench-hive14/settings/load-partitioned.sql

5. Correct the hive.tez.java.opts setting:

Comment out the line below by adding -- at the beginning of the line:

-- set hive.tez.java.opts=-XX:+PrintGCDetails -verbose:gc -XX:+PrintGCTimeStamps -XX:+UseNUMA -XX:+UseG1GC -XX:+HeapDumpOnOutOfMemoryError -XX:HeapDumpPath=/tmp/;

Add the line below:

set hive.tez.java.opts=-XX:+PrintGCDetails -verbose:gc -XX:+PrintGCTimeStamps -XX:+UseNUMA -XX:+UseParallelGC -XX:+HeapDumpOnOutOfMemoryError -XX:HeapDumpPath=/tmp/;

Save the file and exit.

6. Generate 30G of test data:

/* In case GCC is not installed */ yum install gcc /* If javac is not found */ export JAVA_HOME=/usr/jdk64/jdk1.8.0_77 export PATH=$JAVA_HOME/bin:$PATH cd hive-testbench-hive14/ sudo ./tpcds-build.sh ./tpcds-setup.sh 30

7. A map reduce job runs to create the data and load the data into hive. This will take some time to complete. The last line in the script is:

Data loaded into database tpcds_bin_partitioned_orc_30.

8. Choose a query to run for benchmarking. For example query55.sql. Copy the query of of your choice and make an explain version of the query. The explain query will be helpful later on to see how hive is planning the query.

cd sample-queries-tpcds cp query55.sql explainquery55.sql vi explainquery55.sql

Add the keyword explain before the query. For example the first line of the explain of query 55:

explain select i_brand_id brand_id, i_brand brand,

Save and quit out of the file.

9. You are now ready to issue a benchmark query. Start the beeline hive2 cli.

beeline -i testbench.settings -u jdbc:hive2://localhost:10500/tpcds_bin_partitioned_orc_30

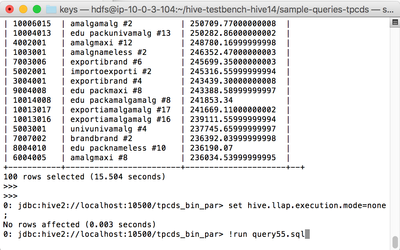

10. To try a query without LLAP, set hive.llap.execution.mode=none and run a query. For example, the command line below will run benchmark query 55:

set hive.llap.execution.mode=none; !run query55.sql

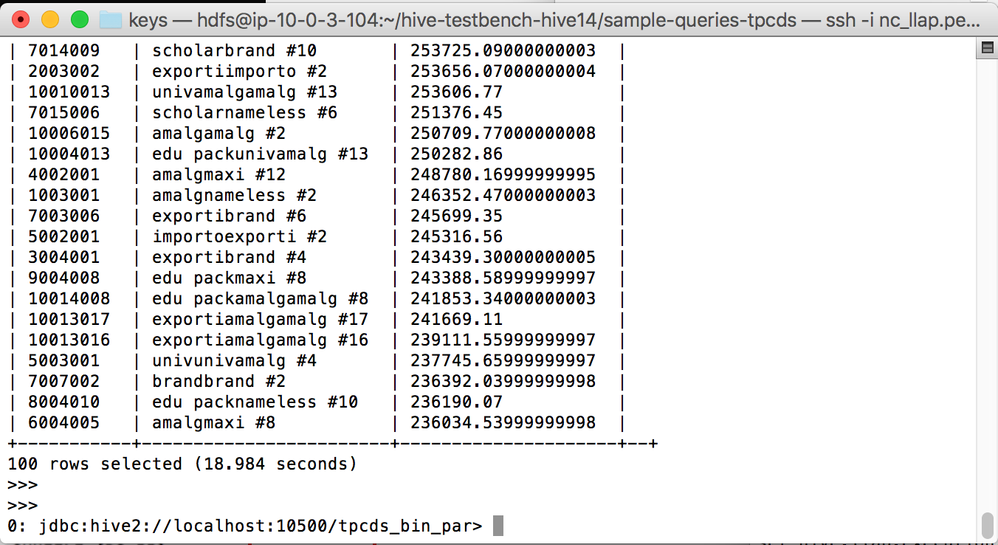

Note the completion time at the end of the query is 18.984 without LLAP:

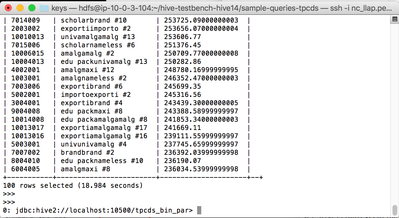

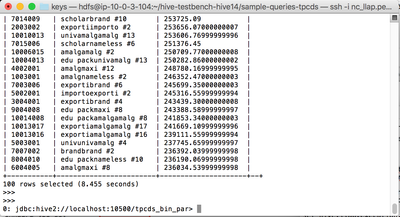

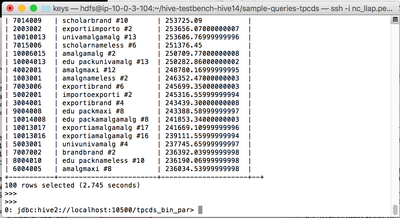

11. Now try the query with LLAP, set hive.llap.execution.mode=all and run the query again:

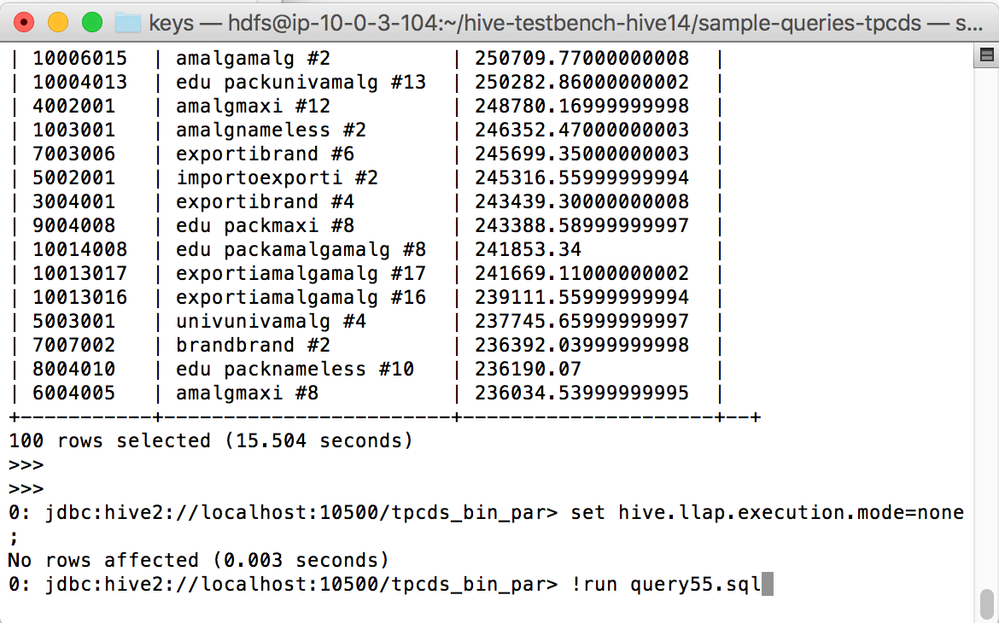

set hive.llap.execution.mode=all; !run query55.sql

12. Notice that the query with LLAP completes much more quickly. If you don’t see a significant speed up at first, try the same query again. As the LLAP cache fills with data, queries respond more quickly. Below are the results of the next two runs of the same query with LLAP set to all. The second query returned in 8.455 seconds and a subsequent query in 2.745 seconds. If your cluster has been up and you have been doing LLAP queries on this data your performance my be in the 2 second range on the first try:

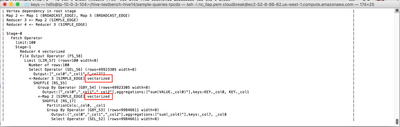

13. To see the difference between the query plans, use the explain query to show the plan for a query with no LLAP. Take note of the vectorized outlined in red in the screen shot below:

set hive.llap.execution.mode=none; !run explainquery55.sql

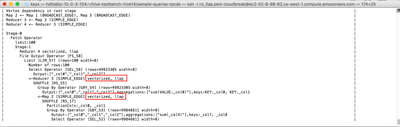

14. Try the explain again, with LLAP enabled:

set hive.llap.execution.mode=all; !run explainquery55.sql

15. Notice in the explain plan for the LLAP query, LLAP is shown after the vectorized keyword.

References:

https://github.com/hortonworks/hive-testbench

https://community.hortonworks.com/questions/51333/hive-testbench-error-when-generating-data.html

https://community.hortonworks.com/questions/23988/not-able-to-run-hive-benchmark-test.html

Created on 10-28-2016 02:47 AM

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Report Inappropriate Content

At step 6 you need to be sudo to execute ./tpcds-build.sh

Data generation at the next step is fine with hdfs user.

Created on 10-28-2016 05:41 PM

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Report Inappropriate Content

At step 5, you changed the GC mode. The recommended for LLAP is G1GC. Is there a reason for the change you proposed?

Created on 11-03-2016 02:19 PM

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Report Inappropriate Content

@Constantin Stanca The change to the gc parameters is to work around this problem in the data generation script:

https://community.hortonworks.com/questions/51333/hive-testbench-error-when-generating-data.html

The LLAP daemon process is running on the server with the correct GC parameters.

Created on 11-03-2016 02:21 PM

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Report Inappropriate Content

@Constantin Stanca I added a sudo before the build command. Thanks for the correction.

Created on 02-14-2017 11:37 PM

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Report Inappropriate Content

step #15: I do not see llap. looks like "set hive.llap.execution.mode=all;" does not work as set hive.llap.execution.mode returns hive.llap.execution.mode is undefined. Please advice.

Created on 02-15-2017 06:40 PM

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Report Inappropriate Content

@Serge Kazarin Check the port in the hive connection string and make sure the port is 10500 (hive2 interface). The hive1 interface will not understand the LLAP configuration settings.

Created on 07-13-2018 06:56 AM

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Report Inappropriate Content

Hi! May I ask what's the content of testbench.settings file? I can't find the file.. 😞

beeline -i testbench.settings -u jdbc:hive2://localhost:10500/tpcds_bin_partitioned_orc_30

Thanks!

Created on 07-13-2018 08:16 AM

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Report Inappropriate Content

Already found the file 🙂 sorry for the trouble...

just had some error running testbench

Error: Error while compiling statement: FAILED: SemanticException [Error 10001]: Line 3:6 Table not found 'date_dim' (state=42S02,code=10001)

Error: Error while compiling statement: FAILED: SemanticException [Error 10001]: Line 13:1 Table not found 'lineitem' (state=42S02,code=10001)

Created on 07-15-2018 02:53 AM

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Report Inappropriate Content

@Michael Dennis "MD" Danang I think there may have been an issue generating the test data. It looks like some of the benchmark tables did not get generated correctly. Try the generation again.

Created on 05-30-2021 01:17 AM

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Report Inappropriate Content

[hdfs@c****-node* hive-testbench-hive14]$ ./tpcds-build.sh

Building TPC-DS Data Generator

make: Nothing to be done for `all’.

TPC-DS Data Generator built, you can now use tpcds-setup.sh to generate data.

[hdfs@c4237-node2 hive-testbench-hive14]$ ./tpcds-setup.sh 2

TPC-DS text data generation complete.

Loading text data into external tables.

make: *** [time_dim] Error 1

make: *** Waiting for unfinished jobs....

make: *** [date_dim] Error 1

Data loaded into database tpcds_bin_partitioned_orc_2.

INFO : OK

+---------------------+

| database_name |

+---------------------+

| default |

| information_schema |

| sys |

+---------------------+

3 rows selected (1.955 seconds)

0: jdbc:hive2://c4237-node2.coelab.cloudera.c>

tpcds_bin_partitioned_orc_2 database is not created, I have some issues in testing the tpcds queries

sudo -u hdfs -s

13 cd /home/hdfs

14 wget https://github.com/hortonworks/hive-testbench/archive/hive14.zip

15 unzip hive14.zip

17 export JAVA_HOME=/usr/jdk64/jdk1.8.0_77

18 export PATH=$JAVA_HOME/bin:$PATH

./tpcds-build.sh

beeline -i testbench.settings -u "jdbc:hive2://c****-node9.coe***.*****.com:10500/tpcds_bin_partitioned_orc_2"

I'm not able to test the tpcds queries, any help would be appreciated.