Created on 01-07-2019 08:46 PM - edited 08-17-2019 04:57 AM

Ingesting Drone Data From DJI Ryze Tello Drones Part 1 - Setup and Practice

In Part 1, we will set up our drone and our communication environment, capture the data, and do an initial analysis. Eventually we will grab the live video stream for object detection and add real-time flight control and real-time ingest of photos, videos, and sensor readings. Apache NiFi will react to live situations facing the drone and issue flight commands via UDP.

In this initial section, we control the drone with Python, which NiFi can trigger. Apache NiFi ingests log data stored as CSV files on a NiFi node connected to the drone's WiFi. This will eventually move to a dedicated embedded device running MiNiFi.

This is a small personal drone with less than 13 minutes of flight time per battery. It is not a commercial drone, but it gives you an idea of what you can do with drones.

Drone Live Communications for Sensor Readings and Drone Control

You must connect to the drone's WiFi network, which will be named Tello(Something).

Tello IP: 192.168.10.1

UDP PORT:8889
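
The command channel above can be exercised from plain Python. The following is a minimal sketch, assuming the drone accepts the plain-text commands of the official Tello SDK (such as `command`, `takeoff`, and `land`) on this port. TelloPy, which the rest of this article uses, speaks a different, binary protocol over the same port. The helper name `send_command` and the 5-second timeout are illustrative choices, not part of any library.

```python
import socket

# IP address and UDP port listed above.
TELLO_ADDRESS = ("192.168.10.1", 8889)


def send_command(sock, command, address=TELLO_ADDRESS, timeout=5.0):
    """Send one text command over UDP and return the decoded reply.

    Returns None if no reply arrives within `timeout` seconds.
    """
    sock.settimeout(timeout)
    sock.sendto(command.encode("utf-8"), address)
    try:
        reply, _ = sock.recvfrom(1024)
    except socket.timeout:
        return None
    return reply.decode("utf-8", errors="replace").strip()
```

With the computer joined to the drone's WiFi, calling `send_command(sock, "command")` on a fresh `socket.socket(socket.AF_INET, socket.SOCK_DGRAM)` should put the drone into SDK mode before any flight command is sent. UDP gives no delivery guarantee, so a `None` result means the command may or may not have arrived.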

Receive Tello Video Stream

Tello IP: 192.168.10.1

UDP Server: 0.0.0.0

UDP PORT:11111
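
Receiving the stream amounts to binding a UDP socket on the listed port and reading datagrams. This is a minimal sketch, assuming the drone has already been told to start streaming (the official Tello SDK does this with the `streamon` text command) and that the payload is raw H.264 that still needs a decoder such as the `av` or `opencv-python` packages installed below. The function `receive_datagrams` and its parameters are hypothetical names.

```python
import socket


def receive_datagrams(host="0.0.0.0", port=11111, max_packets=10, timeout=5.0):
    """Bind a UDP socket and collect up to `max_packets` raw datagrams."""
    packets = []
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.bind((host, port))
        sock.settimeout(timeout)
        try:
            while len(packets) < max_packets:
                data, _ = sock.recvfrom(2048)
                packets.append(data)
        except socket.timeout:
            pass  # nothing more arrived in time; return what we have
    return packets
```

The returned byte strings are undecoded video data. In practice they would be fed to a decoder rather than kept in a list.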

Example Install:

pip3.6 install tellopy

git clone https://github.com/hanyazou/TelloPy

pip3.6 install av

pip3.6 install opencv-python

pip3.6 install image

python3.6 -m tellopy.examples.video_effect

Example Run Video:

https://www.youtube.com/watch?v=mYbStkcnhsk&t=0s&list=PL-7XqvSmQqfTSihuoIP_ZAnN7mFIHkZ_e&index=18

Example Flight Log: Tello-flight log.pdf.

Let's build a quick ingest with Apache NiFi 1.8.

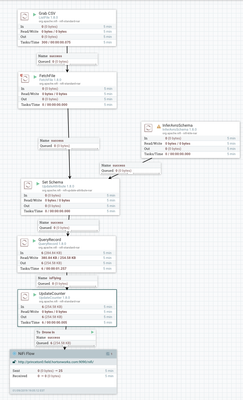

As a first step, we use a local Apache NiFi instance to read the CSV files produced by a local drone run.

We read the CSVs from the Tello logging directory, add a schema definition and query it.

We have a controller service for CSV processing. We are using the posted schema and the Jackson CSV parser. We want to ignore the header, as it has invalid characters.

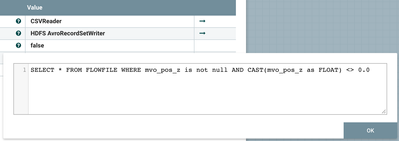

We use a QueryRecord processor to find records in which the Z position has changed, that is, where it is non-null and non-zero.

SELECT * FROM FLOWFILE WHERE mvo_pos_z is not null AND CAST(mvo_pos_z as FLOAT) <> 0.0
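
For readers less familiar with QueryRecord, the same predicate can be expressed in plain Python. This only illustrates the filter and is not how NiFi evaluates it. The function name `rows_with_z_movement` is made up for the example, and the sketch assumes a header row with column names, whereas the real flow ignores the header and relies on the schema.

```python
import csv
import io


def rows_with_z_movement(csv_text):
    """Return rows whose mvo_pos_z is present and parses to a non-zero float."""
    kept = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        value = row.get("mvo_pos_z")
        if value is None or value.strip() == "":
            continue  # treat a missing or empty value as NULL
        try:
            z = float(value)
        except ValueError:
            continue  # illustrative choice: skip values that are not numbers
        if z != 0.0:
            kept.append(row)
    return kept
```

Given three rows with `mvo_pos_z` values of `0.00`, `-0.35`, and an empty string, only the `-0.35` row is kept.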

We also convert from CSV to Apache Avro format for further processing.

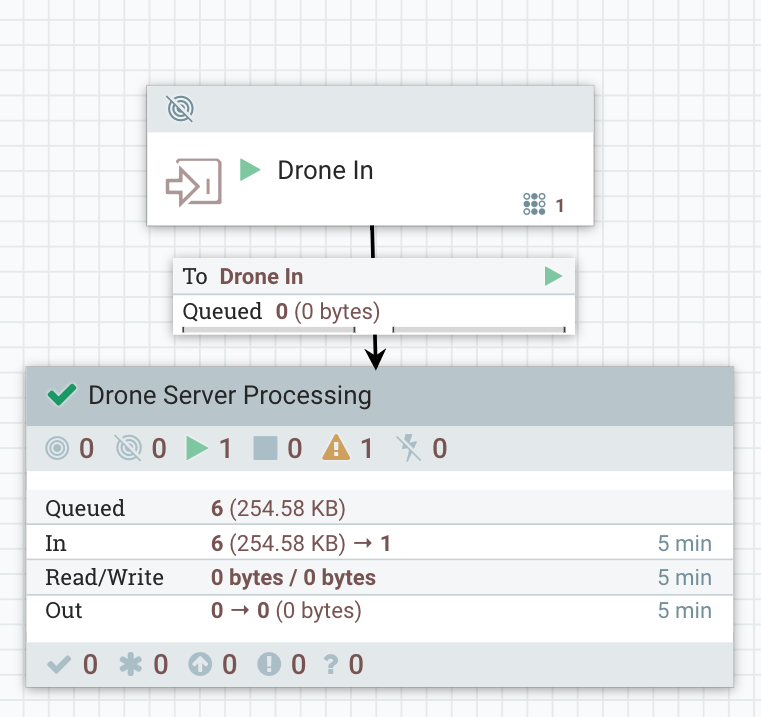

Valid records are sent over HTTP(S) Site-to-Site to a cloud-hosted Apache NiFi cluster, which processes them further and saves them to an HBase table.

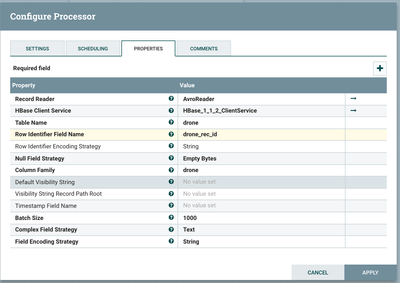

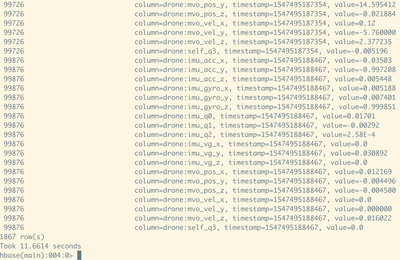

As you can see, it's trivial to store these records in HBase.

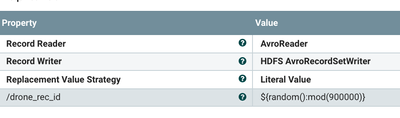

For HBase, our data didn't have a record identifier, so I used the UpdateRecord processor to create one and add it to the data. I updated the schema to include this field (with a default, and allowing nulls).
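
The article does not show how the identifier is generated, so the following is only a sketch of the idea in Python, assuming a random UUID is an acceptable row key. The function name `add_record_id` is hypothetical. Only the field name `drone_rec_id` comes from the schema below.

```python
import uuid


def add_record_id(record, field="drone_rec_id"):
    """Return a copy of `record` with a unique identifier added if it lacks one."""
    enriched = dict(record)
    if not enriched.get(field):
        # Assumption: any unique string works as the HBase row key.
        enriched[field] = str(uuid.uuid4())
    return enriched
```

Records that already carry an identifier pass through unchanged, so the step can safely be applied more than once.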

As you can see it's pretty easy to store data to HBase.

Schema:

{ "type" : "record", "name" : "drone",

"fields" : [

{ "name" : "drone_rec_id", "type" : [ "string", "null" ], "default": "1000" },

{ "name" : "mvo_vel_x", "type" : ["double","null"], "default": 0.0 },

{ "name" : "mvo_vel_y", "type" : ["string","null"], "default": "0.00" },

{ "name" : "mvo_vel_z", "type" : ["double","null"], "default": 0.0 },

{ "name" : "mvo_pos_x", "type" : ["string","null"], "default": "0.00" },

{ "name" : "mvo_pos_y", "type" : ["double","null"], "default": 0.0 },

{ "name" : "mvo_pos_z", "type" : ["string","null"], "default": "0.00" },

{ "name" : "imu_acc_x", "type" : ["double","null"], "default": 0.0 },

{ "name" : "imu_acc_y", "type" : ["double","null"], "default": 0.0 },

{ "name" : "imu_acc_z", "type" : ["double","null"], "default": 0.0 },

{ "name" : "imu_gyro_x", "type" : ["double","null"], "default": 0.0 },

{ "name" : "imu_gyro_y", "type" : ["double","null"], "default": 0.0 },

{ "name" : "imu_gyro_z", "type" : ["double","null"], "default": 0.0 },

{ "name" : "imu_q0", "type" : ["double","null"], "default": 0.0 },

{ "name" : "imu_q1", "type" : ["double","null"], "default": 0.0 },

{ "name" : "imu_q2", "type" : ["double","null"], "default": 0.0 },

{ "name" : "self_q3", "type" : ["double","null"], "default": 0.0 },

{ "name" : "imu_vg_x", "type" : ["double","null"], "default": 0.0 },

{ "name" : "imu_vg_y", "type" : ["double","null"], "default": 0.0 },

{ "name" : "imu_vg_z", "type" : ["double","null"], "default": 0.0 } ] }

The updated schema now has a record id. The original schema derived from the raw data does not.

Store the Data in HBase Table

Soon we will be storing in Kudu, Impala, Hive, Druid and S3.

create 'drone', 'drone'

Source:

We are using the TelloPy interface. You need to clone its GitHub repository and drop in the files from nifi-drone.

https://github.com/hanyazou/TelloPy/

https://github.com/tspannhw/nifi-drone

Apache NiFi Flows:

References:

- https://github.com/hanyazou/TelloPy

- https://gobot.io/blog/2018/04/20/hello-tello-hacking-drones-with-go/

- https://github.com/grofattila/dji-tello

- https://github.com/dbaldwin/droneblocks-tello-python

- https://medium.com/@makerhacks/programming-the-ryze-dji-tello-with-python-eecd56fc2c27

- https://github.com/Ubotica/telloCV/

- https://www.instructables.com/id/Ultimate-Intelligent-Fully-Automatic-Drone-Robot-w/

- https://github.com/hybridgroup/gobot/tree/master/platforms/dji/tello

- https://www.ryzerobotics.com/tello

- https://dl-cdn.ryzerobotics.com/downloads/Tello/20180404/Tello_User_Manual_V1.2_EN.pdf

- https://dl-cdn.ryzerobotics.com/downloads/Tello/20180212/Tello+Quick+Start+Guide_V1.2+multi.pdf

- https://dl-cdn.ryzerobotics.com/downloads/tello/20180910/Tello%20Scratch%20README.pdf

- https://dl-cdn.ryzerobotics.com/downloads/tello/20180910/scratch0907.7z

- https://www.ryzerobotics.com/tello/downloads

- https://www.hackster.io/econnie323/alexa-voice-controlled-tello-drone-760615

- https://tellopilots.com/forums/tello-development.8/

- https://medium.com/@swalters/dji-ryze-tello-drone-gets-reverse-engineered-46a65d83e6b5

- http://www.fabriziomarini.com/2018/04/java-udp-drone-tello.html?m=1

- https://github.com/microlinux/tello/blob/master/tello.py

- https://github.com/hybridgroup/gophercon-2018/blob/master/drone/tello/README.md

- https://tellopilots.com/threads/object-tracking-with-tello.1480/

- https://github.com/gnamingo/jTello/blob/master/JTello.java

- https://github.com/microlinux/tello/blob/master/README.md

- https://steemit.com/python/@makerhacks/programming-the-ryze-dji-tello-with-python

- https://github.com/dji-sdk/Tello-Python

- https://github.com/dji-sdk/Tello-Python/tree/master/Tello_Video_With_Pose_Recognition

- https://github.com/DaWelter/h264decoder

- https://github.com/twilightdema/h264j

- http://jcodec.org/

- https://github.com/cisco/openh264

- https://github.com/hanyazou/TelloPy/blob/develop-0.7.0/tellopy/examples/video_effect.py
