Community Articles

- Cloudera Community

- Support

- Community Articles

- Ingesting SQL Server Tables into Hive via Apache N...

- Subscribe to RSS Feed

- Mark as New

- Mark as Read

- Bookmark

- Subscribe

- Printer Friendly Page

- Report Inappropriate Content

- Subscribe to RSS Feed

- Mark as New

- Mark as Read

- Bookmark

- Subscribe

- Printer Friendly Page

- Report Inappropriate Content

Created on 03-08-2017 02:02 PM - edited 08-17-2019 01:56 PM

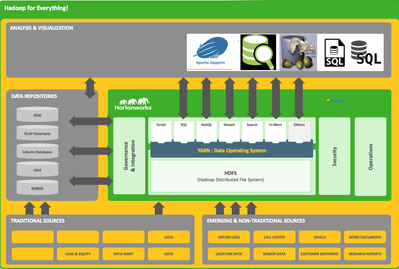

We needed to create a data lake of all the companies data, the first set of data was from SQL Server. So using Apache NiFi 1.1.x I ingested it into Hive / ORC. A few of the smaller constantly changing tables need to stay in SQL Server so we need to be able to join tables in Hive with tables in SQL Server. Fortunately, Microsoft provides a very cool extension to SQL Server called Polybase that let's us build external tables pointing to Hadoop. Once those tables are referenced they act like regular tables. So now all the companies data including other data sources loaded into Hadoop Hive ORC tables can be queried and joined. And it's fast!

Step 1: Apache NiFi Magic

QueryDatabaseTable: One for each table picking a sequence id primary key the tables have. Could also do timestamp.

ConvertAVROtoORC: Point to /etc/hive/conf/hive-site.xml

PutHDFS: Store in a separate HDFS, write this down as we need it for polybase.

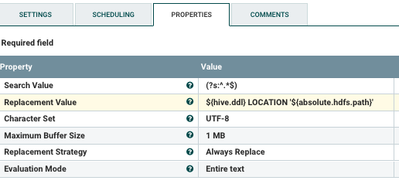

ReplaceText (GenerateHiveDDL): Builds create Hive table string automagically. You can do this manually.

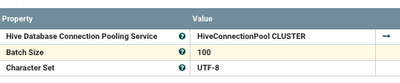

PutHiveQL: Runs the Hive table creation DDL. You can do this manually.

For my example, I had six tables to do. So I just copied that set of processors and made 5 copies and changed table names and HDFS directories. That's all folks.

Step 2: Prepare Polybase

Change the yarn-site.xml file on the SQL Server machine to point to the HDP 2.5.

E:\Program Files\Microsoft SQL Server\MSSQL13.MSSQLSERVER\MSSQL\Binn\Polybase\Hadoop\conf\yarn-site.xml

<property> <name>yarn.application.classpath</name> <value>$HADOOP_CONF_DIR,/usr/hdp/current/hadoop-client/*,/usr/hdp/current/hadoop-client/lib/*,/usr/hdp/current/hadoop-hdfs-client/*,/usr/hdp/current/hadoop-hdfs-client/lib/*,/usr/hdp/current/hadoop-yarn-client/*,/usr/hdp/current/hadoop-yarn-client/lib/*</value> </property>

Step 3: Run DDL Necessary for Polybase Access to Hadoop

CREATE EXTERNAL DATA SOURCE [HDP2] WITH( TYPE = HADOOP, LOCATION = 'hdfs://hadoopserver:8020') CREATE EXTERNAL FILE FORMAT ORC WITH ( FORMAT_TYPE =ORC ); CREATE EXTERNAL TABLE [dbo].[myTableIsExcellent] ( [myid] int NULL, [yourid] int NULL, [theirid] varchar(64) NULL, [somedata] varchar(255) NULL, [somedata] int NULL) WITH (LOCATION='/import/mydirectory/', DATA_SOURCE =HDP2, FILE_FORMAT =ORC );

2b. Create an external data source pointing to your HDFS on HDP

2c. Create an external file format like ORC that your tables use. I recommend ORC.

2d. Create your external tables pointing to their HDFS directories containing ORC files.

Step 4: Polybase Federated Query

SELECT TOP (1000) [id], hs.[id], hs.[name], c.[description] FROM [database].[dbo].[MyHiveTable] hs, [LocalSQLServerTableName] c WHERE c.id = hs.id ORDER BY c.name desc

You don't get much easier than that. Looks like a regular table, acts like a regular table, queries like a regular table. Users won't know or care where the data is. They don't have to know you have 100 petabytes of data sitting in a massive Hortonworks Data Platform.

References:

- https://msdn.microsoft.com/en-us/library/mt163689.aspx

- http://blog.pragmaticworks.com/sql-server-2016-polybase

- https://msdn.microsoft.com/en-us/library/dn935026.aspx

- https://hernandezpaul.wordpress.com/2016/05/29/polybase-query-service-and-hadoop-welcome-sql-server-2016/

- https://realizeddesign.blogspot.com/2015/09/setting-up-polybase-for-yarn-in-sql.html

- https://blogs.msdn.microsoft.com/sqlcat/2016/06/21/polybase-setup-errors-and-possible-solutions/

Created on 04-08-2018 11:37 AM

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Report Inappropriate Content

But this approach will be painful if you have 2000+ tables to move from MS SQL using Nifi, also Using GenerateTableFetch gives error (Order by clause cannot be null or empty when using row paging) what to do in that case?

Created on 04-25-2018 02:00 PM

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Report Inappropriate Content

You can use executesql

You can use sqoop for initial export