Key factors that affect Zeppelin's performance

Created on 08-13-2018 02:02 PM - edited on 10-07-2019 11:18 PM by ask_bill_brooks

Target Audience: This article is intended for readers who have some operational experience with Zeppelin. It aims to give a fair idea of the key factors that play a significant role in Zeppelin's stability and performance.

Disclaimer: This article is based on my personal experience and knowledge. Don't treat it as a standard guideline; understand the concepts and adapt them to your environment's best practices and use case.

A common question is: how many users can share a single Zeppelin instance?

It may vary from 10 to 50 users. We can't give a single number as an answer, because Zeppelin is a multi-purpose notebook used for a variety of use cases with different interpreters and interpreter modes.

Below are the factors to consider when deciding how many users can share a single instance in an environment. Tuning these factors can often give a considerable performance boost, so work through the checks below before scaling out to more instances. For scaling out, please refer to Zeppelin Multiple Instances.

- Zeppelin Memory:

To get better performance, increase Zeppelin's memory if the system has enough resources and the number of users is high. The default is 1 GB; the ZEPPELIN_MEM option can be tuned in zeppelin_env_content. Insufficient Zeppelin memory is often the cause of slow UI responses. Run "jmap -heap <zeppelin PID>" as the zeppelin user to analyse heap usage at Zeppelin's peak utilisation times.

- Livy:

Prefer Livy over the spark/spark2 interpreters. Follow the link Advantages of Livy to see why.

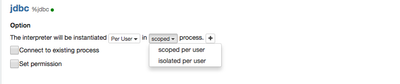

- Interpreter binding mode:

Available modes are 'shared', 'scoped' and 'isolated'.

- In 'shared' mode, every notebook bound to the interpreter setting shares a single interpreter instance (lower memory consumption). If your primary interpreter is in shared mode, you should typically increase ZEPPELIN_INTP_MEM in zeppelin_env_content for better performance.

- In 'scoped' mode, each notebook creates a new interpreter instance in the same interpreter process.

- In 'isolated' mode, each notebook creates a new interpreter process. (Memory consumption is higher, but every user gets their own interpreter process, so an interpreter restart does not affect other users.)

Important: Before choosing isolated mode, check that your system has enough resources. For example, with 30 users and 1 GB of interpreter memory each, you will need 30 GB of RAM available on the Zeppelin node.

Note: Users should restart their own interpreter session using the button on the notebook page instead of the interpreter page, which would restart sessions for all users.
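The sizing rule above can be sketched as a small calculation. This is only a rough estimate under stated assumptions: the function name and parameters are hypothetical, it counts interpreter heap only (no JVM overhead, no Zeppelin server memory), and it assumes one interpreter process per notebook in isolated mode, as described above.

```python
def estimated_interpreter_ram_gb(mode: str, notebooks: int, intp_mem_gb: float) -> float:
    """Rough upper bound on interpreter heap (in GB) for one interpreter setting."""
    if notebooks < 0 or intp_mem_gb < 0:
        raise ValueError("notebooks and intp_mem_gb must be non-negative")
    if mode in ("shared", "scoped"):
        # Shared and scoped modes run inside a single interpreter process.
        processes = 1 if notebooks > 0 else 0
    elif mode == "isolated":
        # Isolated mode starts one interpreter process per notebook.
        processes = notebooks
    else:
        raise ValueError(f"unknown binding mode: {mode}")
    return processes * intp_mem_gb


if __name__ == "__main__":
    for mode in ("shared", "scoped", "isolated"):
        print(mode, estimated_interpreter_ram_gb(mode, notebooks=30, intp_mem_gb=1))
```

With 30 notebooks and 1 GB each, the isolated case gives the 30 GB figure from the example above, while shared and scoped stay at a single process.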

- Logging:

If DEBUG logging is enabled, you will see considerable slowness in UI response, especially when saving interpreter configuration and when creating a new notebook. (Only enable DEBUG while troubleshooting, and only if it is absolutely necessary.)

- Execution Mode:

If your Spark code runs locally (the interpreter setting's "master" property is set to "local[*]"), your Zeppelin node must have enough resources for it. Always use distributed mode on the interpreter wherever available.

Note: The first run of most interpreters will be slower, since it has to create the context and launch the Application Master.

- Storage:

The disk used for storage shouldn't become a bottleneck when multiple I/O operations are performed. I recommend HDFS storage if you are using HDP 2.6.3+ (refer to Enabling HDFS Storage in HDP-2.6.3+).

- Multiple hung processes:

Sometimes unhandled exceptions leave stale processes behind, which severely affects performance; you might see content unavailable, and even a clean restart will not fix it. Check whether any old processes (those with a start timestamp before the Zeppelin restart) are running as the zeppelin user and kill them: ps -ef |grep ^zeppelin. You can also use ps -L -u zeppelin |wc -l to see how many threads Zeppelin has currently spawned and analyse the trend. You should see only 30-60 after a restart.
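As a minimal sketch of the stale-process check, the helper below flags processes that have been running longer than the time since the last Zeppelin restart. The function names are hypothetical, and it assumes a procps-style ps that supports the etimes (elapsed seconds) output column; it only reports PIDs and leaves the decision to kill them to you.

```python
import subprocess
from typing import List


def stale_pids(ps_output: str, seconds_since_restart: int) -> List[int]:
    """Return PIDs whose elapsed run time exceeds the time since the last restart.

    Expects lines of the form "<pid> <elapsed seconds> <command...>".
    """
    stale = []
    for line in ps_output.splitlines():
        parts = line.split(None, 2)
        if len(parts) < 2:
            continue  # skip blank or malformed lines
        pid, elapsed = int(parts[0]), int(parts[1])
        if elapsed > seconds_since_restart:
            stale.append(pid)
    return stale


def list_user_processes(user: str = "zeppelin") -> str:
    """Run ps for one user; assumes procps-style 'etimes' is available."""
    result = subprocess.run(
        ["ps", "-u", user, "-o", "pid=", "-o", "etimes=", "-o", "args="],
        capture_output=True,
        text=True,
        check=False,
    )
    return result.stdout
```

For example, if Zeppelin was restarted an hour ago, stale_pids(list_user_processes(), 3600) lists the processes that predate the restart and deserve a closer look.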

- Browser:

The browser plays a vital role, as visualisation is one of Zeppelin's key objectives: the browser has to load the notebook (say your notebook is 10 MB) and display its content and output. In older versions of IE you might even find that you can't sign out properly. I personally recommend using the latest Chrome.

- Clearing the notebook/paragraph's output:

Make it a practice to clear the output of any notebook or paragraph that has a large result. The last result is stored in note.json, so many paragraphs with huge results increase the size of note.json, which becomes hard for the browser and the JVM to hold and slows down the UI response.
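To find which paragraphs are worth clearing, a sketch like the following reports the stored result size per paragraph. It assumes a note.json layout with a top-level "paragraphs" list whose entries carry an "id" and a "results" field; that layout may differ between Zeppelin versions, and the function name is hypothetical. It only reads the note and never modifies it.

```python
import json
from typing import Dict, List, Tuple


def paragraph_result_sizes(note: Dict) -> List[Tuple[str, int]]:
    """Return (paragraph id, result size in bytes) pairs, largest first."""
    sizes = []
    for paragraph in note.get("paragraphs", []):
        results = paragraph.get("results")
        if results is None:
            continue  # paragraph has no stored output
        size = len(json.dumps(results).encode("utf-8"))
        sizes.append((paragraph.get("id", "<no id>"), size))
    return sorted(sizes, key=lambda item: item[1], reverse=True)


def load_note(path: str) -> Dict:
    """Load a note.json file from disk."""
    with open(path, encoding="utf-8") as handle:
        return json.load(handle)
```

Calling paragraph_result_sizes(load_note(path)) on a copy of a slow notebook's note.json shows which paragraphs hold the bulk of the stored output, so they can be cleared from the UI.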

Created on 08-22-2018 02:36 PM

Thanks for the Zeppelin articles Franklin!

Could you elaborate on the Execution mode 'master' value, and what options there are/when to use each? I'm having trouble finding a good resource on them.

Created on 08-27-2018 04:45 PM

@Nick Lewis, we removed livy.spark.master in zeppelin-0.7 because we suggest that users use livy 0.3 with zeppelin-0.7, and livy 0.3 doesn't allow livy.spark.master to be specified; it enforces yarn-cluster mode (refer here). As you are aware, yarn-cluster mode is always preferable; after all, using the cluster's resources is wise.

For spark/spark2,

1) local[*] (local mode) --> everything is processed locally on the Zeppelin node; not so useful in a distributed environment. If the Spark home is not set, it will use the built-in Spark version (not your cluster's).

2) yarn-client mode --> the driver runs on the Zeppelin node. It is the default YARN mode for Spark interpreters in current HDP versions (recommended up to HDP 2.6.x, as it is designed to work this way).

3) yarn-cluster mode --> you can't directly specify yarn-cluster as the master option (in HDP 2.6.x, I believe; I tested on HDP 2.6.3). Instead, you can use the workaround below to get yarn-cluster mode. This option is available from 0.8.0 (jira).

master yarn

spark.submit.deployMode cluster
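The three options above can be summarised as a lookup of interpreter properties. This is only an illustrative sketch: the function name is hypothetical, the "yarn-client" master value is an assumption based on the mode name, and the yarn-cluster entry simply mirrors the two-property workaround shown above.

```python
from typing import Dict


def spark_master_properties(mode: str) -> Dict[str, str]:
    """Return the Spark interpreter properties for a given execution mode."""
    settings = {
        # Everything runs on the Zeppelin node.
        "local": {"master": "local[*]"},
        # Driver runs on the Zeppelin node, executors on YARN (assumed value).
        "yarn-client": {"master": "yarn-client"},
        # Workaround from the reply above: master plus deploy mode.
        "yarn-cluster": {"master": "yarn", "spark.submit.deployMode": "cluster"},
    }
    if mode not in settings:
        raise ValueError(f"unknown execution mode: {mode}")
    return dict(settings[mode])


if __name__ == "__main__":
    for name, value in spark_master_properties("yarn-cluster").items():
        print(name, value)
```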

Created on 01-07-2020 10:29 PM - edited 01-07-2020 10:31 PM

We removed livy.spark.master in zeppelin-0.7 because we suggest that users use livy 0.3 with zeppelin-0.7, and livy 0.3 doesn't allow livy.spark.master to be specified by default; it enforces yarn-cluster mode (refer here). As you are aware, yarn-cluster mode is always preferable; after all, using the cluster's resources is wise.

yarn-cluster mode --> you can't directly specify yarn-cluster as the master option (in HDP 2.6.x, I believe; I tested on HDP 2.6.3). Instead, you can use the workaround below to get yarn-cluster mode. This option is available from 0.8.0 (jira).

master yarn

spark.submit.deployMode cluster

Hope this helps!