Community Articles

- Cloudera Community

- Making single HiveServer2 instance handle more...

Created on 07-30-2018 04:27 PM - edited 08-17-2019 06:43 AM

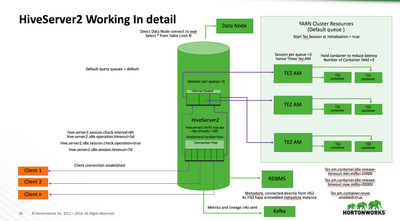

HiveServer2 (HS2) is a Thrift server that acts as a thin service layer for interacting with the HDP cluster.

It supports both JDBC and ODBC drivers, providing a SQL layer for querying the data.

An incoming SQL query is converted to either a Tez or a MapReduce (MR) job; the results are fetched and sent back to the client. No heavy lifting is done inside HS2. It simply hosts the Tez/MR driver, scans metadata information, and applies Ranger policies for authorization.

Configurations:

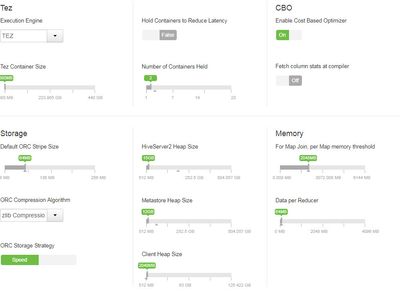

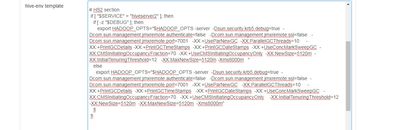

Tez parameters

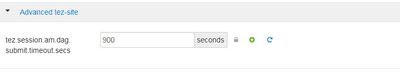

- Tez Application Master Waiting Period (in seconds) -- Specifies the amount of time in seconds that the Tez Application Master (AM) waits for a DAG to be submitted before shutting down. For example, to set the waiting period to 15 minutes (15 minutes x 60 seconds per minute = 900 seconds):

tez.session.am.dag.submit.timeout.secs=900

- Enable Tez Container Reuse -- This configuration parameter determines whether Tez will reuse the same container to run multiple queries. Enabling this parameter improves performance by avoiding the memory overhead of reallocating container resources for every query.

tez.am.container.reuse.enabled=true

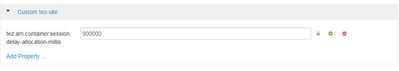

- Tez Container Holding Period (in milliseconds) -- Specifies the amount of time in milliseconds that a Tez session will retain its containers. For example, to set the holding period to 15 minutes (15 minutes x 60 seconds per minute x 1000 milliseconds per second = 900000 milliseconds):

tez.am.container.session.delay-allocation-millis=900000

A holding period of a few seconds is preferable when multiple sessions are sharing a queue. However, a short holding period negatively impacts query latency.
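The unit conversions behind the Tez values above can be sketched in a few lines of Python. This is only an illustration of the arithmetic: the function names are hypothetical, and the 15-minute figures are the article's examples rather than recommendations for every cluster.

```python
# Convert human-friendly durations into the units the Tez properties expect.
# Function names are hypothetical helpers, not part of Tez or Hive.

def minutes_to_seconds(minutes: int) -> int:
    """tez.session.am.dag.submit.timeout.secs is expressed in seconds."""
    return minutes * 60


def minutes_to_millis(minutes: int) -> int:
    """tez.am.container.session.delay-allocation-millis is in milliseconds."""
    return minutes * 60 * 1000


def tez_session_properties(wait_minutes: int, hold_minutes: int) -> dict:
    """Build the three Tez properties discussed above as name -> value."""
    return {
        "tez.session.am.dag.submit.timeout.secs": str(minutes_to_seconds(wait_minutes)),
        "tez.am.container.reuse.enabled": "true",
        "tez.am.container.session.delay-allocation-millis": str(minutes_to_millis(hold_minutes)),
    }


if __name__ == "__main__":
    # Print the properties in key=value form for a 15-minute wait and hold.
    for name, value in tez_session_properties(15, 15).items():
        print(f"{name}={value}")
```

When several sessions share a queue, the same helper can be called with a much smaller holding period to see what value would be set.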

HiveServer2 Parameters

Heap configurations:

GC tuning:

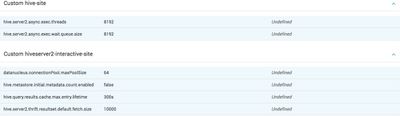

Database scan disabling and session initialization parameters:

Tuning OS parameters (on the nodes where HS2, the metastore, and ZooKeeper are running):

set net.core.somaxconn=16384

set net.core.netdev_max_backlog=16384

set net.ipv4.tcp_fin_timeout=10
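The three kernel settings above are normally applied with `sysctl -w key=value` or persisted in a sysctl configuration file, rather than typed literally as `set ...`. The sketch below is one possible way to render such a fragment and compare it with the live values under `/proc/sys`. The helper names are hypothetical, and it assumes a Linux host where a sysctl key maps to a path by replacing dots with slashes (true for these three keys).

```python
from pathlib import Path

# Target values taken from the article; tune them for your own hosts.
TARGETS = {
    "net.core.somaxconn": 16384,
    "net.core.netdev_max_backlog": 16384,
    "net.ipv4.tcp_fin_timeout": 10,
}


def render_sysctl_conf(targets: dict) -> str:
    """Render the targets as 'key = value' lines for a sysctl config file."""
    return "\n".join(f"{key} = {value}" for key, value in targets.items()) + "\n"


def proc_path(key: str) -> Path:
    """Map a sysctl key to its file under /proc/sys (dots become slashes)."""
    return Path("/proc/sys") / key.replace(".", "/")


def current_value(key: str):
    """Return the live kernel value, or None if it cannot be read."""
    try:
        return int(proc_path(key).read_text().split()[0])
    except (OSError, ValueError, IndexError):
        return None


if __name__ == "__main__":
    print(render_sysctl_conf(TARGETS), end="")
    for key, wanted in TARGETS.items():
        print(f"{key}: current={current_value(key)} wanted={wanted}")
```

Reading `/proc/sys` needs no special privileges, so the comparison can be run on each HS2, metastore, and ZooKeeper node before anything is changed.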

Disconnecting idle connections to reduce the memory footprint (values can also be given in minutes or seconds):

Default

hive.server2.session.check.interval=6h

hive.server2.idle.operation.timeout=5d

hive.server2.idle.session.check.operation=true

hive.server2.idle.session.timeout=7d

Proactively closing connections

hive.server2.session.check.interval=60000 (60000 ms = 1 min)

hive.server2.idle.session.timeout=300000 (300000 ms = 5 min)

hive.server2.idle.session.check.operation=true
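To compare the default and proactive settings on one scale, the values can be normalised to milliseconds. The parser below is a simplified sketch, not Hive's own implementation: it assumes only the suffixes `ms`, `s`, `m`, `h`, and `d`, and treats a bare number as milliseconds, which matches how the article reads 60000 as one minute.

```python
import re

# Milliseconds per unit. This is a simplified suffix table for illustration;
# it is an assumption, not a copy of Hive's own parser.
_UNIT_MS = {"ms": 1, "s": 1_000, "m": 60_000, "h": 3_600_000, "d": 86_400_000}


def to_millis(value: str, default_unit: str = "ms") -> int:
    """Convert a value such as '6h', '5d' or '60000' to milliseconds."""
    match = re.fullmatch(r"\s*(\d+)\s*([a-z]*)\s*", value.lower())
    if match is None:
        raise ValueError(f"not a time value: {value!r}")
    number = int(match.group(1))
    unit = match.group(2) or default_unit
    if unit not in _UNIT_MS:
        raise ValueError(f"unsupported unit: {unit!r}")
    return number * _UNIT_MS[unit]


def describe(settings: dict) -> dict:
    """Normalise every setting in a name -> value mapping to milliseconds."""
    return {name: to_millis(value) for name, value in settings.items()}


if __name__ == "__main__":
    defaults = {
        "hive.server2.session.check.interval": "6h",
        "hive.server2.idle.operation.timeout": "5d",
        "hive.server2.idle.session.timeout": "7d",
    }
    proactive = {
        "hive.server2.session.check.interval": "60000",
        "hive.server2.idle.session.timeout": "300000",
    }
    for label, settings in (("default", defaults), ("proactive", proactive)):
        for name, millis in describe(settings).items():
            print(f"{label:9} {name} = {millis} ms")
```

Seen this way, the proactive values check for idle sessions every minute instead of every six hours, and close them after five minutes instead of seven days.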

Connection pool (size it according to how many concurrent connections you expect):

General

hive.server2.thrift.max.worker.threads=1000 (if the worker threads are exhausted, no incoming requests will be served)

Things to watch out for:

1. Making more than 60 connections to HS2 from a single machine will result in failures, because ZooKeeper rate limits them.

2. Don't forget to disable the DB scan for every connection.

3. Watch out for memory leak bugs (HIVE-20192, HIVE-19860), and make sure the version of HS2 you are using is patched.

4. Watch out for the connection limit on your backend RDBMS.

5. Depending on your usage, you will need to fine-tune heap and GC settings, so keep an eye on the frequency of full and minor GCs.

6. Using add jar leads to a class loader memory leak in some versions of HS2, so keep an eye on it.

7. Remember that in Hadoop the client always retries against any service, so look for retry messages in the HS2 logs and tune the service to handle connections seamlessly.

8. HS2 has no upper threshold on the number of connections it can accept; it is limited by its heap and by how quickly the other services it depends on respond.

9. Keep an eye on CPU consumption on the machine hosting HS2, to make sure the process has enough CPU to work under high concurrency.

Created on 08-14-2018 06:56 PM

Hi Gautam,

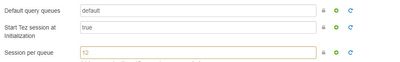

Firstly, great article, it helps a lot. Could you please explain the sessions per queue parameter in a little more detail? Currently this value is set to 1 by default on our cluster. Does that mean that only one query would be serviced at any given point in time by HS2 Interactive?

Created on 03-25-2019 02:47 PM

Thanks for the article. Can you explain in more detail what "Don't forget to disable DB scan for every connection." means and how one would disable it?

Created on 01-10-2022 02:46 AM

How do I disable the DB scan for every search, or when connecting through Beeline?