Monitoring Kafka with Burrow - Part 1

Created on 04-18-2016 06:47 PM - edited 08-17-2019 12:42 PM

This article, part 1, introduces Burrow’s design, gives an overview of the features meant to address some of Kafka’s known monitoring challenges, and provides a step-by-step tutorial for setting up Burrow on a sandbox. Part 2 will explore consumer lag evaluation rules, HTTP endpoint APIs, and email and HTTP notifiers. Stay tuned for part 2, to be released in May.

The source reference is Burrow’s documentation wiki: https://github.com/linkedin/Burrow/wiki

Why Monitor Kafka?

Collecting metrics helps with troubleshooting and makes it possible to set alerts on conditions that require action.

What to Monitor?

Process state, memory usage, swap usage, network bandwidth, disk usage, disk IO, under-replicated partitions, offline partitions, active controller brokers, incoming messages per second, incoming/outgoing bytes per second, requests per second, total and split time to process a request, disputed leader elections rate, asynchronous disk log flush and time, unclean leader election rate, number of partitions, ISR shrink/expansion rate, network processor average idle %, messages by which the consumer lags behind the producer (MaxLag), minimum rate at which consumer sends requests to the Kafka broker, messages consumed per second per consumer topic, bytes consumed per second, rate at which consumer commits offsets to Kafka, partitions owned by a consumer.

Standard Kafka Consumer Monitor Limitations

The standard Kafka consumer has a built-in metric to track MaxLag; however, it has several flaws:

- The MaxLag sensor that the consumer provides is only valid as long as the consumer is active. This can be partly addressed by monitoring other sensors, such as MinFetchRate; however, combining multiple metrics is cumbersome and still only monitors the partition that is furthest behind.

- Spot checking topics hides problems. Problems could appear on topics that are not monitored for a specific consumer. Other misses occur when a consumer thread dies or when the topic monitored is not covered by all consumer processes.

- Measuring lag for wildcard consumers can be overwhelming. Lag is calculated per-partition, so for 100 topics with 10 partitions each, that is 1000 measurements to review. Aggregating the numbers leads to masking problems and makes it difficult to assess the situation.

- Setting thresholds for lag is a losing proposition. When a topic has a sudden spike of messages that crosses a monitoring threshold, alerts will go off. This does not necessarily mean that the consumer has a problem. Furthermore, the consumer is also only committing offsets periodically, which means that the lag measurement (when not using MaxLag) follows a sawtooth pattern. This requires a threshold far enough above the top of the pattern at peak traffic, which means it takes longer before you know there is a problem when not at peak.

- Lag alone does not tell what has happened. When the lag goes up to a high number and levels off, a single number is insufficient to determine whether the consumer has failed or there is a reporting error, or to judge whether the consumer is processing efficiently and able to catch up.
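The sawtooth effect mentioned in the threshold item above can be illustrated with a small simulation. This is a minimal sketch with made-up numbers: the production rate, commit interval, and alert threshold are assumptions chosen for illustration, not values taken from Kafka or Burrow. It models a consumer that keeps pace with the producer perfectly but commits its offsets only every few seconds.

```python
def observed_lag(produce_rate, commit_interval, seconds):
    """Lag seen by an external checker for a healthy consumer that
    commits its offset only every `commit_interval` seconds."""
    lags = []
    committed = 0
    for t in range(1, seconds + 1):
        head = produce_rate * t      # latest offset on the broker at time t
        if t % commit_interval == 0:
            committed = head         # the consumer commits everything it has read
        lags.append(head - committed)
    return lags


# Hypothetical workload: 100 messages/second, commits every 5 seconds.
lags = observed_lag(produce_rate=100, commit_interval=5, seconds=12)
print(lags)

# A fixed threshold placed inside the sawtooth fires on a healthy consumer.
threshold = 300
alerts = [t for t, lag in enumerate(lags, start=1) if lag > threshold]
print(alerts)
```

The measured lag climbs and resets even though the consumer never falls behind, so a threshold of 300 raises alerts at seconds 4 and 9. Raising the threshold above the peaks removes the false alarms but delays detection of a real problem.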

What is Burrow?

Burrow is under active development by the Data Infrastructure Streaming SRE team at LinkedIn. It is written in Go, published under the Apache License, and hosted at github.com/linkedin/Burrow.

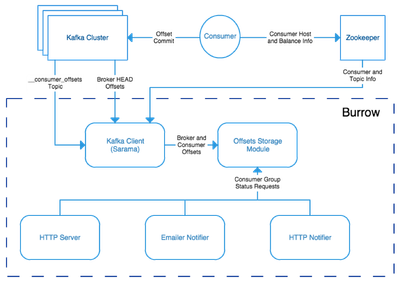

Burrow's high level design is presented in the diagram below.

Burrow automatically monitors all consumers and every partition that they consume. It does it by consuming the special internal Kafka topic to which consumer offsets are written. Burrow then provides consumer information as a centralized service that is separate from any single consumer. Consumer status is determined by evaluating the consumer's behavior over a sliding window. For each partition, data is recorded to answer the following questions:

- Is the consumer committing offsets?

- Are consumer offset commits increasing?

- Is the lag increasing?

- Is the lag increasing consistently, or fluctuating?

The information is distilled down into a status for each partition, and then into a single status for the consumer. A consumer is either OK, in a WARNING state (the consumer is working but falling behind), or in an ERROR state (the consumer has stopped or stalled). This status is available through a simple HTTP request to Burrow, or it can be periodically checked and sent out via email or to a separate HTTP endpoint (such as a monitoring or notification system). The HTTP request endpoints for getting information about the Kafka cluster and consumers, separate from the lag status, are very useful for applications that assist with managing Kafka clusters when it is not possible to run a Java Kafka client.
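To make the idea of a sliding-window evaluation more concrete, here is a deliberately simplified sketch. It is not Burrow's actual rule set (the real evaluation rules are the subject of part 2); the function names, the sample layout, and the decision logic are all assumptions made for illustration. It only shows how the four questions above could be reduced to a per-partition status and then to a single consumer status.

```python
from typing import Dict, List, Tuple

# One sample per offset commit: (committed_offset, lag), oldest first.
Sample = Tuple[int, int]

# Severity order used to pick the worst partition status.
SEVERITY = {"OK": 0, "WARNING": 1, "ERROR": 2}


def partition_status(window: List[Sample]) -> str:
    """Simplified, hypothetical rules; NOT Burrow's real evaluation logic."""
    if len(window) < 2:
        return "OK"                      # not enough data to judge
    offsets = [offset for offset, _ in window]
    lags = [lag for _, lag in window]
    if any(lag == 0 for lag in lags):
        return "OK"                      # the consumer caught up within the window
    if offsets[-1] <= offsets[0]:
        return "ERROR"                   # commits are not advancing while lag remains
    if all(later > earlier for earlier, later in zip(lags, lags[1:])):
        return "WARNING"                 # working, but lag increases consistently
    return "OK"                          # lag fluctuates without a clear trend


def consumer_status(partitions: Dict[str, List[Sample]]) -> str:
    """The consumer takes the worst status among its partitions."""
    statuses = (partition_status(window) for window in partitions.values())
    return max(statuses, key=SEVERITY.get, default="OK")


healthy = [(100, 50), (200, 0), (300, 40)]
falling_behind = [(100, 10), (150, 60), (200, 120)]
stalled = [(100, 10), (100, 60), (100, 120)]

print(consumer_status({"topic-0": healthy, "topic-1": falling_behind}))
print(consumer_status({"topic-0": healthy, "topic-1": stalled}))
```

Note that no fixed lag threshold appears anywhere: the status depends only on how offsets and lag move across the window.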

For example, if we have configured Burrow with a Kafka cluster named local which has a consumer group named kafkamirror_aggregate, a simple HTTP GET request to Burrow using the path /v2/kafka/local/consumer/kafkamirror_aggregate/status can show us that the consumer is working correctly:

{"error":false,"message":"consumer group status returned","status":{"cluster":"local","group":"kafkamirror_aggregate","status":"OK","complete":true,"partitions":[ ]}}

It can also show us when the consumer is not working correctly, and specifically which topics and partitions are having problems:

{"error":false,"message":"consumer group status returned","status":{"cluster":"local","group":"kafkamirror_aggregate","status":"WARN","complete":true,"partitions":[{"topic":"very_busy_topic","status":"WARN","partition":1,"start":{"timestamp":1433033218951,"lag":248314281,"offset":303081219},"end":{"timestamp":1433033758950,"lag":251163129,"offset":3035669403}}]}}
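A client that cannot run a Java Kafka client can consume this response with nothing more than an HTTP library and a JSON parser. The sketch below uses only the Python standard library. The helper names (status_url, fetch_status, summarize) are hypothetical, and the base URL passed to them (for example http://localhost:8000) is an assumption that depends on how the HTTP server is set up in your burrow.cfg. The sample payload is the WARN response shown above, so the parsing part runs without a live Burrow instance.

```python
import json
from urllib.request import urlopen


def status_url(base_url, cluster, group):
    """Build the consumer status path described in the article."""
    return f"{base_url}/v2/kafka/{cluster}/consumer/{group}/status"


def fetch_status(base_url, cluster, group):
    """Fetch and decode the status document (requires a running Burrow)."""
    with urlopen(status_url(base_url, cluster, group), timeout=10) as resp:
        return json.loads(resp.read().decode("utf-8"))


def summarize(response):
    """Return the group status and (topic, partition, status, latest lag)
    for every partition that Burrow reported as problematic."""
    status = response["status"]
    problems = [
        (p["topic"], p["partition"], p["status"], p["end"]["lag"])
        for p in status["partitions"]
    ]
    return status["status"], problems


# The WARN response from the article, used here as offline sample data.
sample = json.loads("""
{"error":false,"message":"consumer group status returned","status":{"cluster":"local","group":"kafkamirror_aggregate","status":"WARN","complete":true,"partitions":[{"topic":"very_busy_topic","status":"WARN","partition":1,"start":{"timestamp":1433033218951,"lag":248314281,"offset":303081219},"end":{"timestamp":1433033758950,"lag":251163129,"offset":3035669403}}]}}
""")

group_status, problems = summarize(sample)
print(group_status)
for topic, partition, state, lag in problems:
    print(f"{topic}[{partition}] {state} lag={lag}")
```

Against a live instance, summarize(fetch_status(base_url, "local", "kafkamirror_aggregate")) would return the same kind of tuple, with an empty problem list when the group is OK.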

Why Burrow?

Burrow was created to address problems at LinkedIn with standard Kafka consumer monitoring, in particular for wildcard consumers such as mirror makers and audit consumers. Instead of checking offsets for specific consumers periodically, it monitors the stream of all committed offsets and continually calculates lag over a sliding window.

- Multiple Kafka cluster support - Burrow supports any number of Kafka clusters in a single instance. You can also run multiple copies of Burrow in parallel and only one of them will send out notifications.

- All consumers, all partitions - If the consumer is committing offsets to Kafka (not Zookeeper), it will be available in Burrow automatically. Every partition it consumes will be monitored simultaneously, avoiding the trap of just watching the worst partition (MaxLag) or spot checking individual topics.

- Status can be checked via HTTP request - There's an internal HTTP server that provides topic and consumer lists, can give you the latest offsets for a topic either from the brokers or from the consumer, and lets you check consumer status.

- Continuously monitor groups with output via email or a call to an external HTTP endpoint - Configure emails to send for bad groups, checked continuously. Or you can have Burrow call an HTTP endpoint into another system for handling alerts.

- No thresholds - Status is determined over a sliding window and does not rely on a fixed limit. When a consumer is checked, it has a status indicator that tells whether it is OK, a warning, or an error, and the partitions that caused it to be bad are provided.

Burrow is currently limited to monitoring consumers that are using Kafka-committed offsets. This method (new in Apache Kafka 0.8.2) replaces the previous method of committing offsets to Zookeeper.

How Do I Get Started with Burrow?

For the purpose of this demo, I used the HDP 2.4 sandbox, which runs on CentOS release 6.7, and I used sudo to execute the following commands.

1. Install and set up Go.

Go to /tmp and download the latest Go version for Linux 64-bit.

$ cd /tmp
$ wget https://storage.googleapis.com/golang/go1.6.linux-amd64.tar.gz

Extract the binary files to /usr/local/go.

$ tar -C /usr/local -xzf /tmp/go1.6.linux-amd64.tar.gz

For easy access, symlink your installed binaries in /usr/local/go to /usr/local/bin, which should be in your default $PATH in your shell.

$ ln -s /usr/local/go/bin/go /usr/local/bin/go
$ ln -s /usr/local/go/bin/godoc /usr/local/bin/godoc
$ ln -s /usr/local/go/bin/gofmt /usr/local/bin/gofmt
$ export GOROOT=/usr/local/go

Add the $GOROOT/bin directory to your $PATH by adding the following line to your ~/.profile file.

export PATH=$PATH:$GOROOT/bin

You now have the working go binary for version 1.6 (at the time of this article, 1.6 is the latest version).

$ go version
go version go1.6 linux/amd64

Create a workspace for Go projects.

$ mkdir /workspace
$ cd /workspace
$ mkdir go
$ export GOPATH=/workspace/go
$ cd go
$ mkdir src
$ cd src
$ mkdir github.com
$ cd github.com
$ mkdir linkedin
$ cd linkedin

2. Install the latest Go Package Manager version. GPM is used to automatically pull in the dependencies for Burrow so you don't have to deal with that complexity.

$ cd /tmp
$ wget https://raw.githubusercontent.com/pote/gpm/v1.4.0/bin/gpm && chmod +x gpm && sudo mv gpm /usr/local/bin

3. Install the git client:

$ yum install git
$ git --version
git version 1.7.1

Note: installing the git client will prove useful at step 5.

4. Clone the Burrow repository:

$ cd $GOPATH/src/
$ go get github.com/linkedin/burrow

5. Build and install Burrow:

$ export GOBIN=$GOPATH/bin
$ cd /workspace/go/src/github.com/linkedin/burrow
$ gpm install

Because of a change made eight months ago, gpm seems unable to fetch all the dependencies; some packages were migrated to a different repository, gopkg.in/gcfg.v1. The workaround is to clone the missing package from GitHub:

$ cd $GOPATH/src/gopkg.in
$ git clone https://github.com/go-gcfg/gcfg.git

Then rename the cloned directory and build:

$ cd $GOPATH/src/github.com/linkedin/burrow
$ mv $GOPATH/src/gopkg.in/gcfg/ $GOPATH/src/gopkg.in/gcfg.v1
$ go install

Finally, the executable can be found in $GOBIN.

6. Run Burrow:

Go to $GOPATH/src/github.com/linkedin/burrow/config and save a copy of burrow.cfg as burrow.cfg.orig. Then edit burrow.cfg to match your environment and, for simplicity, copy it to $GOBIN. You can also specify the path where the config file is stored:

$ $GOPATH/bin/burrow --config path/to/burrow.cfg

For information on how to write your configuration file, see https://github.com/linkedin/Burrow/wiki/Configuration

Created on 06-03-2016 01:58 PM

Hello,

Thank you very much! The workaround about gopkg.in/gcfg.v1 was really useful.

Nevertheless, you should verify some commands that are not working for me. Ex:

$ yum install -> needs sudo

$ cd $GOPATH/src/github.com/linkedin/Burrow -> should be burrow

You need to execute this command before "go install"

mv $GOPATH/src/gopkg.in/gcfg/ $GOPATH/src/gopkg.in/gcfg.v1

Otherwise you will get

onfig.go:16:2: cannot find package "gopkg.in/gcfg.v1" in any of:

Also, I think it is not required to "add the GOROOT/bin directory to your $PATH" because you are already creating symbolic links. Alternatively, you may use "sudo yum install golang".

Thanks again, this post helped me a lot.

Created on 06-11-2016 11:03 PM

Thank you so much for your review. Your findings were spot-on. I had a few typos and omitted a mv command. Excellent catches.