ORC Creation Best Practices

Created on 12-30-2016 09:03 PM - edited 08-17-2019 06:22 AM

Synopsis.

ORC is a columnar storage format for Hive.

This document explains how the way ORC data files are created can improve read/scan performance when querying the data. The TEZ execution engine provides different ways to optimize a query, but it does best with correctly created ORC files.

ORC Creation Strategy.

Example:

CREATE [EXTERNAL] TABLE OrcExampleTable (clientid int, name string, address string, age int) stored as orc TBLPROPERTIES ( "orc.compress"="ZLIB", "orc.compress.size"="262144", "orc.create.index"="true", "orc.stripe.size"="268435456", "orc.row.index.stride"="3000", "orc.bloom.filter.columns"="clientid,age,name");

How data is ingested into Hive tables should depend heavily on usage patterns. For queries to run efficiently, ORC files should be created to support those patterns.

- Identify the most important/frequent queries that will run against your data set (based on filter or JOIN conditions)

- Configure an optimal data file size

- Configure stripe and stride size

- Distribute and sort data during ingestion

- Run “analyze” table to keep statistics updated

Usage Patterns.

Filters are mainly used in the “WHERE” clause and in “JOIN … ON”. Information about the fields used in filters should also guide the choice of strategy for creating ORC files.

Example:

select * from orcexampletable where clientid=100 and age between 25 and 45;

Does size matter?

Small files are a well-known pain in HDFS. ORC files are no different from others; if anything, they are worse.

First of all, small files impact NameNode memory and performance. More important, though, is query response time. If ingestion jobs generate small files, there will be a large number of files in total.

When a query is submitted, TEZ needs information about the files in order to build an execution plan and allocate resources from YARN.

So, before the TEZ engine starts a job:

- TEZ gets information from HCat about the table location and partition keys. Based on this information, TEZ has the exact list of directories (and subdirectories) where data files can be found.

- TEZ reads ORC footers and stripe-level indices in each file to determine how many blocks of data it will need to process. This is where a large number of files impacts job submission time.

- TEZ requests containers based on the number of input splits. Again, small files leave less flexibility in configuring input split size and, as a result, a larger number of containers has to be allocated.

Note: if the query submit stage times out, check the number of ORC files (also see below how the ORC split strategy (ETL vs BI) can affect query submission time).

There is always a trade-off between ingestion performance and query performance. Keep the number of ORC files being created to a minimum, while still meeting an acceptable level of ingestion performance and data latency.

For transactional data ingested continuously during the day, set up a daily table/partition rebuild process to optimize the number of files and the data distribution.
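
A daily rebuild could look roughly like the sketch below. The table `orc_events` and its partition column `dt` are hypothetical names chosen for illustration, and the column list is borrowed from the example table above. The plain `DISTRIBUTE BY clientid` is only a placeholder: the range-based expression described under "Sorting and Distribution" gives non-overlapping value ranges per file.

```sql
-- Hypothetical table: orc_events, partitioned by dt, stored as ORC.

-- Option 1: merge the partition's small ORC files in place.
-- For ORC this merges at the stripe level, so rows are not re-sorted.
ALTER TABLE orc_events PARTITION (dt='2016-12-30') CONCATENATE;

-- Option 2: rewrite the partition so the files are both fewer and sorted.
-- The number of reducers bounds the number of output files.
set mapred.reduce.tasks=20;

INSERT OVERWRITE TABLE orc_events PARTITION (dt='2016-12-30')
SELECT clientid, name, address, age
FROM orc_events
WHERE dt='2016-12-30'
DISTRIBUTE BY clientid   -- placeholder; a range expression avoids overlapping ranges
SORT BY clientid;
```

Option 1 is cheaper but only reduces the file count; Option 2 costs a full rewrite and also restores the sort order.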

Stripes and Strides.

ORC files are splittable at the stripe level. Stripe size is configurable and should depend on the average length (size) of records and on how many unique values the sorted fields can have. If the search-by field is unique (or almost unique), decrease the stripe size; if it is heavily repeated, increase it. While the default is 64 MB, keep the stripe size between ¼ of the block size and 4 block sizes (the default ORC block size is 256 MB). Along with that, you can tune the input split size per job to decrease the number of containers required for a job. Sometimes it is even worth reconsidering the HDFS block size (the default HDFS block size is 128 MB).
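
As a rough sketch of where these knobs live: stripe size is a table property, and on TEZ the input split size is usually steered through the split grouping settings. The byte values below are assumptions picked to fall inside the ranges discussed above, not recommendations.

```sql
-- Stripe size is a per-table property; it applies to files written afterwards.
-- 128 MB is an assumed value between 1/4 block (64 MB) and 4 blocks (1 GB).
ALTER TABLE OrcExampleTable SET TBLPROPERTIES ("orc.stripe.size"="134217728");

-- Input split grouping for a TEZ job, in bytes (assumed: 128 MB to 1 GB).
-- Larger groups mean fewer splits and therefore fewer containers.
set tez.grouping.min-size=134217728;
set tez.grouping.max-size=1073741824;
```

Existing files keep the stripe size they were written with, so a changed table property only shows up after the data is rewritten.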

A stride is a set of records for which a range index (min/max and some additional stats) is created. Stride size is a number of records (default 10K): for unique (or close to unique) combinations of values of the fields in the bloom filter, go with 3-7 K records; for non-unique combinations, 7-15 K records or even more. If the bloom filter contains unsorted fields, that is also a reason to go with a smaller number of records per stride.

A bloom filter can be used on the sorted field in combination with additional fields that can participate in the search-by clause.
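
For instance, for a table searched mostly by a near-unique `clientid`, the stride and bloom filter settings might be adjusted as sketched here. The stride of 5000 is an assumed value from the 3-7 K range above, and `orc.bloom.filter.fpp` (the bloom filter false-positive probability) is shown at its documented default only to make the knob visible.

```sql
-- Near-unique search key: smaller stride, bloom filter on the key column.
-- Like other ORC table properties, this affects newly written files only.
ALTER TABLE OrcExampleTable SET TBLPROPERTIES (
  "orc.row.index.stride"="5000",
  "orc.bloom.filter.columns"="clientid",
  "orc.bloom.filter.fpp"="0.05"
);
```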

Sorting and Distribution.

What matters most for efficient search within a data set is how the set is stored.

Since TEZ utilizes ORC file-level information (min/max range index per field, bloom filter, etc.), it is important that those ranges give the best reference to the exact block of data holding the desired values.

Here is an example:

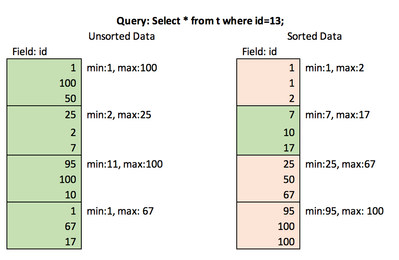

This example shows that with unsorted data, TEZ will request 4 containers and may end up doing a full table scan, while with sorted data it needs only a single container for a single stripe read.

The best result can be achieved by globally sorting the data in a table (or partition).

Global sorting in Hive (“ORDER BY”) forces a single reducer to sort the final data set, which can be inefficient. That is where “DISTRIBUTE BY” helps.

For example, let’s say we have a daily partition with 200 GB and a field “clientid” that we would like to sort by. Assuming we have enough power (cores) to run 20 parallel reducers, we can:

1. Limit the number of reducers to 20 (mapred.reduce.tasks)

2. Distribute all the records to 20 reducers equally:

insert into … select … from … distribute by floor(clientid / ((<max(clientid)> - <min(clientid)> + 1) / 20)) sort by clientid;

- Note: this works well if client ID values are distributed evenly between the min and max values. If that is not the case, find a better distribution function, but ensure that the ranges of values going to different reducers do not intersect.

3. Alternatively, use PIG to order by client id (with parallel 20).
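
To make steps 1 and 2 concrete, here is a sketch with the placeholders filled in. The min and max client IDs (1 and 1000000) and the source table `staging_clients` are assumptions for illustration. It also subtracts the minimum before dividing, a small variation on the formula above, so that the bucket value runs from 0 to 19.

```sql
-- Assumed for illustration: min(clientid) = 1, max(clientid) = 1000000,
-- so each of the 20 ranges is (1000000 - 1 + 1) / 20 = 50000 ids wide.
set mapred.reduce.tasks=20;

INSERT INTO TABLE orcexampletable
SELECT clientid, name, address, age
FROM staging_clients                          -- hypothetical source table
DISTRIBUTE BY floor((clientid - 1) / 50000)   -- range bucket 0..19
SORT BY clientid;
```

Rows with the same bucket value always go to the same reducer, and `SORT BY` orders each reducer's output, so each output file covers contiguous, sorted ranges of `clientid`.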

Usage.

There is a good article on query optimization:

I would only add the following items to consider when working with ORC:

- A proper input split size for the query job results in fewer resources (cores/memory/containers) being allocated.

- set hive.hadoop.supports.splittable.combineinputformat=true;

- set hive.exec.orc.split.strategy=ETL; -- this helps only for scans of specific values; if a full table scan is required anyway, use the default (HYBRID) or BI.

- Check out other TEZ/ORC parameters on this page: https://cwiki.apache.org/confluence/display/Hive/Configuration+Properties
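
Put together, a session for the selective query from the "Usage Patterns" example might start like this sketch. The two `hive.optimize.*` settings are not mentioned above; they are added here on the assumption that predicate pushdown into the ORC reader is wanted, since without it the min/max indexes and bloom filters are not used to skip data.

```sql
-- Push filter predicates down to the ORC reader (assumed additions).
set hive.optimize.ppd=true;
set hive.optimize.index.filter=true;

-- Split strategy from the list above; suited to scans for specific values.
set hive.exec.orc.split.strategy=ETL;

select * from orcexampletable where clientid=100 and age between 25 and 45;
```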

Analyze table.

Once the data is ingested and ready, run:

analyze table t [partition p] compute statistics [for columns c,...];

Refer to https://cwiki.apache.org/confluence/display/Hive/Column+Statistics+in+Hive for more details.

Note: the ANALYZE statement is time consuming. The more columns are analyzed, the longer it takes to complete.
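
As an illustration, for the example table it may be enough to analyze only the columns used in filters. The partitioned table `orc_events` and its partition column `dt` in the last statement are hypothetical.

```sql
-- Table-level statistics (row count, size, etc.).
ANALYZE TABLE orcexampletable COMPUTE STATISTICS;

-- Column statistics only for the columns used in filters and joins.
ANALYZE TABLE orcexampletable COMPUTE STATISTICS FOR COLUMNS clientid, age;

-- For a partitioned table, limit the work to the freshly loaded partition.
ANALYZE TABLE orc_events PARTITION (dt='2016-12-30')
  COMPUTE STATISTICS FOR COLUMNS clientid;
```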

Let me know if you have more tips in this area!

Created on 12-31-2016 02:02 AM

This is really awesome.

Created on 08-10-2017 11:00 PM

The article says that TEZ gets information from HCat. Does this mean Tez uses the hcat client library to talk to the Hive Metastore to get that information?

Created on 07-20-2022 01:34 AM

Can it be read from PySpark?

Created on 07-20-2022 01:50 AM

@mala_etl, as this is an older article, you would have a better chance of receiving a resolution by starting a new thread. This will also be an opportunity to provide details specific to your environment that could aid others in assisting you with a more accurate answer to your question. You can link this article as a reference in your new post.

Created on 07-20-2022 02:08 AM

@VidyaSargur, thank you.

Created on 09-15-2023 02:06 AM

I use CDH 6.3.2 (Hive 2.1, Hadoop 3.0), running Hive on Spark on a YARN cluster, with:

hive.merge.sparkfiles=true;

hive.merge.orcfile.stripe.level=true;

With this configuration, the output files of 1099 reducers are merged into one file when the result is small, and the merged file then contains about 1099 stripes.

Reading that file is very slow.

I then tried:

hive.merge.orcfile.stripe.level=false;

The result is what I wanted: one small file with a single stripe, which reads fast.

Can anyone explain the difference between true and false?

Why is "hive.merge.orcfile.stripe.level=true" the default?