Phoenix Index Basics - Part 1

Created on 11-17-2018 12:03 AM - edited 08-17-2019 05:45 AM

Phoenix secondary index

Phoenix secondary indexes are useful for point lookups or scans against columns that are not part of the Phoenix primary key (that is, not part of the HBase row key). They avoid the “full scan” of the data table that you would otherwise need when retrieving data by a non-row-key column.

You create a secondary index by choosing existing non-primary-key columns from the data table and making each one either part of the index's primary key or a covered column. A covered column is an exact copy of that column’s data from the data table, stored in the index table.
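As a rough illustration of the idea (not of Phoenix internals), the sketch below models the data table as a Python dict keyed by row key and the index table as a sorted list keyed by the indexed column, carrying a copy of one covered column. The rows and the column names `city` and `name` are made up for the example.

```python
import bisect

# Toy model only: plain Python structures stand in for the data and index tables.
data_table = {
    "u1": {"name": "Asha", "city": "Pune"},
    "u2": {"name": "Ben", "city": "Austin"},
    "u3": {"name": "Chen", "city": "Pune"},
}


def full_scan(table, column, value):
    """Without an index: every row is inspected to filter on a non-row-key column."""
    return sorted(rowkey for rowkey, row in table.items() if row[column] == value)


def build_covered_index(table, indexed_column, covered_column):
    """Index rows are sorted by (indexed value, data row key) and carry a copy
    of the covered column, so a lookup never goes back to the data table."""
    return sorted(
        (row[indexed_column], rowkey, row[covered_column])
        for rowkey, row in table.items()
    )


def index_lookup(index, value):
    """With an index: seek to the first matching entry, then read until the
    indexed value changes (a short range scan instead of a full scan)."""
    start = bisect.bisect_left(index, (value,))
    matches = []
    for indexed_value, rowkey, covered_value in index[start:]:
        if indexed_value != value:
            break
        matches.append((rowkey, covered_value))
    return matches


city_index = build_covered_index(data_table, "city", "name")
print(full_scan(data_table, "city", "Pune"))   # ['u1', 'u3']
print(index_lookup(city_index, "Pune"))        # [('u1', 'Asha'), ('u3', 'Chen')]
```

Both calls find the same rows, but the index lookup reads only the matching entries and already has the covered `name` values at hand.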

Types of secondary index:

Functional index: built on expressions (functions of columns) rather than on plain columns.

Global secondary index: here we make an exact copy of the indexed and covered columns in a separate table, which we call the index table. In simple terms, it is an UPSERT SELECT of all chosen columns from the data table into the index table.

Because a lot of writing is involved in the initial stages of index creation, this type of index works best for read-heavy use cases where data is written only occasionally and read frequently.

There are two ways to create a global index:

- Sync: the data table is read with an UPSERT SELECT, the rows are transported to the client, and the client in turn writes them to the index table. This method is cumbersome and error-prone (timeouts etc.).

- Async: the index is written asynchronously by a MapReduce job. The index state becomes “building”, and each mapper works on a single data table region and writes to the index regions.
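The async build can be pictured with a toy model in which each loop iteration stands in for one mapper processing one data table region. This is only a sketch of the flow described above: the region contents are invented, and the final state name `ACTIVE` is an assumption about what the index is called once the build completes.

```python
def build_index_async(regions, indexed_column):
    """Toy model of an async index build: one 'mapper' per data table region."""
    index = {"state": "BUILDING", "rows": []}
    for region in regions:  # each iteration stands in for one mapper
        for rowkey, row in region.items():
            # The mapper emits one index row per data row in its region.
            index["rows"].append((row[indexed_column], rowkey))
    index["rows"].sort()       # index rows are ordered by indexed value
    index["state"] = "ACTIVE"  # assumed name for the "ready to use" state
    return index


# Two hypothetical regions of the same data table.
regions = [
    {"u1": {"city": "Pune"}, "u2": {"city": "Austin"}},
    {"u3": {"city": "Pune"}},
]
index = build_index_async(regions, "city")
print(index["state"])  # ACTIVE
print(index["rows"])   # [('Austin', 'u2'), ('Pune', 'u1'), ('Pune', 'u3')]
```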

For specific commands to create the various types of indexes, refer here.

Thus, a global index (above) assumes the following:

- You have plenty of available disk space to hold several copies of the data columns.

- You are not worried about the write penalties (across the network!) of creating indexes.

- The query is fully covered, i.e. all columns queried are part of the index. Note that a global index is not used if the query refers to a column that is not part of the index table (unless a hint is used).
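The covering rule in the last point can be written as a small check. This is a simplified model of the decision, not Phoenix's actual optimizer; the function name and the column names are hypothetical. It also assumes that the data table's primary key columns are available in the index.

```python
def global_index_usable(query_columns, indexed_columns, covered_columns,
                        primary_key_columns=(), hinted=False):
    """Simplified rule: a global index is used only when every column the
    query references is present in the index table, unless a hint forces it."""
    available = (set(indexed_columns) | set(covered_columns)
                 | set(primary_key_columns))
    fully_covered = set(query_columns) <= available
    return fully_covered or hinted


# Hypothetical index on v1 that covers v2, on a table whose primary key is pk.
idx = {"indexed_columns": ["v1"], "covered_columns": ["v2"],
       "primary_key_columns": ["pk"]}

print(global_index_usable(["pk", "v1", "v2"], **idx))         # True
print(global_index_usable(["v1", "v3"], **idx))               # False
print(global_index_usable(["v1", "v3"], hinted=True, **idx))  # True
```

The second query references `v3`, which is not in the index, so in this model the index is skipped unless the query carries a hint.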

Local Index:

What if some or all of the above assumptions do not hold? That is where a local index becomes useful: it is part of the data table itself in the form of a shadow column family (eliminating assumption 1), it is the best fit for write-heavy use cases (eliminating assumption 2), and it can be used for partially covered queries because the data and the index co-reside (eliminating assumption 3).

For all practical purposes, I will talk only about global indexes, as they are the most common use case and the most stable option so far.

How global index maintenance works

To go into the details of index maintenance, we also need to know about the two kinds of global secondary index:

Immutable global secondary index: the index is written once and never updated in place. The client that writes to the data table is also responsible for writing to the index table (at the same time!). Keeping the data and index tables in sync is therefore purely the client’s responsibility.

Use cases such as time series data or event logs can take advantage of immutable data and index tables. (Create the data table with the IMMUTABLE_ROWS=true option, and every index created on it defaults to immutable.)

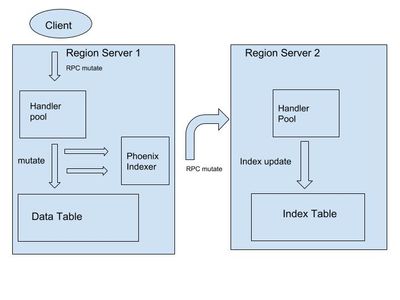

Mutable global secondary index: here index maintenance is done via server-to-server RPC (remember the network and handler overhead!) between the data table's region server and the index table's region server. For simplicity, we can assume that if the client was able to write to the data table successfully, the write to the index table has also been completed by the data region server. However, many issues exist around this aspect, which we will discuss in Part 2.

Tables also come in two varieties: transactional and non-transactional. Transactional tables are intended to provide atomic writes to the data and index tables (ACID compliant) and are still a work in progress. Thus, in the next few sections and articles, we will talk about non-transactional mutable global secondary indexes.

Here are the steps involved in index maintenance:

- The client submits an “upsert” RPC to region server 1.

- The mutation is written to the WAL, which makes it durable, so if the region server crashes at this point or later, WAL replay brings the index table back in sync. (If the write fails before this step, it is the client that is supposed to retry.)

- In the preBatchMutate step (part of the Phoenix Indexer box in the diagram above), the Phoenix coprocessor prepares the index update to be written to region server 2. (Steps 2 and 3 actually occur together.)

- The mutation is written to the data table.

- In the postBatchMutate step (also part of the Phoenix Indexer box in the diagram above), the index update is committed on region server 2 via a server-to-server RPC call.

Understanding these steps is very important because in Part 2 of this series we will discuss various issues that appear in index maintenance: the index going out of sync, the index getting disabled, queries slowing down, region servers becoming unresponsive, etc.
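The steps above can be sketched as a toy simulation. It is a simplified model under stated assumptions, not the Phoenix coprocessor code: the `RegionServer` class, the `crash_after_wal` switch and the `replay_wal` helper are invented for illustration, and the sketch ignores details such as removing a stale index row when an indexed value changes.

```python
class RegionServer:
    """Toy region server: a write-ahead log plus an in-memory table."""

    def __init__(self):
        self.wal = []    # durable log of mutations
        self.table = {}  # rows of the table hosted on this server


def upsert(data_rs, index_rs, rowkey, row, indexed_column, crash_after_wal=False):
    # Step 1: the client's upsert RPC reaches the data region server (this call).
    # Steps 2 and 3 (together): prepare the index update and log the mutation.
    index_key = (row[indexed_column], rowkey)
    data_rs.wal.append((rowkey, row, index_key))
    if crash_after_wal:
        return  # simulated crash: nothing applied yet, but the WAL entry survives
    # Step 4: write the mutation to the data table.
    data_rs.table[rowkey] = row
    # Step 5: commit the index update on the index region server (the RPC).
    index_rs.table[index_key] = None


def replay_wal(data_rs, index_rs):
    """After a crash, replaying the WAL re-applies both data and index writes."""
    for rowkey, row, index_key in data_rs.wal:
        data_rs.table[rowkey] = row
        index_rs.table[index_key] = None


rs1, rs2 = RegionServer(), RegionServer()
upsert(rs1, rs2, "u1", {"city": "Pune"}, "city")
upsert(rs1, rs2, "u2", {"city": "Austin"}, "city", crash_after_wal=True)
print(sorted(rs2.table))  # [('Pune', 'u1')]
replay_wal(rs1, rs2)
print(sorted(rs2.table))  # [('Austin', 'u2'), ('Pune', 'u1')]
```

The second upsert "crashes" after the WAL append, so the index misses its row until the replay brings the data and index tables back in line.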

Created on 11-19-2018 03:50 PM

Fabulous stuff @Gaurav Sharma !

Created on 11-19-2018 06:27 PM

thank you @Dinesh Chitlangia

Created on 11-28-2018 09:01 PM

Thanks @Gaurav Sharma for the article, very well explained. could you please allow access / or make it public for the image https://docs.google.com/drawings/d/sGd5g0DKnEVh_4PRmJLUNOw/image?w=602&h=399&rev=1&ac=1&parent=1tgeX...

Created on 12-07-2018 09:49 PM

Done. Thanks for reporting.