- Reading OpenData JSON and Storing into Phoenix Tab...

Created on 09-05-2016 10:52 PM - edited 08-17-2019 10:10 AM

JSON Batch to Single Row Phoenix

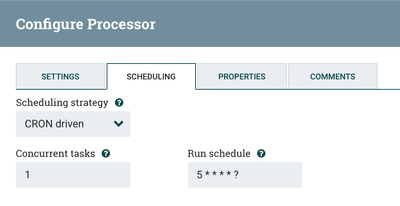

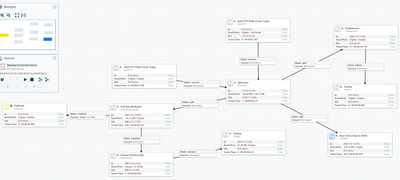

I grabbed open data on crime from Philly's Open Data (https://www.opendataphilly.org/dataset/crime-incidents). After a free sign-up you get access to JSON crime data (https://data.phila.gov/resource/sspu-uyfa.json). You can grab individual dates or ranges for thousands of records. I wanted to spool each JSON record as a separate HBase row. With the flexibility of Apache NiFi 1.0.0, I can specify run times via cron or another familiar scheduling setup. This is my master flow.

First I use GetHTTP to retrieve the JSON messages over SSL. I split the records up and store them as raw JSON in HDFS, send some of them via email, format them for Phoenix SQL, and store them in Phoenix/HBase. All of this is done with no coding and in a simple flow. For extra output, I can send them to a Riemann server for monitoring.

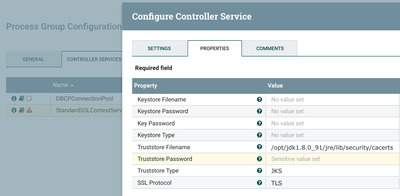

Setting up SSL for accessing HTTPS data like Philly Crime requires a little configuration and knowing which Java JRE you are using to run NiFi. You can run service nifi status to quickly find out which JRE is in use.

Split the Records

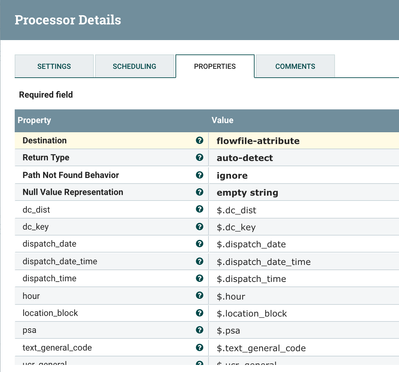

The Open Data set has many rows of data, so let's split them up and pull out the attributes we want from the JSON.
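To make the split-and-extract step concrete, here is a minimal sketch in plain Python, outside NiFi. It assumes the endpoint returns a JSON array of flat records with the field names from the sample record in this article; the function name split_records, the second record, and the "unused" key are hypothetical and only there for illustration.

```python
import json

# Field names taken from the sample record shown in this article.
FIELDS = [
    "dc_dist", "dc_key", "dispatch_date", "dispatch_date_time",
    "dispatch_time", "hour", "location_block", "psa",
    "text_general_code", "ucr_general",
]


def split_records(batch_json):
    """Parse a JSON array and return one dict per record.

    Only the attributes listed in FIELDS are kept; a missing
    attribute becomes an empty string.
    """
    records = json.loads(batch_json)
    return [
        {name: str(record.get(name, "")) for name in FIELDS}
        for record in records
    ]


if __name__ == "__main__":
    # First record is from the article; the second is a hypothetical
    # placeholder that also carries a key we do not want to keep.
    batch = json.dumps([
        {"dc_dist": "18", "dc_key": "200918067518", "psa": "3"},
        {"dc_key": "EXAMPLE-2", "unused": "ignored"},
    ])
    for row in split_records(batch):
        print(row["dc_key"], row["psa"])
```

Each returned dict corresponds to what one flow file carries after the split: a single record with only the attributes needed for the Phoenix row.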

Phoenix

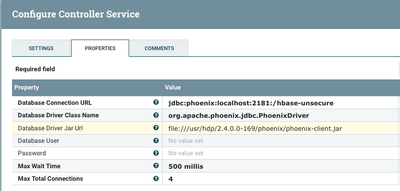

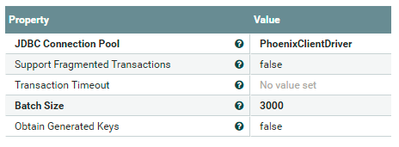

Another part that requires specific formatting is setting up the Phoenix connection. Make sure you point to the correct driver, and if your cluster has security enabled, make sure that is configured as well.
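As a rough illustration of the connection settings, the sketch below builds a Phoenix JDBC URL of the form jdbc:phoenix:<ZooKeeper quorum>:<port>:<znode>. The defaults (port 2181, znode /hbase-unsecure) are assumptions for an unsecured single-node setup, and the helper name phoenix_jdbc_url is hypothetical; a secured cluster needs additional settings that are not shown here.

```python
# Driver class provided by the Phoenix client jar.
PHOENIX_DRIVER_CLASS = "org.apache.phoenix.jdbc.PhoenixDriver"


def phoenix_jdbc_url(zk_quorum, zk_port=2181, znode="/hbase-unsecure"):
    """Build a Phoenix JDBC URL from the ZooKeeper connection details.

    The defaults are assumptions for an unsecured sandbox; adjust
    them to match your own cluster.
    """
    return f"jdbc:phoenix:{zk_quorum}:{zk_port}:{znode}"


if __name__ == "__main__":
    print(PHOENIX_DRIVER_CLASS)
    print(phoenix_jdbc_url("localhost"))
```

The driver class name and the URL are the two values a JDBC connection pool typically needs, together with the path to the Phoenix client jar.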

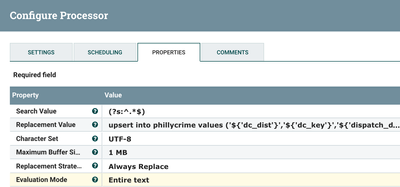

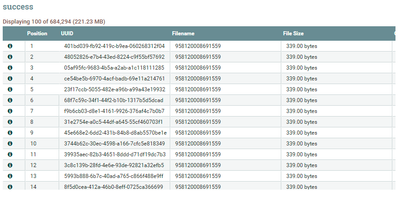

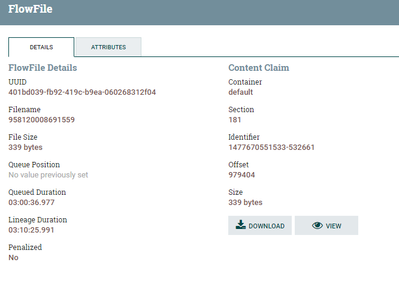

Load the Data (Upsert)

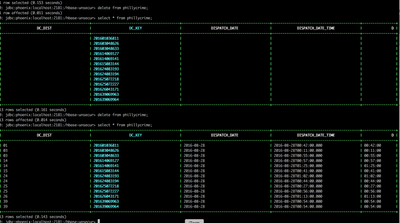

Once your data is loaded, you can check it quickly with /usr/hdp/current/phoenix-client/bin/sqlline.py localhost:2181:/hbase-unsecure

The SQL for this data set is pretty straightforward.

CREATE TABLE phillycrime (
dc_dist varchar,
dc_key varchar not null primary key,
dispatch_date varchar,
dispatch_date_time varchar,
dispatch_time varchar,
hour varchar,
location_block varchar,
psa varchar,
text_general_code varchar,
ucr_general varchar);

{"dc_dist":"18","dc_key":"200918067518","dispatch_date":"2009-10-02","dispatch_date_time":"2009-10-02T14:24:00.000","dispatch_time":"14:24:00","hour":"14","location_block":"S 38TH ST / MARKETUT ST","psa":"3","text_general_code":"Other Assaults","ucr_general":"800"}

upsert into phillycrime values ('18', '200918067518', '2009-10-02','2009-10-02T14:24:00.000','14:24:00','14', 'S 38TH ST / MARKETUT ST','3','Other Assaults','800');

!tables

!describe phillycrime

The DC_KEY is unique, so I used it as the Phoenix primary key. Now all the data I get will be added, and any repeats will safely update the existing row. Sometimes while pulling the data we may re-fetch some of the same records; that's okay, it will just update the row to the same values.
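The formatting step that turns one JSON record into an upsert can be sketched in plain Python as follows. This is not the NiFi configuration used in the flow, just an illustration under the assumption that every column is a varchar, as in the CREATE TABLE above. The helper names sql_literal and build_upsert are hypothetical. Embedded single quotes are doubled, which is the standard SQL way to escape them inside a string literal.

```python
import json

# Column order matches the CREATE TABLE statement in this article.
COLUMNS = [
    "dc_dist", "dc_key", "dispatch_date", "dispatch_date_time",
    "dispatch_time", "hour", "location_block", "psa",
    "text_general_code", "ucr_general",
]


def sql_literal(value):
    """Quote a value as a SQL string literal, doubling single quotes."""
    return "'" + str(value).replace("'", "''") + "'"


def build_upsert(record, table="phillycrime"):
    """Build an upsert statement (no trailing semicolon) for one record."""
    values = ", ".join(sql_literal(record.get(col, "")) for col in COLUMNS)
    return f"upsert into {table} values ({values})"


if __name__ == "__main__":
    # The sample record from this article.
    record = json.loads(
        '{"dc_dist":"18","dc_key":"200918067518",'
        '"dispatch_date":"2009-10-02",'
        '"dispatch_date_time":"2009-10-02T14:24:00.000",'
        '"dispatch_time":"14:24:00","hour":"14",'
        '"location_block":"S 38TH ST / MARKETUT ST","psa":"3",'
        '"text_general_code":"Other Assaults","ucr_general":"800"}'
    )
    print(build_upsert(record))
```

Because dc_key is the primary key, building and running the statement twice for the same record leaves a single row with the same values. When pasting the output into sqlline, add a trailing semicolon.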

Created on 10-11-2016 09:55 PM

The default JKS/TLS password is changeit.

Created on 10-28-2016 07:28 PM - edited 08-17-2019 10:09 AM

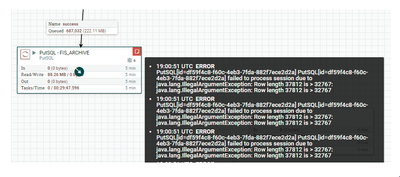

Hi, we have followed the same method. It works successfully most of the time, but sometimes we get the error below.

2016-10-28 18:43:03,603 ERROR [Timer-Driven Process Thread-70] o.apache.nifi.processors.standard.PutSQL PutSQL[id=df59f4c8-f60c-4eb3-7fda-882f7ece2d2a] PutSQL[id=df59f4c8-f60c-4eb3-7fda-882f7ece2d2a] failed to process session due to java.lang.IllegalArgumentException: Row length 37812 is > 32767: java.lang.IllegalArgumentException: Row length 37812 is > 32767
2016-10-28 18:43:03,611 ERROR [Timer-Driven Process Thread-70] o.apache.nifi.processors.standard.PutSQL
java.lang.IllegalArgumentException: Row length 37812 is > 32767
at org.apache.hadoop.hbase.client.Mutation.checkRow(Mutation.java:545) ~[na:na]
at org.apache.hadoop.hbase.client.Put.<init>(Put.java:110) ~[na:na]
at org.apache.hadoop.hbase.client.Put.<init>(Put.java:68) ~[na:na]
at org.apache.hadoop.hbase.client.Put.<init>(Put.java:58) ~[na:na]
at org.apache.phoenix.index.IndexMaintainer.buildUpdateMutation(IndexMaintainer.java:779) ~[na:na]
at org.apache.phoenix.util.IndexUtil.generateIndexData(IndexUtil.java:263) ~[na:na]
at org.apache.phoenix.execute.MutationState$1.next(MutationState.java:221) ~[na:na]
at org.apache.phoenix.execute.MutationState$1.next(MutationState.java:204) ~[na:na]
at org.apache.phoenix.execute.MutationState.commit(MutationState.java:370) ~[na:na]
at org.apache.phoenix.jdbc.PhoenixConnection$3.call(PhoenixConnection.java:459) ~[na:na]
at org.apache.phoenix.jdbc.PhoenixConnection$3.call(PhoenixConnection.java:456) ~[na:na]
at org.apache.phoenix.call.CallRunner.run(CallRunner.java:53) ~[na:na]
at org.apache.phoenix.jdbc.PhoenixConnection.commit(PhoenixConnection.java:456) ~[na:na]
at org.apache.commons.dbcp.DelegatingConnection.commit(DelegatingConnection.java:334) ~[na:na]
at org.apache.commons.dbcp.PoolingDataSource$PoolGuardConnectionWrapper.commit(PoolingDataSource.java:211) ~[na:na]
at org.apache.nifi.processors.standard.PutSQL.onTrigger(PutSQL.java:371) ~[na:na]
at org.apache.nifi.processor.AbstractProcessor.onTrigger(AbstractProcessor.java:27) ~[nifi-api-1.0.0.2.0.0.0-579.jar:1.0.0.2.0.0.0-579]
at org.apache.nifi.controller.StandardProcessorNode.onTrigger(StandardProcessorNode.java:1064) ~[nifi-framework-core-1.0.0.2.0.0.0-579.jar:1.0.0.2.0.0.0-579]
at org.apache.nifi.controller.tasks.ContinuallyRunProcessorTask.call(ContinuallyRunProcessorTask.java:136) [nifi-framework-core-1.0.0.2.0.0.0-579.jar:1.0.0.2.0.0.0-579]
at org.apache.nifi.controller.tasks.ContinuallyRunProcessorTask.call(ContinuallyRunProcessorTask.java:47) [nifi-framework-core-1.0.0.2.0.0.0-579.jar:1.0.0.2.0.0.0-579]
at org.apache.nifi.controller.scheduling.TimerDrivenSchedulingAgent$1.run(TimerDrivenSchedulingAgent.java:132) [nifi-framework-core-1.0.0.2.0.0.0-579.jar:1.0.0.2.0.0.0-579]
at java.util.concurrent.Executors$RunnableAdapter.call(Executors.java:511) [na:1.8.0_91]
at java.util.concurrent.FutureTask.runAndReset(FutureTask.java:308) [na:1.8.0_91]
at java.util.concurrent.ScheduledThreadPoolExecutor$ScheduledFutureTask.access$301(ScheduledThreadPoolExecutor.java:180) [na:1.8.0_91]
at java.util.concurrent.ScheduledThreadPoolExecutor$ScheduledFutureTask.run(ScheduledThreadPoolExecutor.java:294) [na:1.8.0_91]
at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1142) [na:1.8.0_91]
at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:617) [na:1.8.0_91]
at java.lang.Thread.run(Thread.java:745) [na:1.8.0_91]

FYI: we are using NiFi 1.0, and none of our rows is longer than 500 bytes. The first time we got the error, we just cleared the queue and restarted, and it worked fine afterwards. Now we have got the error again. Restarting is not a good solution, and we lose data if we do that. Please find the attachment for more information.

Created on 12-25-2016 05:19 AM

good article

Created on 12-30-2016 06:12 PM

Created on 11-02-2017 02:46 PM

Could you share the NiFi template for this flow?

Created on 04-29-2019 07:15 AM

Thanks for your article, which is informative.

I tried the same approach for my specific case, but I am unable to insert data into Phoenix using NiFi.

Could you please help us by sharing a simple template or addressing my problem?

Problem: I need to insert system logs into a Phoenix table using NiFi.

Created on 05-15-2020 07:15 PM

Hello! If I insert a string containing ' or " or , then PutSQL to Phoenix returns a syntax error. How should this be solved?