Created on 09-21-2018 03:37 PM - edited on 04-21-2026 06:27 AM by GrazittiAPI

Running Apache MXNet Deep Learning on YARN 3.1 - HDP 3.0

With Hadoop 3.1 / HDP 3.0, we can easily run distributed classification, training, and other deep learning jobs on YARN. I am using Apache MXNet with Python, but TensorFlow or PyTorch would work as well.

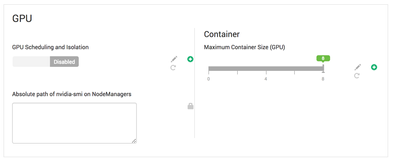

If you need GPU resources, you can specify them as such:

My cluster does not have an NVidia GPU unfortunately.
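As a rough sketch of what a GPU request can look like: the distributed shell's -container_resources flag takes a comma-separated list of resource=value pairs, and in Hadoop 3.1 GPUs are requested under the resource name yarn.io/gpu. The helper name container_resources below is hypothetical, and the sketch assumes GPU scheduling is already enabled on the cluster (it was not tested on this one, which has no GPU).

```python
def container_resources(memory_mb, vcores, gpus=0):
    """Build the value for the distributed shell -container_resources flag."""
    parts = ["memory-mb={}".format(memory_mb), "vcores={}".format(vcores)]
    if gpus > 0:
        # Assumption: GPUs are exposed under the resource name yarn.io/gpu,
        # which requires GPU scheduling to be enabled on the cluster.
        parts.append("yarn.io/gpu={}".format(gpus))
    return ",".join(parts)


# CPU-only request, matching the settings used later in this article.
print(container_resources(512, 1))
# Hypothetical request for two GPUs per container.
print(container_resources(3072, 1, gpus=2))
```

The resulting string would be passed as the argument to -container_resources in the yarn jar command.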

See:

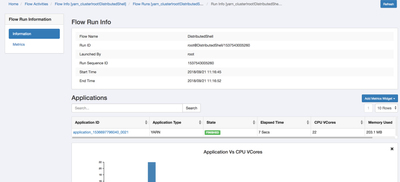

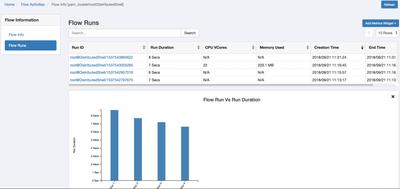

Running App on YARN

[root@princeton0 ApacheDeepLearning101]# ./yarn.sh

18/09/21 15:31:22 INFO distributedshell.Client: Initializing Client

18/09/21 15:31:22 INFO distributedshell.Client: Running Client

18/09/21 15:31:22 INFO client.RMProxy: Connecting to ResourceManager at princeton0.field.hortonworks.com/172.26.208.140:8050

18/09/21 15:31:23 INFO client.AHSProxy: Connecting to Application History server at princeton0.field.hortonworks.com/172.26.208.140:10200

18/09/21 15:31:23 INFO distributedshell.Client: Got Cluster metric info from ASM, numNodeManagers=1

18/09/21 15:31:23 INFO distributedshell.Client: Got Cluster node info from ASM

18/09/21 15:31:23 INFO distributedshell.Client: Got node report from ASM for, nodeId=princeton0.field.hortonworks.com:45454, nodeAddress=princeton0.field.hortonworks.com:8042, nodeRackName=/default-rack, nodeNumContainers=4

18/09/21 15:31:23 INFO distributedshell.Client: Queue info, queueName=default, queueCurrentCapacity=0.4, queueMaxCapacity=1.0, queueApplicationCount=8, queueChildQueueCount=0

18/09/21 15:31:23 INFO distributedshell.Client: User ACL Info for Queue, queueName=root, userAcl=SUBMIT_APPLICATIONS

18/09/21 15:31:23 INFO distributedshell.Client: User ACL Info for Queue, queueName=root, userAcl=ADMINISTER_QUEUE

18/09/21 15:31:23 INFO distributedshell.Client: User ACL Info for Queue, queueName=default, userAcl=SUBMIT_APPLICATIONS

18/09/21 15:31:23 INFO distributedshell.Client: User ACL Info for Queue, queueName=default, userAcl=ADMINISTER_QUEUE

18/09/21 15:31:23 INFO distributedshell.Client: Max mem capability of resources in this cluster 15360

18/09/21 15:31:23 INFO distributedshell.Client: Max virtual cores capability of resources in this cluster 12

18/09/21 15:31:23 WARN distributedshell.Client: AM Memory not specified, use 100 mb as AM memory

18/09/21 15:31:23 WARN distributedshell.Client: AM vcore not specified, use 1 mb as AM vcores

18/09/21 15:31:23 WARN distributedshell.Client: AM Resource capability=<memory:100, vCores:1>

18/09/21 15:31:23 INFO distributedshell.Client: Copy App Master jar from local filesystem and add to local environment

18/09/21 15:31:24 INFO distributedshell.Client: Set the environment for the application master

18/09/21 15:31:24 INFO distributedshell.Client: Setting up app master command

18/09/21 15:31:24 INFO distributedshell.Client: Completed setting up app master command {{JAVA_HOME}}/bin/java -Xmx100m org.apache.hadoop.yarn.applications.distributedshell.ApplicationMaster --container_type GUARANTEED --container_memory 512 --container_vcores 1 --num_containers 1 --priority 0 1><LOG_DIR>/AppMaster.stdout 2><LOG_DIR>/AppMaster.stderr

18/09/21 15:31:24 INFO distributedshell.Client: Submitting application to ASM

18/09/21 15:31:24 INFO impl.YarnClientImpl: Submitted application application_1536697796040_0022

18/09/21 15:31:25 INFO distributedshell.Client: Got application report from ASM for, appId=22, clientToAMToken=null, appDiagnostics=AM container is launched, waiting for AM container to Register with RM, appMasterHost=N/A, appQueue=default, appMasterRpcPort=-1, appStartTime=1537543884622, yarnAppState=ACCEPTED, distributedFinalState=UNDEFINED, appTrackingUrl=http://princeton0.field.hortonworks.com:8088/proxy/application_1536697796040_0022/, appUser=root

18/09/21 15:31:26 INFO distributedshell.Client: Got application report from ASM for, appId=22, clientToAMToken=null, appDiagnostics=AM container is launched, waiting for AM container to Register with RM, appMasterHost=N/A, appQueue=default, appMasterRpcPort=-1, appStartTime=1537543884622, yarnAppState=ACCEPTED, distributedFinalState=UNDEFINED, appTrackingUrl=http://princeton0.field.hortonworks.com:8088/proxy/application_1536697796040_0022/, appUser=root

18/09/21 15:31:27 INFO distributedshell.Client: Got application report from ASM for, appId=22, clientToAMToken=null, appDiagnostics=AM container is launched, waiting for AM container to Register with RM, appMasterHost=N/A, appQueue=default, appMasterRpcPort=-1, appStartTime=1537543884622, yarnAppState=ACCEPTED, distributedFinalState=UNDEFINED, appTrackingUrl=http://princeton0.field.hortonworks.com:8088/proxy/application_1536697796040_0022/, appUser=root

18/09/21 15:31:28 INFO distributedshell.Client: Got application report from ASM for, appId=22, clientToAMToken=null, appDiagnostics=, appMasterHost=princeton0/172.26.208.140, appQueue=default, appMasterRpcPort=-1, appStartTime=1537543884622, yarnAppState=RUNNING, distributedFinalState=UNDEFINED, appTrackingUrl=http://princeton0.field.hortonworks.com:8088/proxy/application_1536697796040_0022/, appUser=root

18/09/21 15:31:29 INFO distributedshell.Client: Got application report from ASM for, appId=22, clientToAMToken=null, appDiagnostics=, appMasterHost=princeton0/172.26.208.140, appQueue=default, appMasterRpcPort=-1, appStartTime=1537543884622, yarnAppState=RUNNING, distributedFinalState=UNDEFINED, appTrackingUrl=http://princeton0.field.hortonworks.com:8088/proxy/application_1536697796040_0022/, appUser=root

18/09/21 15:31:30 INFO distributedshell.Client: Got application report from ASM for, appId=22, clientToAMToken=null, appDiagnostics=, appMasterHost=princeton0/172.26.208.140, appQueue=default, appMasterRpcPort=-1, appStartTime=1537543884622, yarnAppState=RUNNING, distributedFinalState=UNDEFINED, appTrackingUrl=http://princeton0.field.hortonworks.com:8088/proxy/application_1536697796040_0022/, appUser=root

18/09/21 15:31:31 INFO distributedshell.Client: Got application report from ASM for, appId=22, clientToAMToken=null, appDiagnostics=, appMasterHost=princeton0/172.26.208.140, appQueue=default, appMasterRpcPort=-1, appStartTime=1537543884622, yarnAppState=RUNNING, distributedFinalState=UNDEFINED, appTrackingUrl=http://princeton0.field.hortonworks.com:8088/proxy/application_1536697796040_0022/, appUser=root

18/09/21 15:31:32 INFO distributedshell.Client: Got application report from ASM for, appId=22, clientToAMToken=null, appDiagnostics=, appMasterHost=princeton0/172.26.208.140, appQueue=default, appMasterRpcPort=-1, appStartTime=1537543884622, yarnAppState=RUNNING, distributedFinalState=UNDEFINED, appTrackingUrl=http://princeton0.field.hortonworks.com:8088/proxy/application_1536697796040_0022/, appUser=root

18/09/21 15:31:33 INFO distributedshell.Client: Got application report from ASM for, appId=22, clientToAMToken=null, appDiagnostics=, appMasterHost=princeton0/172.26.208.140, appQueue=default, appMasterRpcPort=-1, appStartTime=1537543884622, yarnAppState=FINISHED, distributedFinalState=SUCCEEDED, appTrackingUrl=http://princeton0.field.hortonworks.com:8088/proxy/application_1536697796040_0022/, appUser=root

18/09/21 15:31:33 INFO distributedshell.Client: Application has completed successfully. Breaking monitoring loop

18/09/21 15:31:33 INFO distributedshell.Client: Application completed successfully
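In the log above, the client prints an application report about once per second until the final state arrives. If you capture that output from a wrapper script, a minimal sketch for pulling out the last reported yarnAppState and distributedFinalState could look like this. The function name final_states is hypothetical, and the sample lines are shortened versions of the report lines above.

```python
import re

# Matches the two state fields as they appear in the client's report lines.
REPORT_RE = re.compile(r"yarnAppState=(\w+), distributedFinalState=(\w+)")


def final_states(log_text):
    """Return (yarnAppState, distributedFinalState) from the last report line, or None."""
    last = None
    for line in log_text.splitlines():
        match = REPORT_RE.search(line)
        if match:
            last = (match.group(1), match.group(2))
    return last


# Shortened report lines in the same shape as the log above.
sample = (
    "18/09/21 15:31:32 INFO distributedshell.Client: Got application report from ASM for, "
    "appId=22, yarnAppState=RUNNING, distributedFinalState=UNDEFINED, appUser=root\n"
    "18/09/21 15:31:33 INFO distributedshell.Client: Got application report from ASM for, "
    "appId=22, yarnAppState=FINISHED, distributedFinalState=SUCCEEDED, appUser=root\n"
)

print(final_states(sample))
```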

Results:

https://github.com/tspannhw/ApacheDeepLearning101/blob/master/run.log

Script:

https://github.com/tspannhw/ApacheDeepLearning101/blob/master/yarn.sh

yarn jar /usr/hdp/current/hadoop-yarn-client/hadoop-yarn-applications-distributedshell.jar -jar /usr/hdp/current/hadoop-yarn-client/hadoop-yarn-applications-distributedshell.jar -shell_command python3.6 -shell_args "/opt/demo/ApacheDeepLearning101/analyzex.py /opt/demo/images/201813161108103.jpg" -container_resources memory-mb=512,vcores=1

For pre-HDP 3.0 clusters, see my older script that uses the DMLC YARN runner. With HDP 3.0 we no longer need it, and we don't need Spark either.

https://github.com/tspannhw/nifi-mxnet-yarn

Python MXNet Script:

https://github.com/tspannhw/ApacheDeepLearning101/blob/master/analyzehdfs.py

Since the job is distributed, let's write the results to HDFS. The Python hdfs library works on Python 2.7 and 3.x, so let's install it with pip.

pip install hdfs

In our code:

from hdfs import InsecureClient

client = InsecureClient('http://princeton0.field.hortonworks.com:50070', user='root')

from json import dumps

client.write('/mxnetyarn/' + uniqueid + '.json', dumps(row))

We write our row as JSON to HDFS.
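For illustration, here is a self-contained sketch of that write step wrapped in a function. The names write_row and RecordingClient are hypothetical: RecordingClient is only a stand-in so the example runs without a cluster, and in the real script you would pass the InsecureClient created above. The sketch also assumes the row carries its unique id under the "uuid" key and passes encoding="utf-8" explicitly, which the snippet above does not do.

```python
from json import dumps


def write_row(client, row, directory="/mxnetyarn"):
    """Write one result row to <directory>/<uuid>.json and return the HDFS path."""
    # Assumption: the unique id used for the file name is stored in row["uuid"].
    path = "{}/{}.json".format(directory, row["uuid"])
    # encoding is passed so the JSON string is serialized as UTF-8.
    client.write(path, data=dumps(row), encoding="utf-8")
    return path


class RecordingClient:
    """Stand-in for hdfs.InsecureClient that records calls instead of contacting HDFS."""

    def __init__(self):
        self.calls = []

    def write(self, hdfs_path, data=None, encoding=None, **kwargs):
        self.calls.append((hdfs_path, data, encoding))


client = RecordingClient()
row = {"uuid": "mxnet_uuid_img_20180921175552", "top1": "n03063599 coffee mug"}
path = write_row(client, row)
print(path)
```

Keeping the client as a parameter makes the function easy to exercise locally before running it inside a YARN container.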

When the job completes in YARN, we get a new JSON file written to HDFS.

hdfs dfs -ls /mxnetyarn

Found 2 items

-rw-r--r-- 3 root hdfs 424 2018-09-21 17:50 /mxnetyarn/mxnet_uuid_img_20180921175007.json

-rw-r--r-- 3 root hdfs 424 2018-09-21 17:55 /mxnetyarn/mxnet_uuid_img_20180921175552.json

hdfs dfs -cat /mxnetyarn/mxnet_uuid_img_20180921175552.json

{"uuid": "mxnet_uuid_img_20180921175552", "top1pct": "49.799999594688416", "top1": "n03063599 coffee mug", "top2pct": "21.50000035762787", "top2": "n07930864 cup", "top3pct": "12.399999797344208", "top3": "n07920052 espresso", "top4pct": "7.500000298023224", "top4": "n07584110 consomme", "top5pct": "5.200000107288361", "top5": "n04263257 soup bowl", "imagefilename": "/opt/demo/images/201813161108103.jpg", "runtime": "0"}

HDP Assemblies

https://github.com/hortonworks/hdp-assemblies/

https://github.com/hortonworks/hdp-assemblies/blob/master/tensorflow/markdown/Dockerfile.md

*** SUBMARINE ***

Coming soon: Submarine is a really cool new way to run deep learning workloads on YARN.

See this awesome presentation from Strata NYC 2018 by Wangda Tan (Hortonworks): https://conferences.oreilly.com/strata/strata-ny/public/schedule/detail/68289

See the quick start for setting Docker and GPU options:

Resources:

https://community.hortonworks.com/articles/60480/using-images-stored-in-hdfs-for-web-pages.html