Running H2O Sparkling Water using Zeppelin (with p...

Created on 11-09-2017 03:15 PM - edited 08-17-2019 10:20 AM

1. Introduction

This article extends the one written by @Dan Zaratsian, H2O on Livy.

2. Environment Details

I tested this in the following environment:

HDP: 2.6.1

Ambari: 2.5.0.3

OS: 7.3.1611

python: 2.7.5

IMPORTANT NOTE: H2O requires python ver. 2.7+
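One way to confirm this requirement on the Zeppelin node is to ask the interpreter itself. The following is a minimal sketch; the helper name `python_is_supported` is illustrative and not part of H2O.

```python
import sys


def python_is_supported(version_info=None, minimum=(2, 7)):
    """Return True when the (major, minor) version is at least `minimum`."""
    if version_info is None:
        version_info = sys.version_info
    # Compare only (major, minor); the patch level does not matter here.
    return tuple(version_info[:2]) >= tuple(minimum)


if __name__ == "__main__":
    print("Python {0}.{1} supported: {2}".format(
        sys.version_info[0], sys.version_info[1], python_is_supported()))
```

Run it with the same `python` binary that Spark and Zeppelin will use, since a node may have several interpreters installed.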

3. Installing H2O

On the Zeppelin node, run the following:

$ mkdir /tmp/H2O
$ cd /tmp/H2O
$ wget http://h2o-release.s3.amazonaws.com/sparkling-water/rel-2.1/16/sparkling-water-2.1.16.zip
$ unzip sparkling-water-2.1.16.zip
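The download URL above encodes the release line (2.1) and the build number (16). If you script the installation, the URL can be assembled from those two values. This sketch assumes other releases follow the same directory layout, which this article does not verify; `sparkling_water_url` is a hypothetical helper.

```python
def sparkling_water_url(release_line, build):
    """Build a download URL using the layout seen for release 2.1.16.

    Assumption: other releases live under the same
    rel-<line>/<build>/ structure on the H2O release bucket.
    """
    base = "http://h2o-release.s3.amazonaws.com/sparkling-water"
    archive = "sparkling-water-{0}.{1}.zip".format(release_line, build)
    return "{0}/rel-{1}/{2}/{3}".format(base, release_line, build, archive)


if __name__ == "__main__":
    print(sparkling_water_url("2.1", 16))
```

The release line should match the Spark version on the cluster; the test run in this article reports Spark 2.1.1, which is why the 2.1 line is used.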

4. Testing H2O from CLI

On the Zeppelin node where you downloaded and installed H2O, run:

$ export SPARK_HOME='/usr/hdp/current/spark2-client'
$ export HADOOP_CONF_DIR=/etc/hadoop/conf
$ export MASTER="yarn-client"
$ export SPARK_MAJOR_VERSION=2
$ cd /tmp/H2O/sparkling-water-2.1.16/bin
$ ./pysparkling
>>> from pysparkling import *
>>> hc = H2OContext.getOrCreate(spark)

My test

[root@dkozlowski-dkhdp262 bin]# ./pysparkling
Python 2.7.5 (default, Jun 17 2014, 18:11:42)
[GCC 4.8.2 20140120 (Red Hat 4.8.2-16)] on linux2
Type "help", "copyright", "credits" or "license" for more information.
Warning: Master yarn-client is deprecated since 2.0. Please use master "yarn" with specified deploy mode instead.
Setting default log level to "WARN".
To adjust logging level use sc.setLogLevel(newLevel). For SparkR, use setLogLevel(newLevel).
SLF4J: Class path contains multiple SLF4J bindings.
SLF4J: Found binding in [jar:file:/usr/hdp/2.6.1.0-129/spark2/jars/slf4j-log4j12-1.7.16.jar!/org/slf4j/impl/StaticLoggerBinder.class]
SLF4J: Found binding in [jar:file:/usr/hdp/2.6.1.0-129/spark2/jars/spark-llap_2.11-1.1.3-2.1.jar!/org/slf4j/impl/StaticLoggerBinder.class]
SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.
SLF4J: Actual binding is of type [org.slf4j.impl.Log4jLoggerFactory]
17/11/08 14:33:06 WARN HiveConf: HiveConf of name hive.llap.daemon.service.hosts does not exist
17/11/08 14:33:06 WARN HiveConf: HiveConf of name hive.llap.daemon.service.hosts does not exist
Welcome to
      ____              __
     / __/__  ___ _____/ /__
    _\ \/ _ \/ _ `/ __/  '_/
   /__ / .__/\_,_/_/ /_/\_\   version 2.1.1.2.6.1.0-129
      /_/

Using Python version 2.7.5 (default, Jun 17 2014 18:11:42)
SparkSession available as 'spark'.
>>> from pysparkling import *
>>> hc = H2OContext.getOrCreate(spark)
17/11/08 14:33:57 WARN H2OContext: Method H2OContext.getOrCreate with an argument of type SparkContext is deprecated and parameter of type SparkSession is preferred.
17/11/08 14:33:57 WARN InternalH2OBackend: Increasing 'spark.locality.wait' to value 30000
17/11/08 14:33:57 WARN InternalH2OBackend: Due to non-deterministic behavior of Spark broadcast-based joins
We recommend to disable them by configuring `spark.sql.autoBroadcastJoinThreshold` variable to value `-1`:
sqlContext.sql("SET spark.sql.autoBroadcastJoinThreshold=-1")
17/11/08 14:33:57 WARN InternalH2OBackend: The property 'spark.scheduler.minRegisteredResourcesRatio' is not specified!
We recommend to pass `--conf spark.scheduler.minRegisteredResourcesRatio=1`
Connecting to H2O server at http://172.26.110.84:54323. successful.
--------------------------  ---------------------------------------------------
H2O cluster uptime:         25 secs
H2O cluster version:        3.14.0.7
H2O cluster version age:    18 days
H2O cluster name:           sparkling-water-root_application_1507531306616_0032
H2O cluster total nodes:    2
H2O cluster free memory:    1.693 Gb
H2O cluster total cores:    8
H2O cluster allowed cores:  8
H2O cluster status:         accepting new members, healthy
H2O connection url:         http://172.26.110.84:54323
H2O connection proxy:
H2O internal security:      False
H2O API Extensions:         XGBoost, Algos, AutoML, Core V3, Core V4
Python version:             2.7.5 final
--------------------------  ---------------------------------------------------

Sparkling Water Context:
 * H2O name: sparkling-water-root_application_1507531306616_0032
 * cluster size: 2
 * list of used nodes:
  (executorId, host, port)
  ------------------------
  (2,dkhdp262.openstacklocal,54321)
  (1,dkhdp263.openstacklocal,54321)
  ------------------------

  Open H2O Flow in browser: http://172.26.110.84:54323 (CMD + click in Mac OSX)
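If you capture this startup output in a script, the key facts (node count, health, connection URL) can be pulled out of the summary table. The sketch below is one possible approach; `parse_h2o_summary` is a hypothetical helper, and the labels it searches for are taken from the output shown above, so they may differ in other H2O versions.

```python
import re


def parse_h2o_summary(output):
    """Extract node count, health and URL from pysparkling startup output."""
    nodes = re.search(r"H2O cluster total nodes:\s*(\d+)", output)
    url = re.search(r"H2O connection url:\s*(\S+)", output)
    status = re.search(r"H2O cluster status:\s*(.+)", output)
    return {
        "nodes": int(nodes.group(1)) if nodes else None,
        "url": url.group(1) if url else None,
        "healthy": bool(status and "healthy" in status.group(1)),
    }


# Three rows copied from the summary table in the test run above.
sample = (
    "H2O cluster total nodes:    2\n"
    "H2O cluster status:         accepting new members, healthy\n"
    "H2O connection url:         http://172.26.110.84:54323\n"
)
summary = parse_h2o_summary(sample)
print(summary)
```

A missing label yields `None` (or `False` for `healthy`) rather than an exception, so the caller can decide how strict to be.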

5. Zeppelin site

Before continuing, make sure that step 4 (Testing H2O from CLI) completes successfully.

a) Ambari UI

Ambari -> Zeppelin -> Configs -> Advanced zeppelin-env -> zeppelin_env_template

export SPARK_SUBMIT_OPTIONS="--files /tmp/H2O/sparkling-water-2.1.16/py/build/dist/h2o_pysparkling_2.1-2.1.16.zip"

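export PYTHONPATH="/tmp/H2O/sparkling-water-2.1.16/py/build/dist/h2o_pysparkling_2.1-2.1.16.zip:${SPARK_HOME}/python:${SPARK_HOME}/python/lib/py4j-0.8.2.1-src.zip"

The py4j archive name must match the file actually present in ${SPARK_HOME}/python/lib, so adjust the version in the path if yours differs.

b) Zeppelin UI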

Zeppelin UI -> Interpreter -> Spark2 (H2O does not work with dynamicAllocation enabled)

spark.dynamicAllocation.enabled=<blank>
spark.shuffle.service.enabled=<blank>
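To double-check that neither property is still switched on, you can compare the settings against a small rule. This is a sketch under assumptions: `dynamic_allocation_problems` is a hypothetical helper, and the dictionary you pass in is whatever you copied from the interpreter page or read from the running Spark configuration.

```python
def dynamic_allocation_problems(props):
    """Return the watched property names that are still set to 'true'."""
    watched = (
        "spark.dynamicAllocation.enabled",
        "spark.shuffle.service.enabled",
    )
    # A blank or missing value counts as "not enabled".
    return [
        name for name in watched
        if str(props.get(name, "")).strip().lower() == "true"
    ]


if __name__ == "__main__":
    settings = {
        "spark.dynamicAllocation.enabled": "",
        "spark.shuffle.service.enabled": "",
    }
    print(dynamic_allocation_problems(settings))
```

In a %pyspark paragraph, something like `dict(sc.getConf().getAll())` should provide the effective configuration, assuming `sc` is the SparkContext that Zeppelin supplies.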

c) Sample code

%pyspark
from pysparkling import *
hc = H2OContext.getOrCreate(spark)

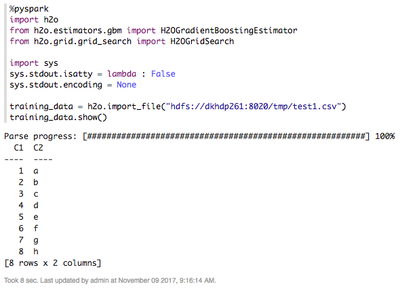
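If the import in the paragraph above fails, one thing worth checking is whether the pysparkling zip configured in step a) actually reached the interpreter's `sys.path`. A minimal diagnostic sketch follows; `entries_matching` is a hypothetical helper, not part of pysparkling or Zeppelin.

```python
import sys


def entries_matching(fragment, paths=None):
    """Return the path entries that contain `fragment`."""
    if paths is None:
        paths = sys.path
    return [entry for entry in paths if fragment in entry]


if __name__ == "__main__":
    # An empty list suggests the PYTHONPATH or --files setting was not applied.
    print(entries_matching("pysparkling"))
    print(entries_matching("py4j"))
```

After changing zeppelin_env_template in Ambari, Zeppelin has to be restarted for the new environment to take effect.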

%pyspark

import h2o

from h2o.estimators.gbm import H2OGradientBoostingEstimator

from h2o.grid.grid_search import H2OGridSearch

import sys

sys.stdout.isatty = lambda : False

sys.stdout.encoding = None

training_data = h2o.import_file("hdfs://dkhdp261:8020/tmp/test1.csv")

training_data.show()
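The two `sys.stdout` assignments in the sample appear to compensate for Zeppelin replacing `sys.stdout` with an object that lacks `isatty()` and `encoding`, which output code commonly reads. That reading is an assumption; the article itself does not explain them. The sketch below reproduces the effect on a stand-in stream (`BareStream` and `patch_stream` are illustrative names) so you can see what the patch changes without touching the real stdout.

```python
class BareStream(object):
    """Stand-in for a stdout replacement that only implements write()."""

    def __init__(self):
        self.chunks = []

    def write(self, text):
        self.chunks.append(text)


def patch_stream(stream):
    """Add the same two attributes that the sample sets on sys.stdout."""
    stream.isatty = lambda: False  # report "not an interactive terminal"
    stream.encoding = None         # no declared encoding
    return stream


unpatched = BareStream()
stream = patch_stream(BareStream())
print(hasattr(unpatched, "isatty"), hasattr(stream, "isatty"))
```

Because the attributes are set on the stream object itself, the patch lasts only as long as that object remains the interpreter's stdout, so it may need to be reapplied after an interpreter restart.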