Accumulo TServer failing after Wizard Install completion

- Labels: Apache Accumulo, Apache Ambari

Created on 08-25-2016 09:51 AM - edited 08-19-2019 03:36 AM

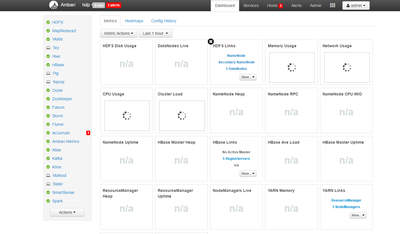

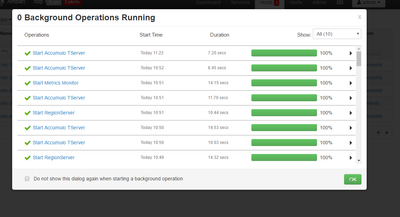

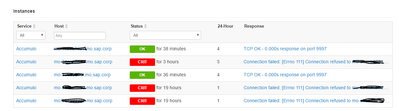

So I've just completed the Ambari install wizard (Ambari 2.2, HDP 2.3.4) and started the services. Everything is running and working now (not at first), except that 3 of the 5 hosts have the same issue with the Accumulo TServer. It starts up with the solid green check (photo attached) but stops after just a few seconds with the alert icon.

The only information I found about the error is "Connection failed: [Errno 111] Connection refused to mo-31aeb5591.mo.sap.corp:9997", which is shown for the TServer process. I checked my ssh connection and it's fine, and all of the other services installed fine, so I'm not sure what exactly that means. I posted the logs below: the .err file just says "No such file or directory", and the .out file is empty. Are there other locations with more verbose error logs about this? I am new to the environment.

Any general troubleshooting advice for initial issues after installation or links to guides that may help would also be very appreciated.

    [root@xxxxxxxxxxxx ~]# cd /var/log/accumulo/
    [root@xxxxxxxxxxxx accumulo]# ls
    accumulo-tserver.err accumulo-tserver.out
    [root@xxxxxxxxxxxx accumulo]# cat accumulo-tserver.err
    /usr/hdp/current/accumulo-client/bin/accumulo: line 4: /usr/hdp/2.3.4.0-3485/etc/default/accumulo: No such file or directory

Created 09-05-2016 12:05 PM

I ended up just removing the TServer service from the nodes that were failing. Not really a solution, but the other ones still work fine. Thanks for your help!

Created 08-25-2016 02:19 PM

This could be the same issue being discussed here:

Seems like Accumulo doesn't bind to the port after startup.

Created 08-26-2016 08:47 AM

Hi @Jonathan Hurley, thanks for the response.

So it's a problem with the way Ambari tests whether or not the Accumulo TServer is started? That thread indicates a problem with "Ambari" in general, but all of my other Ambari services are running. It is only the TServer that starts, stops immediately, and is reported as not running. If it were running, would there not be logs in those folders?

Do you have any suggestions as to what I can do to confirm that this is the problem? As mentioned, I am brand new to Ambari and HDP in general.

Created 08-27-2016 11:55 PM

The TabletServer is the exception to this. It is the per-server process, not an HA component.

Created 08-27-2016 11:58 PM

The content in the err files is definitely the issue here. Might you be able to cross-reference one of these nodes (that are failing) with one that does work? See if that file exists on the other node. If it does, I'd recommend copying it over. I have not run into this problem myself previously.

Created 08-29-2016 08:19 AM

Hi @Josh Elser, thanks for the response!

I can compare their log files, but otherwise I'm not sure how exactly to cross-reference them, as there is little to no log of where the problem is happening. What should I look at for cross-referencing other than the logs? I copied over the log files (from my working nodes) and made sure the appropriate names were used, but the behavior was the same.

EDIT: I should add that I didn't copy over the "err" files from the working nodes: there are no errors there, so the file exists but is empty. I did remove the error line from both instances; as I expected, this didn't change the behavior.

Created 08-29-2016 02:43 PM

Sorry, not the logs, but the filesystem layout and the configuration. Specifically, compare if /usr/hdp/2.3.4.0-3485/etc/default/accumulo exists on the nodes which are correctly running. My hunch is that you are missing a symlink or something beneath /usr/hdp/2.3.4.0-3485/etc/default or similar.

And yes, under normal circumstances, the .err file should be present but empty. It is the redirection of STDERR for the Accumulo service which should have nothing while the process is happily running.
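The filesystem comparison suggested above can be sketched as a small shell function. This is a minimal sketch assuming a POSIX shell on each node; `check_path` is a hypothetical helper name, not something shipped with Accumulo, Ambari, or HDP. It reports whether a path exists and whether it is a symlink (including a symlink whose target is gone).

```shell
#!/bin/sh
# check_path: report whether a path exists and whether it is a symlink.
# Hypothetical helper for illustration only.
check_path() {
    p="$1"
    if [ -L "$p" ] && [ ! -e "$p" ]; then
        # -L is true for the link itself; -e follows it and fails if the target is gone
        echo "dangling symlink: $p"
    elif [ -L "$p" ]; then
        echo "symlink: $p"
    elif [ -e "$p" ]; then
        echo "present: $p"
    else
        echo "missing: $p"
    fi
}
```

Running `check_path /usr/hdp/2.3.4.0-3485/etc/default/accumulo` on a working node and on a failing node, then comparing the two lines of output, should show whether the file or a link to it is what differs.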

Created 08-30-2016 07:16 AM

@Josh Elser Your suspicion is right: /usr/hdp/2.3.4.0-3485/etc/default/accumulo exists on the nodes which are correctly running TServer, and doesn't exist on the ones that aren't.

EDIT: I tried just adding the file to one of the incorrectly-running nodes, and the error changed to "Error: Could not find or load main class org.apache.accumulo.start.Main" ... so @Jonathan Hurley is most likely right about there being an issue with the configuration files, I think? Is there a place either of you recommend I look for this difference in configuration between this node and the others?

Thanks for your help.

Created 08-30-2016 05:42 PM

If you were missing that file, it sounds like the package (RPM/Deb) was never correctly installed. Maybe you're better off trying to remove the service and re-add it if you have no data stored in Accumulo. I'm not sure what else to suggest at this point.
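Before reinstalling, one way to see what a failing node lacks is to diff a file listing of the versioned HDP directory against the same listing taken on a working node. A minimal sketch, assuming `find`, `sort`, and `comm` are available on the nodes; `list_tree` and `missing_from` are hypothetical helper names.

```shell
#!/bin/sh
# list_tree: print every path under a directory, relative to it, sorted.
# Hypothetical helper for illustration only.
list_tree() {
    ( cd "$1" && find . | LC_ALL=C sort )
}

# missing_from: given two sorted listings (working node first, failing node
# second), print the paths that appear only in the first one.
missing_from() {
    LC_ALL=C comm -23 "$1" "$2"
}
```

For example, run `list_tree /usr/hdp/2.3.4.0-3485 > listing.txt` on each node, copy both files to one machine under different names (say `good.txt` and `bad.txt`, names chosen here for illustration), and run `missing_from good.txt bad.txt`. A long list of missing paths would be consistent with a package that never installed correctly.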

Created 08-29-2016 07:56 PM

/usr/hdp/2.3.4.0-3485/etc/default/accumulo

That's not a correct file location. Everything under /usr/hdp/2.3.4.0-3485 must be HDP component names, like accumulo-tablet or accumulo-client.

I'm not sure how that directory is constructed in the Accumulo files, but something seems to have an invalid value. Perhaps Accumulo isn't looking in the correct location for configuration files? Could it be trying to look in /etc/default/accumulo which doesn't exist?