Configure Storage capacity of Hadoop cluster

Labels: Apache Hadoop

Created on 03-04-2016 10:22 AM - edited 08-19-2019 04:17 AM

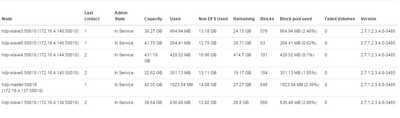

We have a 5-node cluster with the following configuration for the master and slaves.

- HDPMaster: 35 GB, 500 GB
- HDPSlave1: 15 GB, 500 GB
- HDPSlave2: 15 GB, 500 GB
- HDPSlave3: 15 GB, 500 GB
- HDPSlave4: 15 GB, 500 GB
- HDPSlave5: 15 GB, 500 GB

But the cluster is not using much of that space. I am aware that some space is reserved for non-DFS use, but the capacity reported for each slave node is uneven. Is there a way to reconfigure HDFS?

Please find the screenshot attached.

Even though all the nodes have the same hard disk size, only slave 4 shows 431 GB; all the remaining nodes show very little capacity. Is there a way to resolve this?

Created 03-05-2016 10:26 AM

I have never seen the same number for all the slave nodes, because of how the data is distributed.

To even out block distribution across the cluster, HDFS provides a utility program called balancer:

http://hadoop.apache.org/docs/current/hadoop-project-dist/hadoop-hdfs/HDFSCommands.html#balancer
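For context, the balancer treats a DataNode as balanced when its utilization (used space as a percentage of its own capacity) is within a threshold of the overall cluster utilization; the default threshold is 10 percent. Below is a minimal Python sketch of that comparison. `classify_nodes` is a hypothetical helper, not part of Hadoop, and the node names and numbers are made-up illustrative values, not figures from this cluster.

```python
def classify_nodes(nodes, threshold_pct=10.0):
    """Classify nodes against the cluster-wide utilization.

    nodes maps a node name to (used_gb, capacity_gb); every capacity
    is assumed to be greater than zero.
    """
    total_used = sum(used for used, _ in nodes.values())
    total_cap = sum(cap for _, cap in nodes.values())
    cluster_pct = 100.0 * total_used / total_cap

    result = {}
    for name, (used, cap) in nodes.items():
        node_pct = 100.0 * used / cap
        if node_pct > cluster_pct + threshold_pct:
            result[name] = "over-utilized"
        elif node_pct < cluster_pct - threshold_pct:
            result[name] = "under-utilized"
        else:
            result[name] = "balanced"
    return cluster_pct, result


# Illustrative values only (hypothetical nodes).
example = {"node-a": (90, 100), "node-b": (10, 100), "node-c": (20, 100)}
cluster_pct, states = classify_nodes(example)
print(cluster_pct, states)
```

The real tool is run as `hdfs balancer -threshold <percent>`. Note that it only moves existing blocks between DataNodes; it does not change the capacity each node reports.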

Created 03-07-2016 06:07 AM

You need to pick just one of those directories. In particular, don't choose /tmp as your parent directory; that is asking for trouble.

Created 03-07-2016 06:27 AM

As you suggested, I removed /tmp from the directory list, and the capacity of every node, including slave 4, dropped to 40 GB or less.

Created 03-08-2016 10:54 AM

@vinay kumar Can you help me understand where you found the 'dfs.datanode.data.dir' property? In my Ambari installation, I did not find this property under the 'Advanced hdfs-site' configuration.
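Independently of where the UI shows it, the value in effect on a node can be read from that node's hdfs-site.xml, which uses the standard Hadoop `<configuration>/<property>/<name>/<value>` layout. A minimal Python sketch follows; `get_property` is a hypothetical helper, and the sample XML is hand-written for illustration using the directory list quoted elsewhere in this thread.

```python
import xml.etree.ElementTree as ET


def get_property(xml_text, name):
    """Return the <value> of the named property, or None if it is absent."""
    root = ET.fromstring(xml_text)
    for prop in root.findall("property"):
        if (prop.findtext("name") or "").strip() == name:
            return prop.findtext("value")
    return None


# Hand-written sample standing in for a real hdfs-site.xml.
SAMPLE = """<configuration>
  <property>
    <name>dfs.datanode.data.dir</name>
    <value>/opt/hadoop/hdfs/data,/tmp/hadoop/hdfs/data,/usr/hadoop/hdfs/data,/usr/local/hadoop/hdfs/data</value>
  </property>
</configuration>"""

dirs = get_property(SAMPLE, "dfs.datanode.data.dir").split(",")
for d in dirs:
    print(d)
```

For a real file, `ET.parse(path).getroot()` could replace `ET.fromstring`. The file is often found at /etc/hadoop/conf/hdfs-site.xml, but that path is an assumption and may differ on your installation.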

Created on 03-07-2016 06:13 AM - edited 08-19-2019 04:17 AM

Let me clarify. The cluster is new and doesn't have much data in it. As I understand it, the available capacity is the storage available to the DataNode (HDFS), if I am not wrong. The actual hard disk size of each node is 500 GB, yet the available capacity on five of them is far less than on slave 4. The root folder has more than 400 GB of disk space allocated, and the same should be allocated to HDFS. My concern is: where did the rest of the space go? How does data distribution come into this when my only concern is HDFS capacity? PFA.

Created 03-07-2016 09:23 AM

@vinay kumar What do you have for dfs.datanode.data.dir?

Is slave 4 using / ?

Are the rest of the nodes using other mounts?

Created 03-07-2016 09:43 AM

dfs.datanode.data.dir has: /opt/hadoop/hdfs/data,/tmp/hadoop/hdfs/data,/usr/hadoop/hdfs/data,/usr/local/hadoop/hdfs/data

All the nodes have the same mounts.
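One way to check how these four directories map onto disks on a given node is to look up which filesystem each one sits on and how large that filesystem is. The Python sketch below does that with the standard library only. `nearest_existing` and `summarize` are hypothetical helpers, and the idea that the reported DataNode capacity follows the filesystems under the configured directories is my working assumption here, worth cross-checking against `df -h` on each node.

```python
import os
import shutil


def nearest_existing(path):
    """Walk up the tree until a path that exists is found."""
    path = os.path.abspath(path)
    while not os.path.exists(path):
        path = os.path.dirname(path)
    return path


def summarize(data_dirs):
    """Group a comma-separated directory list by underlying filesystem.

    Returns {device_id: {"total_gb": float, "dirs": [configured dirs]}}.
    """
    by_device = {}
    for d in data_dirs.split(","):
        d = d.strip()
        probe = nearest_existing(d)  # the directory may not exist yet
        dev = os.stat(probe).st_dev
        total_gb = shutil.disk_usage(probe).total / 1024**3
        entry = by_device.setdefault(dev, {"total_gb": total_gb, "dirs": []})
        entry["dirs"].append(d)
    return by_device


if __name__ == "__main__":
    value = ("/opt/hadoop/hdfs/data,/tmp/hadoop/hdfs/data,"
             "/usr/hadoop/hdfs/data,/usr/local/hadoop/hdfs/data")
    for dev, info in summarize(value).items():
        print(f"device {dev}: {info['total_gb']:.1f} GB total, dirs={info['dirs']}")
```

Running this on slave 4 and on one of the other slaves and comparing the output should show whether the directories really land on the same filesystems everywhere, even if the mount points have the same names.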

Created 03-07-2016 09:46 AM

@Neeraj Sabharwal Did I say anything wrong? So the capacity is the space allocated to HDFS, right?

Created 03-07-2016 09:57 AM

As expected, the problem is with the disks allocated to datanode settings.

Ambari picks up all the mounts except /boot and /mnt.

You were supposed to modify the settings during the install. As you can see, data is going to /opt and the other mounts, whereas you were supposed to give only /hadoop (/ has 400 GB).

Now, there is no way we want to store data on /tmp:

/opt/hadoop/hdfs/data,/tmp/hadoop/hdfs/data,/usr/hadoop/hdfs/data,/usr/local/hadoop/hdfs/data

You need to create a directory named /hadoop and modify the settings so that the data is read from /hadoop.

Created on 03-07-2016 10:11 AM - edited 08-19-2019 04:17 AM

Does this mean that I should reinstall everything? I am still wondering how only slave 4 got a capacity of 435 GB when every node has the same configuration and the same mounts.