
Copy file from Windows to sandbox hosted in Azure

Labels: Apache Hadoop, Docker

Created on 02-28-2017 08:01 AM - edited 09-16-2022 04:10 AM


I was able to copy a file to Azure from Windows using PuTTY Secure Copy (pscp) with the following command:

pscp -P 22 <file from local folder> tmp\

I could see that file on the sandbox host. But when I tried to "put" the file from the sandbox host into the sandbox itself (the Docker container), it failed with a "network not accessible" error. These are the commands I tried:

hadoop fs -ls -put \tmp\onecsvfile.csv \tmp\

hadoop fs -ls -put \tmp\onecsvfile.csv root@localhost:2222\\tmp\

Nothing works 😞

Can you tell me how I can move the file from the sandbox host into the sandbox (Sandbox 2.5 hosted in Azure)?

Created 02-28-2017 03:01 PM


Hi @Prasanna G!

You have the following options:

1. You can use "docker cp" if you have only one file. Here is the documentation with examples.

2. The most flexible solution is mounting a local directory into the sandbox container with the -v option of "docker run". This is preferable because everything in that folder is then accessible inside the container.

docker run -v /Users/<path>:/<container path> ...

You can find an example of how to configure this for the Sandbox here.
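Option 1 can be sketched as two shell helpers run on the Azure VM (the sandbox host): one copies a file into the container with `docker cp`, the other loads it into HDFS from inside the container with `docker exec`. This is a minimal sketch meant to be sourced, not an official procedure. It assumes the container is named `sandbox` (check with `docker ps`), that `hadoop` is on the PATH inside it, and that you run it as root, since `docker` normally needs root or docker-group membership. The helper names and the `DRY_RUN=1` switch, which prints the commands instead of running them, are hypothetical.

```shell
# Sketch only: helpers for moving a file from the sandbox host into the
# sandbox container and then into HDFS. Source this file in a root shell.
# Assumptions: container name "sandbox" (hypothetical default), hadoop on PATH
# inside the container.

# Print the command instead of running it when DRY_RUN=1.
run() {
  if [ "${DRY_RUN:-0}" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}

# Hypothetical helper: copy a file from the host filesystem into the
# container's /tmp directory.
copy_into_sandbox() {
  src="$1"
  container="${2:-sandbox}"
  run docker cp "$src" "$container:/tmp/"
}

# Hypothetical helper: copy the file (already in the container's /tmp)
# into the HDFS /tmp directory, running hadoop inside the container.
put_into_hdfs() {
  name="$(basename "$1")"
  container="${2:-sandbox}"
  run docker exec "$container" hadoop fs -put "/tmp/$name" /tmp/
}
```

For example, in a root shell on the VM, `copy_into_sandbox /tmp/onecsvfile.csv` followed by `put_into_hdfs /tmp/onecsvfile.csv` would place the file in the HDFS `/tmp` directory.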

Created on 03-01-2017 01:18 PM - edited 08-19-2019 02:37 AM


@Prasanna G Did you start the Sandbox from the Azure Marketplace?

If so, you need to manually install Docker, as described here.

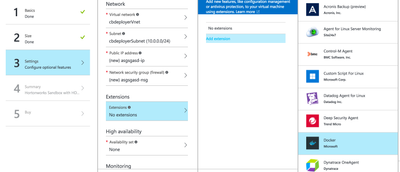

You also have the option to install the Docker extension during VM installation; that should work as well.

Created 03-02-2017 07:48 AM


Hello @pdarvasi, Docker is installed as part of the Azure Sandbox, and I can see the Docker service running. I was also able to run the docker cp command once I switched to root with "sudo su".

Created 03-01-2017 08:45 PM


When you ssh to port 2222, you are inside the sandbox container. Is the file there? What do you get from ls -l tmp/? Based on the pscp command you ran, that would be different from ls -l /tmp. You should be able to pscp directly into the container by connecting to port 2222:

pscp -P 2222 <file_from_local> root@<sandbox host>:/tmp

Then, in your shell inside the container, you should see:

[root@sandbox ~]# ls -l /tmp
total 548
-rw-r--r-- 1 root root 7540 Feb 27 10:00 file_from_local

Then you will probably want to copy it from the Linux filesystem into HDFS using the hadoop command:

[root@sandbox ~]# hadoop fs -put /tmp/file_from_local /tmp
[root@sandbox ~]# hadoop fs -ls /tmp
-rw-r--r-- 1 root hdfs 0 2017-03-01 20:43 /tmp/file_from_local
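The in-container steps above can be sketched as a small shell function that first confirms the file actually arrived on the container's Linux filesystem before copying it into HDFS. The function name `load_to_hdfs`, the default HDFS directory `/tmp`, and the `DRY_RUN=1` switch (which prints the commands instead of running them) are illustrative assumptions, not part of the original answer.

```shell
# Sketch only: run inside the sandbox container (e.g. after ssh on port 2222).
# load_to_hdfs is a hypothetical helper name.

# Print the command instead of running it when DRY_RUN=1.
run() {
  if [ "${DRY_RUN:-0}" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}

# Copy a local (Linux filesystem) file into an HDFS directory, then list it.
load_to_hdfs() {
  local_file="$1"
  hdfs_dir="${2:-/tmp}"
  # Fail early if pscp did not deliver the file to this filesystem.
  if [ ! -f "$local_file" ]; then
    echo "not found on local filesystem: $local_file" >&2
    return 1
  fi
  run hadoop fs -put "$local_file" "$hdfs_dir" &&
    run hadoop fs -ls "$hdfs_dir"
}
```

For example, inside the container `load_to_hdfs /tmp/file_from_local` would run the same `hadoop fs -put` and `hadoop fs -ls` commands shown above, but only if the file exists locally.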

Enjoy!

John
