Copy file from Windows to sandbox hosted in Azure

Labels: Apache Hadoop, Docker

Created on 02-28-2017 08:01 AM - edited 09-16-2022 04:10 AM


I was able to copy a file from Windows to the Azure VM using PuTTY's secure copy client (pscp), with the following command:

pscp -P 22 <file from local folder> tmp\

I can see the file on the sandbox host. But when I try to "put" the file from the sandbox host into the sandbox itself (the Docker container), it fails with a "network not accessible" error. These are the commands I tried:

hadoop fs -ls -put \tmp\onecsvfile.csv \tmp\

hadoop fs -ls -put \tmp\onecsvfile.csv root@localhost:2222\\tmp\

Nothing works 😞

Can you tell me how I can move the file from the sandbox host into the sandbox (Sandbox 2.5 hosted in Azure)?

Created 02-28-2017 03:01 PM


Hi @Prasanna G!

You have the following options:

1. You can use "docker cp" if you have only one file. Here is the documentation with examples.

2. The most flexible solution is mounting local drives to the sandbox with the -v option of "docker run". This is preferred because all the content in the folder is accessible inside the container.

docker run -v /Users/<path>:/<container path> ...

You can find an example of how to configure this for the Sandbox here.
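As a rough illustration of option 1, the sketch below wraps "docker cp" in a small helper. The container name `sandbox`, the example paths, and the `DRY_RUN` switch are assumptions made for illustration, not something the sandbox ships with.

```shell
#!/bin/sh
# Minimal sketch, assuming a running container and an existing host file.
# All names passed in (container, paths) are placeholders chosen by the caller.

run() {
    # Print the command when DRY_RUN=1, otherwise execute it.
    if [ "${DRY_RUN:-0}" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

copy_into_container() {
    # $1 = file on the host, $2 = container name, $3 = destination path in the container
    run docker cp "$1" "$2:$3"
}

# Example (hypothetical paths):
#   DRY_RUN=1; copy_into_container /tmp/onecsvfile.csv sandbox /tmp/onecsvfile.csv
```

With `DRY_RUN=1` the helper only prints the command it would run, which is a convenient way to check the paths before touching the container.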

Created 02-28-2017 03:14 PM


Hi @Prasanna G

You'll first have to copy the file from the local filesystem into the Docker container, as shown below.

First, I created a directory in my Docker container:

docker exec sandbox mkdir /dan/

(You can then run docker exec sandbox ls to confirm that the mkdir worked.)

Then I copied the file into the directory I just created:

docker cp /home/drice/test2.txt sandbox:dan/test2.txt

(Here sandbox is the name of the Docker container running HDP. You can get a list of containers by running docker ps.)

Once the file is in the Docker container, you can copy it into Hadoop:

docker exec sandbox hadoop fs -put /dan/test2.txt /test2.txt

[root@sandbox drice]# docker exec sandbox hadoop fs -ls /

Found 13 items

drwxrwxrwx - yarn hadoop 0 2016-10-25 08:10 /app-logs

drwxr-xr-x - hdfs hdfs 0 2016-10-25 07:54 /apps

drwxr-xr-x - yarn hadoop 0 2016-10-25 07:48 /ats

drwxr-xr-x - hdfs hdfs 0 2016-10-25 08:01 /demo

drwxr-xr-x - hdfs hdfs 0 2016-10-25 07:48 /hdp

drwxr-xr-x - mapred hdfs 0 2016-10-25 07:48 /mapred

drwxrwxrwx - mapred hadoop 0 2016-10-25 07:48 /mr-history

drwxr-xr-x - hdfs hdfs 0 2016-10-25 07:47 /ranger

drwxrwxrwx - spark hadoop 0 2017-02-28 15:05 /spark-history

drwxrwxrwx - spark hadoop 0 2016-10-25 08:14 /spark2-history

-rw-r--r-- 1 root hdfs 15 2017-02-28 15:04 /test.txt

drwxrwxrwx - hdfs hdfs 0 2016-10-25 08:11 /tmp

drwxr-xr-x - hdfs hdfs 0 2016-10-25 08:11 /user

NOTE: Another way to do this is to just use the ambari file browser view to copy files graphically.
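The command-line steps in this answer could be bundled into one helper, sketched below. This is only an illustration under assumptions: the function name `put_into_hdfs`, the `DRY_RUN` switch, and the use of `mkdir -p` (so that rerunning does not fail on an existing directory) are additions, and the container name and paths are whatever you pass in.

```shell
#!/bin/sh
# Minimal sketch: stage a host file inside the container, then put it into HDFS.

run() {
    # Print the command when DRY_RUN=1, otherwise execute it.
    if [ "${DRY_RUN:-0}" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

put_into_hdfs() {
    # $1 = file on the host        $2 = container name
    # $3 = staging dir in container $4 = destination path in HDFS
    host_file=$1
    container=$2
    staging_dir=$3
    hdfs_dest=$4
    file_name=$(basename "$host_file")

    # Each step runs only if the previous one succeeded.
    run docker exec "$container" mkdir -p "$staging_dir" &&
    run docker cp "$host_file" "$container:$staging_dir/$file_name" &&
    run docker exec "$container" hadoop fs -put "$staging_dir/$file_name" "$hdfs_dest" &&
    run docker exec "$container" hadoop fs -ls "$hdfs_dest"
}

# Example, using the paths from the answer above:
#   put_into_hdfs /home/drice/test2.txt sandbox /dan /test2.txt
```

Setting `DRY_RUN=1` before the call prints the four commands instead of running them, which helps confirm that the host path really exists on the VM where Docker runs.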

Created 02-28-2017 08:19 PM


@Dan Rice Thanks, Dan. Could you explain this process in more detail? I am using the virtual machine on the Azure portal and am just beginning to learn this concept. Thanks.

Created 02-28-2017 09:10 PM


Ambari is the web UI used to administer, monitor, and provision a Hadoop cluster. It also has the concept of Views, which let you browse the Hadoop Distributed File System (HDFS), query data through Hive, and write Pig scripts, among other things (it is even extensible, so you can build something custom). You can reach the Files view within Ambari at a link like this one: http://<AZURE PUBLIC IP>:8080/#/main/views/FILES/1.0.0/AUTO_FILES_INSTANCE

You can log in as raj_ops (password raj_ops) to get to the Files view. Don't just click the link; you'll have to change it to match your Azure sandbox's public IP address. This also assumes you have port 8080 open in your Azure Network Security Group settings.

You may want to follow the instructions on how to reset the Ambari admin password: https://hortonworks.com/hadoop-tutorial/learning-the-ropes-of-the-hortonworks-sandbox/#setup-ambari-...

Hope this helps
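To make the link substitution concrete, here is a small sketch that builds the Files view URL from a public IP and, optionally, checks whether port 8080 answers. The function names are made up for illustration, and 203.0.113.10 is a documentation-only placeholder address, not a real sandbox.

```shell
#!/bin/sh
# Minimal sketch, assuming Ambari listens on port 8080 as described above.

ambari_files_view_url() {
    # $1 = public IP address of the Azure VM
    echo "http://$1:8080/#/main/views/FILES/1.0.0/AUTO_FILES_INSTANCE"
}

ambari_reachable() {
    # Succeeds if an HTTP response comes back from port 8080 within 5 seconds.
    # A failure here often means the port is closed in the Network Security Group.
    curl -s -o /dev/null --max-time 5 "http://$1:8080/"
}

# Example (placeholder IP):
#   ambari_files_view_url 203.0.113.10
#   ambari_reachable 203.0.113.10 && echo "port 8080 is open"
```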

Created 02-28-2017 09:09 PM


Hi Dan, thanks for your help. Just an update on the process: the first command worked, but when I tried docker cp /home/drice/test2.txt sandbox:dan/test2.txt it said no such file exists, even though I used my own directory.

Created on 03-01-2017 07:43 AM - edited 08-19-2019 02:37 AM


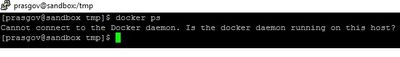

Thanks for the response. This dint work in Azure. I get this error:

I was able to ssh into port 2222 though, in Azure. Am i missing something?

However, this worked in my VMWare workstation.

Created on 03-01-2017 02:24 PM - edited 08-19-2019 02:37 AM


Created 03-02-2017 07:51 AM


Yes, this did it 🙂 I had tried sudo su earlier too, but it didn't work. I restarted the Docker service and ran sudo su, and it all worked fine. I guess there was some issue with the Docker service.

For anyone who wants to try, this is what I did.

After you log on to the VM via PuTTY (if your host OS is Windows), don't ssh into root@sandbox yet. Stay on the Linux VM and run these commands:

service docker restart

sudo su

docker ps
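After the restart, it can help to confirm that the sandbox container is actually listed before trying docker cp again. The sketch below is one way to do that; the helper name `is_listed` is hypothetical, and the container name `sandbox` is taken from the earlier answer and may differ on your VM.

```shell
#!/bin/sh
# Minimal sketch: check whether a container name appears in a list of names.

is_listed() {
    # Reads container names from stdin, one per line.
    # Succeeds only if one line matches $1 exactly.
    grep -Fxq -- "$1"
}

# Example, run on the Linux VM (not inside the sandbox):
#   sudo service docker restart
#   sudo docker ps --format '{{.Names}}' | is_listed sandbox && echo "sandbox is running"
```

Running the docker commands through sudo is an alternative to switching to root with sudo su; either way, the commands need to run as a user allowed to talk to the Docker daemon.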

Created 03-02-2017 01:51 PM


Great to hear that you got things working @Prasanna G