Cloudera Community - Support Questions


HDFS is almost full (90%), but DataNode disks are only around 50% used

Created on 09-03-2018 05:05 PM - edited 08-18-2019 02:05 AM


Hi all,

We have an Ambari cluster (Ambari version 2.6.1, HDP version 2.6.4).

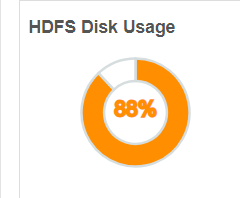

From the dashboard we can see that HDFS Disk Usage is almost 90%, but all DataNode disks are only at around 50%. So why does HDFS show 90% while the DataNode disks are only at 50%?

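| Filesystem | Size | Used | Avail | Use% | Mounted on |
|---|---|---|---|---|---|
| /dev/sdc | 20G | 11G | 8.7G | 56% | /data/sdc |
| /dev/sde | 20G | 11G | 8.7G | 56% | /data/sde |
| /dev/sdd | 20G | 11G | 9.0G | 55% | /data/sdd |
| /dev/sdb | 20G | 8.9G | 11G | 46% | /data/sdb |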

Is this a tuning problem, or something else?

We also ran a rebalance from the Ambari GUI, but it didn't help.
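As a sanity check on the df figures above, the sketch below sums the four data disks: roughly 41.9G used of 80G, or about 52%, which matches the "around 50%" in the title. It is a minimal, hypothetical helper that assumes every size carries a G suffix, as in this output; it is not a general df parser.

```python
# Hypothetical helper: sum the per-disk figures from the `df -h` output above.
DF_OUTPUT = """\
/dev/sdc 20G 11G 8.7G 56% /data/sdc
/dev/sde 20G 11G 8.7G 56% /data/sde
/dev/sdd 20G 11G 9.0G 55% /data/sdd
/dev/sdb 20G 8.9G 11G 46% /data/sdb
"""


def to_gib(size: str) -> float:
    """Convert a df -h size such as '8.7G' to GiB (only the G suffix is handled)."""
    if not size.endswith("G"):
        raise ValueError(f"unsupported unit in {size!r}")
    return float(size[:-1])


def aggregate_usage(df_text: str) -> tuple[float, float, float]:
    """Return (total, used, percent used) across all six-column df lines."""
    total = used = 0.0
    for line in df_text.splitlines():
        fields = line.split()
        if len(fields) != 6:  # skip blank lines and the 7-field df header
            continue
        total += to_gib(fields[1])
        used += to_gib(fields[2])
    return total, used, 100.0 * used / total


total, used, pct = aggregate_usage(DF_OUTPUT)
print(f"{used:.1f}G used of {total:.1f}G ({pct:.1f}%)")
```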

Created on 09-05-2018 12:56 AM - edited 08-18-2019 02:05 AM


As the NameNode report and UI (including the Ambari UI) show, your DFS Used is reaching almost 87% to 90%, so it would be a good idea to increase the DFS capacity.

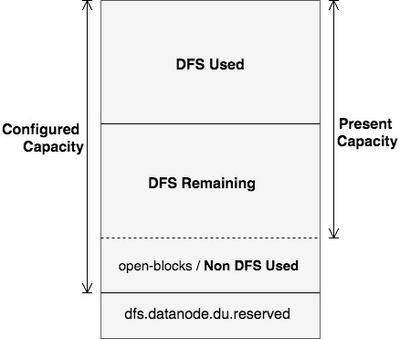

Non DFS Used is calculated as: Non DFS Used = Configured Capacity - DFS Remaining - DFS Used
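The formula can be checked with a few lines of arithmetic. This is a minimal sketch: the class name and the sample numbers are hypothetical, not figures from this cluster. Present Capacity follows directly from the formula (it equals DFS Used + DFS Remaining).

```python
from dataclasses import dataclass


@dataclass
class CapacityReport:
    """Hypothetical container for `hdfs dfsadmin -report` figures, all in one unit."""
    configured_capacity: float
    dfs_used: float
    dfs_remaining: float

    @property
    def non_dfs_used(self) -> float:
        # Non DFS Used = Configured Capacity - DFS Remaining - DFS Used
        return self.configured_capacity - self.dfs_remaining - self.dfs_used

    @property
    def present_capacity(self) -> float:
        # Space HDFS can use: Configured Capacity - Non DFS Used (= DFS Used + DFS Remaining)
        return self.configured_capacity - self.non_dfs_used


# Hypothetical figures in GB
report = CapacityReport(configured_capacity=100, dfs_used=60, dfs_remaining=30)
print(report.non_dfs_used, report.present_capacity)
```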

To understand these calculations in detail, you can refer to the following article, which explains the concepts of Configured Capacity, Present Capacity, DFS Used, DFS Remaining and Non DFS Used in HDFS. Its diagram clearly explains these output space parameters by treating HDFS as a single disk.

https://community.hortonworks.com/articles/98936/details-of-the-output-hdfs-dfsadmin-report.html


The article above is one of the best for understanding the DFS and Non-DFS calculations and their remedies.

You add capacity by giving dfs.datanode.data.dir more mount points or directories. In Ambari, I believe this setting appears on the right-hand side of the HDFS configs page or in the Advanced section, depending on the Ambari version; the property itself lives in hdfs-site.xml. The more disks you list in this comma-separated property, the more capacity you will have. Preferably, every machine should have the same disk and mount point structure.
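Building that comma-separated list by hand is error-prone, so here is a small sketch that generates it. The mount points are taken from the df output in the question; the per-mount subdirectory `hadoop/hdfs/data` and the helper names are hypothetical, so adjust them to your own layout.

```python
# Hypothetical mount points (from the question's df output) and per-mount subdirectory.
MOUNT_POINTS = ["/data/sdb", "/data/sdc", "/data/sdd", "/data/sde"]
SUBDIR = "hadoop/hdfs/data"


def data_dir_value(mounts: list[str], subdir: str = SUBDIR) -> str:
    """Join one HDFS data directory per mount point into a comma-separated list."""
    return ",".join(f"{m.rstrip('/')}/{subdir}" for m in mounts)


def hdfs_site_property(value: str) -> str:
    """Render the value as an hdfs-site.xml <property> element."""
    return (
        "<property>\n"
        "  <name>dfs.datanode.data.dir</name>\n"
        f"  <value>{value}</value>\n"
        "</property>"
    )


print(hdfs_site_property(data_dir_value(MOUNT_POINTS)))
```

On an Ambari-managed cluster, paste the comma-separated value into the DataNode directories field rather than editing hdfs-site.xml by hand, since Ambari manages that file.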


Created on 09-05-2018 02:31 PM - edited 08-18-2019 02:04 AM


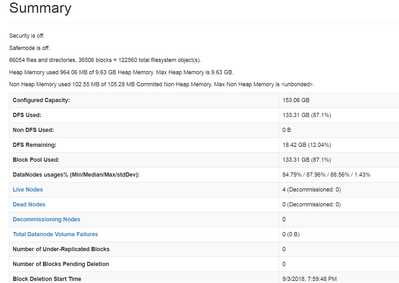

This is what we get from "http://<active namenode host>:50070/dfshealth.html#tab-overview".

Created on 09-05-2018 02:35 PM - edited 08-18-2019 02:04 AM


Created 09-05-2018 02:52 PM


@Michael Bronson As I said earlier, your configured capacity is 154 GB, not 320 GB. This can be seen in the NameNode UI:

http://<active namenode host>:50070/dfshealth.html#tab-overview

You should check "dfs.datanode.data.dir", which lists the disks configured for HDFS. It looks like you have configured only two disks.

Ambari -> HDFS -> Configs -> Settings -> DataNode directories

If you have not restarted HDFS since commissioning the disks, you must do so.
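To see how the number of configured directories drives Configured Capacity, here is a rough estimate. It assumes, hypothetically, four DataNodes that each have the 4 x 20 GB layout from the question's df output (the thread does not state the node count). Under that assumption, four directories per node gives the expected 320 GB, while two gives 160 GB of raw space, close to the reported 154 GB; any remaining gap would plausibly come from reserved space such as dfs.datanode.du.reserved, but that part is not verified here.

```python
# Hypothetical layout: every DataNode has identical disks of DISK_SIZE_GB.
DISK_SIZE_GB = 20   # from the df output in the question
NUM_DATANODES = 4   # assumption, not stated in the thread


def raw_capacity_gb(dirs_per_node: int, num_nodes: int = NUM_DATANODES,
                    disk_size_gb: int = DISK_SIZE_GB) -> int:
    """Raw capacity before reserved space, with one dfs.datanode.data.dir entry per disk."""
    return num_nodes * dirs_per_node * disk_size_gb


for dirs in (2, 4):
    print(f"{dirs} data dirs per node -> {raw_capacity_gb(dirs)} GB raw")
```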

Created on 09-05-2018 02:38 PM - edited 08-18-2019 02:04 AM


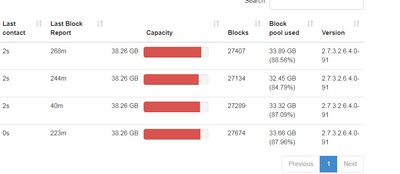

The last one is from: http://<active namenode host>:50070/dfshealth.html#tab-datanode-volume-failures
