NiFi: How to enter CSV file content and meta data into single table of postgresql database by NiFi

Labels: Apache NiFi

Created on 02-11-2020 09:01 PM - last edited on 02-11-2020 11:31 PM by VidyaSargur

Hi,

I have CSV files and I want to load the content of each file into PostgreSQL through NiFi, along with its metadata: the file name, the source (to be hard-coded), and a control number (part of the file name, to be extracted from the file name itself). Here is a sample file name and layout:

File name - 12345_user_data.csv (control_number_user_data.csv)

source - Newyork

CSV File Content/columns -

Fields - abc1, abc2, abc3, abc4

values - 1,2,3,4

Postgres Database table layout

Table name - User_Education

fields name -

control_number, file_name, source, abc1, abc2, abc3, abc4

Values -

12345, 12345_user_data.csv, Newyork, 1,2,3,4

I am planning to use the processors below:

ListFile

FetchFile

UpdateAttribute

PutDatabaseRecord

LogAttribute

But I am not sure how to combine the actual content with the metadata to load it into one single table. Please help.

Created on 02-12-2020 05:16 AM - edited 02-12-2020 05:17 AM

There are many ways to do this. I have added a template to my NiFi templates for you. This approach takes a CSV input, splits it into lines, extracts two columns, builds an INSERT statement, and executes that statement (this requires a database connection pool controller service).

The only really tricky part is the regex for mapping the columns in the ExtractText processor.
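To illustrate the kind of regex involved, here is a minimal Python sketch. It is not taken from the template: both patterns are assumptions based on the sample in the question (four unquoted, comma-separated values per line, and a control number made of the digits before the first underscore in the file name). NiFi's ExtractText uses Java regular expressions, so the same capture-group idea applies there, but the exact property setup in the template may differ.

```python
import re

# Hypothetical pattern: exactly four comma-separated values, no quoted fields.
CSV_LINE = re.compile(r"^([^,]*),([^,]*),([^,]*),([^,]*)$")

# Hypothetical pattern: the digits before the first underscore in the file name.
CONTROL_NUMBER = re.compile(r"^(\d+)_")

# Column names taken from the sample layout in the question.
COLUMN_NAMES = ("abc1", "abc2", "abc3", "abc4")


def parse_line(line: str) -> dict:
    """Map one CSV line to a dict of column name -> value (as strings)."""
    match = CSV_LINE.match(line.strip())
    if match is None:
        raise ValueError(f"line does not have four columns: {line!r}")
    return dict(zip(COLUMN_NAMES, match.groups()))


def extract_control_number(file_name: str) -> str:
    """Return the leading digits of a name like 12345_user_data.csv."""
    match = CONTROL_NUMBER.match(file_name)
    if match is None:
        raise ValueError(f"no control number in file name: {file_name!r}")
    return match.group(1)


if __name__ == "__main__":
    print(parse_line("1,2,3,4"))
    print(extract_control_number("12345_user_data.csv"))
```

A pattern this simple breaks on quoted fields that contain commas; for such files a record-based reader is likely a safer choice than a regex.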

https://github.com/steven-dfheinz/NiFi-Templates

Once you are able to parse the CSV into attributes, adding more attributes for the metadata and adding those details to the INSERT query should be very easy.
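As a rough sketch of that last step, the Python below combines the parsed columns with the three metadata values and builds a parameterized INSERT for the table layout given in the question. The function name `build_insert` is hypothetical, the `%s` placeholders follow the style used by common PostgreSQL drivers for Python, and nothing here is taken from the template itself. In NiFi the metadata would be flow file attributes rather than function arguments.

```python
# Column order follows the table layout in the question.
METADATA_COLUMNS = ["control_number", "file_name", "source"]
DATA_COLUMNS = ["abc1", "abc2", "abc3", "abc4"]


def build_insert(file_name: str, source: str, row: dict) -> tuple:
    """Return (sql, params) for one CSV row plus its file metadata.

    Assumes the control number is the part of the file name before
    the first underscore, as in 12345_user_data.csv.
    """
    control_number = file_name.split("_", 1)[0]
    columns = METADATA_COLUMNS + DATA_COLUMNS
    params = (control_number, file_name, source) + tuple(
        row[name] for name in DATA_COLUMNS
    )
    placeholders = ", ".join(["%s"] * len(columns))
    sql = (
        f"INSERT INTO User_Education ({', '.join(columns)}) "
        f"VALUES ({placeholders})"
    )
    return sql, params


if __name__ == "__main__":
    sample_row = {"abc1": "1", "abc2": "2", "abc3": "3", "abc4": "4"}
    sql, params = build_insert("12345_user_data.csv", "Newyork", sample_row)
    print(sql)
    print(params)
```

Passing the values as parameters instead of concatenating them into the SQL string avoids quoting problems and SQL injection if a CSV value contains a quote character.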

Hope this helps get you started. Additionally, if you search here, you will find loads of posts covering the other suggested methods for processing CSV to SQL.

Created 02-13-2020 04:19 PM

Hi @vikrant_kumar24,

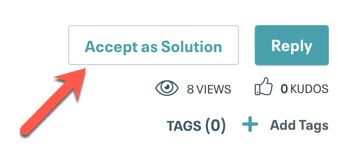

Looking over your most recent post, it appears that @stevenmatison solved your issue. Once you've had a chance to try out the files he's provided, can you confirm by using the Accept as Solution button at the bottom of his reply, so it can be of assistance to others?

Was your question answered? Make sure to mark the answer as the accepted solution.

If you find a reply useful, say thanks by clicking on the thumbs up button.

Created 02-13-2020 12:05 PM

Thank you very much