Segregation between compute node and data node

- Labels: Apache YARN, HDFS

Created 06-06-2021 11:01 PM

Hi team,

Could you please explain why we segregate compute nodes from storage nodes in the Hadoop world? Doesn't this break the data-locality philosophy? With this layout we only achieve intra-rack or inter-rack locality, not node-local locality. Also, what hurdles did the previous design, with the compute and data roles on the same node, run into?

Thanks

Created 07-01-2021 01:12 PM

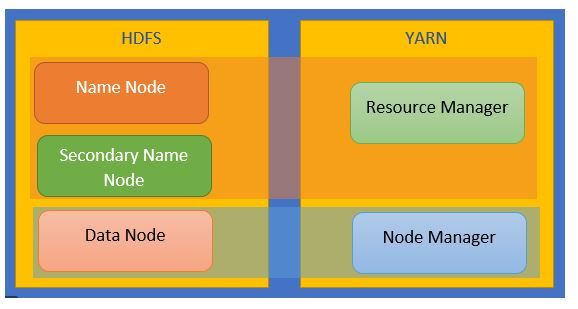

The NameNode [master] and DataNode [slave] are part of HDFS, the storage layer; the ResourceManager [master] and NodeManager [slave] are part of YARN, the resource negotiator. HDFS and YARN usually work together but are largely independent in design and architecture. Their slave processes, a DataNode and a NodeManager, nonetheless run together on the compute nodes.

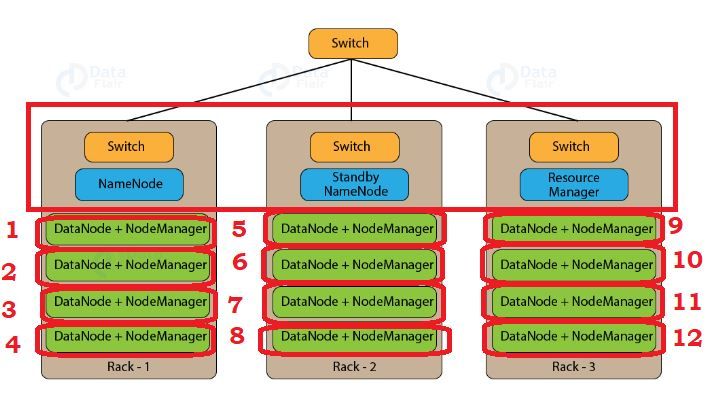

This is a high-level architecture of the RM and NM. The RM is the master process and the brain of Hadoop; the NM is its per-node agent.

Below is a standard layout of a Hadoop cluster, though we could easily have added a second RM for HA.

On the 12 compute nodes, the NM and DN are co-located for localized processing.

It's illogical to separate the DN and NM onto different nodes. The NodeManager is YARN's per-node agent and takes care of an individual compute node in a Hadoop cluster. Its duties include keeping the ResourceManager (RM) up to date with the status of jobs running on the node, overseeing container life-cycle management, monitoring the resource usage (memory, CPU) of individual containers, tracking node health, managing logs, and running auxiliary services that different YARN applications may use.

DataNode is the name of the daemon that stores and manages data in a Hadoop cluster. File data is replicated across multiple DataNodes for reliability and so that computation can run close to the data. That is why the DN and NM are co-located on the same VM/host.
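To make the locality argument concrete, here is a minimal Python sketch, not YARN's actual scheduler code. The `Host` class, the `locality_level` function, and the host and rack names are all hypothetical; the three labels simply mirror the node-local, rack-local, and off-switch terms commonly used when discussing YARN locality.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class Host:
    """Hypothetical description of a cluster host and its rack."""
    name: str
    rack: str


def locality_level(compute_host: Host, replica_hosts: Sequence[Host]) -> str:
    """Classify how close a container on compute_host is to a block's replicas."""
    # Best case: the container runs on a host that also stores a replica.
    if any(r.name == compute_host.name for r in replica_hosts):
        return "NODE_LOCAL"
    # Next best: a replica lives on another host in the same rack.
    if any(r.rack == compute_host.rack for r in replica_hosts):
        return "RACK_LOCAL"
    # Otherwise the data must cross racks to reach the container.
    return "OFF_SWITCH"


# One block with three replicas (illustrative host and rack names).
replicas = [Host("dn1", "/rack1"), Host("dn2", "/rack1"), Host("dn3", "/rack2")]

# DN and NM on the same host: node-local reads are possible.
colocated = locality_level(Host("dn1", "/rack1"), replicas)
# NM on a separate host that shares a rack with a replica.
separate_same_rack = locality_level(Host("nm7", "/rack2"), replicas)
# NM on a separate host in a rack that holds no replica.
separate_other_rack = locality_level(Host("nm9", "/rack3"), replicas)
```

In this sketch, a NodeManager that does not share a host with any DataNode can never reach the node-local case, which is the trade-off the original question asks about.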

It would be very interesting to see a screenshot of the roles co-located with your DataNodes.
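If a screenshot is not handy, the same information can be summarized from two host lists. The sketch below is only an illustration: `compare_roles` and the example hostnames are hypothetical, and it assumes you have already collected the DataNode and NodeManager hostnames yourself (for example from the output of `hdfs dfsadmin -report` and `yarn node -list -all`, or from the cluster manager UI).

```python
def compare_roles(datanode_hosts, nodemanager_hosts):
    """Group hosts by which of the two worker roles they run."""
    # Normalize names so "Worker1 " and "worker1" count as the same host.
    dn = {h.strip().lower() for h in datanode_hosts}
    nm = {h.strip().lower() for h in nodemanager_hosts}
    return {
        "co_located": sorted(dn & nm),        # hosts running both DN and NM
        "datanode_only": sorted(dn - nm),     # storage without compute
        "nodemanager_only": sorted(nm - dn),  # compute without storage
    }


# Illustrative host lists; replace them with the hosts from your own cluster.
report = compare_roles(
    ["worker1.example.com", "worker2.example.com", "storage1.example.com"],
    ["worker1.example.com", "worker2.example.com", "compute1.example.com"],
)
for group, hosts in report.items():
    print(f"{group}: {', '.join(hosts) or '(none)'}")
```

Any host that shows up under `datanode_only` or `nodemanager_only` is one where node-local processing cannot happen.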

Hope that gives you a clearer picture.

Created on 06-28-2021 06:35 AM - edited 06-28-2021 06:36 AM

@Faizan123 We are not segregating compute nodes and data nodes. A compute node is one that runs a NodeManager, whose YARN containers process the data; a data node runs the DataNode role, which stores the data. When you submit a job, YARN tries to create the task containers on the nodes where the data is located. Both roles can run on a single node.

Please let me know if you have any queries. Also mark "Accept as Solution" if my answer helps you!

Thanks

Shobika S

Created 06-28-2021 06:40 AM

But I have seen that the data nodes do not have the NodeManager role to process the data they hold, and the NodeManager nodes do not have the DataNode role. Yes, both roles can run on the same node, but at present they run on two different sets of nodes, so processing happens only on the NodeManager nodes.

Created 07-05-2021 09:28 AM

@Faizan123, have any of the replies helped resolve your issue? If so, can you kindly mark the appropriate reply as the solution? It will make it easier for others to find the answer in the future.

Regards,

Vidya Sargur, Community Manager

Created 07-06-2021 11:50 PM

Hi @Faizan123, I hope the replies provided by @Shelton or @shobikas have helped you resolve your issue. If so, can you kindly accept them as a solution?

Regards,

Vidya Sargur, Community Manager
