SplitContent is adding a newline in NiFi - why?

- Labels: Apache NiFi

Created on 07-13-2017 06:01 PM - edited 08-18-2019 02:09 AM


We currently have a flow in which we group log events by timestamp and split on the timestamp, so that stack trace errors, which contain newlines, stay together as a single event.

We are currently using SplitContent to split on "(newline)20", that is, a newline immediately followed by the characters 20.

This is the format of the logs:

    2017-07-13 01:00:00,123 Log data here
    2017-07-13 01:00:00,124 Log data here
    2017-07-13 01:00:00,125 Stack trace error here...
    Stack trace error ....
    Stack trace error....
    Stack trace error.....
    2017-07-13 01:00:00,126 Log data here

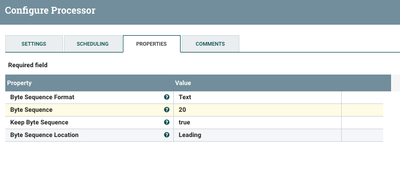

Splitting on "(newline)20" lets us keep everything between two timestamps as a single event, including the stack trace. Oddly enough, the events written to HDFS have an extra blank line between them. (This is not usually a big deal, but with 2-3 GB files we are seeing 100+ MB taken up by blank lines alone.) Our current workaround is a ReplaceText processor that removes all the blank lines, but it's obviously not optimal.
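For illustration, the blank-line cleanup described above amounts to collapsing runs of consecutive line breaks into one. The sketch below shows that idea in plain Python; it is not the actual ReplaceText configuration, and the function name `drop_blank_lines` and the sample string are made up for the example.

```python
import re


def drop_blank_lines(text: str) -> str:
    """Collapse two or more consecutive line breaks into a single newline."""
    return re.sub(r"(\r?\n){2,}", "\n", text)


# Hypothetical sample: two events separated by an unwanted blank line.
sample = (
    "2017-07-13 01:00:00,123 Log data here\n"
    "\n"
    "2017-07-13 01:00:00,124 Log data here\n"
)
cleaned = drop_blank_lines(sample)
print(cleaned)
```

A pass like this also removes any blank lines that legitimately appear inside a stack trace, and it means scanning the whole content a second time.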

Any suggestions are welcome. Please see screenshots.

Created 12-17-2018 03:29 PM


You are getting a newline in each split because of how you set the properties. Basically you are saying: find every (newline)20 in the content and split there (Byte Sequence: (newline)20), keep the byte sequence in each split (Keep Byte Sequence: true), and put it at the beginning of the split (Byte Sequence Location: Leading). As a result, every split starts with a newline.

You can split with Byte Sequence: 20 (without the newline) instead.
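The effect of those three properties can be modelled in a few lines of Python. This is only a simulation of the behaviour described in this reply, not NiFi code. The helper `split_keep_leading` is hypothetical, and the `b"\n".join(...)` step assumes that whatever writes the splits downstream puts a line break between them.

```python
from typing import List


def split_keep_leading(content: bytes, seq: bytes) -> List[bytes]:
    """Split at every occurrence of seq, keeping seq at the start of the next piece."""
    pieces = []
    start = 0
    idx = content.find(seq, start + 1)
    while idx != -1:
        pieces.append(content[start:idx])
        start = idx  # the kept byte sequence leads the next piece
        idx = content.find(seq, start + 1)
    pieces.append(content[start:])
    return pieces


log = (
    b"2017-07-13 01:00:00,123 Log data here\n"
    b"2017-07-13 01:00:00,125 Stack trace error here...\n"
    b"Stack trace error ....\n"
    b"2017-07-13 01:00:00,126 Log data here\n"
)

pieces = split_keep_leading(log, b"\n20")
for piece in pieces:
    print(repr(piece))  # every piece after the first begins with b"\n20"

# Assumption: the splits are later written out separated by a newline.
rejoined = b"\n".join(pieces)
print(b"\n\n" in rejoined)  # the leading newline shows up as a blank line

# One way to model dropping the leading line break from each piece.
trimmed = [p.lstrip(b"\r\n") for p in pieces]
```

The stack trace stays inside its event because only a newline followed by 20 triggers a split. A bare 20, by contrast, can also occur in the middle of a line (for example in a time such as 01:20:00), so it is worth checking the splits against real data before relying on it.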

Created 12-17-2018 05:04 PM


You may want to look into using the SplitRecord processor instead of SplitContent. You could use the GrokReader to split your log input. Here is a great article that includes a sample Grok pattern for the NiFi log format:

-

-
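As a rough sketch of the record-oriented idea, the Python below treats each line that starts with a timestamp as a new record and appends any other line to the previous record. It uses a plain regular expression rather than a Grok pattern, and the pattern, the field names `timestamp` and `message`, and the function `parse_records` are assumptions made for illustration only.

```python
import re

# Assumed line format: "YYYY-MM-DD HH:MM:SS,mmm <message>"
TIMESTAMP_LINE = re.compile(
    r"^(?P<timestamp>\d{4}-\d{2}-\d{2} \d{2}:\d{2}:\d{2},\d{3}) (?P<message>.*)$"
)


def parse_records(lines):
    """Group log lines into records, one per timestamped line."""
    records = []
    for raw in lines:
        line = raw.rstrip("\r\n")
        match = TIMESTAMP_LINE.match(line)
        if match:
            records.append(
                {"timestamp": match.group("timestamp"), "message": match.group("message")}
            )
        elif records:
            # Continuation line (e.g. stack trace): attach to the previous record.
            records[-1]["message"] += "\n" + line
        # Lines before the first timestamp are ignored in this sketch.
    return records


sample_lines = [
    "2017-07-13 01:00:00,123 Log data here\n",
    "2017-07-13 01:00:00,125 Stack trace error here...\n",
    "Stack trace error ....\n",
    "2017-07-13 01:00:00,126 Log data here\n",
]
records = parse_records(sample_lines)
for record in records:
    print(record)
```

Because each record carries its own fields, no separator bytes are left over to turn into blank lines when the records are written out.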

Thank you,

Matt