Unable to start Node Manager

Created on 12-18-2019 08:39 PM - last edited on 12-18-2019 09:44 PM by VidyaSargur

Hi @nsabharwal

Greetings.

Need your inputs and expertise on this topic.

Details:

1. I have configured a FAIR_TEST queue and set the Ordering to FAIR

2. Have added "fair-scheduler.xml" in HADOOP_CONF_DIR default path (/usr/hdp/3.1.0.0-78/hadoop/conf) and have set minResources and maxResources to 4 GB and 8 GB respectively.

3. Changed the Scheduler Class in Ambari to fair scheduler class and added a parameter "yarn.scheduler.fair.allocation.file" to point to the above XML file.

While restarting the affected YARN components in Ambari, I am getting the error below. Can you please let me know what's going wrong and how to fix this issue?

2019-12-19 09:48:17,762 INFO service.AbstractService (AbstractService.java:noteFailure(267)) - Service NodeManager failed in state INITED

java.lang.RuntimeException: java.lang.RuntimeException: class org.apache.hadoop.yarn.server.resourcemanager.scheduler.fair.FairScheduler not org.apache.hadoop.yarn.server.nodemanager.ContainerExecutor

at org.apache.hadoop.conf.Configuration.getClass(Configuration.java:2628)

at org.apache.hadoop.yarn.server.nodemanager.NodeManager.createContainerExecutor(NodeManager.java:347)

at org.apache.hadoop.yarn.server.nodemanager.NodeManager.serviceInit(NodeManager.java:389)

at org.apache.hadoop.service.AbstractService.init(AbstractService.java:164)

at org.apache.hadoop.yarn.server.nodemanager.NodeManager.initAndStartNodeManager(NodeManager.java:933)

at org.apache.hadoop.yarn.server.nodemanager.NodeManager.main(NodeManager.java:1013)

Caused by: java.lang.RuntimeException: class org.apache.hadoop.yarn.server.resourcemanager.scheduler.fair.FairScheduler not org.apache.hadoop.yarn.server.nodemanager.ContainerExecutor

at org.apache.hadoop.conf.Configuration.getClass(Configuration.java:2622)

fair-scheduler.xml

<configuration xmlns:xi="http://www.w3.org/2001/XInclude">

<allocations>

<queue name="FAIR-TEST">

<minResources>4096 mb,0vcores</minResources>

<maxResources>8192 mb,0vcores</maxResources>

<maxRunningApps>50</maxRunningApps>

<maxAMShare>0.1</maxAMShare>

<weight>30</weight>

<schedulingPolicy>fair</schedulingPolicy>

</queue>

<queuePlacementPolicy>

<rule name="specified" />

<rule name="default" queue="FAIR-TEST" />

</queuePlacementPolicy>

</allocations>

Created 12-19-2019 09:01 PM

Hi All,

Any updates on the below issue? I am facing a lot of hurdles in getting this fixed.

I would appreciate any quick inputs from anyone in fixing the problem.

@jsensharma @nsabharwal - I am a newbie to Cloudera Community and have seen that both of you are Gurus. Can you please help me in fixing this issue?

Thanks and Regards,

Sudhindra

Created 12-22-2019 11:11 PM

Hi All,

Can you please help?

I have been stuck on the same issue for almost a week. I would appreciate any quick help on this critical issue, which is blocking my tasks.

Thanks and Regards,

Sudhindra

Created 12-23-2019 12:54 AM

Created 12-26-2019 11:03 PM

Created 12-27-2019 04:33 PM

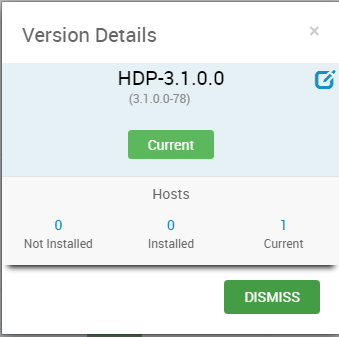

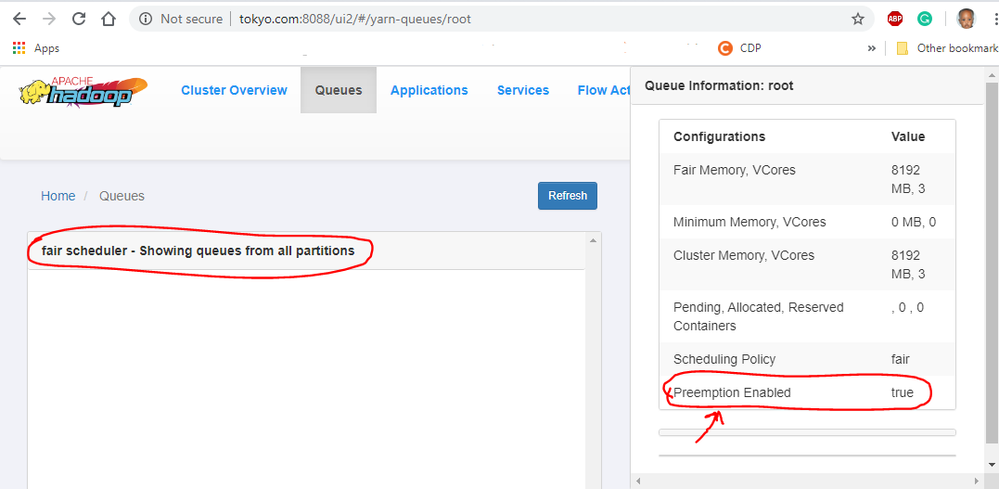

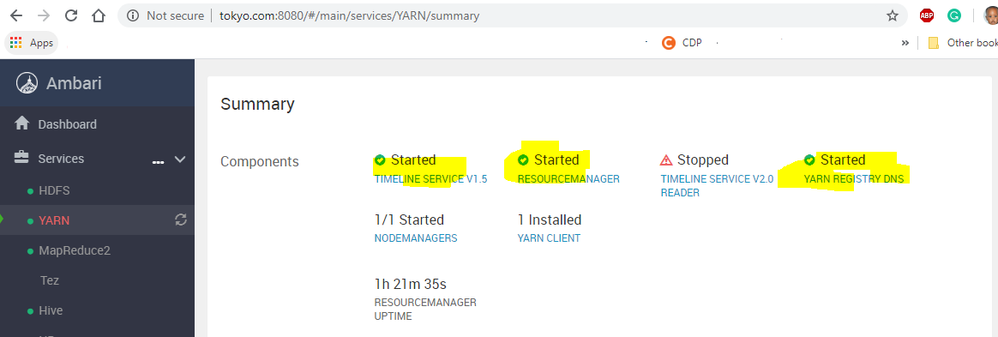

I successfully configured the fair scheduler on the below HDP version

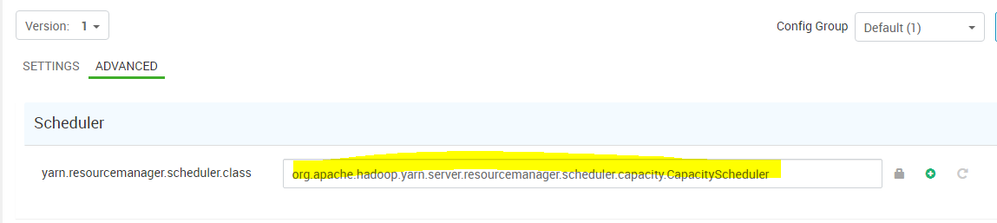

Original scheduler

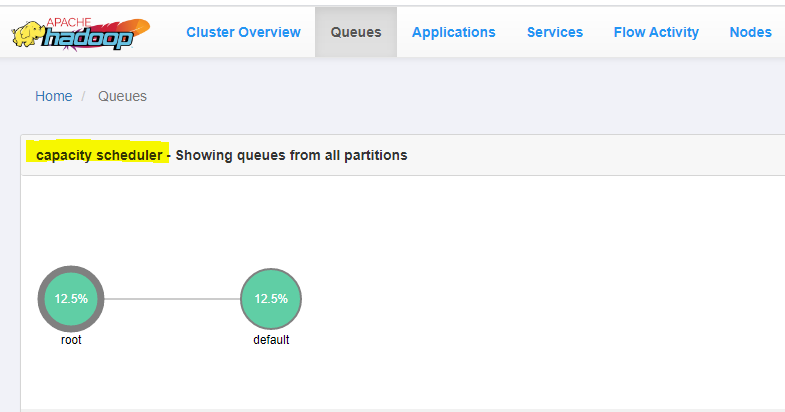

YARN UI

Default capacity scheduler after deployment of HDP

Pre-emption enabled before the change to fair-scheduler

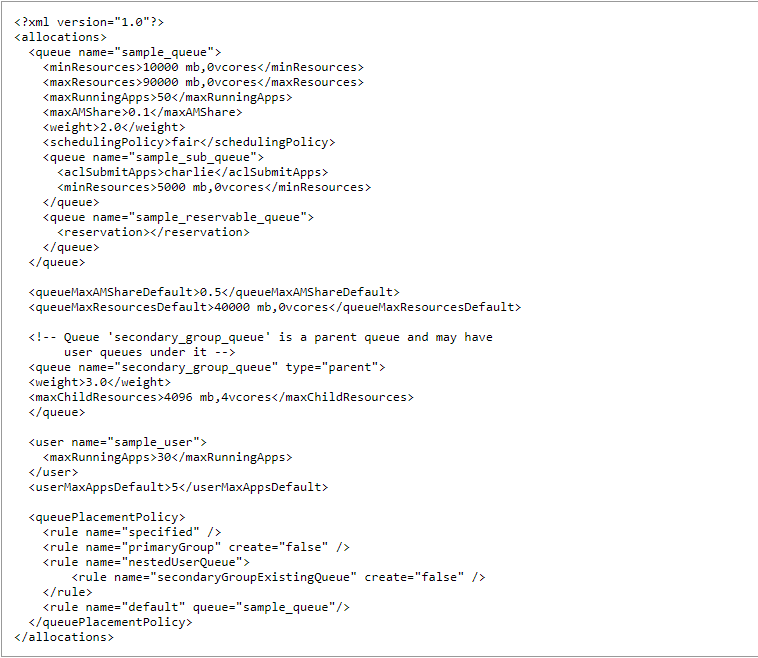

I grabbed the template fair-scheduler.xml, changed a few values for testing purposes, and made sure the XML is valid using an XML validator. I then copied fair-scheduler.xml to the $HADOOP_CONF directory and changed the owner and permissions:

# cd /usr/hdp/3.1.0.0-78/hadoop/conf

# chown hdfs:hadoop fair-scheduler.xml

# chmod 644 fair-scheduler.xml
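
The validity check mentioned above can also be done locally before restarting YARN. Below is a minimal sketch, not an official tool: it only checks that the file is well-formed XML, that the top-level element is `<allocations>` (the root element the Fair Scheduler allocation file format uses), and that every queue has a name. The function name `check_allocation_file` is made up for illustration.

```python
import xml.etree.ElementTree as ET


def check_allocation_file(xml_text):
    """Return a list of problems found in a Fair Scheduler allocation file."""
    try:
        root = ET.fromstring(xml_text)
    except ET.ParseError as err:
        # Not well-formed: unclosed tags, stray characters, and so on.
        return ["not well-formed XML: %s" % err]

    problems = []
    # The allocation file is expected to start with <allocations>,
    # not with the <configuration> wrapper used by yarn-site.xml.
    if root.tag != "allocations":
        problems.append(
            "top-level element is <%s>, expected <allocations>" % root.tag
        )
    # Every queue needs a name attribute.
    for queue in root.iter("queue"):
        if not queue.get("name"):
            problems.append("a <queue> element has no name attribute")
    return problems


if __name__ == "__main__":
    sample = """<allocations>
      <queue name="FAIR-TEST">
        <maxRunningApps>50</maxRunningApps>
      </queue>
    </allocations>"""
    print(check_allocation_file(sample) or "no problems found")
```

Run against the file from the first post, a check like this would flag the `<configuration>` wrapper, which is worth ruling out before looking at anything else.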

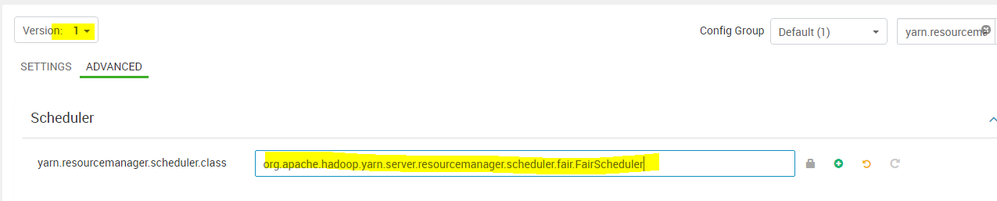

Changed the scheduler class in yarn-site.xml (see the attached screenshot).

From: yarn.resourcemanager.scheduler.class=org.apache.hadoop.yarn.server.resourcemanager.scheduler.capacity.CapacityScheduler

To: yarn.resourcemanager.scheduler.class=org.apache.hadoop.yarn.server.resourcemanager.scheduler.fair.FairScheduler
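
The stack trace in the first post ("class ...fair.FairScheduler not ...nodemanager.ContainerExecutor") is raised by Hadoop's Configuration.getClass while the NodeManager creates its container executor. One plausible cause, which the thread does not confirm, is that the FairScheduler class name was entered under yarn.nodemanager.container-executor.class instead of yarn.resourcemanager.scheduler.class. A rough sketch of a check for that, assuming you have exported the effective yarn-site.xml as text (the helper names are hypothetical):

```python
import xml.etree.ElementTree as ET

SCHEDULER_KEY = "yarn.resourcemanager.scheduler.class"
EXECUTOR_KEY = "yarn.nodemanager.container-executor.class"


def read_properties(site_xml_text):
    """Parse Hadoop-style <configuration><property> XML into a dict."""
    root = ET.fromstring(site_xml_text)
    props = {}
    for prop in root.iter("property"):
        name = prop.findtext("name")
        if name:
            props[name.strip()] = (prop.findtext("value") or "").strip()
    return props


def misplaced_scheduler(props):
    """True if a ResourceManager scheduler class is set as the container executor."""
    executor = props.get(EXECUTOR_KEY, "")
    return ".resourcemanager.scheduler." in executor


if __name__ == "__main__":
    # Illustrative input only: this reproduces the suspected mistake.
    bad_site = """<configuration>
      <property>
        <name>yarn.nodemanager.container-executor.class</name>
        <value>org.apache.hadoop.yarn.server.resourcemanager.scheduler.fair.FairScheduler</value>
      </property>
    </configuration>"""
    print(misplaced_scheduler(read_properties(bad_site)))
```

If the check returns True, moving the class name to yarn.resourcemanager.scheduler.class and restoring the original container executor value would be the first thing to try.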

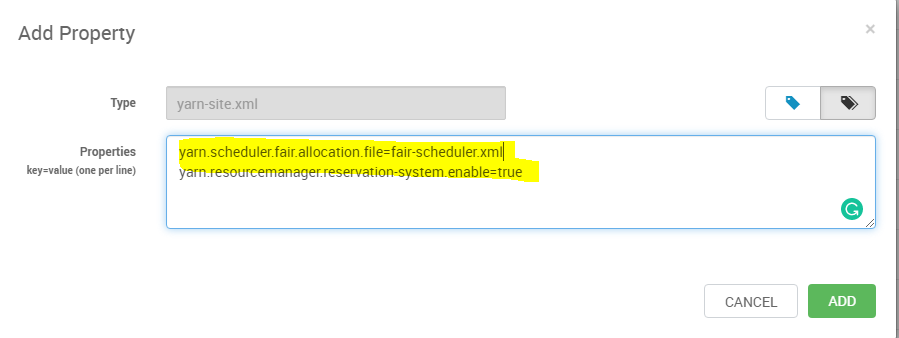

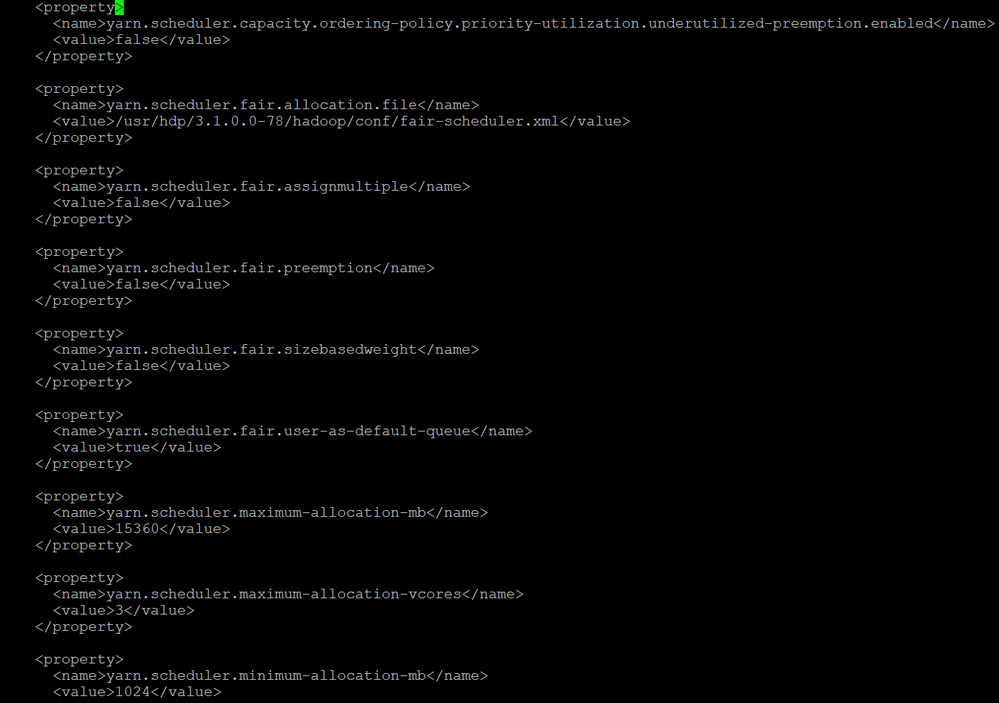

Added this new value in Custom yarn-site. The relative path resolves against the default /usr/hdp/3.1.0.0-78/hadoop/conf:

yarn.scheduler.fair.allocation.file=fair-scheduler.xml

Custom yarn-site.xml

Changed the below mandatory parameter to enable the ReservationSystem in the ResourceManager, which is not enabled by default:

yarn.resourcemanager.reservation-system.enable=true

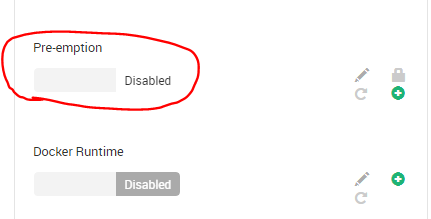

Disable pre-emption

Set the below properties as shown.

The yarn-site.xml file contains parameters that determine scheduler-wide options, including the properties below. If they don't exist, add them in the custom yarn-site.

Note: The below property was already available, so I didn't add it.

yarn.scheduler.capacity.ordering-policy.priority-utilization.underutilized-preemption.enabled=false

Properties to verify

For my testing I didn't add the below properties. You will notice above that, despite disabling pre-emption in the Ambari UI, the fair scheduler shows it as enabled [True] and my queues aren't showing, so I need to check my fair-scheduler.xml. Attached is the template I used.

yarn.scheduler.fair.assignmultiple=false

yarn.scheduler.fair.sizebasedweight=false

yarn.scheduler.fair.user-as-default-queue=true

yarn.scheduler.fair.preemption=false

Note: Do not use preemption when FairScheduler DominantResourceFairness is in use and node labels are present.

All in all, this shows the fair-scheduler configuration is doable and my RM is up and running !!

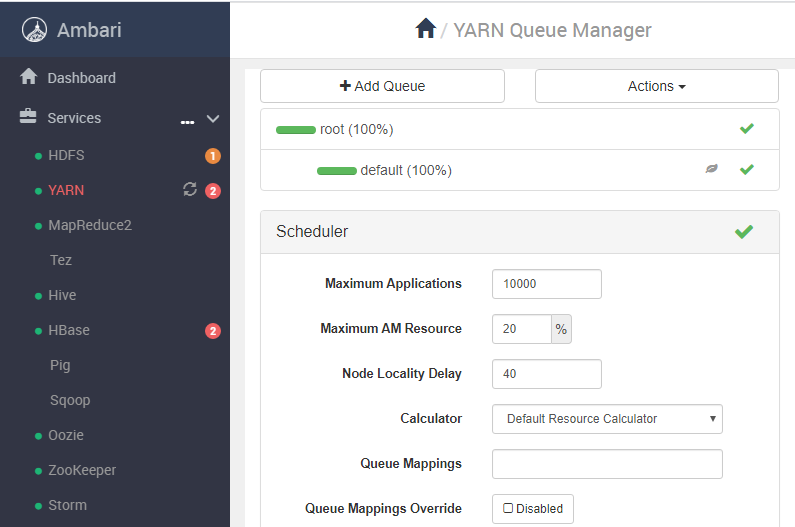

I also noticed that the above fair-scheduler template was overwritten when I checked the YARN Queue Manager, so that now allows me to configure a new valid fair-scheduler.

Happy Hadooping

Created 12-30-2019 01:50 AM

Hi @Shelton,

Thanks for the reply. I have been able to change the scheduling mode to Fair-Scheduler, which is great.

However, my application is not running, due to a resource allocation issue. I am getting the below standard error.

[Mon Dec 30 15:02:19 +0530 2019] Application is added to the scheduler and is not yet activated. (Resource request: <memory:1024, vCores:1> exceeds maximum AM resource allowed).

I am attaching all the relevant screenshots as well as information about my YARN cluster for your reference.

Please guide me in fixing this issue. Why does this issue usually occur?

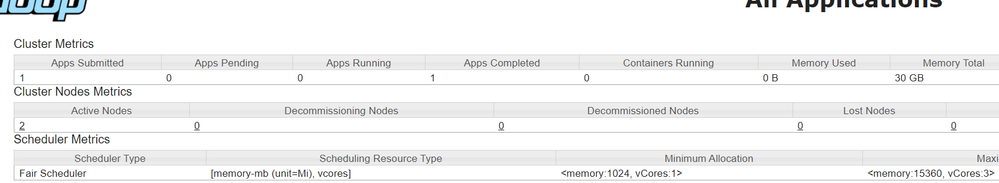

My YARN cluster has 2 nodes, scheduling mode as "Fair-Scheduler", minimum allocation of 1 GB/1 vcores and maximum allocation of 15GB/3 vcores and overall memory is 30GB.

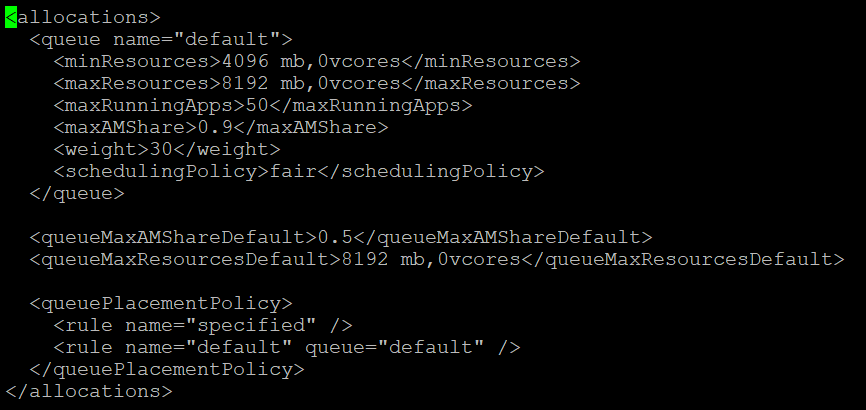

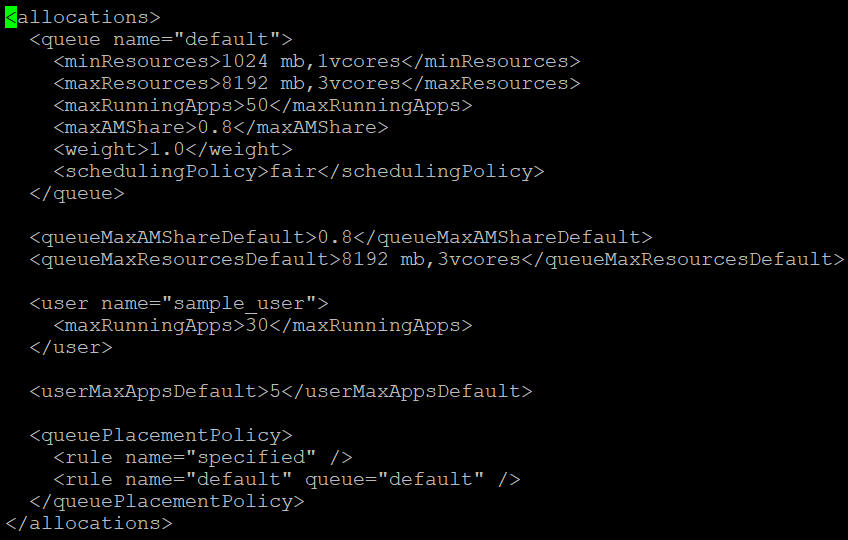

Given below are the contents of "fair-scheduler.xml":

Below are the custom yarn-site parameters that have been set. Preemption is disabled as well.

Created 01-01-2020 01:42 AM

Hi @Shelton,

Please help me on this. I am again stuck on the issue. Did I wrongly configure anything?

Also, even after setting preemption to false, in the YARN Resource Manager UI, I am able to see that the preemption is still enabled. Is this causing the problem?

Thanks and Regards,

Sudhindra

Created 01-01-2020 04:29 AM

Hi @Shelton ,

I have made some progress on this issue. I have modified the fair-scheduler.xml and have set both "maxAMShare" and "queueMaxAMShareDefault" to 0.8 and weight to default value (1.0).

The result: one Spark job is running fine. However, when I try to run the next job, I get the same error as before about exceeding the maximum AM resource limit.
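
The Fair Scheduler documentation describes maxAMShare as the fraction of a queue's fair share that application masters may use. Here is a minimal sketch of that arithmetic, for memory only. It assumes the queue's share equals the 8192 MB maxResources from the first post; the real fair share may be lower, and the function names are made up for illustration.

```python
def max_am_resource_mb(queue_share_mb, max_am_share):
    """Memory (MB) that application masters may use, as a fraction of the queue's share."""
    return int(queue_share_mb * max_am_share)


def can_start_am(queue_share_mb, max_am_share, am_in_use_mb, am_request_mb):
    """True if one more AM of am_request_mb fits under the queue's AM limit."""
    limit = max_am_resource_mb(queue_share_mb, max_am_share)
    return am_in_use_mb + am_request_mb <= limit


if __name__ == "__main__":
    # First configuration in the thread: maxAMShare 0.1, AM request of 1024 MB.
    # Assumption: the queue's share is the full 8192 MB maxResources.
    print(max_am_resource_mb(8192, 0.1))      # 819
    print(can_start_am(8192, 0.1, 0, 1024))   # False: 1024 MB > 819 MB

    # Hypothetical numbers for a second job: a 4096 MB share, maxAMShare 0.8,
    # and AMs of 2048 MB each. The first AM fits, the second does not.
    print(can_start_am(4096, 0.8, 0, 2048))     # True
    print(can_start_am(4096, 0.8, 2048, 2048))  # False
```

Under these assumptions the first error is expected: 10% of 8192 MB is less than a single 1024 MB AM. AMs that are already running count against the same limit, which is one way a second job can be rejected even after the share is raised.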

The modified fair-scheduler.xml is given below. Please provide your inputs on how to fix this particular issue.

Also, one interesting observation is that, even though the YARN Scheduling mode is showing as "Fair", the Spark Scheduling mode is still showing as "FIFO". Can I set it to "Fair" as well through the program? Since I am setting spark.master as "YARN", I believe the Fair scheduling mode will take precedence over the Spark scheduling mode. Please correct me if I am wrong.

Created 01-01-2020 05:17 AM

queueMaxAMShareDefault and maxAMShare are mutually exclusive, because queueMaxAMShareDefault is overridden by the maxAMShare element in each queue.

Can you decrease queueMaxAMShareDefault or maxAMShare to 0.1 and set weight to 2.0?

For Spark, create fairscheduler.xml from fairscheduler.xml.template.

Your path might be different depending on your version (3.1.x.x.x).

# cp /usr/hdp/3.1.x.x-xx/etc/spark2/conf/fairscheduler.xml.template fairscheduler.xml

Please check the file permissions.

Then either set the spark.scheduler.allocation.file property in your SparkConf or put a file named fairscheduler.xml on the classpath.
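
On the question of the Spark UI still showing FIFO: Spark's spark.scheduler.mode property defaults to FIFO and controls how jobs inside one Spark application are scheduled, separately from how YARN schedules applications. A small sketch of the two settings involved, rendered as spark-submit `--conf` arguments. The helper names and the example path are hypothetical.

```python
def fair_scheduler_conf(allocation_file):
    """Spark properties that switch in-application scheduling to FAIR."""
    return {
        "spark.scheduler.mode": "FAIR",
        "spark.scheduler.allocation.file": allocation_file,
    }


def as_submit_args(conf):
    """Render a dict of Spark properties as spark-submit --conf arguments."""
    args = []
    for key, value in sorted(conf.items()):
        args += ["--conf", "%s=%s" % (key, value)]
    return args


if __name__ == "__main__":
    # Placeholder path: point this at your own copy of fairscheduler.xml.
    print(" ".join(as_submit_args(fair_scheduler_conf("/path/to/fairscheduler.xml"))))
```

The same two keys can be set on a SparkConf in the program instead of on the command line.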

Note that any pools not configured in the XML file will simply get default values for all settings (scheduling mode FIFO, weight 1, and minShare 0).

The fairscheduler.xml.template has 2 default pools, production and test, using FAIR and FIFO respectively:

<allocations>

<pool name="production">

<schedulingMode>FAIR</schedulingMode>

<weight>1</weight>

<minShare>2</minShare>

</pool>

<pool name="test">

<schedulingMode>FIFO</schedulingMode>

<weight>2</weight>

<minShare>3</minShare>

</pool>

</allocations>
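
To see which settings each pool ends up with, including the defaults noted above (FIFO, weight 1, minShare 0), a minimal sketch that reads a fairscheduler.xml string. The function name `pool_settings` is made up for illustration, and this is not how Spark itself loads the file.

```python
import xml.etree.ElementTree as ET

# Defaults for any setting a pool does not specify.
DEFAULTS = {"schedulingMode": "FIFO", "weight": "1", "minShare": "0"}


def pool_settings(xml_text):
    """Map each pool name to its settings, filling in defaults where missing."""
    root = ET.fromstring(xml_text)
    pools = {}
    for pool in root.iter("pool"):
        settings = dict(DEFAULTS)
        for key in DEFAULTS:
            value = pool.findtext(key)
            if value is not None:
                settings[key] = value.strip()
        pools[pool.get("name")] = settings
    return pools


if __name__ == "__main__":
    template = """<allocations>
      <pool name="production">
        <schedulingMode>FAIR</schedulingMode>
        <weight>1</weight>
        <minShare>2</minShare>
      </pool>
      <pool name="test">
        <schedulingMode>FIFO</schedulingMode>
        <weight>2</weight>
        <minShare>3</minShare>
      </pool>
    </allocations>"""
    for name, settings in pool_settings(template).items():
        print(name, settings)
```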

Without any intervention, newly submitted jobs go into a default pool, but jobs’ pools can be set by adding the spark.scheduler.pool “local property” to the SparkContext in the thread that’s submitting them. This is done as follows:

// Assuming sc is your SparkContext variable; this selects the "production" pool, which uses FAIR

sc.setLocalProperty("spark.scheduler.pool", "production")

Please let me know