Cloudera Community › Support Questions


Zeppelin Livy interpreter not working with a Kerberized cluster on HDP 2.5.3

Labels: Apache Spark, Apache Zeppelin

Created on 12-30-2016 03:59 AM - edited 08-19-2019 05:00 AM

HDP 2.5.3 cluster, Kerberized with an MIT KDC, using OpenLDAP as the directory service. Ranger and Atlas are installed and working properly.

Zeppelin is installed on node5, and the Livy server on node1.

I enabled LDAP for user authentication with the following Shiro config. Logging in as an LDAP user works fine.

    [users]
    # List of users with their password allowed to access Zeppelin.
    # To use a different strategy (LDAP / Database / ...) check the shiro doc at http://shiro.apache.org/configuration.html#Configuration-INISections
    #admin = password1
    #user1 = password2, role1, role2
    #user2 = password3, role3
    #user3 = password4, role2

    # Sample LDAP configuration, for user Authentication, currently tested for single Realm
    [main]
    #activeDirectoryRealm = org.apache.zeppelin.server.ActiveDirectoryGroupRealm
    #activeDirectoryRealm.systemUsername = CN=Administrator,CN=Users,DC=HW,DC=EXAMPLE,DC=COM
    #activeDirectoryRealm.systemPassword = Password1!
    #activeDirectoryRealm.hadoopSecurityCredentialPath = jceks://user/zeppelin/zeppelin.jceks
    #activeDirectoryRealm.searchBase = CN=Users,DC=HW,DC=TEST,DC=COM
    #activeDirectoryRealm.url = ldap://ad-nano.test.example.com:389
    #activeDirectoryRealm.groupRolesMap = ""
    #activeDirectoryRealm.authorizationCachingEnabled = true
    ldapRealm = org.apache.shiro.realm.ldap.JndiLdapRealm
    ldapRealm.userDnTemplate = uid={0},ou=Users,dc=field,dc=hortonworks,dc=com
    ldapRealm.contextFactory.url = ldap://node5:389
    ldapRealm.contextFactory.authenticationMechanism = SIMPLE
    sessionManager = org.apache.shiro.web.session.mgt.DefaultWebSessionManager
    securityManager.sessionManager = $sessionManager
    # 86,400,000 milliseconds = 24 hour
    securityManager.sessionManager.globalSessionTimeout = 86400000
    shiro.loginUrl = /api/login

    [urls]
    # anon means the access is anonymous.
    # authcBasic means Basic Auth Security
    # To enfore security, comment the line below and uncomment the next one
    /api/version = anon
    #/** = anon
    /** = authc
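For reference, the active realm in this config builds each user's bind DN by substituting the login name for {0} in ldapRealm.userDnTemplate. The Python sketch below mimics that substitution under a simplifying assumption (no LDAP escaping of the username) and checks the session-timeout arithmetic from the config comment. The helper name user_dn is hypothetical and not part of Shiro.

```python
# Sketch: how a Shiro JndiLdapRealm-style userDnTemplate maps a login name
# to an LDAP bind DN. The "{0}" placeholder is replaced by the username.
USER_DN_TEMPLATE = "uid={0},ou=Users,dc=field,dc=hortonworks,dc=com"

# globalSessionTimeout is given in milliseconds: 24 h * 60 min * 60 s * 1000 ms.
SESSION_TIMEOUT_MS = 24 * 60 * 60 * 1000


def user_dn(username: str, template: str = USER_DN_TEMPLATE) -> str:
    """Return the bind DN for username (hypothetical helper, no LDAP escaping)."""
    return template.replace("{0}", username)


if __name__ == "__main__":
    print(user_dn("hadoopadmin"))
    print(SESSION_TIMEOUT_MS)
```

With the template above, user_dn("hadoopadmin") gives uid=hadoopadmin,ou=Users,dc=field,dc=hortonworks,dc=com, which is a quick way to confirm the DN matches the entry actually stored in OpenLDAP.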

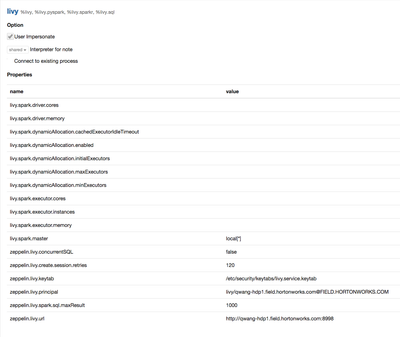

Then I tried to configure the Livy interpreter in Zeppelin with the following settings. Note that the Kerberos keytab was copied from node1, and the principal also uses node1's hostname.

However, I keep getting a Connection refused error in Zeppelin when running sc.version:

    %livy
    sc.version

    java.net.ConnectException: Connection refused
        at java.net.PlainSocketImpl.socketConnect(Native Method)
        at java.net.AbstractPlainSocketImpl.doConnect(AbstractPlainSocketImpl.java:350)
        at java.net.AbstractPlainSocketImpl.connectToAddress(AbstractPlainSocketImpl.java:206)
        at java.net.AbstractPlainSocketImpl.connect(AbstractPlainSocketImpl.java:188)
        at java.net.SocksSocketImpl.connect(SocksSocketImpl.java:392)
        at java.net.Socket.connect(Socket.java:589)
        at org.apache.thrift.transport.TSocket.open(TSocket.java:182)
        at org.apache.zeppelin.interpreter.remote.ClientFactory.create(ClientFactory.java:51)
        at org.apache.zeppelin.interpreter.remote.ClientFactory.create(ClientFactory.java:37)
        at org.apache.commons.pool2.BasePooledObjectFactory.makeObject(BasePooledObjectFactory.java:60)
        at org.apache.commons.pool2.impl.GenericObjectPool.create(GenericObjectPool.java:861)
        at org.apache.commons.pool2.impl.GenericObjectPool.borrowObject(GenericObjectPool.java:435)
        at org.apache.commons.pool2.impl.GenericObjectPool.borrowObject(GenericObjectPool.java:363)
        at org.apache.zeppelin.interpreter.remote.RemoteInterpreterProcess.getClient(RemoteInterpreterProcess.java:189)
        at org.apache.zeppelin.interpreter.remote.RemoteInterpreter.init(RemoteInterpreter.java:173)
        at org.apache.zeppelin.interpreter.remote.RemoteInterpreter.getFormType(RemoteInterpreter.java:338)
        at org.apache.zeppelin.interpreter.LazyOpenInterpreter.getFormType(LazyOpenInterpreter.java:105)
        at org.apache.zeppelin.notebook.Paragraph.jobRun(Paragraph.java:262)
        at org.apache.zeppelin.scheduler.Job.run(Job.java:176)
        at org.apache.zeppelin.scheduler.RemoteScheduler$JobRunner.run(RemoteScheduler.java:328)
        at java.util.concurrent.Executors$RunnableAdapter.call(Executors.java:511)
        at java.util.concurrent.FutureTask.run(FutureTask.java:266)
        at java.util.concurrent.ScheduledThreadPoolExecutor$ScheduledFutureTask.access$201(ScheduledThreadPoolExecutor.java:180)
        at java.util.concurrent.ScheduledThreadPoolExecutor$ScheduledFutureTask.run(ScheduledThreadPoolExecutor.java:293)
        at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1142)
        at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:617)
        at java.lang.Thread.run(Thread.java:745)

Does this error mean SSL must be enabled?
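A refused connection is reported at the TCP layer, before any SSL/TLS handshake begins, so it indicates that nothing was listening on the target port rather than a missing SSL setting. The trace fails in org.apache.thrift.transport.TSocket.open, called from Zeppelin's RemoteInterpreterProcess, which suggests Zeppelin could not reach its own interpreter process rather than Livy. A minimal Python sketch (standard library only) for checking whether a host and port accept TCP connections; the helper name port_accepts_tcp is hypothetical.

```python
import socket


def port_accepts_tcp(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a plain TCP connection to host:port succeeds.

    A ConnectionRefusedError means the host answered but nothing was
    listening on that port; no TLS negotiation has happened at that point.
    """
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers ConnectionRefusedError, timeouts and name-resolution errors.
        return False
```

For example, port_accepts_tcp("node1", 8998) run from node5 would show whether a Livy server on its upstream default port 8998 is reachable at all (the host and port are assumptions; check your Livy config).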


Created 12-30-2016 04:06 AM

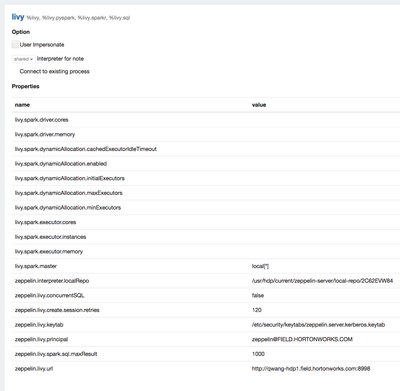

@Qi Wang I see that you have Impersonate enabled. Can you try with that option unset in the interpreter config?

Setting Impersonate requires passwordless SSH to be set up between the zeppelin user and the user who logs into the Zeppelin UI, on your Zeppelin host.

Please try with the Impersonate option unset in the Livy interpreter.
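One way to test the passwordless-SSH requirement is to attempt a non-interactive login as the zeppelin OS user on the Zeppelin host. A Python sketch, assuming the OpenSSH client is installed; ssh -o BatchMode=yes makes ssh fail instead of prompting for a password. The helper names and the example user and host (hadoopadmin, node5) are hypothetical.

```python
import subprocess


def ssh_batch_command(user: str, host: str) -> list[str]:
    """Build an ssh command that fails instead of prompting for a password.

    BatchMode=yes disables interactive prompts, so the remote "true" only
    runs (exit status 0) when key-based, passwordless login works.
    """
    return ["ssh", "-o", "BatchMode=yes", f"{user}@{host}", "true"]


def has_passwordless_ssh(user: str, host: str, timeout: float = 10.0) -> bool:
    """Run the check; True only if ssh exits with status 0 within timeout."""
    try:
        result = subprocess.run(ssh_batch_command(user, host),
                                capture_output=True, timeout=timeout)
    except (OSError, subprocess.TimeoutExpired):
        return False
    return result.returncode == 0
```

Run as the zeppelin user, has_passwordless_ssh("hadoopadmin", "node5") returning False would suggest the Impersonate setting cannot work as configured.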

Created on 01-03-2017 03:32 AM - edited 08-19-2019 04:59 AM

So I updated the Zeppelin interpreter settings based on the feedback.

Now I am getting a "Cannot start Spark" error:

    %livy
    sc.version

    Cannot start spark.

Created 01-03-2017 04:30 AM

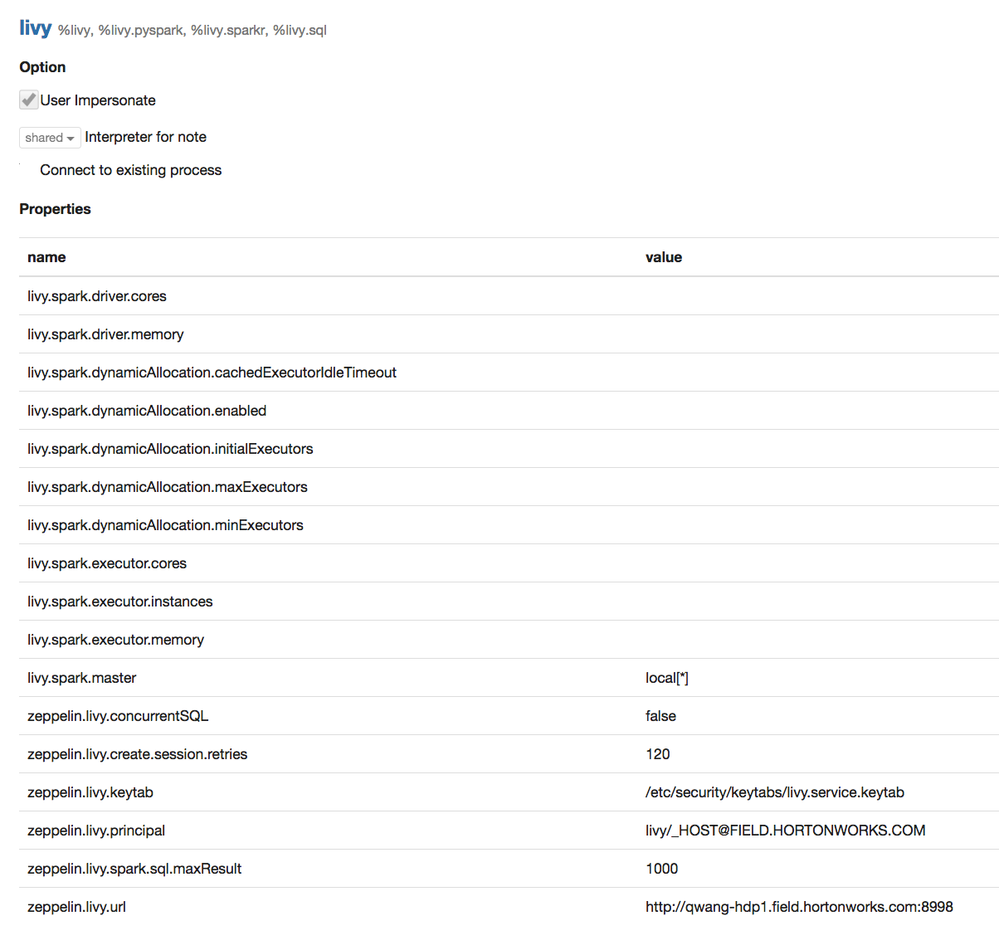

Also, please set livy.spark.master to yarn-cluster so the Spark job is launched on YARN.

Created 01-03-2017 06:30 PM

Still getting the same error after setting

livy.spark.master=yarn-cluster

and the log in /var/log/spark/ doesn't contain much information:

    17/01/03 18:16:54 INFO SecurityManager: Changing view acls to: spark
    17/01/03 18:16:54 INFO SecurityManager: Changing modify acls to: spark
    17/01/03 18:16:54 INFO SecurityManager: SecurityManager: authentication disabled; ui acls disabled; users with view permissions: Set(spark); users with modify permissions: Set(spark)
    17/01/03 18:16:54 INFO FsHistoryProvider: Replaying log path: hdfs://qwang-hdp0.field.hortonworks.com:8020/spark-history/local-1483414292027
    17/01/03 18:16:54 INFO SecurityManager: Changing acls enabled to: false
    17/01/03 18:16:54 INFO SecurityManager: Changing admin acls to:
    17/01/03 18:16:54 INFO SecurityManager: Changing view acls to: hadoopadmin

I am also seeing the following error in the Livy log. I use the hadoopadmin user to log into Zeppelin. Which authentication step caused this failure?

    INFO: Caused by: org.apache.hadoop.security.authentication.client.AuthenticationException: Authentication failed, URL: http://qwang-hdp4.field.hortonworks.com:9292/kms/v1/?op=GETDELEGATIONTOKEN&doAs=hadoopadmin&renewer=..., status: 403, message: Forbidden

Created 01-03-2017 09:56 PM

If you authenticate as user FOO via LDAP in Zeppelin and then use Zeppelin to launch a %livy.spark notebook as FOO, you are using Livy impersonation. (This is different from Zeppelin's own impersonation, which is recommended only for the shell interpreter, not for the Livy interpreter.)
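Livy impersonation shows up in Livy's REST API: a client creates an interactive session with POST /sessions and a proxyUser field naming the end user, and Livy then runs the Spark job as that user. A minimal Python sketch that only builds such a request; the Livy URL and the helper name livy_session_request are assumptions. On a Kerberized cluster the call would also need SPNEGO authentication, which plain urllib does not provide, so sending is deliberately left out.

```python
import json
import urllib.request


def livy_session_request(livy_url: str, proxy_user: str,
                         kind: str = "spark") -> urllib.request.Request:
    """Build (but do not send) a Livy POST /sessions request that starts an
    interactive session on behalf of proxy_user."""
    body = {"kind": kind, "proxyUser": proxy_user}
    return urllib.request.Request(
        url=livy_url.rstrip("/") + "/sessions",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
```

For example, livy_session_request("http://node1:8998", "FOO") describes a session that Livy would run as FOO, which is why FOO must exist on the cluster.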

User FOO must also exist in the Hadoop cluster, because the jobs will ultimately run as that user.

HDP 2.5.3 should already have all of these configs set up for you. It's a bug that livy.spark.master in Zeppelin is not set to yarn-cluster.

Next, Livy should use the Livy keytab and Zeppelin should use the Zeppelin keytab. The zeppelin user needs to be configured as a Livy superuser (livy.superusers) in the Livy config.
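The two Livy-side requirements in this thread, impersonation enabled and the zeppelin user listed as a Livy superuser, can be checked mechanically. A Python sketch, assuming a livy.conf-style file of key=value lines; it reads only livy.impersonation.enabled (mentioned later in this thread) and livy.superusers (upstream Livy's comma-separated superuser list). The parser is deliberately simplified and the helper names are hypothetical.

```python
def parse_livy_conf(text: str) -> dict[str, str]:
    """Parse 'key = value' / 'key=value' lines, skipping blanks and # comments."""
    conf = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue
        key, sep, value = line.partition("=")
        if sep:
            conf[key.strip()] = value.strip()
    return conf


def livy_allows_zeppelin(conf: dict[str, str],
                         zeppelin_user: str = "zeppelin") -> bool:
    """True if impersonation is on and zeppelin_user is in livy.superusers."""
    impersonation = conf.get("livy.impersonation.enabled", "false").lower() == "true"
    superusers = {u.strip()
                  for u in conf.get("livy.superusers", "").split(",")
                  if u.strip()}
    return impersonation and zeppelin_user in superusers
```

Feeding it the contents of the Livy config on node1 would flag a missing superuser entry even when impersonation itself is enabled.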

The livy user should be configured as a proxy user in core-site.xml so that YARN/HDFS allow it to impersonate other users (in this case hadoopadmin) when submitting Spark jobs.
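In core-site.xml, a proxy user is declared with a pair of properties, hadoop.proxyuser.<superuser>.hosts and hadoop.proxyuser.<superuser>.groups. A Python sketch that generates those entries with the standard-library ElementTree; the helper name proxyuser_properties is hypothetical and the "*" defaults mirror the values used later in this thread.

```python
import xml.etree.ElementTree as ET


def proxyuser_properties(superuser: str, hosts: str = "*",
                         groups: str = "*") -> ET.Element:
    """Return a <configuration> element holding the hosts/groups proxy-user
    properties Hadoop reads for `superuser`."""
    config = ET.Element("configuration")
    for suffix, value in (("hosts", hosts), ("groups", groups)):
        prop = ET.SubElement(config, "property")
        ET.SubElement(prop, "name").text = f"hadoop.proxyuser.{superuser}.{suffix}"
        ET.SubElement(prop, "value").text = value
    return config


if __name__ == "__main__":
    print(ET.tostring(proxyuser_properties("livy"), encoding="unicode"))
```

The "*" wildcards are the most permissive choice; restricting hosts to the Livy server host and groups to the groups of the impersonated users is tighter.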

If the Zeppelin-to-Livy connection fails, you will see an exception in Zeppelin and logs in Livy. If it succeeds, Livy then tries to submit the job; if that fails, the exception appears in the Livy logs. Based on the exception in your last comment, it appears that the livy user is not properly configured as a proxy user in core-site.xml. You can check this in the Hadoop configs; if you change it, you may have to restart the affected services. In HDP 2.5.3 this should already have been done for you during the Livy installation via Ambari.

Created 01-04-2017 02:57 AM

@Bikas Really appreciate your detailed explanation.

I have the following in the Spark config. Do I need anything else for Livy impersonation?

livy.impersonation.enabled=true

Also, in Ambari under HDFS => Custom core-site, I have:

    hadoop.proxyuser.zeppelin.hosts=*
    hadoop.proxyuser.zeppelin.groups=*
    hadoop.proxyuser.livy.hosts=*
    hadoop.proxyuser.livy.groups=*

Also, if I kinit as the same user in the console, I can start spark-shell fine:

    [root@qwang-hdp1 ~]# klist
    Ticket cache: FILE:/tmp/krb5cc_0
    Default principal: hadoopadmin@FIELD.HORTONWORKS.COM

    Valid starting       Expires              Service principal
    01/04/2017 02:41:42  01/05/2017 02:41:42  krbtgt/FIELD.HORTONWORKS.COM@FIELD.HORTONWORKS.COM
    [root@qwang-hdp1 ~]# spark-shell

I also noticed that, judging by the URL in the exception, the authentication actually failed against Ranger KMS. I did install Ranger KMS on the cluster, but I have not enabled it for any HDFS folder yet. Do I need to add the livy user somewhere in KMS?

Created 01-04-2017 03:01 AM

Ranger KMS could be the issue, because it can cause problems when obtaining HDFS delegation tokens. If the Zeppelin or Livy user needs to get an HDFS delegation token, it also needs to be a superuser for Ranger. You are better off either trying with a non-Ranger cluster or adding those users to the Ranger superusers, which are separate from the core-site superusers.

Created 01-04-2017 03:06 AM

Finally found the resolution: because Ranger KMS is installed on this cluster, the livy user also needs to be added as a proxy user for Ranger KMS.

Add the following in Ambari => Ranger KMS => Custom core-site:

    hadoop.kms.proxyuser.livy.hosts=*
    hadoop.kms.proxyuser.livy.users=*
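Why the HDFS core-site entries were not enough: as far as I understand, KMS evaluates proxy-user rules under its own hadoop.kms.proxyuser.* prefix (the prefix used in the fix above), so hadoop.proxyuser.livy.* does not authorize the doAs=hadoopadmin call, consistent with the 403 from the KMS URL. The Python sketch below is a simplified model of Hadoop's proxy-user rule (the end user must match the users or groups entry, and the caller's host must match the hosts entry), supporting only "*" and exact names, with no CIDR ranges or host-name resolution. The function name may_impersonate is hypothetical.

```python
def may_impersonate(conf: dict[str, str], prefix: str, superuser: str,
                    user: str, user_groups: set[str], remote_host: str) -> bool:
    """Simplified model: may `superuser` act as `user` from `remote_host`?"""
    base = f"{prefix}.{superuser}"

    def allowed(key: str, candidates: set[str]) -> bool:
        raw = conf.get(f"{base}.{key}")
        if raw is None:  # a missing entry grants nothing
            return False
        entries = {e.strip() for e in raw.split(",") if e.strip()}
        return "*" in entries or bool(entries & candidates)

    user_ok = allowed("users", {user}) or allowed("groups", user_groups)
    return user_ok and allowed("hosts", {remote_host})


if __name__ == "__main__":
    core_site = {"hadoop.proxyuser.livy.hosts": "*",
                 "hadoop.proxyuser.livy.groups": "*"}
    kms_fix = {"hadoop.kms.proxyuser.livy.hosts": "*",
               "hadoop.kms.proxyuser.livy.users": "*"}
    # HDFS/YARN prefix: allowed. KMS prefix without the fix: denied.
    print(may_impersonate(core_site, "hadoop.proxyuser", "livy", "hadoopadmin", {"users"}, "node1"))
    print(may_impersonate(core_site, "hadoop.kms.proxyuser", "livy", "hadoopadmin", {"users"}, "node1"))
    print(may_impersonate(kms_fix, "hadoop.kms.proxyuser", "livy", "hadoopadmin", set(), "node1"))
```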

Created 01-11-2017 09:09 PM

+1. That's what I mentioned in my earlier comment. Copying it here so everyone gets the context quickly:

Ranger KMS could be the issue, because it can cause problems when obtaining HDFS delegation tokens. If the Zeppelin or Livy user needs to get an HDFS delegation token, it also needs to be a superuser for Ranger. You are better off either trying with a non-Ranger cluster or adding those users to the Ranger superusers, which are separate from the core-site superusers.