hadoop cluster with active standby namenode + gap in the edit log

- Labels: HDFS

Created 01-19-2021 09:10 AM

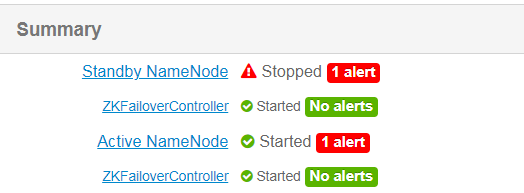

We have an Ambari-managed cluster running HDP version `2.6.5`.

The cluster has two NameNodes (one active, one standby)

and 65 DataNode machines.

The standby NameNode fails to start, and its logs show the following:

2021-01-01 15:19:43,269 ERROR namenode.NameNode (NameNode.java:main(1783)) - Failed to start namenode.

java.io.IOException: There appears to be a gap in the edit log. We expected txid 90247527115, but got txid 90247903412.

at org.apache.hadoop.hdfs.server.namenode.MetaRecoveryContext.editLogLoaderPrompt(MetaRecoveryContext.java:94)

at org.apache.hadoop.hdfs.server.namenode.FSEditLogLoader.loadEditRecords(FSEditLogLoader.java:215)

For now the active NameNode is up, but the standby NameNode is down.

Regarding the error

java.io.IOException: There appears to be a gap in the edit log. We expected txid 90247527115, but got txid 90247903412.

what is the preferred way to fix this problem?
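To get a feel for how large the gap is, and whether some other copy of the edit log still holds the missing transactions, something like the sketch below may help. It is not part of the original post. The function names are made up for illustration, and it assumes finalized edit segments follow the usual `edits_<first txid>-<last txid>` file naming inside a `current` directory.

```shell
# Report the missing txid range from a "gap in the edit log" message.
gap_from_log() {
  # $1: text of the error message
  local expected got
  expected=$(printf '%s\n' "$1" | sed -n 's/.*expected txid \([0-9][0-9]*\).*/\1/p')
  got=$(printf '%s\n' "$1" | sed -n 's/.*got txid \([0-9][0-9]*\).*/\1/p')
  [ -n "$expected" ] && [ -n "$got" ] || return 1
  echo "missing txids ${expected}..$((got - 1)) ($((got - expected)) transactions)"
}

# List finalized edit segments in a directory that contain a given txid.
segments_covering() {
  # $1: directory holding edits_<first>-<last> files, $2: txid to look for
  local f name first last
  for f in "$1"/edits_[0-9]*-[0-9]*; do
    [ -e "$f" ] || continue
    name=${f##*/}
    name=${name#edits_}
    # Strip the zero padding so the values compare as plain decimals.
    first=$(printf '%s' "${name%-*}" | sed 's/^0*//'); first=${first:-0}
    last=$(printf '%s' "${name#*-}" | sed 's/^0*//'); last=${last:-0}
    if [ "$2" -ge "$first" ] && [ "$2" -le "$last" ]; then
      echo "$f"
    fi
  done
}
```

For the message above, `gap_from_log` reports 376297 missing transactions. Running `segments_covering <dir> 90247527115` against the `current` directory of the active NameNode or of a JournalNode (paths depend on your configuration) shows whether a segment containing the expected txid still exists there.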

Created 01-25-2021 02:29 AM

@mike_bronson7 One option that can help is to run the following command on the standby NameNode:

# su hdfs -l -c 'hdfs namenode -recover'

The following message is displayed:

You have selected Metadata Recovery mode. This mode is intended to recover lost metadata on a corrupt filesystem. Metadata recovery mode often permanently deletes data from your HDFS filesystem. Please back up your edit log and fsimage before trying this! Are you ready to proceed? (Y/N) (Y or N)

To proceed, answer yes (Y). The recovery process then reads as much of the edit log as possible. When it hits an error or an ambiguity, it asks how to proceed, offering the options Continue, Stop, Quit, and Always.

Data loss (due to skipped or missing transactions) is a real possibility with this method.

Do not use it if losing data or transactions must be avoided.
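Since the recovery prompt itself asks for a backup of the edit log and fsimage, here is a minimal sketch of one way to take it. The function name and archive naming are hypothetical, and it assumes the metadata lives in a `current` subdirectory of the NameNode metadata directory.

```shell
# Archive the NameNode metadata (fsimage and edit files) before recovery.
backup_nn_metadata() {
  # $1: NameNode metadata directory (the one that contains current/)
  # $2: directory to write the archive into
  local src=$1 dest=$2 archive
  [ -d "$src/current" ] || { echo "no current/ under $src" >&2; return 1; }
  mkdir -p "$dest" || return 1
  archive="$dest/nn-meta-$(date +%Y%m%d-%H%M%S).tar.gz"
  # -C keeps paths inside the archive relative to the metadata directory.
  tar -czf "$archive" -C "$src" current || return 1
  echo "$archive"
}
```

`hdfs getconf -confKey dfs.namenode.name.dir` prints the configured metadata directories. The value can be a comma-separated list, so back up each entry, and make sure the NameNode process on that host is stopped first so the files are not changing.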

Cheers!

Created 02-03-2024 02:17 PM

Would the following procedure also work?

Put the active NameNode into safe mode:

sudo -u hdfs hdfs dfsadmin -safemode enter

Run a saveNamespace operation on the active NameNode:

sudo -u hdfs hdfs dfsadmin -saveNamespace

Leave safe mode:

sudo -u hdfs hdfs dfsadmin -safemode leave

Log in to the standby NameNode.

Run the command below on the standby NameNode to fetch the latest fsimage saved in the steps above:

sudo -u hdfs hdfs namenode -bootstrapStandby -force
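The steps above could be wrapped roughly as follows. This is only a sketch: the function names and the `DRY_RUN` switch are made up for illustration, and it assumes the standby NameNode process is stopped (as it is in the question) when the bootstrap step runs.

```shell
run() {
  # Print the command; execute it only when DRY_RUN is not 1.
  echo "+ $*"
  [ "${DRY_RUN:-0}" = "1" ] || "$@"
}

# Run on the active NameNode host: write a fresh fsimage.
checkpoint_active() {
  local rc
  run sudo -u hdfs hdfs dfsadmin -safemode enter || return 1
  run sudo -u hdfs hdfs dfsadmin -saveNamespace
  rc=$?
  # Always try to leave safe mode, even if saveNamespace failed.
  run sudo -u hdfs hdfs dfsadmin -safemode leave || return 1
  return "$rc"
}

# Run on the standby NameNode host: replace its metadata with the
# latest fsimage from the active NameNode.
bootstrap_standby() {
  run sudo -u hdfs hdfs namenode -bootstrapStandby -force
}
```

Setting `DRY_RUN=1` before calling `checkpoint_active` or `bootstrap_standby` only prints the commands, which is a cheap way to review them first. Note that HDFS is read-only while the active NameNode is in safe mode, so the checkpoint step briefly blocks writes. Once the bootstrap finishes, start the standby NameNode again, for example from Ambari.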

Created 02-27-2024 12:29 AM

Yes @mike_bronson7, the above steps also work.