Archives of Support Questions (Read Only)

- Cloudera Community

- Board Archive

- Archives of Support Questions (Read Only)

- Who agreed with this topic

Who agreed with this topic

Hive-on-Spark tasks never finish

- Labels:

-

Apache Spark

Created on 03-23-2017 03:35 AM - edited 09-16-2022 04:19 AM

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi,

We are currently evaluating the possiblity of migrating some of our batch ETL jobs from oldschool Hive to Hive-on-Spark. The first candidate is a pretty straightforward step that splits incoming data into groups based on their type, using a multiple insert Hive query. The execution is triggered from an Oozie workflow.

Most of the time this works out of the box without any problems. The amount of input data to be processed in each cycle is capped in order to keep resource requirements somewhat predictable, and the query normally finished within 15 minutes.

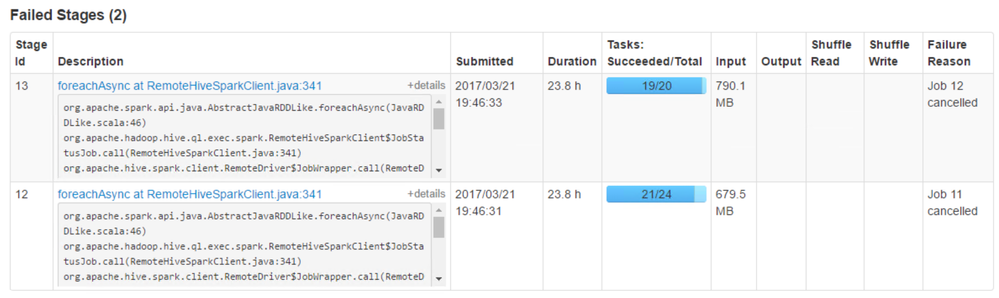

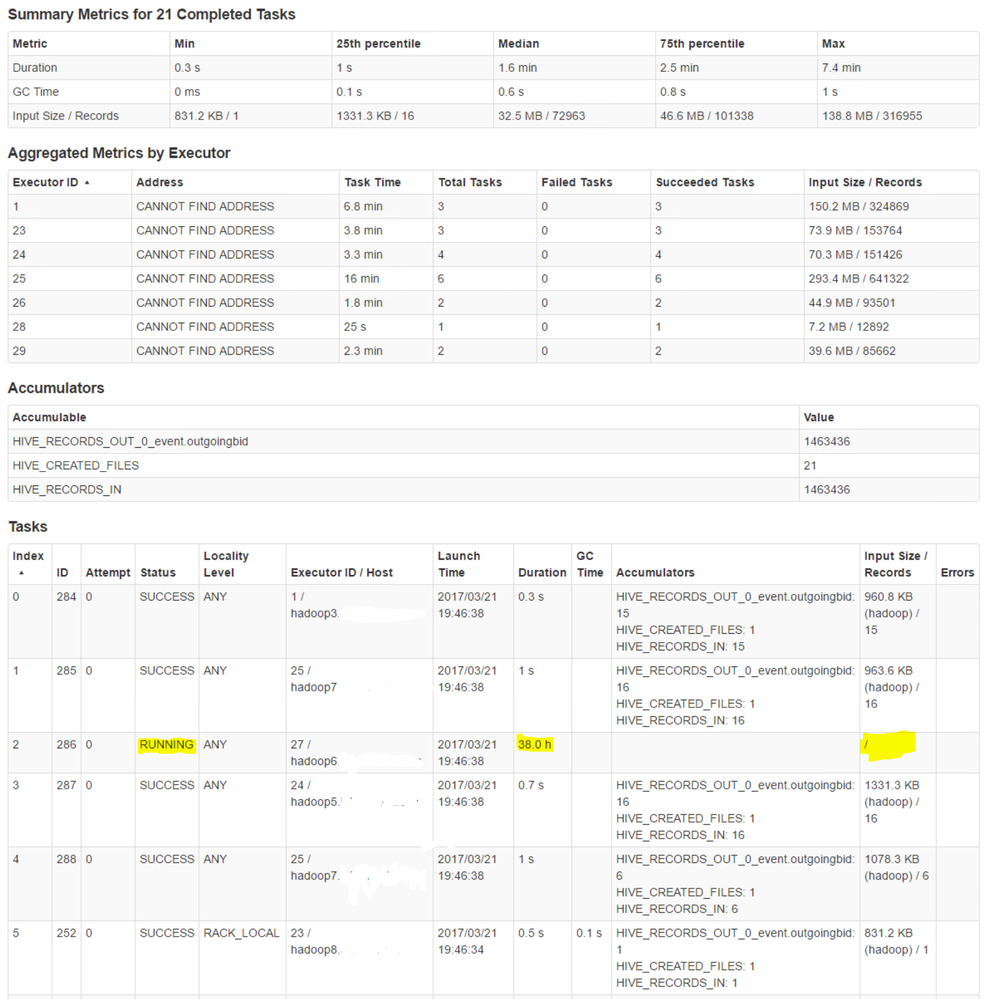

Now the issue: In a few rare cases, this query never stops running. At least not within the 24 hours we have (kind of accidentally) allowed it to keep running. The cause seems to be a few seemingly ordinary Spark tasks that just stop making any visible progress.

Some observations:

- The tasks in question are all foreachAsync calls

- All of the stalled tasks are running in the same executor

- Even after the application has been killed, the tasks are shown as RUNNING, and the associated executor is listed as Active in the Spark UI

- stdout and stderr of the executor contain no information, alternatively have been removed. At least he links in the UI give nothing useful

- The input sizes between tasks does not seem significantly scewed. I have yet to figure out how to see the input size to the running tasks though, which should be the interesting bit here.

Log contents

Below is a log snippet from the "application master" (Spark driver?)

... [Stage 10:=>(62 + 4) / 66][Stage 12:=>(17 + 7) / 24][Stage 13:=>(19 + 1) / 20] [Stage 10:=>(62 + 4) / 66][Stage 12:=>(18 + 6) / 24][Stage 13:=>(19 + 1) / 20]17/03/21 19:49:50 WARN yarn.YarnAllocator: Expected to find pending requests, but found none. [Stage 10:=>(62 + 4) / 66][Stage 12:=>(19 + 5) / 24][Stage 13:=>(19 + 1) / 20]17/03/21 19:49:53 WARN yarn.YarnAllocator: Expected to find pending requests, but found none. [Stage 10:=>(63 + 3) / 66][Stage 12:=>(19 + 5) / 24][Stage 13:=>(19 + 1) / 20]17/03/21 19:49:56 WARN yarn.YarnAllocator: Expected to find pending requests, but found none. 17/03/21 19:49:59 WARN yarn.YarnAllocator: Expected to find pending requests, but found none. 17/03/21 19:50:02 WARN yarn.YarnAllocator: Expected to find pending requests, but found none. 17/03/21 19:50:06 WARN yarn.YarnAllocator: Expected to find pending requests, but found none. [Stage 10:=>(64 + 2) / 66][Stage 12:=>(19 + 5) / 24][Stage 13:=>(19 + 1) / 20]17/03/21 19:50:07 WARN yarn.YarnAllocator: Expected to find pending requests, but found none. 17/03/21 19:50:10 WARN yarn.YarnAllocator: Expected to find pending requests, but found none. 17/03/21 19:50:13 WARN yarn.YarnAllocator: Expected to find pending requests, but found none. ... 17/03/21 19:51:02 WARN yarn.YarnAllocator: Expected to find pending requests, but found none. 17/03/21 19:51:06 WARN yarn.YarnAllocator: Expected to find pending requests, but found none. [Stage 10:=>(64 + 2) / 66][Stage 12:=>(19 + 5) / 24][Stage 13:=>(19 + 1) / 20] [Stage 10:=>(64 + 2) / 66][Stage 12:=>(20 + 4) / 24][Stage 13:=>(19 + 1) / 20] [Stage 10:=>(65 + 1) / 66][Stage 12:=>(20 + 4) / 24][Stage 13:=>(19 + 1) / 20] [Stage 12:=============> (20 + 4) / 24][Stage 13:===============>(19 + 1) / 20]17/03/21 19:52:15 WARN yarn.YarnAllocator: Expected to find pending requests, but found none. 17/03/21 19:53:14 WARN yarn.YarnAllocator: Expected to find pending requests, but found none. [Stage 12:=============> (20 + 4) / 24][Stage 13:===============>(19 + 1) / 20]17/03/21 19:54:03 WARN yarn.YarnAllocator: Expected to find pending requests, but found none. [Stage 12:==============> (21 + 3) / 24][Stage 13:===============>(19 + 1) / 20]17/03/21 19:54:06 WARN yarn.YarnAllocator: Expected to find pending requests, but found none. 17/03/21 19:54:09 WARN yarn.YarnAllocator: Expected to find pending requests, but found none. 17/03/21 19:54:12 WARN yarn.YarnAllocator: Expected to find pending requests, but found none. ... 17/03/21 19:54:57 WARN yarn.YarnAllocator: Expected to find pending requests, but found none. 17/03/21 19:55:00 WARN yarn.YarnAllocator: Expected to find pending requests, but found none. [Stage 12:==============> (21 + 3) / 24][Stage 13:===============>(19 + 1) / 20] [Stage 12:==============> (21 + 3) / 24][Stage 13:===============>(19 + 1) / 20] [Stage 12:==============> (21 + 3) / 24][Stage 13:===============>(19 + 1) / 20] [Stage 12:==============> (21 + 3) / 24][Stage 13:===============>(19 + 1) / 20] [Stage 12:==============> (21 + 3) / 24][Stage 13:===============>(19 + 1) / 20] ... [Stage 12:==============> (21 + 3) / 24][Stage 13:===============>(19 + 1) / 20] [Stage 12:==============> (21 + 3) / 24][Stage 13:===============>(19 + 1) / 20] [Stage 12:==============> (21 + 3) / 24][Stage 13:===============>(19 + 1) / 20] [Stage 12:==============> (21 + 3) / 24][Stage 13:===============>(19 + 1) / 20] [Stage 12:==============> (21 + 3) / 24][Stage 13:===============>(19 + 1) / 20]17/03/22 19:37:12 WARN spark.ExecutorAllocationManager: No stages are running, but numRunningTasks != 0 17/03/22 19:37:13 WARN yarn.YarnAllocator: Expected to find pending requests, but found none. 17/03/22 19:37:13 WARN client.RemoteDriver: Shutting down driver because RPC channel was closed. 17/03/22 19:37:13 WARN rpc.Rpc: Failed to send RPC, closing connection. java.nio.channels.ClosedChannelException 17/03/22 19:37:13 WARN rpc.Rpc: Failed to send RPC, closing connection. java.nio.channels.ClosedChannelException

Triple dots, "..." inserted by me where repeated log data has been cut away. Not really sure what all those warnings are about, but to me they do not appear to be directly related to this issue.

Screenshot

And here are some screenshots from the Spark UI. (Sorry about the font size. Couldn't get them any bigger here)

Environment:

CDH 5.9.1, Parcels

CentOS 6.7

Spark 1.6.1 used as execution engine for Hive

Spark 2.0.0 also installed on the cluster

22 data nodes (24-32 cores, 128 GB total RAM)

72 GB allocated to YARN containers

Some questions:

- Does this issue seem familiar to anyone else? If so, do you know what might be the root cause and/or solution?

- If not, are there any more sources of information that we could use to troubleshoot this. (Log files, UI pages etc.)

- Is the seemingly orphaned zombie executor actually still running somewhere, or is that a monitoring glitch? (Checking processes on the appointed host indicates nothing from this application is alive )

Any hints or help to let us move forward would be highly appreciated.

Cheers!

\Knut