- Choosing the right place to store data within the ...

Created on 07-28-2020 11:33 AM - edited 09-16-2022 01:45 AM

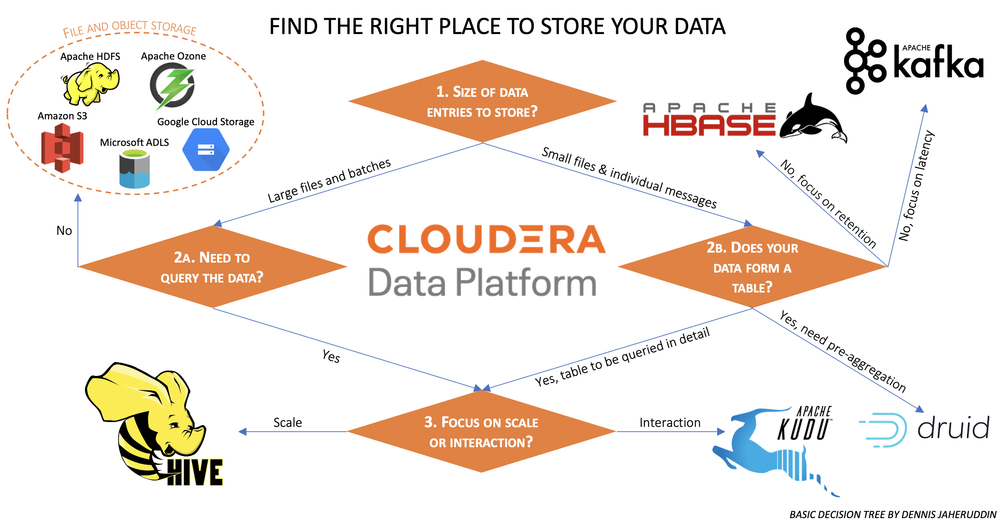

The Cloudera Data Platform (CDP) comes with many places to store your data, and it can be challenging to know which one to use. There is no formal decision tree, so I am sharing the key considerations from my personal perspective. They are visualized in the chart above.

Explanation of each path

a. Have large, bulky files that do not need to be queried > File and object storage

The exact kind of storage will mostly be defined by your environment. In a classic cluster, HDFS is available. In the public cloud, each provider's object store will be leveraged, and on-premises, Ozone will serve as the object store.

b. Have a table, either from large, bulky files or from a set of messages > Hive for scale or Kudu for interaction

If you want to work with a table and need to store it as such, store your data as a table, even if this forces you to think about how to implement the ingest in a sensible way. Kudu is great for fast insights, whereas Hive tables (which in turn can be of different formats) can offer unlimited scale. Note that Hive tables (registered in the Hive Metastore) can be accessed via different means, including the Hive engine and the Impala engine.

c. Do your table records stream in, but you only need pre-aggregates > Druid

Druid is able to aggregate data upon ingestion.
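To make "aggregate upon ingestion" concrete, here is a minimal sketch in plain Python of what such a pre-aggregation does conceptually. It does not use Druid or any Druid API; the `rollup` function, the event layout (epoch seconds, dimension, value) and the hourly bucket size are all assumptions made for illustration.

```python
from collections import defaultdict


def rollup(events, bucket_seconds=3600):
    """Pre-aggregate (epoch_seconds, dimension, value) events.

    Hypothetical helper: raw events are collapsed into one row per
    (time bucket, dimension), keeping only a count and a sum.
    """
    totals = defaultdict(lambda: {"count": 0, "sum": 0.0})
    for ts, dimension, value in events:
        # Truncate the timestamp to the start of its bucket.
        bucket_start = ts - ts % bucket_seconds
        agg = totals[(bucket_start, dimension)]
        agg["count"] += 1
        agg["sum"] += value
    return dict(totals)


# Illustrative data only: four raw events across two stores.
events = [
    (1_600_000_000, "store-1", 10.0),
    (1_600_000_100, "store-1", 5.0),
    (1_600_003_700, "store-1", 2.5),
    (1_600_000_200, "store-2", 7.0),
]

rolled = rollup(events)
for key in sorted(rolled):
    print(key, rolled[key])
```

The four raw events end up as three stored rows. The individual records are no longer available afterwards, which is why this path only fits when pre-aggregates are all you need.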

d. Are you working with messages or small files > Kafka for latency or HBase for retention

Kafka and HBase are both great places to put 'many tiny things', for instance, individual transactions. Kafka offers great throughput and latency, but despite commonly used marketing messages, it is not a database and does not scale well for historical data. If you want to serve data granularly for a longer period of time, HBase is a great fit.
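The four paths above can also be written down as a small function. This is only a sketch of my reading of them: the `DataProfile` fields, the `suggest_store` name and the order in which the questions are asked are assumptions, not part of CDP or of the original chart.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DataProfile:
    """Hypothetical description of a dataset; all field names are illustrative."""
    is_table: bool = False                # data should be stored as a table
    streams_in: bool = False              # records arrive as a stream
    only_pre_aggregates: bool = False     # only aggregated values are needed
    needs_fast_interaction: bool = False  # fast insights matter more than scale
    small_messages: bool = False          # messages or small files
    needs_long_retention: bool = False    # granular data served for a long time


def suggest_store(p: DataProfile) -> str:
    """Return a suggested store following paths a-d (assumed ordering)."""
    # c. Table records stream in, but only pre-aggregates are needed.
    if p.is_table and p.streams_in and p.only_pre_aggregates:
        return "Druid"
    # b. A table: Kudu for interaction, Hive for scale.
    if p.is_table:
        return "Kudu" if p.needs_fast_interaction else "Hive"
    # d. Messages or small files: HBase for retention, Kafka for latency.
    if p.small_messages:
        return "HBase" if p.needs_long_retention else "Kafka"
    # a. Large, bulky files that do not need to be queried.
    return "File and object storage"


print(suggest_store(DataProfile(is_table=True, needs_fast_interaction=True)))
print(suggest_store(DataProfile(small_messages=True, needs_long_retention=True)))
```

A single return value keeps the sketch short. Data that fits more than one path would need the function to return several stores instead.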

Some notes:

- When working in the cloud, it is often desirable to work with object stores where possible to keep costs down. The good news is that CDP Public Cloud comes with cloud-native capabilities. As such, several storage solutions, such as Hive, actually store the data in cloud object stores.

- It is possible that more than one road applies to your data. For instance, a message may require very low latency in the first few days, but also need to be retained for several years. In such cases, it often makes sense to store a subset of the data in two places.

- I did not include other solutions that could store data, such as Solr or in-application state. The reason is that the primary function of these is not storage, but search and processing respectively. I also did not include Impala, as it is an engine; Hive is only on this chart to represent its storage capabilities.

- This is a basic decision tree. It should cover most situations, but do not hesitate to deviate if your situation calls for it.

Also, see my related article: Find the right tool to move your data

Full Disclosure & Disclaimer:

I am an employee of Cloudera, but this is not part of the formal documentation of the Cloudera Data Platform. It is purely based on my own experience of advising people in their choice of tooling.

Created on 07-29-2020 08:01 AM


This is a great decision chart.

I would add Flink SQL for querying events in stream and for querying Kafka topics.

If you have time series data, definitely use Druid. If your data is not time series or timestamp driven, do not use Druid; use Kudu instead.

https://druid.apache.org/docs/latest/comparisons/druid-vs-kudu.html

https://druid.apache.org/docs/latest/comparisons/druid-vs-key-value.html

Created on 07-29-2020 02:27 PM - edited 07-29-2020 02:28 PM


Thanks, I will think about refining the distinction between Kudu and Druid.

Currently I would not want to count the fact that Flink has state as 'storage', but regarding Flink SQL, I may make another post later about the ways to interact with and access different kinds of data. (As someone also noticed, Impala is not here either because it is not a store in itself, but works with stored data.)