How to use Model Registry on Cloudera Machine Lear...

Created on 11-29-2023 09:21 PM - edited on 04-20-2026 11:45 PM by GrazittiAPI

Introduction:

This article demonstrates how a Machine Learning (ML) engineer can use the Model Registry service in Cloudera Machine Learning (CML) to catalog, version, and deploy models. ML engineers work iteratively to improve model performance against one or more target metrics. This step is called experimentation: the engineer runs a series of trial experiments, changing parameters of the underlying model (called hyper-parameters) to improve the target metric. In CML, the Experiments feature allows users to compare experiment runs and visualize the impact of parameters on the target metric (for more information, refer to the article on hyper-parameter tuning here). Once a chosen experiment run is deemed satisfactory, use the CML Model Registry to register the model. The Model Registry serves as a centralized catalog for all models that are deemed ready for deployment. Subsequent improvements can then be registered in the catalog as new versions.

This article assumes that a model registry has already been set up in the Cloudera Machine Learning service. To learn how to set up a model registry, check the References section at the end of the article.

The Value of Model Registry in Machine Learning Workflows

Including a model registry in machine learning workflows provides a number of benefits for data science teams:

- It serves as a catalog that supports model reuse and controls the proliferation of models, since teams can use the catalog to discover existing model artifacts.

- It supports model versioning and provides lineage information as new models are deployed after retraining with the addition of new data.

- It helps provide a foundation for DevOps automation using stage transitions of models from development to staging to production.

- It improves the auditability and traceability of ML models, because it is possible to identify exactly which experiment led to the model deployed in production.

Using the Model Registry to store and deploy models

Only models that have been created with MLflow experiments can be stored in the Model Registry. Hence, as a first step, ensure that the MLflow APIs are used to create an experiment and to log its runs. Creating and logging experiment runs in CML is outside the scope of this article. For more details, refer to the blog here or to the Cloudera documentation. The rest of this section shows how to add a model to the Model Registry and then deploy it. In this case, I will use the model from the experiment run in the blog Hyperparameter Tuning with MLFlow Experiments, add it to the Model Registry, and subsequently deploy it as an API endpoint.
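For orientation only, logging runs with the MLflow tracking API can be sketched as below. This is a minimal sketch under stated assumptions, not the code from the referenced blog: the helper names, the metric name `target_metric`, and the `evaluate` callable are hypothetical, and the sketch assumes MLflow is available in the session.

```python
import itertools


def candidate_params(grid):
    """Expand a dict of hyper-parameter lists into one dict per trial."""
    names = sorted(grid)
    for values in itertools.product(*(grid[name] for name in names)):
        yield dict(zip(names, values))


def log_trials(experiment_name, grid, evaluate):
    """Log one MLflow run per hyper-parameter combination.

    `evaluate` is a hypothetical callable that trains a model for the
    given parameters and returns the target metric as a float.
    """
    import mlflow  # imported lazily so candidate_params works without MLflow

    mlflow.set_experiment(experiment_name)
    for params in candidate_params(grid):
        with mlflow.start_run():
            mlflow.log_params(params)
            mlflow.log_metric("target_metric", evaluate(params))
            # A real run would also log the trained model artifact here with
            # a flavor-specific call such as mlflow.sklearn.log_model(...),
            # because the registry stores models, not just metrics.
```

Each combination produced by `candidate_params` becomes one run, which is what makes the runs comparable side by side on the Experiments page.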

Registering a CML Experiment in Model Registry

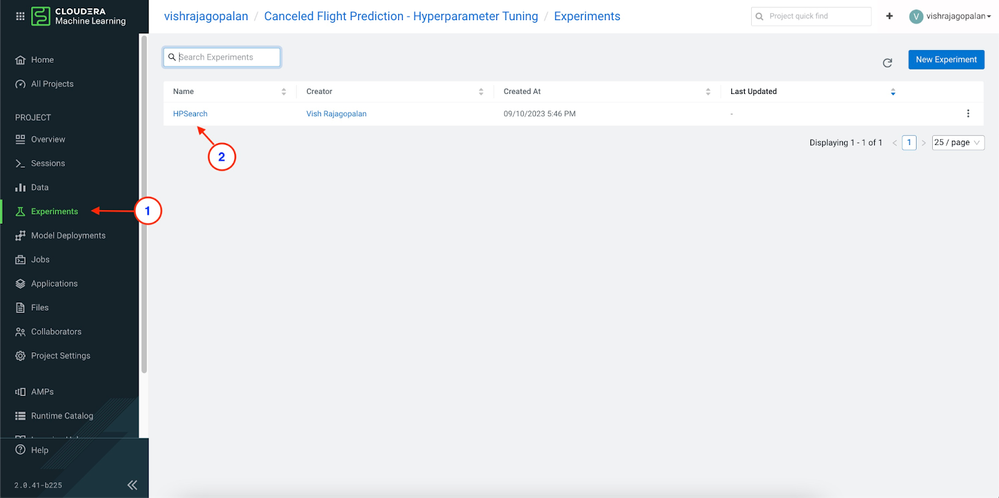

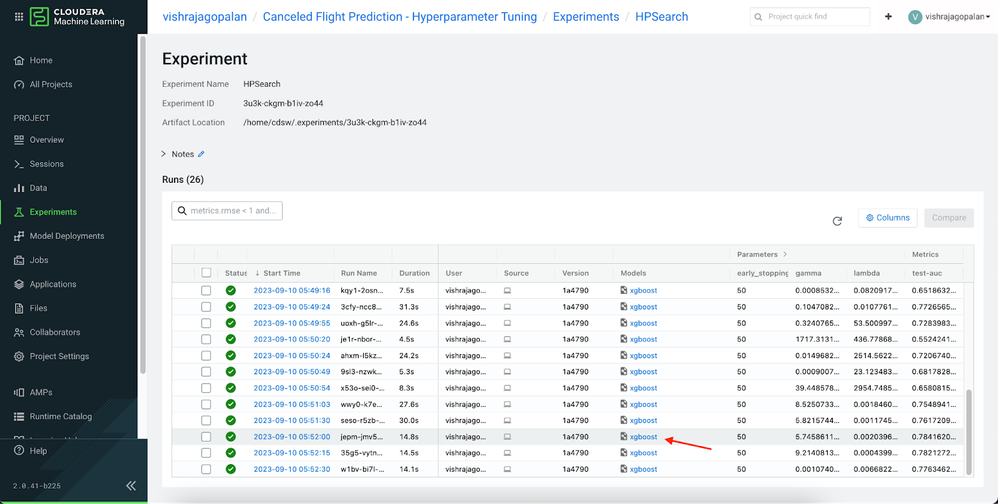

Click on the Experiments menu item in the CML menu to review the experiments, and click on a specific experiment to see the details of all its runs.

Choose one specific model from an experiment run based on the performance of the target metric. The highlighted row is the chosen model; click on it to bring up the Model Registration page.

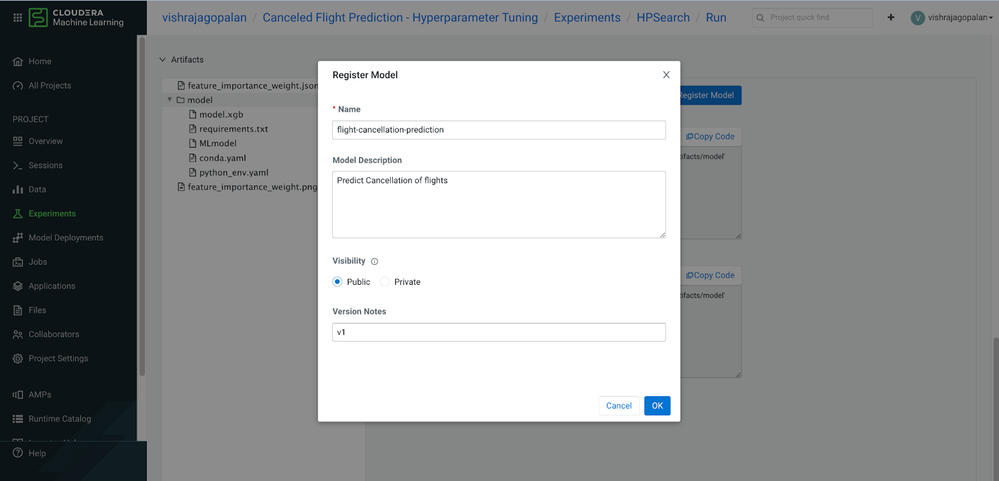
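Choosing a run by its target metric, as described above, amounts to a simple comparison. The sketch below illustrates it on hypothetical run records; the record layout, the run names, and the `accuracy` metric are made up for the example and do not come from the CML UI.

```python
def best_run(runs, metric, higher_is_better=True):
    """Return the run record with the best value for `metric`.

    Runs that did not log the metric are ignored.
    """
    scored = [run for run in runs if metric in run["metrics"]]
    if not scored:
        raise ValueError(f"no run logged the metric {metric!r}")
    pick = max if higher_is_better else min
    return pick(scored, key=lambda run: run["metrics"][metric])


# Hypothetical run records; in practice these values are read off the
# Experiments page or fetched through an MLflow run search.
runs = [
    {"run_name": "run-a", "metrics": {"accuracy": 0.81}},
    {"run_name": "run-b", "metrics": {"accuracy": 0.87}},
    {"run_name": "run-c", "metrics": {}},
]
chosen = best_run(runs, "accuracy")
```

For a metric where lower is better, such as an error rate, pass `higher_is_better=False`.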

At this point, enter the name, description, visibility scope, and version information for the model, as shown below. Once the form is submitted, the Model Registry confirms the registration of the model.

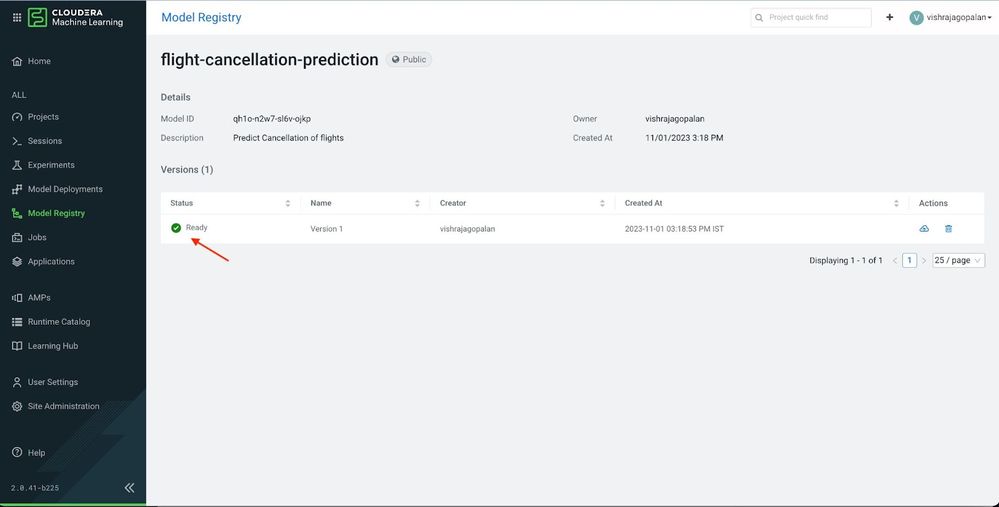
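The registration above is done through the UI. For readers who prefer code, the equivalent step in plain MLflow looks roughly like the sketch below. It assumes that the session's MLflow client is configured to talk to the registry and that the model was logged under the artifact path `model`; neither is confirmed by this article, and the helper names are hypothetical.

```python
def model_uri(run_id, artifact_path="model"):
    """Build the MLflow URI of a model artifact logged under a run."""
    return f"runs:/{run_id}/{artifact_path}"


def register_from_run(run_id, name, artifact_path="model"):
    """Register the model logged by `run_id` under `name`.

    In MLflow, registering again under an existing name creates a new
    version of that registered model.
    """
    import mlflow  # lazy import: only needed when actually registering

    return mlflow.register_model(model_uri(run_id, artifact_path), name)
```

The `runs:/` URI ties the registered version back to the run that produced it, which is the lineage link the registry relies on.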

Deploying Models from Model Registry

Data and system administrators are responsible for managing the data artifacts (tables, files, jobs, and models) in production systems. To keep the deployment process controlled, they are typically responsible for transitioning these artifacts from development to staging and production systems. The Model Registry provides a single pane of glass for model management. For example, administrators can enforce a policy that only models from the Model Registry are deployed to production, which makes the lineage of a deployed model traceable, that is, which experiment produced it.

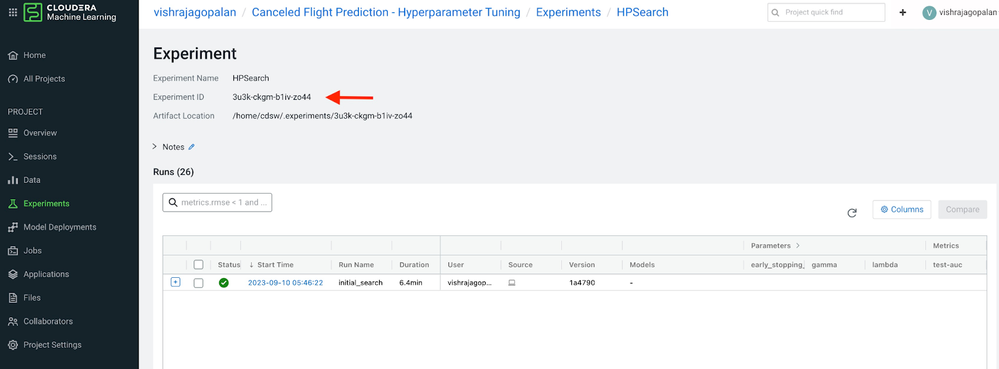
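The registry-only policy mentioned above can be illustrated with a small check. This is a conceptual sketch over an in-memory catalog, not a CML API: the class, the exception, and the field names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RegisteredModel:
    name: str
    version: int
    experiment_id: str  # lineage: experiment that produced the model
    run_id: str         # lineage: run that produced the model


class DeploymentPolicyError(Exception):
    """Raised when a deployment request violates the registry-only policy."""


def check_deployable(catalog, name, version):
    """Return the registered entry for (name, version), or refuse."""
    for entry in catalog:
        if entry.name == name and entry.version == version:
            return entry
    raise DeploymentPolicyError(
        f"{name} v{version} is not in the registry; refusing to deploy"
    )
```

Because every catalog entry carries its experiment and run identifiers, any model that passes the check can be traced back to the experiment that produced it.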

To deploy the model, identify the experiment and the run that produced it. Note the experiment ID and the run name, as shown below:

cd ~/<experiment name>/<experiment run>/

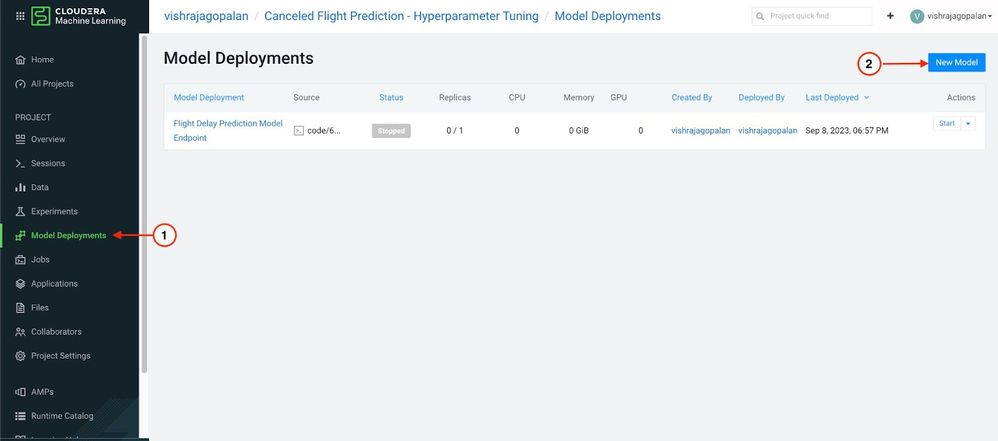

Next, deploy the model from the Model Registry. Click the Model Deployments menu item to bring up the Model Deployments page in Cloudera Machine Learning. This page shows all the models currently deployed for the project. Click the New Model button at the top right, as shown below.

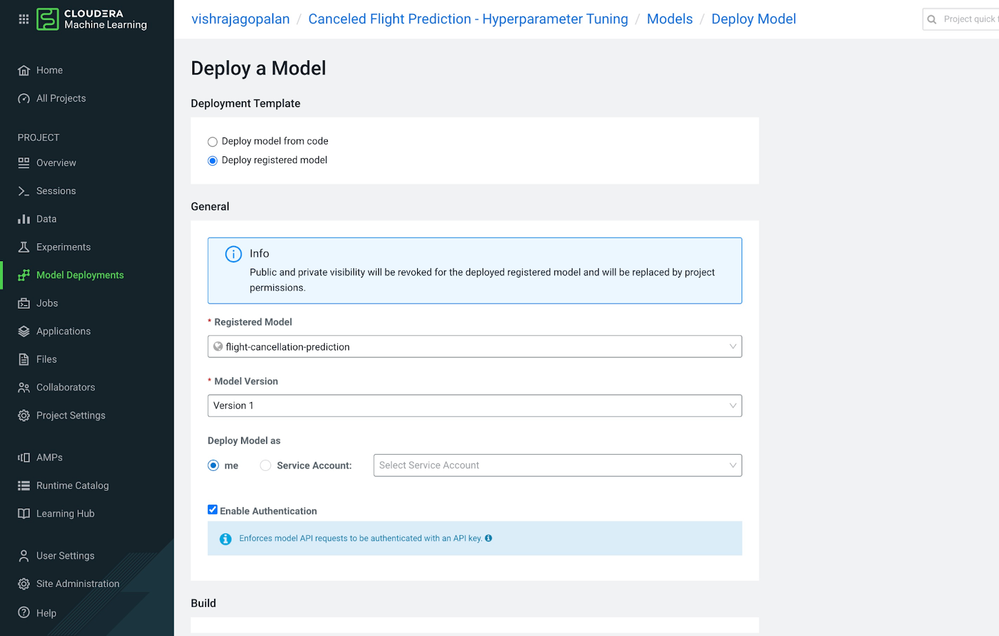

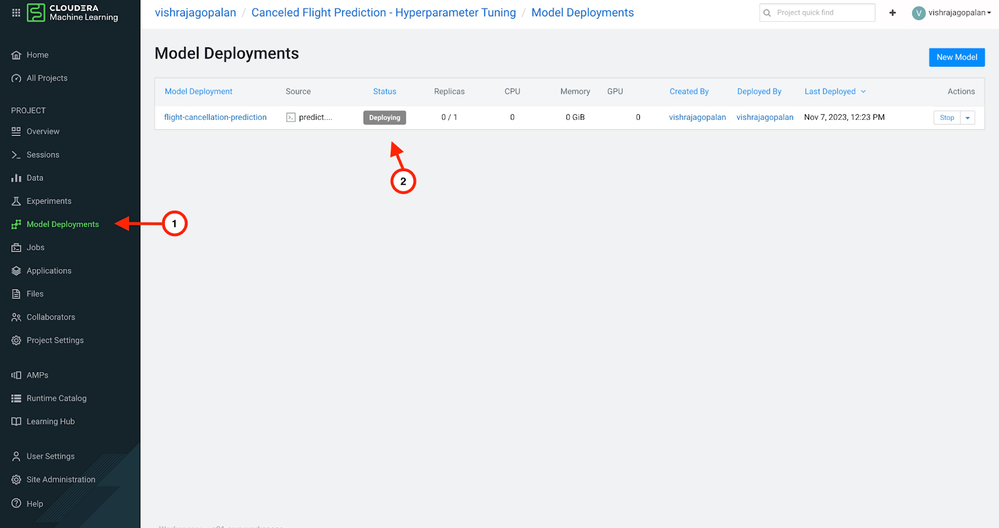

This brings up the Deploy Model user interface, where you can choose the model to deploy. Since the plan is to deploy a registered model, select the options as shown and choose the registered model from the drop-down. The model version is pre-populated from the prior deployment history of the model. Return to the Model Deployments page to check the build status of the model deployed from the Model Registry.
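Once the build completes, the model is served as an API endpoint. The sketch below builds, but does not send, a request for such an endpoint. It assumes the endpoint accepts a JSON body with `accessKey` and `request` fields; the URL, the key, and the feature names are placeholders, and a given deployment may require additional authentication headers, so check the sample request shown for your own deployment.

```python
import json
import urllib.request


def build_model_request(endpoint_url, access_key, features):
    """Build (but do not send) a POST request for a deployed model."""
    body = json.dumps({"accessKey": access_key, "request": features})
    return urllib.request.Request(
        endpoint_url,
        data=body.encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Placeholder values for illustration only.
request = build_model_request(
    "https://modelservice.example.com/model",
    "placeholder-access-key",
    {"feature_1": 1.0},
)
```

Passing the request to `urllib.request.urlopen` would send it; that call is left out here so the sketch runs without network access.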

Summary

This blog demonstrates how machine learning engineers and administrators can build model management workflows by using the CML Model Registry. Adding the Model Registry to deployment workflows gives data science teams a number of advantages:

- It provides a centralized model management catalog and controls the proliferation of models, since teams can use the catalog to discover existing model artifacts.

- It allows versioning of models and therefore helps traceability and lineage as new models are deployed after retraining with the addition of new data.

- It provides a centralized gateway for stage transitions of models from development to staging to production.

- It supports model auditability and traceability. It is now possible to understand exactly which experiment led to the model being deployed in production.

References:

- Cloudera Reference Docs: here

- Setting up Model Registry: here

- Article on using Experiments with CML: here