- Secured access to Hive using Zeppelin's jdbc(hive)...

Created on 04-06-2017 01:19 AM - edited 08-17-2019 01:21 PM

SETUP:

- Kerberized cluster with Ranger installed. This article uses the latest HDP-2.6 cluster, installed using Ambari-2.5.

- Ranger-based authorization is enabled for Hive.

- Zeppelin's authentication is enabled. You can use LDAP authentication, but for this demonstration I use a simple authentication method and configure 2 additional users, 'hive' and 'hrt_1', in Zeppelin's shiro.ini:

    [users]
    # List of users with their password allowed to access Zeppelin.
    # To use a different strategy (LDAP / Database / ...) check the shiro doc at http://shiro.apache.org/configuration.html#Configuration-INISections
    admin = admin, admin
    hive = hive, admin
    hrt_1 = hrt_1, admin

    # Sample LDAP configuration, for user Authentication, currently tested for single Realm
    [main]
    ### A sample for configuring Active Directory Realm
    #activeDirectoryRealm = org.apache.zeppelin.realm.ActiveDirectoryGroupRealm
    #activeDirectoryRealm.systemUsername = userNameA

    #use either systemPassword or hadoopSecurityCredentialPath, more details in http://zeppelin.apache.org/docs/latest/security/shiroauthentication.html
    #activeDirectoryRealm.systemPassword = passwordA
    #activeDirectoryRealm.hadoopSecurityCredentialPath = jceks://file/user/zeppelin/zeppelin.jceks
    #activeDirectoryRealm.searchBase = CN=Users,DC=SOME_GROUP,DC=COMPANY,DC=COM
    #activeDirectoryRealm.url = ldap://ldap.test.com:389
    #activeDirectoryRealm.groupRolesMap = "CN=admin,OU=groups,DC=SOME_GROUP,DC=COMPANY,DC=COM":"admin","CN=finance,OU=groups,DC=SOME_GROUP,DC=COMPANY,DC=COM":"finance","CN=hr,OU=groups,DC=SOME_GROUP,DC=COMPANY,DC=COM":"hr"
    #activeDirectoryRealm.authorizationCachingEnabled = false

    ### A sample for configuring LDAP Directory Realm
    #ldapRealm = org.apache.zeppelin.realm.LdapGroupRealm
    ## search base for ldap groups (only relevant for LdapGroupRealm):
    #ldapRealm.contextFactory.environment[ldap.searchBase] = dc=COMPANY,dc=COM
    #ldapRealm.contextFactory.url = ldap://ldap.test.com:389
    #ldapRealm.userDnTemplate = uid={0},ou=Users,dc=COMPANY,dc=COM
    #ldapRealm.contextFactory.authenticationMechanism = SIMPLE

    ### A sample PAM configuration
    #pamRealm=org.apache.zeppelin.realm.PamRealm
    #pamRealm.service=sshd

    sessionManager = org.apache.shiro.web.session.mgt.DefaultWebSessionManager

    ### If caching of user is required then uncomment below lines
    cacheManager = org.apache.shiro.cache.MemoryConstrainedCacheManager
    securityManager.cacheManager = $cacheManager

    securityManager.sessionManager = $sessionManager
    # 86,400,000 milliseconds = 24 hour
    securityManager.sessionManager.globalSessionTimeout = 86400000
    shiro.loginUrl = /api/login

    [roles]
    role1 = *
    role2 = *
    role3 = *
    admin = *

    [urls]
    # This section is used for url-based security.
    # You can secure interpreter, configuration and credential information by urls. Comment or uncomment the below urls that you want to hide.
    # anon means the access is anonymous.
    # authc means Form based Auth Security
    # To enfore security, comment the line below and uncomment the next one
    /api/version = anon
    #/api/interpreter/** = authc, roles[admin]
    #/api/configurations/** = authc, roles[admin]
    #/api/credential/** = authc, roles[admin]
    #/** = anon
    /** = authc
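As a quick sanity check before restarting Zeppelin, the [users] section can be parsed to confirm that the two demo users are present. The following is a minimal sketch using Python's standard configparser; the helper name `parse_users` and the trimmed inline copy of the file are illustrative only, and Shiro's own INI parser may differ from configparser in edge cases.

```python
import configparser

# Trimmed copy of the [users] and [urls] sections from the shiro.ini above.
SHIRO_INI = """
[users]
admin = admin, admin
hive = hive, admin
hrt_1 = hrt_1, admin

[urls]
/api/version = anon
/** = authc
"""


def parse_users(ini_text):
    """Return {username: (password, [roles])} from a Shiro-style [users] section.

    Hypothetical helper for illustration; it is not part of Zeppelin or Shiro.
    """
    # Only '=' is a delimiter, so values such as ldap:// URLs are not split on ':'.
    parser = configparser.ConfigParser(interpolation=None, delimiters=("=",))
    parser.optionxform = str  # keep keys as written (configparser lowercases by default)
    parser.read_string(ini_text)
    users = {}
    for name, value in parser.items("users"):
        password, *roles = [part.strip() for part in value.split(",")]
        users[name] = (password, roles)
    return users


users = parse_users(SHIRO_INI)
for name in ("hive", "hrt_1"):
    password, roles = users[name]
    print(f"{name}: roles={roles}")
```

In a real check, the text would be read from the shiro.ini file in Zeppelin's configuration directory instead of the inline string.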

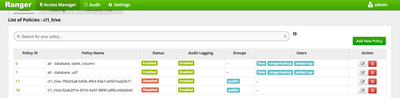

- Make sure only the 'hive' user has access to all databases, tables, and columns, as follows:

- Make sure Zeppelin's jdbc interpreter is configured as follows:

| Property | Value |
| --- | --- |
| hive.user | hive |
| hive.password | |
| hive.url | Make sure to configure correct hive.url using instructions on https://community.hortonworks.com/articles/4103/hiveserver2-jdbc-connection-url-examples.html |
| hive.driver | org.apache.hive.jdbc.HiveDriver |
| zeppelin.jdbc.auth.type | KERBEROS |
| zeppelin.jdbc.keytab.location | zeppelin server keytab location |
| zeppelin.jdbc.principal | your zeppelin principal name from zeppelin server keytab |
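To make the table concrete, here is a minimal sketch that assembles the same properties in Python and checks that none of the Kerberos-related settings is left empty. The host name, realm, keytab path and principals are placeholders, not values from this cluster, and the helpers `hive_jdbc_url` and `missing_kerberos_settings` are hypothetical. The `;principal=` suffix follows the usual HiveServer2 JDBC URL form for Kerberized clusters; check the linked article for the URL that matches your deployment.

```python
def hive_jdbc_url(host, port=10000, database="default", hs2_principal=None):
    """Build a HiveServer2 JDBC URL; add the HiveServer2 service principal for Kerberos."""
    url = f"jdbc:hive2://{host}:{port}/{database}"
    if hs2_principal:
        url += f";principal={hs2_principal}"
    return url


# Placeholder values: replace host, realm, keytab path and principals with your own.
interpreter_properties = {
    "hive.user": "hive",
    "hive.password": "",
    "hive.url": hive_jdbc_url("hs2.example.com", hs2_principal="hive/_HOST@EXAMPLE.COM"),
    "hive.driver": "org.apache.hive.jdbc.HiveDriver",
    "zeppelin.jdbc.auth.type": "KERBEROS",
    "zeppelin.jdbc.keytab.location": "/path/to/zeppelin.server.keytab",
    "zeppelin.jdbc.principal": "zeppelin@EXAMPLE.COM",
}

KERBEROS_KEYS = (
    "zeppelin.jdbc.auth.type",
    "zeppelin.jdbc.keytab.location",
    "zeppelin.jdbc.principal",
)


def missing_kerberos_settings(props):
    """Return the Kerberos-related keys that are absent or empty."""
    return [key for key in KERBEROS_KEYS if not props.get(key)]


print(interpreter_properties["hive.url"])
print("missing:", missing_kerberos_settings(interpreter_properties))
```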

- Download the test data from here, unzip it, copy the timesheet.csv file into the HDFS /tmp directory, and change its permissions to '777'.
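The copy and permission change in the last setup step can be sketched as two `hdfs dfs` commands. The snippet below only builds and prints them; the helper name `hdfs_upload_commands` is hypothetical, and actually running the commands assumes a host with the HDFS client installed and a valid Kerberos ticket.

```python
import posixpath


def hdfs_upload_commands(local_file, hdfs_dir="/tmp", mode="777"):
    """Return the hdfs CLI invocations that copy a file into HDFS and open its permissions."""
    target = posixpath.join(hdfs_dir, posixpath.basename(local_file))
    return [
        ["hdfs", "dfs", "-put", local_file, hdfs_dir],
        ["hdfs", "dfs", "-chmod", mode, target],
    ]


commands = hdfs_upload_commands("timesheet.csv")
for cmd in commands:
    # Print only; on a cluster node each list could be passed to subprocess.run(cmd, check=True).
    print(" ".join(cmd))
```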

DEMO:

1) Log in to Zeppelin as user 'hive' (password is also configured to 'hive')

2) Create a notebook 'jdbc(hive) demo' and run the following 2 paragraphs for creating table and loading data

    %livy.sql
    CREATE TABLE IF NOT EXISTS timesheet_livy_hive(driverId INT, week INT, hours_logged INT, miles_logged INT)
    row format delimited fields terminated by ',' lines terminated by '\n'
    stored as TEXTFILE
    location '/apps/hive/warehouse/timesheet'
    tblproperties('skip.header.line.count'='1')

    %livy.sql
    LOAD DATA INPATH '/tmp/timesheet.csv' INTO TABLE timesheet_livy_hive

The %livy interpreter supports impersonation, so it executes the SQL statements as the 'hive' user. The ResourceManager (RM) UI shows the corresponding YARN applications running as the 'hive' user.

Because the 'hive' user also has permission to create tables in the Ranger policies, these two paragraphs run successfully.

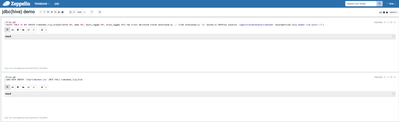

3) Stay logged in as the 'hive' user and run a 'SELECT' query using the jdbc(hive) interpreter.

Since the 'hive' user has permission to run SELECT queries in the Ranger policies, this paragraph runs successfully as well.

    %jdbc(hive)
    select count(*) from timesheet_livy_hive

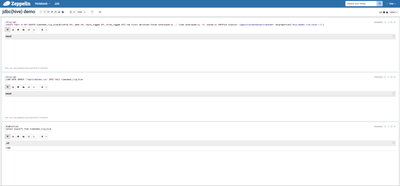

4) Now log out as the 'hive' user and log in as the 'hrt_1' user, open the 'jdbc(hive) demo' notebook, and re-run the SELECT query paragraph.

The %jdbc interpreter supports impersonation, so it now runs the SELECT query as the 'hrt_1' user. The query fails, since 'hrt_1' does not have sufficient permissions in the Ranger policies to query any of the Hive tables.
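The article does not show how the logged-in Zeppelin user reaches HiveServer2. One mechanism HiveServer2 itself offers is the `hive.server2.proxy.user` session parameter in the JDBC URL, which asks the server to run the session as another user. The sketch below shows what such a URL could look like; whether this Zeppelin build produces exactly this URL is an assumption, and the host, realm and helper name `impersonated_url` are placeholders.

```python
import re


def impersonated_url(base_url, login_user):
    """Add HiveServer2's proxy-user session parameter to a JDBC URL.

    HiveServer2 URLs have the shape
    jdbc:hive2://<host>:<port>/<db>;<session vars>?<hive confs>#<hive vars>
    so the parameter is inserted before any '?' or '#' part.
    """
    match = re.search(r"[?#]", base_url)
    cut = match.start() if match else len(base_url)
    return f"{base_url[:cut]};hive.server2.proxy.user={login_user}{base_url[cut:]}"


# Placeholder URL: the connection authenticates with Kerberos,
# while the session is requested on behalf of the Zeppelin login user.
base = "jdbc:hive2://hs2.example.com:10000/default;principal=hive/_HOST@EXAMPLE.COM"
print(impersonated_url(base, "hrt_1"))
```

With a URL of this kind, Ranger evaluates the query against the policies of 'hrt_1' rather than those of the account that opened the connection, which matches the failure described in this step.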

5) Stay logged in as the 'hrt_1' user and try to grant 'hrt_1' SELECT access on the table.

    %jdbc(hive)
    grant select on timesheet_livy_hive to user hrt_1

This paragraph fails as well, since the impersonated user 'hrt_1' does not have permission to grant access.

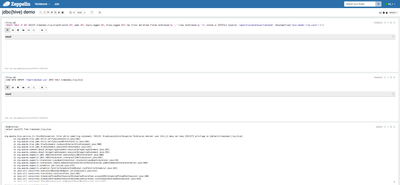

6) Now log out, log in as the 'hive' user again, and re-run the grant paragraph to give 'hrt_1' access to the Hive table.

This time the paragraph succeeds, since the 'hive' user has permission to grant access to 'hrt_1' under the policies defined in Ranger.

When the paragraph succeeds, you will see an extra 'grant' policy created in Ranger.

7) Now log out, log back in as the 'hrt_1' user, and run the 'SELECT' query again (wait about 30 seconds for the new Ranger policy to take effect).

The paragraph succeeds now, since a new Ranger policy has been created that allows 'hrt_1' to run SELECT queries on the Hive table.

8) Stay logged in as the 'hrt_1' user and try dropping the table using the jdbc(hive) interpreter.

This does not succeed, as 'hrt_1' does not have permission to drop a Hive table.

    %jdbc(hive)
    drop table timesheet_livy_hive

9) Now log out as the 'hrt_1' user, log back in as the 'hive' user, and try to drop the table.

This succeeds, as only the 'hive' user has permission to drop the table.
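The outcomes of the walkthrough can be summarized with a toy permission model. This is only an illustration of the expected results at each step, not Ranger's policy engine or its API; the class `ToyPolicies` and the privilege names are invented for the sketch.

```python
ALL_PRIVILEGES = {"select", "create", "drop", "grant"}


class ToyPolicies:
    """Toy stand-in for the Ranger policies used in this demo (not Ranger's API)."""

    def __init__(self):
        # Initially only 'hive' has access, as in the setup section.
        self.privileges = {"hive": set(ALL_PRIVILEGES)}

    def allowed(self, user, action):
        return action in self.privileges.get(user, set())

    def grant(self, grantor, grantee, action):
        # A grant only takes effect if the grantor may grant access.
        if not self.allowed(grantor, "grant"):
            return False
        self.privileges.setdefault(grantee, set()).add(action)
        return True


policies = ToyPolicies()
results = [
    ("step 3: hive runs SELECT", policies.allowed("hive", "select")),
    ("step 4: hrt_1 runs SELECT", policies.allowed("hrt_1", "select")),
    ("step 5: hrt_1 grants SELECT to hrt_1", policies.grant("hrt_1", "hrt_1", "select")),
    ("step 6: hive grants SELECT to hrt_1", policies.grant("hive", "hrt_1", "select")),
    ("step 7: hrt_1 runs SELECT", policies.allowed("hrt_1", "select")),
    ("step 8: hrt_1 drops the table", policies.allowed("hrt_1", "drop")),
    ("step 9: hive drops the table", policies.allowed("hive", "drop")),
]
for label, ok in results:
    print(f"{label}: {'succeeds' if ok else 'fails'}")
```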