Storm Topology Runbook

Created on 01-05-2017 06:23 PM

General Recommendations

- Only fail tuples if there's a recoverable exception

- Use an Exception/Error Logging Bolt/Stream and send the output to a Kafka topic so all logs are consolidated in one place

- Be careful about supervisor saturation / overallocation i.e. running more threads than available CPU resources

- Configure parallelism based on throughput requirements (use Throughput Equation to calculate)

- Be aware of data spikes and plan for them in your topology / use cases / operations

- If using Kafka, set up Kafka Producer-Consumer Lag analysis and a dashboard

- Benchmark topologies to establish baseline "healthy" operating numbers for individual bolt performance

Troubleshooting topology performance issues

- Check the topology stats from the Nimbus UI

- Look for failures in the spout or in a specific bolt; any failures indicate that the component is having issues.

- If the topology has a Kafka error bolt, open a console consumer to look at the error logs from Kafka

- Find out which supervisor the worker is running on and start tailing the logs of the topology (log files are named after the topology and the deployment number; you can find this from the Nimbus UI)

If you are seeing lag building, there are two likely causes:

- a topology component is slow (bolt or spout)

- the end sink is having issues; sink-specific exceptions will be present in the output of the error bolt, so follow the instructions above to see the logs
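Whether lag is "building" can be checked from offsets alone. The following is a minimal sketch, assuming you already collect the log-end offset and the committed consumer offset per partition from your own monitoring; the function names and the sample numbers are hypothetical and not part of Storm or Kafka.

```python
def partition_lag(end_offsets, committed_offsets):
    """Per-partition lag: log-end offset minus committed offset (never negative)."""
    return {
        partition: max(end - committed_offsets.get(partition, 0), 0)
        for partition, end in end_offsets.items()
    }


def lag_is_building(total_lag_samples):
    """True if total lag grew between every pair of consecutive samples."""
    if len(total_lag_samples) < 2:
        return False
    return all(
        later > earlier
        for earlier, later in zip(total_lag_samples, total_lag_samples[1:])
    )


# Hypothetical offsets for a three-partition topic.
end_offsets = {0: 1500, 1: 1800, 2: 1200}
committed = {0: 1400, 1: 1000, 2: 1200}

lag = partition_lag(end_offsets, committed)
total_lag = sum(lag.values())
```

A single large lag value is not necessarily a problem; a total that keeps growing across samples is the signal that a component or the sink cannot keep up.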

Topology issues due to slow bolts

Topologies can have performance issues because some bolts are running slow. A bolt usually runs slow for one of two root causes:

1. the system it interacts with is slow

2. the topology received a data spike

Slow bolts are usually the ones interacting with external systems, e.g. lookup bolts or writer bolts. Such bolts are slow because their external systems are experiencing transient performance slowdowns, e.g. Elasticsearch recovery, HBase region split, major GC etc.

**How to find out what's the root cause of a slow bolt?**

Analyze the capacity numbers and execute latencies. A slow bolt is one with capacity > 1.0, meaning there aren't enough bolt instances for the amount of data being processed. The next step is to find out whether the root cause is an external system slowdown or a data spike.

If execute latencies are reasonable, i.e. around your normal value, the external system is not the bottleneck, so the root cause is a data spike. If execute latencies are well above your normal value, the external system the bolt talks to is the likely bottleneck.
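The decision above can be written down as a small check. This is a sketch rather than anything Storm provides: capacity is computed the way the Storm UI describes it (tuples executed times average execute latency, divided by the measurement window), while the `tolerance` factor for "well above normal" and the baseline latency are assumptions you would take from your own benchmarks.

```python
def bolt_capacity(executed, execute_latency_ms, window_ms=600_000):
    """Fraction of the window an executor spent executing tuples.

    window_ms defaults to 10 minutes, matching the usual UI window.
    """
    return executed * execute_latency_ms / window_ms


def diagnose(capacity, execute_latency_ms, baseline_latency_ms, tolerance=1.5):
    """Classify a bolt using the runbook rule of thumb.

    tolerance is a hypothetical cut-off: latency above
    baseline * tolerance is treated as an external slowdown.
    """
    if capacity <= 1.0:
        return "healthy"
    if execute_latency_ms > baseline_latency_ms * tolerance:
        return "external system slowdown"
    return "data spike"


# Hypothetical numbers: 150,000 tuples in 10 minutes at 5 ms each.
capacity = bolt_capacity(executed=150_000, execute_latency_ms=5.0)
verdict = diagnose(capacity, execute_latency_ms=5.0, baseline_latency_ms=4.5)
```

With these sample values the latency is close to its baseline while capacity is above 1.0, so the check points at a data spike.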

Troubleshooting external system bottleneck

An external system bottleneck must be resolved before topology performance can be restored. Sometimes it is beneficial to pause or stop the topology so that the external system can recover, e.g. when the topology is ingesting data into that system.

``Note: Sometimes data spike can trigger external system slowdown``

Troubleshooting data spike

Data spikes can be resolved by adding more parallelism to the topology. This implies a balanced addition of executors and workers, i.e. incrementally adding more parallelism to the specific bolt and analyzing the capacity numbers after each change. Repeat this process until the bolt capacity is below 1.0.

``Note: Add more executors only when the bolt latencies are low or code is linearly scaling``

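Calculating current throughput versus expected throughput is critical for determining the correct configuration for parallelism and worker count. In the equation below, process latency is in milliseconds, so throughput is in tuples per second.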

**Throughput = Executor count * 1000/(process latency) * Capacity**
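As a worked example of the throughput equation, here is a minimal sketch. The function names, the sample numbers and the default target capacity of 0.8 are assumptions for illustration; the second function simply inverts the equation to estimate how many executors a target rate would need.

```python
import math


def throughput_per_sec(executors, process_latency_ms, capacity):
    """Throughput = executor count * 1000 / process latency (ms) * capacity."""
    return executors * (1000.0 / process_latency_ms) * capacity


def executors_needed(target_per_sec, process_latency_ms, target_capacity=0.8):
    """Invert the equation: executors required to hit a target rate
    while keeping each executor at target_capacity (an assumed headroom)."""
    per_executor = (1000.0 / process_latency_ms) * target_capacity
    return math.ceil(target_per_sec / per_executor)


# Hypothetical bolt: 8 executors, 4 ms process latency, capacity 0.5.
current = throughput_per_sec(executors=8, process_latency_ms=4.0, capacity=0.5)

# Executors needed for 5,000 tuples/sec at the same latency and capacity.
needed = executors_needed(5000, process_latency_ms=4.0, target_capacity=0.5)
```

The inversion only holds while latency stays flat as executors are added, which is the same condition the note above places on adding executors.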

``Note: Adding more executors doesn’t solve the problem if the supervisors are saturated``

Adding more workers to the topology to evenly balance the load across supervisors is critical for good performance. If more executors are scheduled per worker than there is CPU capacity for that worker, the result is CPU contention, with cascading performance effects on other topology workers running on that supervisor. A key way to analyze overallocation is to sum the number of executors in the cluster and divide by the total number of CPU cores across all supervisors. If this number is greater than 2, you have overallocated resources. This may not result in performance issues, however, because overallocating executors for IO-bound bolts is sometimes required for performance and parallelism.
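The overallocation check described above is simple arithmetic. A minimal sketch follows, assuming you have gathered executor counts per topology and core counts per supervisor yourself; the topology and supervisor names are hypothetical.

```python
def executors_per_core(executors_by_topology, cores_by_supervisor):
    """Total executors in the cluster divided by total supervisor CPU cores."""
    total_executors = sum(executors_by_topology.values())
    total_cores = sum(cores_by_supervisor.values())
    if total_cores == 0:
        raise ValueError("no CPU cores reported for any supervisor")
    return total_executors / total_cores


def is_overallocated(ratio, threshold=2.0):
    """Runbook rule of thumb: more than 2 executors per core is overallocated."""
    return ratio > threshold


# Hypothetical cluster: three topologies on three 16-core supervisors.
executors = {"ingest": 40, "enrich": 60, "index": 44}
cores = {"supervisor-1": 16, "supervisor-2": 16, "supervisor-3": 16}

ratio = executors_per_core(executors, cores)
overallocated = is_overallocated(ratio)
```

A ratio above the threshold is a prompt to look closer, not a verdict, since IO-bound bolts can legitimately run with more executors than cores.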

Known Issues

Ackers

Ackers in Storm can become a bottleneck if there are too few of them. They can also become a bottleneck if there aren't enough ackers per worker and acking traffic has to cross a slow network (seen in AWS multi-AZ deployments).

Tuple Failures

Tuple failures happen for 3 reasons:

1. Tuple timeouts

Tuple timeouts are the trickiest to resolve. They can happen for any of the following reasons:

- Slow sink combined with a badly chosen max spout pending

- Micro-batching with untuned batch sizes (data is not coming in fast enough for your batches to fill and you don't have time-based acking)

- Untuned max spout pending setting

- Incorrect tuple message timeout (default 30 seconds)

2. Bolt Exceptions

Bolt exceptions will show in topology worker logs.

3. Explicit failures in the code, i.e. calling the collector.fail() method

Explicit failures will be shown in Nimbus UI, in failure counts corresponding to the respective bolts.
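For the timeout case, a rough back-of-the-envelope check can show whether max spout pending and the message timeout are compatible with a slow sink. This is a heuristic sketch under simplifying assumptions (all in-flight tuples queue in front of one sink bolt, latency is constant); the function names and numbers are hypothetical, and it does not replace measuring complete latency in the UI.

```python
def worst_case_wait_secs(max_spout_pending, spout_tasks,
                         sink_latency_ms, sink_executors):
    """Approximate time for the last in-flight tuple to clear the sink.

    Assumes every pending tuple from every spout task is queued at the
    sink and that sink executors drain the queue in parallel.
    """
    in_flight = max_spout_pending * spout_tasks
    return in_flight * sink_latency_ms / sink_executors / 1000.0


def will_time_out(wait_secs, message_timeout_secs=30):
    """Compare the estimate with the tuple message timeout (default 30 s)."""
    return wait_secs > message_timeout_secs


# Hypothetical topology: 4 spout tasks, sink at 10 ms per tuple, 4 executors.
wait_high = worst_case_wait_secs(5000, 4, 10.0, 4)
wait_low = worst_case_wait_secs(2000, 4, 10.0, 4)
```

In this sketch, lowering max spout pending brings the estimated wait under the timeout without touching the sink, which is why the two settings need to be tuned together.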

Saturated Workers

Storm has the concept of slots, which can limit saturation and overallocation of memory but not of CPU / threads. This has to be maintained manually by engineers, i.e. be careful not to overprovision the number of threads (executors) / workers. Symptoms: the topology appears to run slow without any tuple failures, yet lag continues to build.

Created on 10-22-2018 07:16 AM - edited 08-17-2019 06:03 AM


Hi @Ambud Sharma,

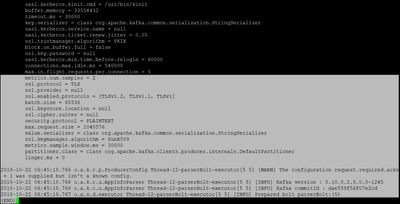

I am new to HCP and Storm, and I am running through the squid use case. The Kafka console consumer shows that Kafka is receiving data, and the Metron UI shows my squid sensor running, but throughput is 0 kb/s. In the Storm UI the squid topology is active with 1 worker and 5 executors, but no data is coming into the topology. In storm/workers-artifacts, worker.log.err is empty, and worker.log stops at the point shown in the image.