Configure StandardSSLContextService for Elasticsearch processors in NiFi

Labels: Apache NiFi

Created 09-11-2020 08:41 AM


Hello,

I am trying to configure the StandardSSLContextService controller service to work with either the PutElasticsearchHttpRecord or the PutElasticsearchRecord processor. The Elasticsearch cluster has been secured with Opendistro for Elasticsearch.

The following command succeeds:

openssl s_client -connect atlas201.cs:9200 -debug -state -cert esnode.pem -key esnode-key.pem -CAfile root-ca.pem

But when I try those keys and certs in the StandardSSLContextService I am unable to get it to work. I am not too familiar with Opendistro as this was already a pre-configured cluster that I am working on.

Could someone tell me what the following parameters need to be for me to successfully connect and ingest data into the ES cluster?

Created on 09-14-2020 01:18 PM - edited 09-14-2020 04:21 PM


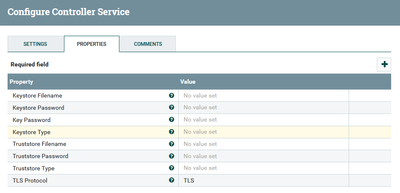

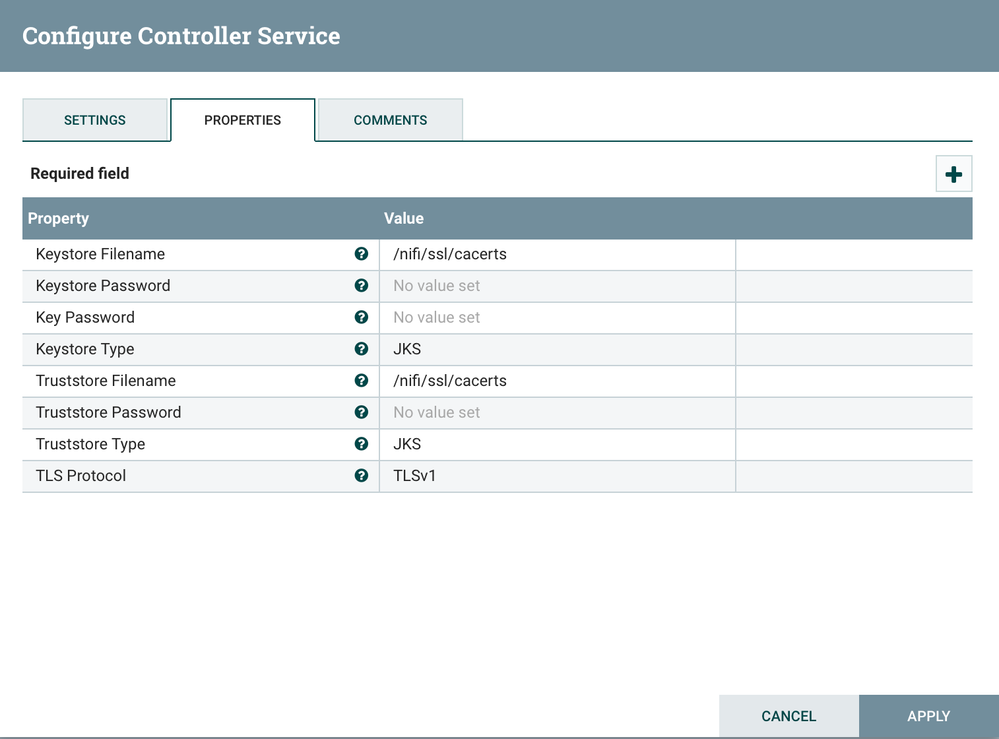

I suspect you have missed a step or a setting. The cacerts file works for me in all cases where the cert is publicly trusted (a standard public cert from a public CA), which it should be. Please share the configurations you tried and any errors you got from them. The bare minimum settings you need are the keystore (file location), password, key type (JKS), and TLS version. Assuming you copied your Java cacerts file to all nodes as /nifi/ssl/cacerts, the controller service properties should look like:

If cacerts doesn't work, then you must create keystores and/or truststores with the public cert. Use the openssl command to get the cert. Note that s_client expects host:port rather than a URL, so the command looks like:

openssl s_client -connect secure.domain.com:443

You can also get it from the browser when you visit the ELK interface, for example the cluster health or indexes pages. Click the lock icon in the browser's address bar, then use the browser's interface to view and download the public certificate. You need the .cer or .crt file. Then use the cert to create the keystore with keytool commands. An example is:

keytool -import -trustcacerts -alias ambari -file cert.cer -keystore keystore.jks

Once you have created a keystore/truststore file, copy it to all NiFi nodes, ensure the correct ownership, and make sure all the details are correct in the SSL Context Service. Lastly, you may need to try different TLS protocol settings until the connection test works.

Here is a working example of getting the cert and using it with keytool from a recent use case:

echo -n | openssl s_client -connect secure.domain.com:443 | sed -ne '/-BEGIN CERTIFICATE-/,/-END CERTIFICATE-/p' > publiccert.crt

keytool -import -file publiccert.crt -alias astra -keystore keyStore.jks -storepass password -noprompt

keytool -import -file publiccert.crt -alias astra -keystore trustStore.jks -storepass password -noprompt

mkdir -p /etc/nifi/ssl/

cp *.jks /etc/nifi/ssl

chown -R nifi:nifi /etc/nifi/ssl/
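
The commands above import only the server's public certificate. If the cluster also expects a client certificate, as the openssl s_client test in the question suggests, the PEM certificate and key have to be packaged into a store format that Java can read. Below is a minimal sketch, assuming the file names from the question (esnode.pem, esnode-key.pem, root-ca.pem). The function name, the aliases, and the `changeit` password are placeholders for illustration, not values from this thread.

```shell
# Hypothetical helper: bundle a PEM certificate and its private key
# into a PKCS12 keystore.
# Usage: pem_to_p12 <cert.pem> <key.pem> <out.p12> <password>
pem_to_p12() {
  openssl pkcs12 -export \
    -in "$1" -inkey "$2" \
    -name client \
    -out "$3" -passout "pass:$4"
}

# Example with the file names from the question (uncomment to run):
# pem_to_p12 esnode.pem esnode-key.pem keystore.p12 changeit
#
# The root CA can go into a separate truststore with keytool:
# keytool -importcert -noprompt -alias root-ca -file root-ca.pem \
#   -keystore truststore.jks -storepass changeit
```

With stores built this way, the Keystore Type in the SSL Context Service would be PKCS12 and the Truststore Type JKS. Passing a password on the command line exposes it in the process list, so this is only suitable for a quick test.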

Created 09-11-2020 09:25 AM


You need to configure your SSLContextService with a keystore/truststore built from the cert you get from the Elasticsearch cluster. You can also try the cacerts file that is included with your Java installation, which is usually easier. More details here for cacerts:

If this answer resolves your issue or allows you to move forward, please choose to ACCEPT this solution and close this topic. If you have further dialogue on this topic please comment here or feel free to private message me. If you have new questions related to your Use Case please create separate topic and feel free to tag me in your post.

Thanks,

Steven @ DFHZ

Created 09-14-2020 10:40 AM


Hi Steven,

I tried the method you linked but that didn't work.

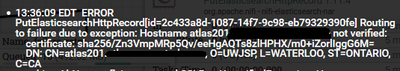

I also configured the SSLContextService with the keystore and truststore I got from Elasticsearch Cluster. But I get the following error now:

Is there any way to disable hostname verification, or to configure it so that hostname verification works correctly? I have blocked out the full hostnames, but I can assure you the hostname (atlas201.xx.xxx.xx) is the exact same as the specified CN (atlas.xx.xxx.xx)
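
One way to investigate a hostname verification failure like this is to compare the name NiFi connects to with the names stored in the server certificate. TLS clients generally match against subjectAltName entries when they are present, and some ignore the CN entirely, so a matching CN alone may not be enough. Below is a minimal sketch, assuming the node certificate is available as a PEM file; the function name is hypothetical.

```shell
# Hypothetical helper: print the subject and any subjectAltName
# entries of a PEM certificate.
# Usage: show_cert_names <cert.pem>
show_cert_names() {
  openssl x509 -in "$1" -noout -subject
  openssl x509 -in "$1" -noout -text \
    | grep -A1 'Subject Alternative Name' \
    || echo "no subjectAltName entries found"
}

# Example against the node certificate from the question (uncomment to run):
# show_cert_names esnode.pem
```

If the hostname used in the processor's URL does not appear in that output, reissuing the certificate with the right name is likely a safer fix than turning verification off.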
