Configuring Zeppelin Spark Interpreters

- Labels: Apache Zeppelin

Created 04-26-2016 08:20 PM

I installed Zeppelin manually on my node (not the sandbox). After following the instructions for configuring the Spark notebook, I noticed that running "sc.version" throws the error below:

sc.version

    java.net.ConnectException: Connection refused
        at java.net.PlainSocketImpl.socketConnect(Native Method)
        at java.net.AbstractPlainSocketImpl.doConnect(AbstractPlainSocketImpl.java:350)
        at java.net.AbstractPlainSocketImpl.connectToAddress(AbstractPlainSocketImpl.java:206)
        at java.net.AbstractPlainSocketImpl.connect(AbstractPlainSocketImpl.java:188)
        at java.net.SocksSocketImpl.connect(SocksSocketImpl.java:392)
        at java.net.Socket.connect(Socket.java:589)
        at org.apache.thrift.transport.TSocket.open(TSocket.java:182)
        at org.apache.zeppelin.interpreter.remote.ClientFactory.create(ClientFactory.java:51)
        at org.apache.zeppelin.interpreter.remote.ClientFactory.create(ClientFactory.java:37)
        at org.apache.commons.pool2.BasePooledObjectFactory.makeObject(BasePooledObjectFactory.java:60)
        at org.apache.commons.pool2.impl.GenericObjectPool.create(GenericObjectPool.java:861)
        at org.apache.commons.pool2.impl.GenericObjectPool.borrowObject(GenericObjectPool.java:435)
        at org.apache.commons.pool2.impl.GenericObjectPool.borrowObject(GenericObjectPool.java:363)
        at org.apache.zeppelin.interpreter.remote.RemoteInterpreterProcess.getClient(RemoteInterpreterProcess.java:142)
        at org.apache.zeppelin.interpreter.remote.RemoteInterpreter.getFormType(RemoteInterpreter.java:271)
        at org.apache.zeppelin.interpreter.LazyOpenInterpreter.getFormType(LazyOpenInterpreter.java:104)
        at org.apache.zeppelin.notebook.Paragraph.jobRun(Paragraph.java:199)
        at org.apache.zeppelin.scheduler.Job.run(Job.java:171)
        at org.apache.zeppelin.scheduler.RemoteScheduler$JobRunner.run(RemoteScheduler.java:326)
        at java.util.concurrent.Executors$RunnableAdapter.call(Executors.java:511)
        at java.util.concurrent.FutureTask.run(FutureTask.java:266)
        at java.util.concurrent.ScheduledThreadPoolExecutor$ScheduledFutureTask.access$201(ScheduledThreadPoolExecutor.java:180)
        at java.util.concurrent.ScheduledThreadPoolExecutor$ScheduledFutureTask.run(ScheduledThreadPoolExecutor.java:293)
        at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1142)
        at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:617)
        at java.lang.Thread.run(Thread.java:745)
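
The trace shows the Zeppelin server failing at `TSocket.open` while creating a client for the remote interpreter process, so nothing accepted the connection on the port it tried. As a rough aid, here is a minimal Python sketch that checks whether anything is listening on a given host and port. The function name `port_is_open` is made up for this illustration, and the host and port you pass in are assumptions you would need to take from your own interpreter settings or logs.

```python
import socket


def port_is_open(host: str, port: int, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port can be established."""
    try:
        # create_connection resolves the host and attempts a TCP connect.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers "connection refused", timeouts, and resolution failures.
        return False
```

For example, `port_is_open("localhost", 8080)` would report whether something accepts connections on local port 8080 (the port number here is only a placeholder). A `False` result for the interpreter's port would be consistent with the interpreter process never having started or having exited.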

Created on 04-27-2016 07:49 PM - edited 08-19-2019 03:43 AM

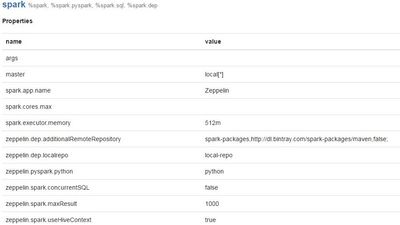

@Timothy Spann Yes, Spark is running in the cluster on its default port (I never changed it). I attached the configuration screen for the Spark interpreter. I can also access Spark from the command line and from the UI; both work perfectly. Thanks!

Created 04-27-2016 07:59 PM

A local master does not use the YARN version of Spark; it runs a local version. Is that running?

Is the green connected light on in the upper right corner?

Created 04-29-2016 03:22 PM

@Timothy Spann Should the master be set to "yarn-client" instead of local, as it is in "spark-yarn-interpreter"? The cluster is running Spark 1.6, which works perfectly from the command line, and yes, the green connected light is on in the upper right corner.

Created 09-12-2016 07:51 PM

Yes. If you use the Zeppelin that is now installed with Spark, this should be resolved.

Created 12-31-2016 10:24 PM

Please try running it in yarn-cluster mode.

Created 01-17-2017 10:50 PM

I have the same problem. I have tried all the suggestions above and still get the error message. I would appreciate your suggestions. @Koffi @Timothy Spann @Yogeshprabhu

Created 01-18-2017 01:09 AM

Are you using the out-of-the-box Zeppelin installed through Ambari, version 0.60?

How much RAM do you have?

What version of HDP? Ambari? JDK?

Does your cluster have Spark?

Any logs?

Created 01-06-2017 05:04 PM

This connection error usually means that the interpreter process has failed for some reason.

1. First, check the interpreter log in the logs directory.

2. Since you use yarn-client, I guess Spark has not been configured properly to use YARN. Check that the right yarn-site.xml and core-site.xml are in your $SPARK_CONF_DIR. Also check that SPARK_HOME and SPARK_CONF_DIR are set in your zeppelin-env.sh.

3. The spark-submit parameters are usually visible in the interpreter log, so you can take them from the log and try submitting an example application from the command line with the same parameters.

4. Sometimes spark-submit works but the YARN application master fails for some reason, so also check whether any application appears in your Spark web UI.
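
The checks in step 2 can be sketched as a small Python helper. This is only an illustration under assumptions: the function name `check_spark_env` is made up, and it inspects whatever environment mapping you hand it, which may differ from the environment Zeppelin actually gets after sourcing zeppelin-env.sh.

```python
from pathlib import Path

# Files that step 2 expects to find in $SPARK_CONF_DIR.
REQUIRED_FILES = ("yarn-site.xml", "core-site.xml")


def check_spark_env(env: dict) -> list:
    """Return a list of human-readable problems found in the given environment."""
    problems = []

    spark_home = env.get("SPARK_HOME")
    if not spark_home:
        problems.append("SPARK_HOME is not set")
    elif not Path(spark_home).is_dir():
        problems.append(f"SPARK_HOME does not exist: {spark_home}")

    conf_dir = env.get("SPARK_CONF_DIR")
    if not conf_dir:
        problems.append("SPARK_CONF_DIR is not set")
    else:
        # Report each expected config file that is missing from the directory.
        for name in REQUIRED_FILES:
            if not (Path(conf_dir) / name).is_file():
                problems.append(f"{name} not found in {conf_dir}")

    return problems
```

Calling `check_spark_env(dict(os.environ))` (after `import os`) from a shell where zeppelin-env.sh has been sourced should give a picture close to what Zeppelin sees. An empty list only means these basic checks passed, not that the files contain the right settings.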

Created 01-25-2018 10:13 PM

I had a similar stack trace. As suggested, I checked zeppelin-env.sh and noticed that SPARK_HOME was commented out. I corrected it, but we still get the same error. I would appreciate any further details that could help solve it. We are using HDP-2.6.1.12 with Ambari 2.5.
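
For anyone checking the same thing, a commented-out SPARK_HOME in zeppelin-env.sh can be spotted with a short Python sketch like the one below. The helper name `commented_out_only` is hypothetical, and the matching is deliberately simple: it only looks at `export NAME...` lines and does not evaluate the shell script.

```python
import re

# A commented-out export, e.g. "# export SPARK_HOME=/path" or "#export SPARK_HOME".
_COMMENTED = re.compile(r"^\s*#+\s*export\s+([A-Za-z_][A-Za-z0-9_]*)\b")
# An active export with a value, e.g. "export SPARK_HOME=/path".
_ACTIVE = re.compile(r"^\s*export\s+([A-Za-z_][A-Za-z0-9_]*)=")


def commented_out_only(text: str, names=("SPARK_HOME", "SPARK_CONF_DIR")) -> list:
    """Return the variables in `names` that appear only in commented-out exports."""
    active, commented = set(), set()
    for line in text.splitlines():
        match = _ACTIVE.match(line)
        if match:
            active.add(match.group(1))
            continue
        match = _COMMENTED.match(line)
        if match:
            commented.add(match.group(1))
    # Keep only names that are never exported on an active line.
    return [name for name in names if name in commented and name not in active]
```

Passing in the contents of your zeppelin-env.sh (read with `open(path).read()`, where the path depends on your installation) returns the names that still need to be uncommented and given a value. Zeppelin typically has to be restarted before changes to that file take effect.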

Created 04-04-2018 02:21 AM

May I know the fix for this error?
