I'm using a tool that requires me to specify the master node (driver node) of the Cloudera Spark cluster, in the form `spark://<some-spark-master>:7077`. As I understand it, Spark has a "Master Node", a "Driver Node" and "Worker Nodes".

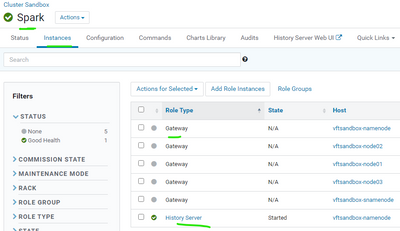

So I went to the Cloudera Manager web UI and checked the Configuration tab of the Spark service, but all I found were a "Gateway instance" and a "History Server instance". Where are the "Driver instance" and the "Worker instance"? I can't add these two instances under "Add Role Instances" either.

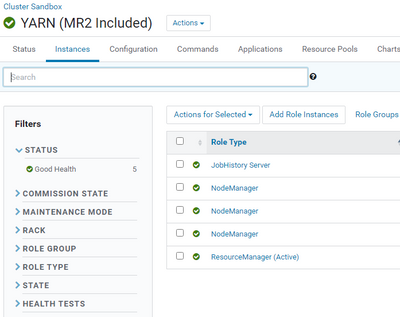

My guess is that they are in the YARN service configuration, but I can't find anything related to "Master", "Driver" or "Worker" there either.

So what is the "Spark Master" URL that ends with 7077, and which node does it point to? I can't find it anywhere in the Configuration tab.