Multiple HPROF files are getting generated with hdfs user

Labels: Apache Hadoop, Apache Spark

Created 05-03-2017 05:35 PM

Hi All,

I have a 3-node cluster running Cloudera 5.9 on CentOS 6.7.

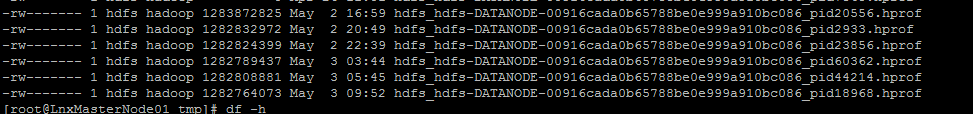

The NameNode host keeps accumulating .hprof files in the /tmp directory, which fills the / mount to 100% disk usage. The files are owned by hdfs:hadoop.
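To see how much space the dumps take and who owns them, something like the following could be run on the affected host. It is only a sketch: the function name `find_heap_dumps` is made up for illustration, and it looks only at `*.hprof` files directly inside the given directory (here `/tmp`, as described above).

```python
import pwd
from pathlib import Path


def find_heap_dumps(directory="/tmp"):
    """Return (path, size_bytes, owner) for every *.hprof file directly in `directory`."""
    dumps = []
    for path in Path(directory).glob("*.hprof"):
        if not path.is_file():
            continue
        st = path.stat()
        try:
            owner = pwd.getpwuid(st.st_uid).pw_name
        except KeyError:
            owner = str(st.st_uid)  # uid without a passwd entry
        dumps.append((str(path), st.st_size, owner))
    # Largest first, since those free the most space when removed.
    return sorted(dumps, key=lambda d: d[1], reverse=True)


if __name__ == "__main__":
    for path, size, owner in find_heap_dumps():
        print(f"{size / 1024**2:10.1f} MiB  {owner:10s}  {path}")
```

The heap dump file name normally contains the process ID of the JVM that wrote it, which can help match a dump to a role if the process is still known.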

I know an .hprof file is a heap dump that a Java process writes at the time of a failure. This is typically seen in scenarios with "java.lang.OutOfMemoryError".

Hence I increased the RAM of my NN from 56GB to 112GB.

My configs are:

yarn.nodemanager.resource.memory-mb - 12GB

yarn.scheduler.maximum-allocation-mb - 16GB

mapreduce.map.memory.mb - 4GB

mapreduce.reduce.memory.mb - 4GB

mapreduce.map.java.opts.max.heap - 3GB

mapreduce.reduce.java.opts.max.heap - 3GB

namenode_java_heapsize - 6GB

secondarynamenode_java_heapsize - 6GB

dfs_datanode_max_locked_memory - 3GB

dfs blocksize - 128 MB
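The values above can be cross-checked against each other. The sketch below encodes only relationships that are generally expected to hold (a container should fit on a NodeManager, and a task's JVM heap should be smaller than its container); the function name `check` and the warning texts are illustrative, not part of any Hadoop tool. Note that none of these properties sets the heap of the DataNode role itself.

```python
GB = 1024  # values below are in MB

config_mb = {
    "yarn.nodemanager.resource.memory-mb": 12 * GB,
    "yarn.scheduler.maximum-allocation-mb": 16 * GB,
    "mapreduce.map.memory.mb": 4 * GB,
    "mapreduce.reduce.memory.mb": 4 * GB,
    "mapreduce.map.java.opts.max.heap": 3 * GB,
    "mapreduce.reduce.java.opts.max.heap": 3 * GB,
}


def check(cfg):
    """Return a list of warnings for the relationships checked here."""
    warnings = []
    nm = cfg["yarn.nodemanager.resource.memory-mb"]
    max_alloc = cfg["yarn.scheduler.maximum-allocation-mb"]
    if max_alloc > nm:
        warnings.append(
            f"maximum-allocation-mb ({max_alloc}) exceeds NodeManager memory ({nm}): "
            "a container that large cannot fit on such a node"
        )
    for task in ("map", "reduce"):
        container = cfg[f"mapreduce.{task}.memory.mb"]
        heap = cfg[f"mapreduce.{task}.java.opts.max.heap"]
        if heap >= container:
            warnings.append(f"{task} heap ({heap}) leaves no headroom in its container ({container})")
        if container > nm:
            warnings.append(f"{task} container ({container}) exceeds NodeManager memory ({nm})")
    return warnings


for w in check(config_mb):
    print("WARNING:", w)
```

With the posted numbers only the first check fires: the scheduler maximum (16GB) is larger than the NodeManager memory (12GB). The 3GB task heaps fit inside their 4GB containers.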

The DataNode log on the NN host has the errors below, but they are also present on the other DNs (on all 3 nodes, basically):

2017-05-03 10:03:17,914 ERROR org.apache.hadoop.hdfs.server.datanode.DataNode: DataNode{data=FSDataset{dirpath='[/bigdata/dfs/dn/current]'}, localName='XXXX.azure.com:50010', datanodeUuid='4ea75665-b223-4456-9308-1defcad54c89', xmitsInProgress=0}:Exception transfering block BP-939287337-X.X.X.4-1484085163925:blk_1077604623_3864267 to mirror X.X.X.5:50010: java.net.SocketTimeoutException: 65000 millis timeout while waiting for channel to be ready for read. ch : java.nio.channels.SocketChannel[connected local=/X.X.X.4:43801 remote=X.X.X.5:50010]

2017-05-03 10:03:17,922 ERROR org.apache.hadoop.hdfs.server.datanode.DataNode: XXXX.azure.com:50010:DataXceiver error processing WRITE_BLOCK operation src: /X.X.X.4:53902 dst: /X.X.X.4:50010

java.net.SocketTimeoutException: 65000 millis timeout while waiting for channel to be ready for read. ch : java.nio.channels.SocketChannel[connected local=/X.X.X.4:43801 remote=/X.X.X.5:50010]

at org.apache.hadoop.net.SocketIOWithTimeout.doIO(SocketIOWithTimeout.java:164)

at org.apache.hadoop.net.SocketInputStream.read(SocketInputStream.java:161)

at org.apache.hadoop.net.SocketInputStream.read(SocketInputStream.java:131)

at org.apache.hadoop.net.SocketInputStream.read(SocketInputStream.java:118)

at java.io.FilterInputStream.read(FilterInputStream.java:83)

at java.io.FilterInputStream.read(FilterInputStream.java:83)

at org.apache.hadoop.hdfs.protocolPB.PBHelper.vintPrefixed(PBHelper.java:2241)

at org.apache.hadoop.hdfs.server.datanode.DataXceiver.writeBlock(DataXceiver.java:743)

at org.apache.hadoop.hdfs.protocol.datatransfer.Receiver.opWriteBlock(Receiver.java:169)

at org.apache.hadoop.hdfs.protocol.datatransfer.Receiver.processOp(Receiver.java:106)

at org.apache.hadoop.hdfs.server.datanode.DataXceiver.run(DataXceiver.java:246)

at java.lang.Thread.run(Thread.java:745)

2017-05-03 10:04:52,371 ERROR org.apache.hadoop.hdfs.server.datanode.DataNode: XXXX.azure.com:50010:DataXceiver error processing WRITE_BLOCK operation src: /X.X.X.4:54258 dst: /X.X.X.4:50010

java.io.IOException: Premature EOF from inputStream

at org.apache.hadoop.io.IOUtils.readFully(IOUtils.java:201)

at org.apache.hadoop.hdfs.protocol.datatransfer.PacketReceiver.doReadFully(PacketReceiver.java:213)

at org.apache.hadoop.hdfs.protocol.datatransfer.PacketReceiver.doRead(PacketReceiver.java:134)

at org.apache.hadoop.hdfs.protocol.datatransfer.PacketReceiver.receiveNextPacket(PacketReceiver.java:109)

at org.apache.hadoop.hdfs.server.datanode.BlockReceiver.receivePacket(BlockReceiver.java:500)

at org.apache.hadoop.hdfs.server.datanode.BlockReceiver.receiveBlock(BlockReceiver.java:896)

These errors show up in the log even at night or early in the morning, when nothing is running.
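One way to check when these errors actually occur is to bucket the ERROR entries of the DataNode log by hour. The sketch below assumes the timestamp layout seen in the entries above (`YYYY-MM-DD HH:MM:SS,mmm LEVEL ...`); the function name `errors_per_hour` is invented for this example.

```python
import re
from collections import Counter

# Start of a log entry, e.g. "2017-05-03 10:03:17,914 ERROR org.apache..."
ENTRY = re.compile(r"^(\d{4}-\d{2}-\d{2}) (\d{2}):\d{2}:\d{2},\d{3} (\w+) ")


def errors_per_hour(lines):
    """Count ERROR entries per 'YYYY-MM-DD HH:00' bucket.

    Stack-trace lines do not start with a timestamp, so they are skipped.
    """
    counts = Counter()
    for line in lines:
        m = ENTRY.match(line)
        if m and m.group(3) == "ERROR":
            counts[f"{m.group(1)} {m.group(2)}:00"] += 1
    return counts


sample = [
    "2017-05-03 10:03:17,914 ERROR org.apache.hadoop.hdfs.server.datanode.DataNode: ...",
    "2017-05-03 10:03:17,922 ERROR org.apache.hadoop.hdfs.server.datanode.DataNode: ...",
    "java.net.SocketTimeoutException: 65000 millis timeout ...",
    "2017-05-03 10:04:52,371 ERROR org.apache.hadoop.hdfs.server.datanode.DataNode: ...",
]
for hour, n in sorted(errors_per_hour(sample).items()):
    print(hour, n)
```

For a real log, the same function can be fed an open file object instead of the `sample` list, since it only iterates over lines.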

My cluster is used for fetching webpage info with wget and then processing the data with SparkR.

Apart from this, I am also getting a "block count more than threshold" alert, for which I have another thread: http://community.cloudera.com/t5/Storage-Random-Access-HDFS/Datanodes-report-block-count-more-than-t...

Please help!

Cluster configs (after recent upgrades):

NN: RAM- 112GB, Core 16, Disk : 500GB

DN1: RAM- 56GB, Core 8, Disk: 400GB

DN2: RAM- 28GB, Core 4, Disk: 400GB

Thanks,

Shilpa

Created 05-05-2017 05:19 PM

Hi All,

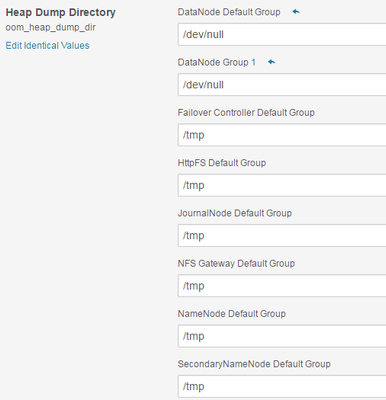

I found that the heap size of the DataNode role on my NameNode host was low (1 GB), so I increased it to 3 GB, and .hprof files are no longer being generated. I changed the heap dump path back to /tmp 24 hours ago to verify.

The setting is in Cloudera Manager under HDFS > Configuration > DataNode Default Group > Resource Management > Java Heap Size of DataNode in Bytes.
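Since the property name says the value is in bytes, the two heap sizes mentioned above convert as shown below. This is plain arithmetic and assumes "GB" here means binary gigabytes (GiB); the helper name `gib_to_bytes` is illustrative.

```python
def gib_to_bytes(gib):
    """Convert a size in GiB (1024**3 bytes each) to bytes."""
    return int(gib * 1024**3)


print(gib_to_bytes(1))  # 1073741824, the old DataNode heap
print(gib_to_bytes(3))  # 3221225472, the new DataNode heap
```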

Thanks,

Shilpa

Created 05-03-2017 08:12 PM

Hi, as a workaround you could stop the .hprof files from piling up by setting the JVM option -XX:HeapDumpPath to /dev/null instead of /tmp. Obviously, this will not resolve the root cause.
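As an illustration of what that change amounts to, the sketch below rewrites a JVM options string so that `-XX:HeapDumpPath` points at `/dev/null`. The options string in the example is hypothetical, not taken from this cluster, and `set_heap_dump_path` is a helper written only for this example; where the option is actually configured depends on how the role's Java options are managed.

```python
import shlex


def set_heap_dump_path(java_opts, path):
    """Return `java_opts` with -XX:HeapDumpPath set to `path`.

    Any existing -XX:HeapDumpPath=... entry is dropped so the option
    appears exactly once.
    """
    prefix = "-XX:HeapDumpPath="
    kept = [opt for opt in shlex.split(java_opts) if not opt.startswith(prefix)]
    kept.append(prefix + path)
    return " ".join(shlex.quote(opt) for opt in kept)


# Hypothetical options string for illustration only.
opts = "-Xmx1g -XX:+HeapDumpOnOutOfMemoryError -XX:HeapDumpPath=/tmp"
print(set_heap_dump_path(opts, "/dev/null"))
```

With `-XX:+HeapDumpOnOutOfMemoryError` still enabled, the JVM still attempts the dump on an OutOfMemoryError, but the output is discarded instead of filling the disk.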

Created on 05-03-2017 09:30 PM - edited 08-17-2019 07:40 PM

Hmm, actually I thought about it once but didn't do it. Until I find a resolution I need a workaround, so I have changed the heap dump path to /dev/null, but only for the DataNodes.

Thanks @Ward Bekker 🙂
