nifi node has very high memory usage even when no dataflow/shutdown

- Labels: Apache NiFi

Created 09-24-2018 12:21 PM

Hello,

I have a NiFi cluster of 3 nodes with an external ZooKeeper and a simple dataflow consisting of ConsumeKafka + JoltTransformJSON. All 3 NiFi nodes run on dedicated virtual machines with 32 GB RAM and 16 cores. The NiFi heap size is set to 8 GB. The problem I notice is that memory usage never decreases, even when all processors are stopped and the queues are empty.

Heap usage while the dataflow is running is usually around 40~70% (heap size is set to 8 GB), which isn't too bad. However, according to the VM monitoring tool, memory usage is usually around 80% (of the full 32 GB RAM, and NiFi is the only process that runs on each VM). After the dataflow is done, there is no significant decrease in heap or memory usage: heap usage is around 40~50% and memory is at least 60%. Restarting NiFi brings memory down from 90% to 70%. I tried stopping all NiFi processes, and memory usage was still at 60%. So I have to restart each VM after 2 or 3 runs for best performance.

What could cause this memory behavior? From my understanding, flowfiles and their content are stored in memory while they are in a queue. So ideally, there shouldn't be much memory usage when the queues are empty and all processors are stopped? I read a post which points out that some archiving/cleanup goes on even when no dataflow is running, but memory usage should eventually decrease to a normal level, right?
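As a quick sanity check on the numbers above, the heap can only account for a limited share of the VM's RAM. The sketch below is plain arithmetic on the figures from the post (8 GB heap, 40~70% heap usage, 32 GB RAM); the function name `heap_share_of_ram` is made up for illustration.

```python
def heap_share_of_ram(heap_gb, heap_used_fraction, ram_gb):
    """Fraction of total RAM taken up by the used part of the JVM heap."""
    return heap_gb * heap_used_fraction / ram_gb


# Figures from the post: 8 GB heap at 40~70% usage on a 32 GB VM.
low = heap_share_of_ram(8, 0.40, 32)
high = heap_share_of_ram(8, 0.70, 32)
print(f"Used heap is roughly {low:.1%} to {high:.1%} of RAM")
```

Even a completely full 8 GB heap is only 25% of 32 GB, so a VM-level reading of 80% cannot come from the heap alone.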

Created 09-25-2018 06:07 PM

How are you measuring the memory being utilized by NiFi?

FYI, the content of a flowfile is not stored in memory; only the properties and attributes associated with the physical file in the content repository are held in JVM heap memory. The only time the content is in memory is when a processor that deals with the content of a file is in the flow.

Created on 09-26-2018 09:41 PM - edited 08-17-2019 10:21 PM

Hi Wynner,

Thanks for looking into my problem. I use a virtualization tool to see overall memory and CPU usage, and the figures I mentioned above (memory at 80%) all came from it.

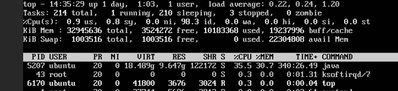

Now I use the top command to examine performance more closely. It shows that a large part of the memory usage is buff/cache, which can be around 20 GB even when no dataflow is running and the queues are all empty. So I am wondering what is stored there and why it is not released after the dataflow is done. NiFi is the only Java program running on each instance.

Thanks,

leah
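To separate buff/cache from memory that processes are actually using, one option is to read `/proc/meminfo` directly. The sketch below assumes a Linux host; the function names are hypothetical, and the formula (buff/cache = Buffers + Cached + SReclaimable, used = total - free - buff/cache) only approximates what `top` and `free` report, since the exact calculation differs between procps versions. On Linux, buff/cache is mostly page cache for file data, which the kernel can reclaim when applications need memory.

```python
def parse_meminfo(text):
    """Parse /proc/meminfo-style text into a dict of integer values (kB)."""
    values = {}
    for line in text.splitlines():
        name, sep, rest = line.partition(":")
        if not sep:
            continue  # skip lines that are not "Name: value" pairs
        fields = rest.split()
        if fields:
            values[name.strip()] = int(fields[0])
    return values


def memory_breakdown(meminfo):
    """Split total memory into used, free, and buff/cache (all in kB).

    Assumption: buff/cache = Buffers + Cached + SReclaimable, which is an
    approximation of the figure shown by top/free, not an exact match.
    """
    total = meminfo["MemTotal"]
    free = meminfo["MemFree"]
    buff_cache = (
        meminfo.get("Buffers", 0)
        + meminfo.get("Cached", 0)
        + meminfo.get("SReclaimable", 0)
    )
    return {
        "total_kb": total,
        "free_kb": free,
        "buff_cache_kb": buff_cache,
        "used_kb": total - free - buff_cache,
        "available_kb": meminfo.get("MemAvailable"),  # None on old kernels
    }


def read_system_breakdown(path="/proc/meminfo"):
    """Read the live values from the running Linux system."""
    with open(path) as handle:
        return memory_breakdown(parse_meminfo(handle.read()))
```

Calling `read_system_breakdown()` on each node and comparing `used_kb` with `buff_cache_kb` shows how much of the reported usage is cache rather than process memory.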

Created 10-01-2018 06:44 PM

NiFi actually runs as two Java processes: the NiFi process itself and a bootstrap process. The bootstrap process has a very small memory footprint, 12 MB to 24 MB, and watches for the NiFi Java process; if it doesn't find that process, it will try to start it. The NiFi Java process will reserve the memory allocated, in this case 8 GB, and hold it. It doesn't actually use all of that memory, but reserves it so it is available as NiFi needs it. There won't be any garbage cleanup until heap usage is around 80% utilization.
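The difference between reserved and actually used memory can be observed per process on Linux. The sketch below is one possible way to check it, assuming a Linux host where `/proc/<pid>/status` is readable: `VmSize` is the address space the process has reserved, and `VmRSS` is the part currently resident in RAM. The function names are hypothetical, and the PIDs of the NiFi and bootstrap processes have to be looked up separately (for example with `ps`).

```python
def parse_status_kb(text, fields=("VmSize", "VmRSS")):
    """Extract selected kB fields from /proc/<pid>/status-style text."""
    result = {}
    for line in text.splitlines():
        name, sep, rest = line.partition(":")
        if sep and name in fields:
            # Lines look like "VmRSS:\t 3500000 kB"; take the number only.
            result[name] = int(rest.split()[0])
    return result


def read_process_memory(pid):
    """Return reserved (VmSize) and resident (VmRSS) memory for a PID, in kB."""
    with open(f"/proc/{pid}/status") as handle:
        return parse_status_kb(handle.read())
```

If `VmRSS` for the NiFi process stays well below the total the VM monitor reports, the remainder is being held elsewhere, for example in the operating system's buff/cache.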