HDF 2.x : MultiNode NiFi Clusters on AWS EC2 for S...

Guru

Created on 03-14-2017 02:09 PM - edited on 04-21-2026 05:24 AM by GrazittiAPI

Introduction

What we’re doing:

- Committing to <2 hours setup time

- Deploying a matched pair of 3 node HDF clusters into two different regions on AWS EC2

- Configuring some basic performance optimisations in EC2/NiFi

- Setting up site-to-site to work with EC2's public/private FQDNs

What you will need:

- AWS account with access / credits to deploy 3x EC2 machines in each of two different regions

- Approx. 80 GB of SSD disk per machine (six machines, 480 GB total)

- 3x Elastic IPs per region

- (Preferable) iTerm or similar ssh client with broadcast capability

- You should use two different AWS regions for your clusters; I’ll refer to them as regionA and regionB

Caveats:

- We’re going to use a script to set up OS pre-requisites and deploy HDF on the server nodes to save time, as this article is specific to AWS EC2 setup and not generic HDF deployment

- We’re not setting up HDF Security, though we will restrict access to our nodes to specific IPs

- This is not best practice for all use cases, particularly on security; you are advised to take what you learn here and apply it intelligently to your own environment needs and use cases

- You don't have to use Elastic IPs, but they'll persist through environment reboots and therefore prevent FQDN changes causing your services to need reconfiguration

Process

Part 1: EC2 Public IPs, Instance & OS setup, and HDF packages deployment

Create Public IPs

- Login to your AWS:EC2 account and select regionA

- Select the ‘Elastic IPs’ interface

- Allocate 3x new addresses, note them down

- Switch to regionB and repeat
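The same allocation can be scripted with the AWS CLI instead of the console. The following is a minimal sketch, assuming the AWS CLI is installed and configured; the `DRY_RUN` switch, the `run_aws` and `allocate_eips` helper names, and the example region names are illustrative and not part of the original walkthrough.

```shell
#!/bin/sh
# Hypothetical wrapper: with DRY_RUN set, print the command instead of running it.
run_aws() {
    if [ -n "${DRY_RUN:-}" ]; then
        echo aws "$@"
    else
        aws "$@"
    fi
}

# Allocate <count> Elastic IPs in <region>; prints one AllocationId per address.
allocate_eips() {
    region="$1"
    count="$2"
    i=0
    while [ "$i" -lt "$count" ]; do
        run_aws ec2 allocate-address --domain vpc --region "$region" \
            --query AllocationId --output text
        i=$((i + 1))
    done
}

# Usage (region names are placeholders for regionA and regionB):
#   DRY_RUN=1 allocate_eips us-east-1 3   # preview only
#   allocate_eips eu-west-1 3             # actually allocate
```

Running with `DRY_RUN=1` first is a cheap way to confirm the region before any addresses are allocated and billed.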

Launch EC2 instances

- Run the ‘Launch Instance’ wizard

- From ‘AWS Marketplace’, select ‘CentOS 7 (x86_64) - with Updates HVM’

- Select ‘t2.xlarge’ (4x16)

- This is the minimum that will reasonably run the HDF stack, choose bigger if you prefer.

- Same for Node count and disk size below

- Set Number of Instances to ‘3’

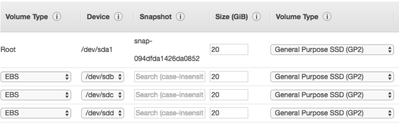

- Set root volume to 20 GB General Purpose SSD

- Add 3x New Volumes of the same configuration

- Set the ‘Name’ tag to something meaningful

- Create a new security group called ‘HDF Performance Test’

- Set a rule to allow ‘All traffic’ from ‘My IP’

- Add 3x new rules to allow ‘All Traffic’ from the Elastic IPs in regionB (the other region) that you created earlier

- Add the local subnet for internode communication

- Optional: You could create rules for the specific ports required, at the cost of a much longer configuration. Ports 22 (ssh), 8080 (Ambari), 9090-9092 (NiFi) should be sufficient for a no-security install

- Review your configuration options, and hit Launch

- Select your ssh key preference; either use an existing key or create a new one and download it

- Once the launcher completes, go to Elastic IPs

- Associate each Instance with an Elastic IP

- Note down the Private and Public DNS (FQDN) for each instance; the Public DNS names should have similar values to the Elastic IPs you allocated

- While the deployment finishes, repeat Steps 1 - 13 in the other region to create your matching sets of EC2 instances
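If you prefer the port-specific security group rules mentioned as optional above, they can be added from the AWS CLI. This is a rough sketch, assuming an existing security group; the `allow_hdf_ports` and `run_aws` helpers, the `DRY_RUN` switch, and the group ID and CIDR in the usage lines are placeholders rather than values from this article.

```shell
#!/bin/sh
# Hypothetical wrapper: with DRY_RUN set, print the command instead of running it.
run_aws() {
    if [ -n "${DRY_RUN:-}" ]; then
        echo aws "$@"
    else
        aws "$@"
    fi
}

# Open ssh (22), Ambari (8080) and NiFi (9090-9092) to a single source CIDR.
allow_hdf_ports() {
    region="$1"
    group_id="$2"
    cidr="$3"
    for ports in 22 8080 9090-9092; do
        run_aws ec2 authorize-security-group-ingress --region "$region" \
            --group-id "$group_id" --protocol tcp --port "$ports" --cidr "$cidr"
    done
}

# Usage: call once for your own IP and once per Elastic IP of the other region.
#   allow_hdf_ports us-east-1 sg-0123456789abcdef0 203.0.113.10/32
```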

OS Setup and package installs

- (optional) Launch iTerm, and open a broadcast tab for every node

- Otherwise issue commands to each node sequentially (much slower)

    # Update OS packages
    yum update -y

    # Format the three additional volumes
    mkfs -t ext4 /dev/xvdb
    mkfs -t ext4 /dev/xvdc
    mkfs -t ext4 /dev/xvdd

    # Create and mount one directory per NiFi repository
    mkdir /mnt/nifi_content
    mkdir /mnt/nifi_flowfile
    mkdir /mnt/nifi_prov
    mount /dev/xvdb /mnt/nifi_content/
    mount /dev/xvdc /mnt/nifi_flowfile/
    mount /dev/xvdd /mnt/nifi_prov/

    # Persist the mounts across reboots
    echo "/dev/xvdb /mnt/nifi_content ext4 errors=remount-ro 0 1" >> /etc/fstab
    echo "/dev/xvdc /mnt/nifi_flowfile ext4 errors=remount-ro 0 1" >> /etc/fstab
    echo "/dev/xvdd /mnt/nifi_prov ext4 errors=remount-ro 0 1" >> /etc/fstab
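One thing to watch when broadcasting these commands: re-running the `echo ... >> /etc/fstab` lines appends duplicate entries. A small guard, sketched below, appends an entry only when the device is not already listed. The `add_fstab_entry` helper and the `FSTAB` override (handy for trying it against a scratch file) are illustrative additions, not part of the original steps.

```shell
#!/bin/sh
# Append an fstab entry for <device> <mountpoint> only if the device is not listed yet.
# FSTAB is a hypothetical override; it defaults to the real /etc/fstab.
add_fstab_entry() {
    dev="$1"
    mnt="$2"
    fstab="${FSTAB:-/etc/fstab}"
    if grep -q "^${dev}[[:space:]]" "$fstab" 2>/dev/null; then
        echo "skip: $dev already in $fstab"
    else
        echo "$dev $mnt ext4 errors=remount-ro 0 1" >> "$fstab"
        echo "added: $dev -> $mnt"
    fi
}

# Usage (as root), mirroring the three volumes used in this article:
#   add_fstab_entry /dev/xvdb /mnt/nifi_content
#   add_fstab_entry /dev/xvdc /mnt/nifi_flowfile
#   add_fstab_entry /dev/xvdd /mnt/nifi_prov
```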

- We’re going to use a script to do a default install of Ambari for HDF, as we’re interested in looking at NiFi rather than overall HDF setup

- https://community.hortonworks.com/articles/56849/automate-deployment-of-hdf-20-clusters-using-ambar....

- Follow steps 1 – 3 in this guide, reproduced here for convenience

- Tip: using the command ‘curl icanhazptr.com’ will provide you the FQDN of the current ssh session for convenience

- Run these commands as root on the first node, which I assume will be running Ambari server

    export hdf_ambari_mpack_url=http://public-repo-1.hortonworks.com/HDF/centos7/2.x/updates/2.1.2.0/tars/hdf_ambari_mp/hdf-ambari-mpack-2.1.2.0-10.tar.gz
    yum install -y git python-argparse
    git clone https://github.com/seanorama/ambari-bootstrap.git
    export install_ambari_server=true
    ~/ambari-bootstrap/ambari-bootstrap.sh
    ambari-server install-mpack --mpack=${hdf_ambari_mpack_url} --purge --verbose  # enter 'yes' to purge at prompt
    ambari-server restart

- Assuming Ambari will run on the first node in the cluster, run these commands as root on every other node

    export ambari_server=<FQDN of host where ambari-server will be installed>
    export install_ambari_server=false
    curl -sSL https://raw.githubusercontent.com/seanorama/ambari-bootstrap/master/ambari-bootstrap.sh | sudo -E sh
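The Private and Public FQDNs used throughout this guide normally follow EC2's default IP-based naming pattern, so you can work out what to expect from an IP address and region. The sketch below assumes that default naming (no custom DHCP option set or resource-based hostnames); the function names are illustrative.

```shell
#!/bin/sh
# Derive the default EC2 public DNS name from a public IPv4 address and region.
ec2_public_fqdn() {
    dashed=$(printf '%s' "$1" | tr '.' '-')
    if [ "$2" = "us-east-1" ]; then
        echo "ec2-${dashed}.compute-1.amazonaws.com"
    else
        echo "ec2-${dashed}.$2.compute.amazonaws.com"
    fi
}

# Derive the default EC2 private DNS name from a private IPv4 address and region.
ec2_private_fqdn() {
    dashed=$(printf '%s' "$1" | tr '.' '-')
    if [ "$2" = "us-east-1" ]; then
        echo "ip-${dashed}.ec2.internal"
    else
        echo "ip-${dashed}.$2.compute.internal"
    fi
}

# Usage (addresses are documentation examples, not real hosts):
#   ec2_public_fqdn 203.0.113.25 eu-west-1
#   ec2_private_fqdn 10.0.0.12 eu-west-1
```

Treat the output as a cross-check against what the EC2 console or `curl icanhazptr.com` reports, not as a replacement for it.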

Part 2: Deploy and Configure multi-node HDF Clusters on AWS EC2

HDF Cluster Deployment

- Open a browser to port 8080 of the public FQDN of the Ambari Server

- Login using the default credentials of admin/admin

- Select ‘Launch Install Wizard’

- Name your cluster

- Accept the default versions and repos

- Fill in these details:

- Provide the list of Private FQDNs in the ‘Target Hosts’ panel

- Select ‘Perform manual registration on hosts’ and accept the warning

- Wait while hosts are confirmed, then hit Next

- If this step fails, check you provided the Private FQDNs, and not the Public FQDNs

- Select the following services: ZooKeeper, Ambari Infra, Ambari Metrics, NiFi

- Service layout

- Accept the default Master Assignment

- Use the ‘+’ key next to the NiFi row to add NiFi instances until you have one on each Node

- Unselect the Nifi Certificate Service and continue

- Customize Services

- Provide a Grafana Admin Password in the ‘Ambari Metrics’ tab

- Provide Encryption Passphrases in the NiFi tab, they must be at least 12 characters

- When you hit Next you may get Configuration Warnings from Ambari; resolve any Errors and continue

- Hit Deploy and monitor the process

- Repeat steps 1 – 13 on the other cluster
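If host confirmation fails in the wizard, it can help to see which host names the agents actually registered under. The sketch below queries Ambari's REST endpoint `/api/v1/hosts` and pulls out the `host_name` values. It assumes the default admin/admin credentials and port 8080 used in this article; the helper names are illustrative, and the text-based JSON parsing is a convenience for a no-dependency check, not a robust parser.

```shell
#!/bin/sh
# Extract host_name values from Ambari's /api/v1/hosts JSON read on stdin.
parse_host_names() {
    grep -o '"host_name"[[:space:]]*:[[:space:]]*"[^"]*"' \
        | sed 's/.*"\([^"]*\)"$/\1/'
}

# List the hosts registered with the Ambari server at <fqdn>.
list_registered_hosts() {
    curl -s -u admin:admin "http://$1:8080/api/v1/hosts" | parse_host_names
}

# Usage: the names printed should be the Private FQDNs entered in 'Target Hosts'.
#   list_registered_hosts <public FQDN of the Ambari server>
```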

NiFi Service Configuration for multi-node cluster on AWS EC2

- Login to the cluster

- In the NiFi service panel, go to the Configs tab

- Enter ‘repository’ in the top right filter box, and change the following

- Nifi content repository default dir = /mnt/nifi_content

- Nifi flowfile repository dir = /mnt/nifi_flowfile

- Nifi provenance repository default dir = /mnt/nifi_prov

- Enter ‘mem’ in the filter box:

- Set Initial memory allocation = 2048m

- Set Max memory allocation = 8096m

- Enter ‘port’ in the filter box:

- Note down the NiFi HTTP port (Non-SSL), default is 9090

- Set nifi.remote.input.socket.port = 9092

- Save your changes

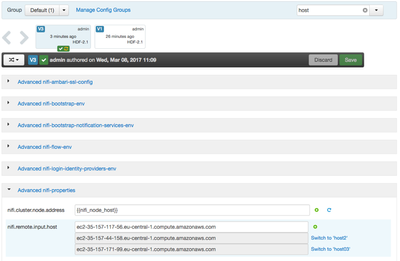

- Enter ‘nifi.remote.input.host’ in the filter box:

- Note that we must use specific config groups to work around EC2’s NAT configuration

- Set nifi.remote.input.host = <Public FQDN of first NiFi Node>

- Save this value

- Click the ‘+’ icon next to this field to Override the field

- Select to create a new NiFi Configuration Group, name it host02

- Set nifi.remote.input.host = <Public FQDN of the second NiFi node>

- Save this value

- Repeat for each NiFi node in the cluster

- When all values are set in config groups, go to the ‘Manage Config Groups’ link near the filter box

- Select each config group and use the plus key to assign a single host to it. The goal is that each host has a specific public FQDN assigned to this parameter

- Check your settings and restart the NiFi service

- You can watch the NiFi service startup and cluster voting process by using the command ‘tail -f /var/log/nifi/nifi-app.log’ on an ssh session on one of the hosts.

- NiFi is up when the jetty server reports the URLs it is listening on in the log, by default this is http://<public fqdn>:9090/nifi
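After the restart, a quick way to confirm that each config group landed on the right host is to read `nifi.remote.input.host` from that node's `nifi.properties` and compare it with the node's public FQDN. The sketch below does that; the helper names are illustrative, and the location of `nifi.properties` varies by install, so it is passed in as an argument.

```shell
#!/bin/sh
# Print the value of <key> from a Java-style properties <file> (exact key match).
get_prop() {
    awk -F= -v k="$2" '$1 == k { sub(/^[^=]*=/, ""); print; exit }' "$1"
}

# Compare nifi.remote.input.host in <file> with the <expected> public FQDN.
check_s2s_host() {
    actual=$(get_prop "$1" nifi.remote.input.host)
    if [ "$actual" = "$2" ]; then
        echo "ok: $actual"
    else
        echo "mismatch: got '$actual', want '$2'"
        return 1
    fi
}

# Usage on each node (the path is a placeholder for your install):
#   check_s2s_host /path/to/nifi.properties "$(curl -s icanhazptr.com)"
```

A mismatch usually means a host was left in the default config group or assigned to the wrong override group.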

Summary

In this article we have deployed sets of AWS EC2 instances for HDF clusters, prepared and deployed the necessary packages, and set the necessary configuration parameters to allow NiFi SiteToSite to operate behind the AWS EC2 NAT implementation.

In the next article I will outline how to build the Dataflow for generating a small files performance test, and pushing that data efficiently via SiteToSite.
