Community Articles

- Cloudera Community

- Support

- Community Articles

- Using Sqoop to fetch many tables in parallel

- Subscribe to RSS Feed

- Mark as New

- Mark as Read

- Bookmark

- Subscribe

- Printer Friendly Page

- Report Inappropriate Content

- Subscribe to RSS Feed

- Mark as New

- Mark as Read

- Bookmark

- Subscribe

- Printer Friendly Page

- Report Inappropriate Content

Created on 03-18-2016 02:40 PM

Introduction

This wiki page describes the script sqoopTables.sh (GitHub: https://github.com/sourygnahtw/hadoopUtils/blob/master/scripts/sqoop/sqoopTables.sh).

The purpose of the script is to sqoop in parallel many tables and to store them into Hive.

Motivations

In a previous project, I needed to download around 400 tables (out of 500) of a SQLserver database.

Most of the tables were quite small (less than a few MB), which means that the overhead of fetching the metadata in Sqoop (establishing the connexions, getting the DDL of the table...) is very important compared to the time to do the real job (download the data). Getting the 400 tables could take around 6 hours.

To speed up the downloading process, Sqoop usually "splits" a table in 4 parts, to parallelise the downloading process in 4 streams. In my project, such approach was not working:

- Many tables had not clear primary keys or the distribution of those keys was not uniform. Trying to find better split columns for 400 tables was a waste of time...

- Trying to split a table in several parts for downloading is sometimes not optimal for the database (how are those rows stored on disk?)

- Most of the tables have few data so trying to speed the download of data won't make lot of improvement. We need to speedup the fetching of the metadata.

So the idea of the script is, instead of parallelising the downloading of the data for 1 single table into several stream, to download several tables at the same time. And each table will have only 1 single stream of download.

With that approach, I was able to download the 400 tables in less than 1 hour.

Examples of execution

The easiest way to execute the script is (take care to first configure the SQL driver, user and password. See next chapter):

./sqoopTables.sh fileWithListOfTable

With fileWithListOfTable being a file that lists all the tables we want to Sqoop.

For instance, if we want to Sqoop the 6 tables table1, table2, table3, table4, table5, table6, then the file must contain only 6 lines, 1 for each table:

table1 table2 table3 table4 table5 table6

The script will launch first 4 Sqoop processes, to download the first 4 tables. When one of those first processes finishes, the script launch another Sqoop process for "table5". So that we will always have 4 active Sqoop processes till there is no more table to sqoop.

You can also use some options to tune the script behaviour. For instance:

./sqoopTables.sh -d myDatabase2 -H myHiveDatabase3 -p 6 -q etl listOfTables

In this case, we change the name of the relational and Hive databases. We also change the parallelism to have 6 Sqoop processes working at the same time. And we choose the "etl" Yarn queue instead of the default one.

Configuration

The default configuration can be encountered at the beginning of the script. You will have to change the default values or override them on the command line.

Here are the variables that can be modified:

origServer=myRelationalDatabase.example.com # The FQDN of the relational database you want to fetch (option: -o) origDatabase=myDatabase # The names of the database that contains the tables to fetch (option: -d) hiveDatabase=myHiveDatabase # The name of the Hive database that will get the tables fetched (option: -H) parallelism=4 # The number of tables (sqoop processes) you want to download at the same time (option: -p) queue=default # The queue used in Yarn (option: -q) baseDir=/tmp/sqoopTables # Base directory where will be stored the log files (option: -b) dirJavaGeneratedCode=/tmp/doSqoopTable-`id -u`-tmp # Directory for the java code generated by Sqoop (option: -c) targetTmpHdfsDir=/tmp/doSqoopTable-`id -u`-tmp/$$ # Temporary directory in HDFS to store downloaded data before moving it to Hive

Important!

This script is focused to SQLserver. Search the "sqoop import" line (in the middle of the script) and change the header of the URL appropriately. Take care also to change in this line the user and password needed to connect to the relation database.

Some few more notes

Logging

The script shows on the standard output the name of each table it has started to download, so that you can easily know how much part of the work defined in the "listOfTables" table has been accomplished.

It also stores more information in the logging directory (by default: /tmp/sqoopTables). For each parallelisation stream (4 streams by default), you will have 2 kind of files available:

- process_N-summary.log: an overview log. After executing the script, you should always have a look at those files. You may see the Hive stats (for instance: "[numFiles=1, totalSize=1263]"). But you must also get sure that there is no java error, due to an error when trying to Sqoop some tables (the standard output won't show up errors, this is why you must have a look at those files).

- process_N-raw.log: this is the whole standard output of each Sqoop execution for all the tables downloaded by this stream. After having finishing downloading a table, the script will "tail" the last 6 lines of this file and write them in the process_N-summary.log file. That is why the "summary log" is a quick way to detect errors. The "raw log" enables you to get enough details to debug any issue that might happen.

Parallelism

By default, the script uses 4 streams, meaning that 4 tables will be sqooped at the same time (thus, 4 connections to the relational database will be established). This number was chosen because Sqoop uses 4 as a default.

However, this number is quite conservative and you might easily put a higher degree of parallelism (even more if your tables are quite small). In my SQLserver project for instance, I have set that number to 12. For another Teradata project, I used 54 (more due to a limitation of containers on the Hadoop side than a limitation on the Teradata).

To avoid wasting containers, the script makes use of ubertask.

Mapping of the name of the tables

The names of the tables in Hive might be a bit different from the names in the relational database.

In the middle of the doSqoop() function, there is an example (commented) showing how to establish some mappings.

For instance, you might want to change all the table names that start with "raw_" by "ro_".

Created on 09-10-2016 12:18 AM

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Report Inappropriate Content

Thank you for sharing your script on Github.

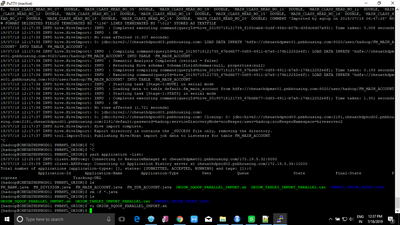

I made some changes to the sqoop import command and I ran the script with 4 concurrent imports, from MySQL to Hive:

sqoop import -D mapreduce.job.queuename=$queue -D mapreduce.job.ubertask.enable=true --connect jdbc:mysql://$origServer:3306/$origDatabase --username=$myUser --password=$myPassword --driver com.mysql.jdbc.Driver --connection-manager org.apache.sqoop.manager.GenericJdbcManager --query "select a.* from $origTable a where \$CONDITIONS" -m 1 --fields-terminated-by '\t' --outdir $dirJavaGeneratedCode --hcatalog-home $hCatalogHome --hcatalog-database $hiveDatabase --hcatalog-table $myTable --create-hcatalog-table --hcatalog-storage-stanza 'stored as orc tblproperties ("orc.compress"="ZLIB")' >> $logFileRaw 2>> $logFileRaw

I'm importing 971 tables from MySQL for a total size on disk of about 5GB.

I'm getting the following error after importing (successfully) about 130 tables:

16/09/09 15:52:27 INFO mapreduce.Job: Task Id : attempt_1473456475868_0168_m_000000_0, Status : FAILED Error: org.apache.hadoop.ipc.RemoteException(java.io.IOException): File /apps/hive/warehouse/development_testing.db/financialprograms/_SCRATCH0.047695628142107815/_temporary/1/_temporary/attempt_1473456475868_0168_m_000000_0/part-m-00000 could only be replicated to 0 nodes instead of minReplication (=1). There are 4 datanode(s) running and no node(s) are excluded in this operation. at org.apache.hadoop.hdfs.server.blockmanagement.BlockManager.chooseTarget4NewBlock(BlockManager.java:1592) at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.getNewBlockTargets(FSNamesystem.java:3158) at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.getAdditionalBlock(FSNamesystem.java:3082) at org.apache.hadoop.hdfs.server.namenode.NameNodeRpcServer.addBlock(NameNodeRpcServer.java:822) at org.apache.hadoop.hdfs.protocolPB.ClientNamenodeProtocolServerSideTranslatorPB.addBlock(ClientNamenodeProtocolServerSideTranslatorPB.java:500) at org.apache.hadoop.hdfs.protocol.proto.ClientNamenodeProtocolProtos$ClientNamenodeProtocol$2.callBlockingMethod(ClientNamenodeProtocolProtos.java) at org.apache.hadoop.ipc.ProtobufRpcEngine$Server$ProtoBufRpcInvoker.call(ProtobufRpcEngine.java:616) at org.apache.hadoop.ipc.RPC$Server.call(RPC.java:969) at org.apache.hadoop.ipc.Server$Handler$1.run(Server.java:2206) at org.apache.hadoop.ipc.Server$Handler$1.run(Server.java:2202) at java.security.AccessController.doPrivileged(Native Method) at javax.security.auth.Subject.doAs(Subject.java:422) at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1709) at org.apache.hadoop.ipc.Server$Handler.run(Server.java:2200)

It's not related the DataNodes being down since I tried the import several times and it always stops around the same point (like it's reaching a limit somewhere); the script actually doesn't stop but it processes all tables: tables are created in Hive, Hadoop makes 2 attempts to execute the job (import MySQL table) and finally it fails with the error above; the table in Hive remains empty; I noticed that a temporary file representing the table's records is created in Hive but after the job fails, the temporary file (_SCRATCH...) is deleted.

I also checked if the Datanodes are healthy, and they are.

I tried to double the value of: NameNode Java heap size and DataNode maximum Java heap size (from 1024MB to 2048MB) in HDFS and also to double the value of 'Hadoop maximum Java heap size' to 2048MB with no success. It's a configuration issue (more heap size required? Where?) but I don't know where to look at at this point.

There is disk space on the datanodes (less then 45% of the space is used and there is enough space to import the MySQL tables to Hive). Datanodes (4) have 15GB memory.

Any suggestion to troubleshoot the issue is highly appreciated.

Created on 03-07-2018 11:43 AM

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Report Inappropriate Content

Hi,

We are doing sqoop for first 4 tables, out of that 2 got failed due to memory issue.

How the failed sqoop will be restarted again? Does your framework has that support as well?

Thanks

Created on 11-23-2018 07:36 AM

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Report Inappropriate Content

if am exceuting below command it is working

bash sqoop2.sh -d dholding -H mydatbase -o 102.05.61.106:0006 -p 1 -q etl /home/trainer1/703226740/tablenames.txt

[Fri Nov 23 02:29:11 EST 2018] Creating the table sourcedatabse.employee from the SQLserver table sourcedatbase.employee

but if am passing variable of username and password with options it is throwing below error please help how to resolve this below error

bash sqoop2.sh -u trainer1 -pp hsgh21234 -d sourcedatabse -H mydatbase -o 102.05.01.146:0006 -p 1 -q etl /home/trainer1/703226740/tablenames.txt 1usage: sqoop2.sh

[-b <report directory>] [-c <directory for java code>] [-u <username>] [-pp <password>] [-d <source database] [-H <hive database>] [-o <source server>] [-p <parallelism>] [-q <queue>] <fileName>

usage: sqoop2.sh -h

the file must contain on each line the name of a table you want to sqoop. If you want to sqoop 5 tables, you need 5 lines in your file

Created on 07-16-2019 03:22 PM

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Report Inappropriate Content

@Sourygna Luangsay I am unable to see tables in hive. The sqoop import seems to be successful.

Created on 07-18-2019 07:12 AM - edited 08-17-2019 01:01 PM

- Mark as Read

- Mark as New

- Bookmark

- Permalink

- Report Inappropriate Content

Hive Import is Complete, but the next line gives the INFO hive.HiveImport: Export directory is contains the _SUCCESS file only, removing the directory.

I have made changes in the sqoop query as i am fetching data from Oracle.

When i log into hive the database doesn't has any tables, Please provide appropriate solution to this.

Hopeful to hear from you guys.