- Cheat Sheet and Tips for a Custom Install of Horto...

Created on 02-13-2016 04:50 PM - edited on 02-14-2020 08:44 AM by VidyaSargur

This article is for those who want a cheat sheet for a smooth installation of HDP in a Dev or Test environment with one or more of the following requirements:

- Place all the log data into a different directory, not /var/log

- All your service user names must be prefixed with the cluster name. The requirement is that these users must be centrally managed by AD or LDAP.

- You do not have any local users in the Hadoop cluster, including Hadoop service users. This becomes important if you also wish to have Centrify deployed, or if you would be deploying multiple clusters with a single LDAP/AD integration. Once again, these service names should have a cluster prefix.

- You want to set appropriate YARN, Tez, MapReduce, and Ambari Metrics memory parameters during install.

Side Note: It is always prudent to get Professional Services assistance to either install or configure your production deployment, to make sure all the prerequisites unique to your environment are covered and met.

--------------------------------------------------------------------------------------------------------

Step 1: Do Your Research..... Plan, Plan, Plan, Do it Right the First time, or Risk Doing it Over, and Over Again

This article is not intended to replace the Hortonworks docs or all the excellent resources here in HCC or elsewhere.

Apart from the Hortonworks docs, review:

- Hortonworks Operational Best Practices Webcast and Slides

- Typical Hadoop Cluster Networking Practices

- Best Practice Linux File System for Hadoop and ext4 vs. XFS

- Yarn Directories Recommended Size and Disk.

- Best Practice Zookeeper Placement

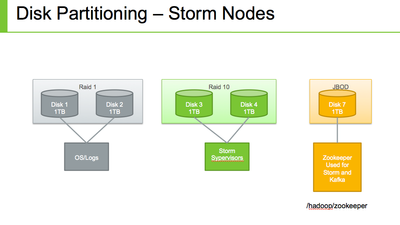

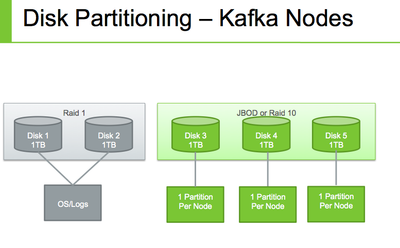

- Best Practice for Storm and Kafka Deployment and Unofficial Storm and Kafka Best practices Guide

- Name Node Garbage Collection Best Practice

- Tools to test the Performance, Scale and Reliability of Your Cluster

--------------------------------------------------------------------------------------------------------

Step 2: Get your Disk partitions Right

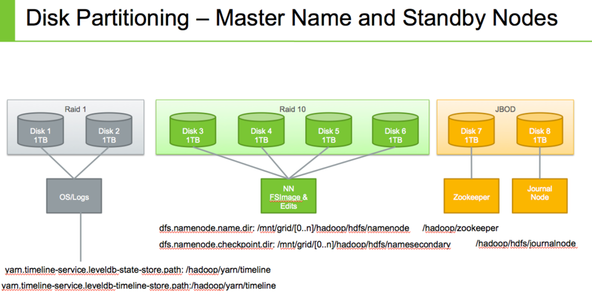

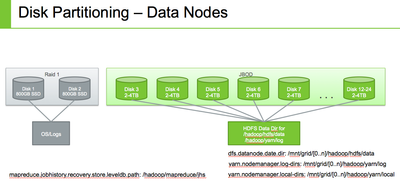

See the following for some guidance. Take note of the hadoop properties and default locations. You need to have this done ahead of time.

Disk Partition Baseline

--------------------------------------------------------------------------------------------------------

Name Nodes Disk Partitioning

--------------------------------------------------------------------------------------------------------

Data Nodes Disk Partition

--------------------------------------------------------------------------------------------------------

Ambari/ Edge/ Ranger/ Knox Nodes Disk Partition

--------------------------------------------------------------------------------------------------------

Storm and Kafka Nodes Disk Partition

--------------------------------------------------------------------------------------------------------

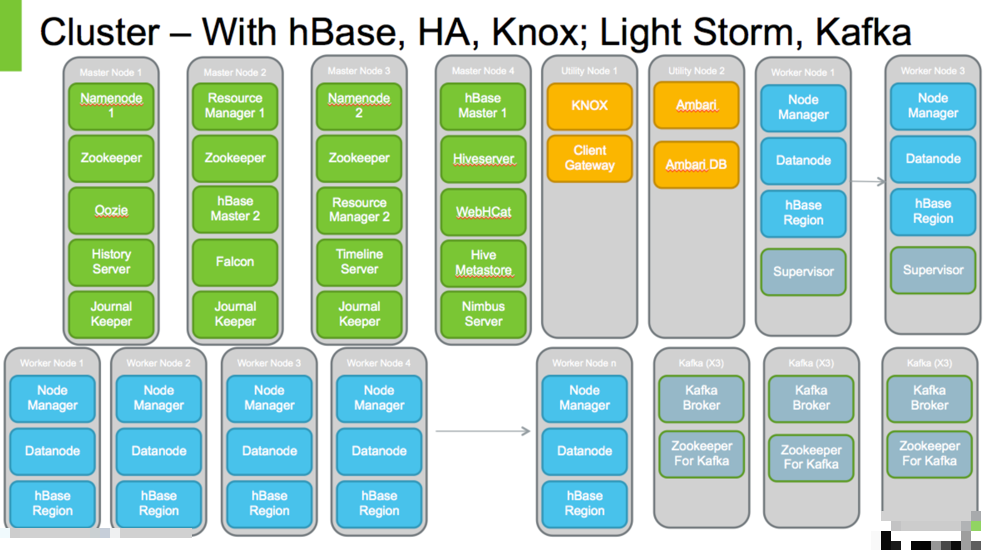

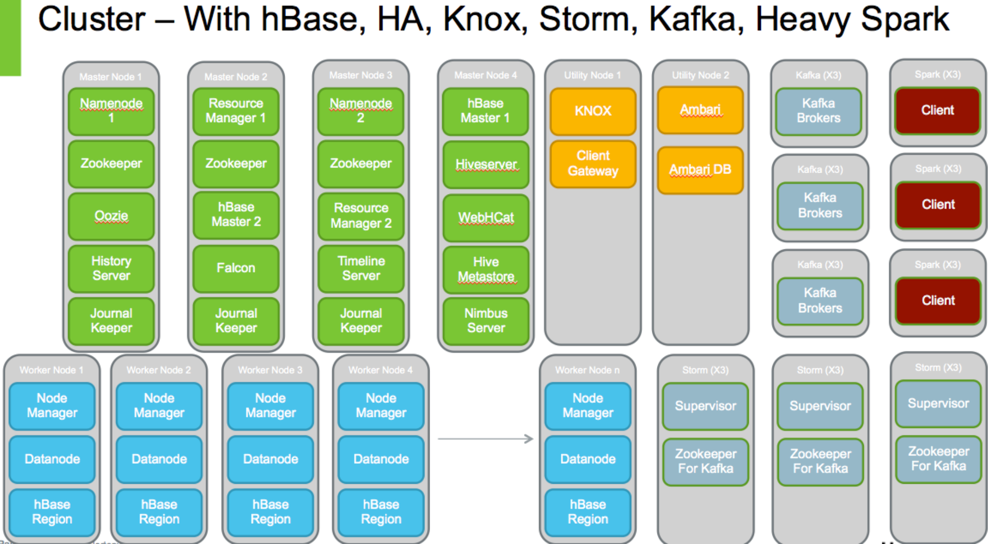

Step 3: Don't Scrimp on Master Nodes. Know the Placement of Your Master Services

If you want to do yourself an injustice, just allocate one or two master nodes.

If you want to do things properly, and you want to be set for up to 50 nodes, then please have at least 3 master nodes (better 4 if you are doing HA), with at least 1 Edge node and 1 Admin/Ambari Server.

It is a PAIN, and takes real effort, to move master services if you don't get it right.

Figure out where you are placing your Master Services. Use the following as a guide:

--------------------------------------------------------------------------------------------------------

Step 4: Get a Dedicated Database Server with HA for Ambari, Hive Metastore, Oozie, Ranger

Oozie by default installs on Derby. You do not want Derby in your cluster.

Ambari by default installs on Postgres. You can decide to keep it there.

Hive's metastore uses MySQL. You can use a dedicated MySQL Database for Hive, Ranger Admin, and Oozie. Bear in mind though that if you restart Hive's metastore, it may affect Ranger and Oozie.

The instructions for setting up the databases before an Ambari install are located at Using Non Default Databases

--------------------------------------------------------------------------------------------------------

Step 5: Create Service Accounts Beforehand in your LDAP

Decide what your cluster prefix will be. Do not put an underscore "_" or a hyphen "-" in your prefix.
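The prefix rule can be checked before any accounts are requested. The following is a minimal sketch: `valid_prefix` is a hypothetical helper, and restricting the prefix to letters and digits is an assumption that is stricter than the rule stated above (it rejects "_" and "-" along with any other punctuation).

```shell
# Hypothetical helper: succeed only if the prefix is non-empty and made up
# solely of letters and digits, so "_" and "-" are rejected.
valid_prefix() {
  case "$1" in
    ''|*[!A-Za-z0-9]*) return 1 ;;
    *) return 0 ;;
  esac
}

# Example: "prod1" is an illustrative prefix, not one from this article.
if valid_prefix "prod1"; then
  echo "prefix accepted"
else
  echo "prefix rejected"
fi
```

A prefix such as `prod_1` or `prod-1` makes the function return a non-zero status, so it can be used as a guard in a provisioning script.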

The list of service accounts you need to create is located here.

Solr is missing from the list. You need this user if you want to install Ranger, for Ranger uses Solr from HDP 2.3 and above for auditing and to show audit events in the UI.

Create a solr user with default group solr, with membership in the hadoop group also.

IMPORTANT: On each node, get the AD or LDAP UID for the hdfs user and the hadoop group; edit /etc/passwd and /etc/group and add the users there with the CORRECT UID from AD or LDAP. I have found that even though you choose the option to Skip Group Modifications (so that the Linux groups in the cluster are not modified) and you tell Ambari to not manage HDFS, some of the yum installs still try to create them. Ambari would respect your wishes, but yum does not.

Make sure the entries in your /etc/passwd and /etc/group have your cluster prefix.
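One way to double check the local entries on a node is to compare the UID recorded in /etc/passwd with the UID you expect from AD or LDAP. This is only a sketch: `uid_in_file` and `check_uid` are hypothetical helpers, and the account name and UID in the usage note are made up for illustration.

```shell
# uid_in_file USER FILE: print the UID recorded for USER in a passwd-format file.
uid_in_file() {
  awk -F: -v u="$1" '$1 == u { print $3; exit }' "$2"
}

# check_uid USER EXPECTED_UID [FILE]: succeed only if FILE (default /etc/passwd)
# lists USER with exactly EXPECTED_UID.
check_uid() {
  actual=$(uid_in_file "$1" "${3:-/etc/passwd}")
  if [ "$actual" = "$2" ]; then
    echo "OK: $1 has UID $2"
  else
    echo "MISMATCH: $1 expected UID $2, found '${actual:-none}'"
    return 1
  fi
}
```

For example, `check_uid prod1-hdfs 20001` would report a mismatch if the node has no such entry or has it with a different UID. The expected value has to come from your directory; on a host that already resolves users through AD or LDAP, `getent passwd <user>` is one way to look it up.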

When you install through Ambari it is very important that you configure the right properties so that Ambari is aware of your centrally managed, cluster-prefixed service names:

- Set Skip Group Modification

- Tell Ambari to not manage HDFS

Follow the instructions at

Setting properties that depend on service usernames/groups

There is one property missing from the doc.

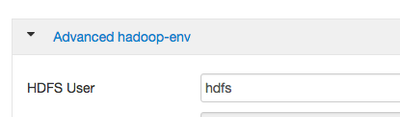

Also set HDFS User to your <cluster-prefix>-hdfs in Advanced hadoop-env.

--------------------------------------------------------------------------------------------------------

Step 6: Use Hortonworks Handy Scripts to Automatically Prepare the Environment Across all Nodes

So you have your disk partitions, your network is set up, you have decided on your master services placement, you have created the service names in LDAP with a cluster prefix, and you have edited your /etc/passwd and /etc/group.

Here comes the fun part.

Go to your Ambari node and perform the following:

    # Install Hortonworks Public Tools
    > yum install wget
    > wget -qO- --no-check-certificate https://github.com/hortonworks/HDP-Public-Utilities/raw/master/Installation/install_tools.sh | bash
    > ./install.sh
    > cd hdp

    # Everything will be installed to /root/hdp. Create the /root/hdp/Hostdetail.txt file
    # with all the hostnames for your cluster.
    > hostname -f > /root/hdp/Hostdetail.txt
    > vi /root/hdp/Hostdetail.txt

    # To set up password-less SSH
    > ssh-keygen
    > chmod 700 ~/.ssh
    > chmod 600 ~/.ssh/id_rsa

    # Distribute the keys to the other nodes. The copy command is needed because the
    # ./distribute_ssh_keys.sh script expects the private key at /tmp/ec2_keypair.
    # If instead you set up your nodes with a root password, just enter it when the script prompts.
    > cp <your nodes private key> /tmp/ec2_keypair
    > ./distribute_ssh_keys.sh ~/.ssh/id_rsa.pub

    # Optional: copy the private key to all nodes if you want password-less SSH from any node
    # to any node. Don't do this if you want password-less SSH ONLY from the Ambari node.
    # Password-less SSH is only needed for Ambari to install Agents on all nodes; without it
    # you need to install and configure the Agents yourself.
    > ./copy_file.sh ~/.ssh/id_rsa ~/.ssh/id_rsa

    # Test password-less SSH
    > ssh <node>

    # Now run a script to set all the OS prerequisites for a cluster install. You may have to
    # edit ./run_command.sh and add -tty to the ssh command, since the ./hdp_preinstall.sh
    # script has sudo commands in it.
    > ./run_command.sh 'mkdir /root/hdp'
    > ./copy_file.sh /root/hdp/hdp_preinstall.sh /root/hdp/hdp_preinstall.sh
    > vi run_command.sh    (add "-tty" to the ssh call)

    # Now in one swoop set the OS parameters
    > ./run_command.sh '/root/hdp/hdp_preinstall.sh'

    # REBOOT ALL NODES

    # DOUBLE CHECK that all the nodes retain all the OS environment configuration changes
    # for the HDP install
    > ./pre_install_check.sh | tee report.txt

    # View the report. Ignore the repo warnings for Ambari and HDP if you are connected to
    # the internet and will pull the repos from there during install.
    > vi report.txt

    # Now get your YARN parameters to use when you install the cluster via Ambari.
    # Download the Hortonworks companion files
    > wget http://public-repo-1.hortonworks.com/HDP/tools/2.3.4.0/hdp_manual_install_rpm_helper_files-2.3.4.0.3...
    > tar -zxvf hdp_manual_install_rpm_helper_files-2.3.4.0.3485.tar.gz
    > cd hortonworks-HDP-Public-Utilities-d617f44

    # Now run the script to determine the memory parameters that you will set in Ambari during
    # the Customize Services step. Put your number of cores (-c), memory per node (-m), disks
    # per node for HDFS (-d) and whether HBase will be installed or not (-k) into the python call
    > python yarn-utils.py -c 16 -m 64 -d 4 -k True
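The helper scripts used above (run_command.sh, copy_file.sh) work from the host list in Hostdetail.txt. As a rough illustration of that pattern, and not the actual script from HDP-Public-Utilities, a loop like the following runs one command on every listed host. `run_on_hosts` and the `RUNNER` variable are hypothetical names introduced here.

```shell
# RUNNER is the program used to reach each host; it defaults to ssh.
# Setting RUNNER=echo gives a dry run that only prints what would be executed.
RUNNER="${RUNNER:-ssh}"

# run_on_hosts HOSTFILE COMMAND...: run COMMAND on every host in HOSTFILE,
# one hostname per line; blank lines and lines starting with "#" are skipped.
run_on_hosts() {
  hostfile=$1
  shift
  while IFS= read -r host || [ -n "$host" ]; do
    case "$host" in ''|'#'*) continue ;; esac
    # </dev/null keeps ssh from swallowing the rest of the host list on stdin
    "$RUNNER" "$host" "$@" </dev/null || echo "FAILED on $host" >&2
  done < "$hostfile"
}
```

A dry run such as `RUNNER=echo; run_on_hosts /root/hdp/Hostdetail.txt hostname -f` is a cheap way to confirm the host list is what you expect before anything is changed on the nodes.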

See Determine YARN and HDP memory

Make a note of these memory settings to plug in during the Ambari install.
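To get a feel for what the script is computing, here is a simplified sketch of the container sizing rule as described in the HDP memory documentation: the number of containers is the smallest of 2 x cores, 1.8 x disks, and available RAM divided by the minimum container size. The function name `yarn_sizing`, the minimum-container thresholds, and the reserved-memory figure passed in are assumptions made for illustration; the output of yarn-utils.py remains the value to use.

```shell
# yarn_sizing CORES MEM_GB DISKS RESERVED_GB
# RESERVED_GB is memory held back for the OS (and HBase, if installed); the
# caller supplies it, because the right value depends on the node.
yarn_sizing() (
  cores=$1; mem_gb=$2; disks=$3; reserved_gb=$4
  avail_mb=$(( (mem_gb - reserved_gb) * 1024 ))

  # Minimum container size by total RAM per node (assumed thresholds).
  if   [ "$mem_gb" -le 4 ];  then min_mb=256
  elif [ "$mem_gb" -le 8 ];  then min_mb=512
  elif [ "$mem_gb" -le 24 ]; then min_mb=1024
  else                            min_mb=2048
  fi

  by_cpu=$(( 2 * cores ))
  by_disk=$(( (18 * disks + 9) / 10 ))   # ceil(1.8 * disks) in integer math
  by_mem=$(( avail_mb / min_mb ))

  # Take the smallest of the three limits, but never fewer than one container.
  containers=$by_cpu
  [ "$by_disk" -lt "$containers" ] && containers=$by_disk
  [ "$by_mem"  -lt "$containers" ] && containers=$by_mem
  [ "$containers" -lt 1 ] && containers=1

  ram_mb=$(( avail_mb / containers ))
  [ "$ram_mb" -lt "$min_mb" ] && ram_mb=$min_mb

  echo "containers=$containers"
  echo "ram_per_container_mb=$ram_mb"
  echo "yarn.nodemanager.resource.memory-mb=$(( containers * ram_mb ))"
)

# Same node shape as the yarn-utils.py call above, assuming 16 GB reserved.
yarn_sizing 16 64 4 16
```

With those inputs the disk count is the limiting factor: the sketch settles on 8 containers of 6144 MB each, 49152 MB in total for YARN on the node.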

--------------------------------------------------------------------------------------------------------

Step 7: Installing Ambari

Now start installing Ambari and HDP by following the Hortonworks docs.

- Don't forget about setting your cluster-prefixed service name for hdfs and hbase

- Don't choose a cluster name that has an underscore (_) because HDFS HA does not like it.

- Don't forget to change all the directory locations as per the Disk Partition diagrams above.

- You can change the directory for Hadoop logs upon install if you wish. See https://community.hortonworks.com/questions/4329/log-file-location-is-there-a-way-to-change-varlog.h...

- Don't forget to set the YARN and MapReduce Memory Parameters found from the python script.

- Don't forget to set the NameNode garbage collection settings.

- You can do the following to get Ambari running better during install: http://docs.hortonworks.com/HDPDocuments/Ambari-2.2.0.0/bk_ambari_reference_guide/content/ch_tuning_...

- During Install you can configure Ambari Metrics: See https://cwiki.apache.org/confluence/display/AMBARI/Configurations+-+Tuning and http://docs.hortonworks.com/HDPDocuments/Ambari-2.2.0.0/bk_ambari_reference_guide/content/_ams_gener...

- You can follow this to tune Tez During the Install. See https://community.hortonworks.com/articles/14309/demystify-tez-tuning-step-by-step.html

- IMPORTANT: For fewer than 10 Data Nodes

Set mapred.submit.replication = 3 in mapred-site.xml

This prevents the job-related staging files from being created with the default replication factor of 10, which would lead to under-replicated block warnings.
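For reference, the setting above corresponds to a property element like the one printed by this small sketch. `xml_property` is a hypothetical helper; in an Ambari-managed cluster you would normally enter the name and value as a custom mapred-site property rather than edit the file by hand.

```shell
# xml_property NAME VALUE: print one Hadoop-style <property> element.
xml_property() {
  cat <<EOF
<property>
  <name>$1</name>
  <value>$2</value>
</property>
EOF
}

xml_property mapred.submit.replication 3
```

On Hadoop 2.x this setting also appears, as far as I know, under the newer name mapreduce.client.submit.file.replication, so check which name your version's mapred-default.xml lists.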

--------------------------------------------------------------------------------------------------------

Step 8: Install SmartSense, only offered by Hortonworks.

Finally INSTALL SMARTSENSE, if you are a Hortonworks Customer. If you are not, why NOT? You are missing all the value from SmartSense to auto tune your cluster. (In Ambari 2.2 it is available as a Service.)

--------------------------------------------------------------------------------------------------------

Step 9: Security Tips

- If you plan to install Ranger, INSTALL SOLR FIRST. Don't Add the Ranger Service as yet after you install the cluster.

- Make sure that you use the <cluster-prefix>-solr user in your install, so that the process runs under that user.

- Enable Kerberos if you can BEFORE adding Ranger. If not, that is fine; you would have to configure Ranger and all the plug-ins after the fact, but it is easier if you enable Kerberos first.

- Storm, Kafka, and Solr need Kerberos before you authorize with Ranger.

- There is no Security without Kerberos.

--------------------------------------------------------------------------------------------------------

Finally

Most issues are due to a rogue process running with a local UID and not the LDAP/AD UID, so double check using ps -ef. If you set up your /etc/passwd and /etc/group properly beforehand, you should not have this issue.

Some issues come up if your files and/or logs are owned by the local hdfs user. Again, you would get this problem if you did not choose the 'Skip Group Modification' option, did not tell Ambari to not manage HDFS, did not set the hdfs user to <cluster-prefix>-hdfs during install, or did not set up your /etc/passwd and /etc/group.

Remember that some yum installs do not care what you set in Ambari for the hdfs user, so you may have to run those manually; look out for that.
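The ps check can be narrowed down to processes that run under a bare, un-prefixed service account. This is a sketch only: `find_local_service_procs` is a hypothetical helper, and the list of service names inside it is illustrative and far from complete.

```shell
# find_local_service_procs: read "user pid command" lines on stdin and print
# those whose user is a bare service name (for example "hdfs") rather than a
# cluster-prefixed one. Extend the name list to match your installed services.
find_local_service_procs() {
  awk '
    BEGIN {
      n = split("hdfs yarn mapred hbase hive oozie zookeeper solr", names, " ")
      for (i = 1; i <= n; i++) svc[names[i]] = 1
    }
    ($1 in svc) { print }
  '
}
```

On a Linux node with procps, something like `ps -eo user:32,pid,comm --no-headers | find_local_service_procs` feeds it the process list; the wider user column helps because ps may otherwise show a numeric UID in place of a long user name.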

--------------------------------------------------------------------------------------------------------

Update:

A good resource:

https://martin.atlassian.net/wiki/pages/viewpage.action?pageId=45580306

Created on 03-05-2016 06:16 PM


Hey, man, thanks for this write up. Very helpful in gaining insight to the big picture.

I am working on the requirements for my prod cluster. Question for you... Based on my somewhat novice knowledge, it seems like overkill to use RAID-10 and 4 1TB drives for the HA NNs when you are running QJM (which I assume is the case as the diagram also shows ZKs and JNs). All the edits go to the JN JBOD disks. So, that just leaves a couple of fsimage files for the RAID-10 arrangement against 1TB. Isn't that a bit of overkill on storage? Another confusion point for me is that the NN disk layout diagram above shows fsimage and edits going to the RAID-10 disks. Edits are written to the JNs against the one JBOD disk, right? So, is the diagram misleading or am I missing some intended message there? Here's a question I just asked related to this comment.

Created on 04-18-2016 08:35 PM


@Ancil McBarnett, Could you please explain why you propose /dev as a separate 32G partition?

Created on 06-14-2016 07:18 AM


Very thankful for this write-up. These are the things to be noted before setting up the cluster; it solves most of the admin-related hurdles.

One question: we have 2* 80 GB 5G SATA SSD RAID-1 hard drives for the Master node, and this blog post suggests 32 GB for the /dev partition.

Please suggest the recommended partitioning given the above hard drive limits.

Created on 08-23-2018 03:20 PM


Nice Ambari Hardware Matrix... Thanks a lot...

Created on 09-24-2018 02:53 PM


Request for @Ancil McBarnett (or anyone else who knows):

Please flesh out a little on ... "You do not want Derby in your cluster."