Configure SAP Vora HDP Ambari - Part 2

Created on 09-26-2016 05:03 PM - edited 08-17-2019 09:40 AM

This article, "Configure SAP Vora HDP Ambari - Part 2", is a continuation of "Getting started with SAP Hana and Vora with HDP using Apache Zeppelin for Data Analysis - Part 1 In...

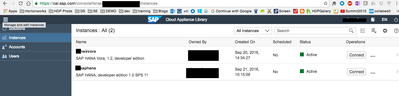

Log back in to SAP Cloud Appliance Library (CAL), the free service for managing your SAP solutions in the public cloud. You should have HANA and Vora instances up and running:

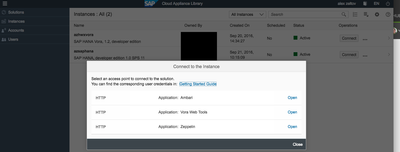

- To open the Apache Ambari web UI, click Connect on your SAP HANA Vora instance in CAL, and then pick Open a link for Application: Ambari. The port of the Ambari web UI has been preconfigured for you in SAP HANA Vora, developer edition, in CAL, and it has been opened as one of the default Access Points. As you might remember, this translates into the appropriate inbound rule in the corresponding AWS security group.

- Log in to the Ambari web UI using the user admin and the master password you defined when you created the instance in CAL.

- You can see that (1) all services, including the SAP HANA Vora components, are running, (2) there are no issues with resources, and (3) there are no alerts generated by the system.

You use this interface to start or stop cluster components when needed during operations or troubleshooting.

Please refer to the official Apache Ambari documentation if you need additional information or training on how to use it.

For a detailed review of all SAP HANA Vora components and their purpose, please see the SAP HANA Vora help.

We need to make some configuration changes to get the HDFS View working in Ambari, and we also need to modify the YARN scheduler.
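The start/stop operations available in the web UI can also be scripted through Ambari's REST API. The following is a minimal sketch, not part of the original setup: it only builds the request and does not send it. The URL `http://localhost:8080`, the cluster name `MyCluster`, and the password are hypothetical placeholders; use the values from your own CAL instance.

```python
import base64
import json
import urllib.request


def build_service_state_request(ambari_url, cluster, service, state, user, password):
    """Build (but do not send) a PUT request asking Ambari to change a service state.

    In Ambari's API, state "INSTALLED" means stopped and "STARTED" means running.
    """
    body = {
        "RequestInfo": {"context": f"Set {service} to {state} via REST"},
        "Body": {"ServiceInfo": {"state": state}},
    }
    request = urllib.request.Request(
        url=f"{ambari_url}/api/v1/clusters/{cluster}/services/{service}",
        data=json.dumps(body).encode("utf-8"),
        method="PUT",
    )
    token = base64.b64encode(f"{user}:{password}".encode("utf-8")).decode("ascii")
    request.add_header("Authorization", f"Basic {token}")
    # Ambari expects this header on requests that modify state.
    request.add_header("X-Requested-By", "ambari")
    return request


# Hypothetical values for illustration only.
req = build_service_state_request(
    "http://localhost:8080", "MyCluster", "HDFS", "INSTALLED", "admin", "secret"
)
print(req.get_method(), req.full_url)
```

To actually submit the request you would pass `req` to `urllib.request.urlopen`; that step is left out here so the sketch can be run without a cluster.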

Setup HDFS Ambari View:

Creating and Configuring a Files View Instance

- Browse to the Ambari Administration interface.

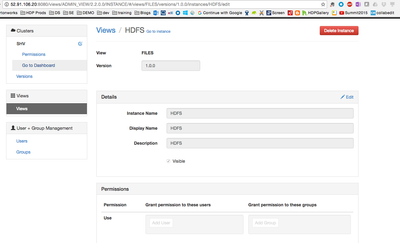

- Click Views, expand the Files View, and click Create Instance.

- Enter the following View instance Details:

| Property | Description | Value |
| --- | --- | --- |
| Instance Name | This is the Files view instance name. This value should be unique for all Files view instances you create. This value cannot contain spaces and is required. | HDFS |
| Display Name | This is the name of the view link displayed to the user in Ambari Web. | MyFiles |
| Description | This is the description of the view displayed to the user in Ambari Web. | Browse HDFS files and directories. |
| Visible | This checkbox determines whether the view is displayed to users in Ambari Web. | Visible or Not Visible |

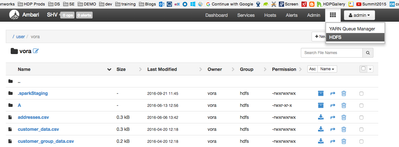

You should see an Ambari HDFS view like this:

Next, update the HDFS configuration:

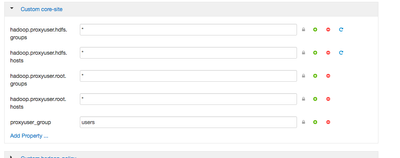

- In Ambari Web, browse to Services > HDFS > Configs.

- Under the Advanced tab, navigate to the Custom core-site section.

- Click Add Property… to add the following custom properties:

hadoop.proxyuser.root.groups=*

hadoop.proxyuser.root.hosts=*
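The two properties above allow the root account to act on behalf of other users, from any host and for any group. As a rough illustration of what that permits, the sketch below builds a WebHDFS directory-listing URL in which root impersonates another user through the `doas` parameter. It assumes the Files view reaches HDFS as root, which the property names suggest but the setup does not state explicitly; the NameNode address, port 50070, and the path `/user/vora` are hypothetical placeholders.

```python
from urllib.parse import quote, urlencode


def webhdfs_list_url(namenode, path, proxy_user, end_user):
    """Return a WebHDFS LISTSTATUS URL where proxy_user acts on behalf of end_user."""
    query = urlencode(
        {
            "op": "LISTSTATUS",       # list the contents of a directory
            "user.name": proxy_user,  # the account making the call (the proxy user)
            "doas": end_user,         # the user being impersonated
        }
    )
    return f"{namenode}/webhdfs/v1{quote(path)}?{query}"


# Hypothetical host, port, and path for illustration only.
url = webhdfs_list_url("http://namenode.example.com:50070", "/user/vora", "root", "admin")
print(url)
```

Without the proxy user properties, HDFS would be expected to reject such an impersonated request, which is why the Files view needs them.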

Now let's test that you can open the HDFS View:

Next we will reconfigure YARN to fix an issue that occurs when submitting YARN jobs. I ran into it when running a Sqoop job to import data from SAP HANA to HDFS (this will be covered in a separate how-to article to be published soon):

YarnApplicationState: ACCEPTED: waiting for AM container to be allocated, launched and register with RM.

The job had been stuck in that state for a while.

Let's set yarn.scheduler.capacity.maximum-am-resource-percent=0.6. Go to YARN -> Configs and look for the property yarn.scheduler.capacity.maximum-am-resource-percent.

https://hadoop.apache.org/docs/r0.23.11/hadoop-yarn/hadoop-yarn-site/CapacityScheduler.html

| Property | Description |
| --- | --- |
| yarn.scheduler.capacity.maximum-am-resource-percent / yarn.scheduler.capacity.<queue-path>.maximum-am-resource-percent | Maximum percent of resources in the cluster which can be used to run application masters - controls number of concurrent active applications. Limits on each queue are directly proportional to their queue capacities and user limits. Specified as a float - i.e. 0.5 = 50%. Default is 10%. This can be set for all queues with yarn.scheduler.capacity.maximum-am-resource-percent and can also be overridden on a per queue basis by setting yarn.scheduler.capacity.<queue-path>.maximum-am-resource-percent |
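To get a feel for why raising this value helps, here is a simplified back-of-the-envelope calculation. It treats the limit as a plain fraction of total cluster memory and ignores the queue capacities and user limits mentioned in the description, so it is only an approximation of what the scheduler does. The cluster size (16384 MB) and application master container size (1536 MB) are made-up numbers, not values from this instance.

```python
def max_concurrent_ams(cluster_memory_mb, am_container_mb, max_am_percent):
    """Estimate how many application masters fit within the AM resource limit."""
    am_budget_mb = cluster_memory_mb * max_am_percent  # memory reserved for AMs
    return int(am_budget_mb // am_container_mb)


# Hypothetical cluster and container sizes for illustration only.
cluster_memory_mb = 16384
am_container_mb = 1536

for percent in (0.1, 0.6):  # the default (10%) and the value set in this article
    print(percent, max_concurrent_ams(cluster_memory_mb, am_container_mb, percent))
# prints:
# 0.1 1
# 0.6 6
```

Under these assumptions the default leaves room for a single application master, so a second application would wait in the ACCEPTED state until the first one finishes.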

Now let's connect to Apache Zeppelin and load sample data from files already created in HDFS in SAP HANA Vora.

- Apache Zeppelin is a web-based notebook that enables interactive data analytics. multi-purposed web-based notebook which brings data ingestion, data exploration, visualization, sharing and collaboration features to Hadoop and Spark.

SAP HANA Vora provides its own

%vorainterpreter, which allows Spark/Vora features to be used from Zeppelin. Zeppelin allows queries to be written directly in Spark SQL - SAP HANA Vora, developer edition, on CAL comes with Apache Zeppelin pre-installed. Similar to opening Apache Ambari to open Zeppelin web UI click on Connect in your SAP HANA Vora instance in CAL, and then pick Open a link for

Application: Zeppelin. - Zeppelin opens up in a new browser window, check it is Connected and if yes, then click on

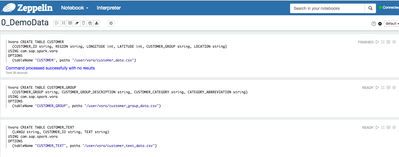

0_DemoDatanotebook. - The

0_DemoDatanotebook will open up. Now you can click on Run all paragraphsbutton on top of the page to create tables in SAP HANA Vora using data from the existing HDFS files preloaded on the instance in CAL. These are the tables you will need as well later in exercises.A dialog window will pop up asking you to confirm to Run all paragraphs? Click OK

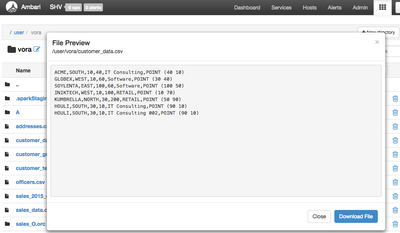

The Vora code will load the .csv files and create tables in Vora Spark. You can navigate to the HDFS files using the view created earlier to preview the data directly on HDFS:

At this point we have set up an Ambari HDFS view to browse our distributed file system on HDP and verified that Vora connectivity to HDFS is working.

Stay tuned for the next article, "How to connect SAP Vora to SAP HANA using Apache Zeppelin", where we will use Apache Zeppelin to connect to the SAP HANA system from Part 1.

References:

http://help.sap.com/Download/Multimedia/hana_vora/SAP_HANA_Vora_Installation_Admin_Guide_en.pdf

http://go.sap.com/developer/tutorials/hana-setup-cloud.html

http://help.sap.com/hana_vora_re

http://go.sap.com/developer/tutorials/vora-setup-cloud.html

http://go.sap.com/developer/tutorials/vora-connect.html
