- Installing Anaconda python on HDP cluster using Cl...

Objectives

This tutorial walks you through using Cloudbreak recipes to install Anaconda on your HDP cluster during cluster provisioning. The same process can be used to automate many other tasks on the cluster, both pre-install and post-install.

Prerequisites

- You should already have a Cloudbreak v1.14.0 environment running. You can follow this article to create a Cloudbreak instance using Vagrant and Virtualbox: HCC Article

- You should already have credentials created in Cloudbreak for deploying on AWS (or Azure).

Scope

This tutorial was tested in the following environment:

- macOS Sierra (version 10.12.4)

- Cloudbreak 1.14.0 TP

- AWS EC2

- Anaconda 4.3.1 (Python 2.7.13)

Steps

Create Recipe

Before you can use a recipe during a cluster deployment, you have to create the recipe. In the Cloudbreak UI, look for the "manage recipes" section. It should look similar to this:

If this is your first time creating a recipe, you will have 0 recipes instead of the 2 recipes shown in my interface.

Now click on the arrow next to manage recipes to display available recipes. You should see something similar to this:

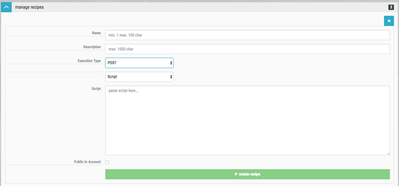

Now click on the green create recipe button. You should see something similar to this:

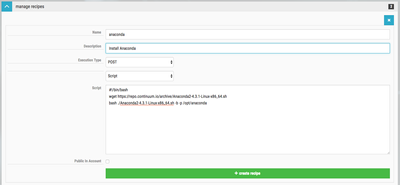

Now we can enter the information for our recipe. I'm calling this recipe anaconda and giving it the description Install Anaconda. A recipe can run either pre-install or post-install. I'm choosing post-install, which means the script runs after the Ambari installation process has started. So choose the Execution Type of POST.

Choose Script so we can copy and paste the shell script. You can also specify a file to upload or a URL (a gist, for example). Our script is very basic: it downloads the Anaconda install script, then runs it in silent mode (-b) with /opt/anaconda as the install prefix (-p). Here is the script:

    #!/bin/bash
    wget https://repo.continuum.io/archive/Anaconda2-4.3.1-Linux-x86_64.sh
    bash ./Anaconda2-4.3.1-Linux-x86_64.sh -b -p /opt/anaconda
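The basic script fails if it runs a second time on a node where /opt/anaconda already exists, and it keeps going if the download fails. As a rough sketch of one way to guard against both, the version below wraps the same two commands in a function. The variable names (ANACONDA_VERSION, ANACONDA_PREFIX) and the helper functions are my own illustration and are not part of Cloudbreak; the URL and install flags are the ones used in the script above.

```shell
#!/bin/bash
# Sketch of a re-runnable variant of the anaconda recipe.
# The variable and function names are hypothetical helpers, not Cloudbreak features.

ANACONDA_VERSION="${ANACONDA_VERSION:-4.3.1}"
ANACONDA_PREFIX="${ANACONDA_PREFIX:-/opt/anaconda}"

# Build the installer file name for a given Anaconda version.
anaconda_installer_name() {
  echo "Anaconda2-$1-Linux-x86_64.sh"
}

# Build the download URL for a given Anaconda version.
anaconda_installer_url() {
  echo "https://repo.continuum.io/archive/$(anaconda_installer_name "$1")"
}

# Install Anaconda in silent mode unless the prefix already has a python binary.
install_anaconda() {
  local version="$1" prefix="$2"
  if [ -x "$prefix/bin/python" ]; then
    echo "Anaconda already present at $prefix, skipping install"
    return 0
  fi
  local installer
  installer="$(anaconda_installer_name "$version")"
  # Return early on failure so a failed download is never followed by an install attempt.
  wget -q "$(anaconda_installer_url "$version")" -O "/tmp/$installer" || return 1
  bash "/tmp/$installer" -b -p "$prefix" || return 1
  rm -f "/tmp/$installer"
}
```

To use this as a recipe, you would add the line install_anaconda "$ANACONDA_VERSION" "$ANACONDA_PREFIX" at the end of the script. Nothing is downloaded until that call is made.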

When you have finished entering all of the information, you should see something similar to this:

If everything looks good, click on the green create recipe button.

After the recipe has been created, you should see something similar to this:

Create a Cluster using a Recipe

Now that our recipe has been created, we can create a cluster that uses the recipe. Go through the process of creating a cluster up to the Choose Blueprint step. This is the step where you select the recipes you want to use. Recipes are not selected by default; you have to select them yourself. You assign recipes to one or more host groups, which allows you to run different recipes on different host groups (masters, slaves, etc). You can also select multiple recipes for the same host group.

If you select the anaconda recipe, you should see something similar to this:

In our case, we are going to run the recipe on every host group. If you intend to use something like Anaconda across the cluster, you should install it on at least the slave nodes and the client nodes.

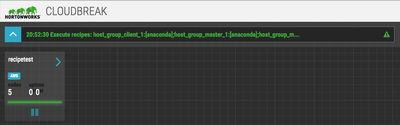

After you have selected the recipe for the host groups, click the Review & Launch button, then launch the cluster. As the cluster is building, you should see a message in the Cloudbreak UI that indicates the recipe is running. When that happens, you will see something similar to this:

Cloudbreak creates a log for each recipe that runs on each host. These logs are located in /var/log/recipes, and each file name contains the name of the recipe and whether it is pre or post install. For example, our recipe log is called post-anaconda.log. You can tail this log file to follow the execution of the script.

NOTE: Post install scripts won't be executed until the Ambari server is installed and the cluster is building. You can always monitor the /var/log/recipes directory on a node to see when the script is being executed. The time it takes to run the script will vary depending on the cloud environment and how long it takes to spin up the cluster.

On your cluster, you should be able to see the post-install log:

    $ ls /var/log/recipes
    post-anaconda.log
    post-hdfs-home.log
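Because the post-install log does not exist until the recipe starts, running tail too early just fails. Here is a small sketch of a helper that waits for the log to appear before you tail it. The function names and the RECIPE_LOG_DIR variable are hypothetical, not something Cloudbreak provides; the directory and the file naming pattern are taken from the listing above.

```shell
#!/bin/bash
# Hypothetical helpers for locating and waiting on a recipe log.
RECIPE_LOG_DIR="${RECIPE_LOG_DIR:-/var/log/recipes}"

# Compose the log path from the execution type (pre or post) and the recipe name.
recipe_log_path() {
  echo "$RECIPE_LOG_DIR/$1-$2.log"
}

# Poll every 5 seconds until the log file exists.
# Gives up after the timeout in seconds (default 1800) and returns 1.
wait_for_recipe_log() {
  local log="$1" timeout="${2:-1800}" waited=0
  while [ ! -f "$log" ]; do
    if [ "$waited" -ge "$timeout" ]; then
      echo "Timed out waiting for $log" >&2
      return 1
    fi
    sleep 5
    waited=$((waited + 5))
  done
  # Print the path so the caller can pass it to tail.
  echo "$log"
}
```

With these defined on a node, tail -f "$(wait_for_recipe_log "$(recipe_log_path post anaconda)")" would block until the log exists and then follow it.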

Once the install process is complete, you should be able to verify that Anaconda is installed. You need to ssh into one of the cloud instances. You can get the public IP address from the Cloudbreak UI. Log in as the cloudbreak user, using the private key that corresponds to the public key you entered when you created the Cloudbreak credential. You should see something similar to this:

    $ ssh -i ~/Downloads/keys/cloudbreak_id_rsa cloudbreak@#.#.#.#
    The authenticity of host '#.#.#.# (#.#.#.#)' can't be established.
    ECDSA key fingerprint is SHA256:By1MJ2sYGB/ymA8jKBIfam1eRkDS5+DX1THA+gs8sdU.
    Are you sure you want to continue connecting (yes/no)? yes
    Warning: Permanently added '#.#.#.#' (ECDSA) to the list of known hosts.
    Last login: Sat May 13 00:47:41 2017 from 192.175.27.2

           __|  __|_  )
           _|  (     /   Amazon Linux AMI
          ___|\___|___|

    https://aws.amazon.com/amazon-linux-ami/2016.09-release-notes/
    25 package(s) needed for security, out of 61 available
    Run "sudo yum update" to apply all updates.
    Amazon Linux version 2017.03 is available.

Once you are on the server, you can check the version of python:

    $ /opt/anaconda/bin/python --version
    Python 2.7.13 :: Anaconda 4.3.1 (64-bit)
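If you want to script this check instead of reading the output by eye, something like the following sketch could work. The verify_anaconda function is a hypothetical helper of my own, and it assumes the version banner contains the word Anaconda, as it does in the output above. Note that Python 2 prints its version to stderr, so the sketch merges stderr into stdout before inspecting it.

```shell
#!/bin/bash
# Hypothetical helper: confirm that the python under a prefix is an Anaconda build.
verify_anaconda() {
  local prefix="${1:-/opt/anaconda}" version
  # Python 2 writes --version output to stderr, so capture both streams.
  version="$("$prefix/bin/python" --version 2>&1)" || return 1
  case "$version" in
    *Anaconda*)
      echo "OK: $version"
      ;;
    *)
      echo "Unexpected interpreter: $version" >&2
      return 1
      ;;
  esac
}
```

Calling verify_anaconda with no arguments checks /opt/anaconda. It returns a non-zero status if the binary is missing or is not an Anaconda build.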

Review

If you have successfully followed along with this tutorial, you should know how to create pre-install and post-install recipes. You should have successfully deployed a cluster on either AWS or Azure with Anaconda installed under /opt/anaconda on the nodes you specified.