Created on 08-06-2017 12:03 AM - edited 08-17-2019 11:44 AM

Technology: Python, TensorFlow, Apache Hive, MiniFi, NiFi, HDFS, WebHDFS, Zeppelin, SQL, Raspberry Pi, Pi Camera, S2S.

Apache NiFi For Ingest of Images and TensorFlow Analysis from the Edge (Raspberry Pi 3)

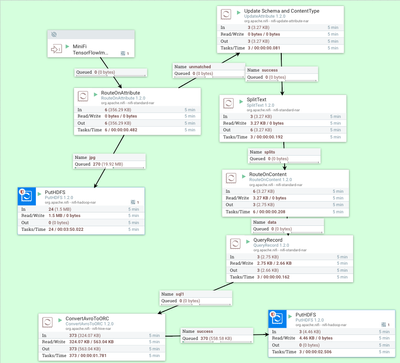

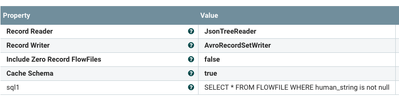

The Apache NiFi ingestion flow is straightforward. MiniFi on the Raspberry Pi sends us flow files over Site-to-Site (S2S), and they come in two types. One is a JSON-formatted file containing metadata and the TensorFlow analysis of an image. The other is the captured image itself. We route on the filename attribute to handle each file type appropriately. The image goes to HDFS for storage and later retrieval via WebHDFS. For the JSON, I add a schema and a JSON content type, then split the file into lines. As you can see, there is some non-JSON junk in there that I want pulled out. I then send each JSON record to QueryRecord to filter out any empty messages. This produces Avro files from the JSON, which I convert to ORC and store in HDFS. From there it's easy to query my new deep-learning-produced multimedia data with standard SQL.
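The routing and cleanup logic described above can be sketched in plain Python. This is only an illustration of the idea, not the NiFi configuration itself: the function names `route_by_filename` and `extract_json_records` are hypothetical, and routing on a file extension is an assumption, since the actual routing expression is not shown in the text.

```python
import json


def route_by_filename(filename):
    """Mimic routing on the filename attribute (assumed rule: image extension)."""
    if filename.lower().endswith(('.jpg', '.jpeg', '.png')):
        return 'image'
    return 'json'


def extract_json_records(raw_text):
    """Split into lines, keep only JSON lines, and drop empty records."""
    records = []
    for line in raw_text.splitlines():
        line = line.strip()
        if not line.startswith(('[', '{')):
            continue  # non-JSON junk such as warnings or progress output
        try:
            parsed = json.loads(line)
        except ValueError:
            continue  # looked like JSON but did not parse
        rows = parsed if isinstance(parsed, list) else [parsed]
        # Keep only non-empty JSON objects, like the QueryRecord filter step.
        records.extend(r for r in rows if isinstance(r, dict) and r)
    return records
```

In the real flow these steps are handled by NiFi processors; the sketch is only meant to show what each stage keeps and what it discards.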

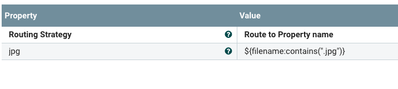

Routing by FileName Attribute

Split Into Lines

Extract Only JSON Data

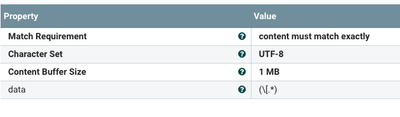

ORC Configuration

Query Record

MiniFi Flow Installed on Raspberry Pi

The flow on the Pi is simple. We have three processors running. The first executes our classify.sh script, which activates the PiCamera to take a picture and then feeds the picture to TensorFlow. The CleanupLogs processor runs a shell script that deletes old logs. GetFile reads any image produced by the first shell execution and sends it to NiFi.
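The CleanupLogs script itself is not shown here. As a rough idea of what such a step does, here is a minimal Python equivalent, assuming logs are plain files in a single directory and that "old" means older than a fixed number of days. The function name `cleanup_logs`, the directory argument, and the two-day default are all hypothetical.

```python
import os
import time


def cleanup_logs(log_dir, max_age_days=2, now=None):
    """Delete regular files in log_dir older than max_age_days.

    Returns the names of the deleted files, sorted alphabetically.
    """
    now = time.time() if now is None else now
    cutoff = now - max_age_days * 86400  # 86400 seconds per day
    deleted = []
    for name in sorted(os.listdir(log_dir)):
        path = os.path.join(log_dir, name)
        # Only remove plain files whose last modification is before the cutoff.
        if os.path.isfile(path) and os.path.getmtime(path) < cutoff:
            os.remove(path)
            deleted.append(name)
    return deleted
```

Running something like this on a schedule keeps the Pi's small SD card from filling up with log files.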

Hive DDL:

CREATE EXTERNAL TABLE IF NOT EXISTS tfimage (image STRING, ts STRING, host STRING, score STRING, human_string STRING, node_id FLOAT) STORED AS ORC LOCATION '/tfimage'
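The image column holds the full path of the picture on the Pi (the Python script writes to /opt/demo/images/). The Hive query in this article uses SUBSTR(image,18) to strip that 17-character prefix and build a WebHDFS URL. The same arithmetic can be sketched in Python. The function name `webhdfs_open_url` and the default NameNode address are placeholders for illustration, not values from the article.

```python
def webhdfs_open_url(image_path,
                     namenode='http://namenode.example.com:50070',
                     hdfs_dir='/tfimagefiles'):
    """Build a WebHDFS OPEN URL for an image path recorded on the Pi."""
    # Hive's SUBSTR is 1-indexed, so SUBSTR(image, 18) matches image[17:] here.
    # '/opt/demo/images/' is 17 characters long, which leaves just the file name.
    filename = image_path[17:]
    return '{0}/webhdfs/v1{1}/{2}?op=OPEN'.format(namenode, hdfs_dir, filename)


url = webhdfs_open_url('/opt/demo/images/pi_image_abc_20170806000300.jpg')
```

Note that the fixed offset only works while the script keeps writing to the same directory; if the image directory changes, the offset in the query has to change with it.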

Hive SQL:

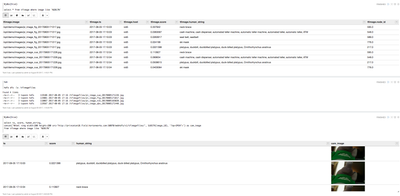

%jdbc(hive)

select ts, score, human_string,

concat('%html <img width=200 height=200 src="http://princeton10.field.hortonworks.com:50070/webhdfs/v1/tfimagefiles/', SUBSTR(image,18), '?op=OPEN">') as cam_image

from tfimage where image like '%2017%'

Shell classify.sh

python -W ignore /opt/demo/classify_image.py

Modified TensorFlow Example Python

# Copyright 2015 The TensorFlow Authors. All Rights Reserved.
#
# Licensed under the Apache License, Version 2.0 (the "License");
# you may not use this file except in compliance with the License.
# You may obtain a copy of the License at
#
#     http://www.apache.org/licenses/LICENSE-2.0
#
# Unless required by applicable law or agreed to in writing, software
# distributed under the License is distributed on an "AS IS" BASIS,
# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
# See the License for the specific language governing permissions and
# limitations under the License.
# ==============================================================================
"""Simple image classification with Inception.

Run image classification with Inception trained on ImageNet 2012 Challenge data
set.

This program creates a graph from a saved GraphDef protocol buffer,
and runs inference on an input JPEG image. It outputs human readable
strings of the top 5 predictions along with their probabilities.

Change the --image_file argument to any jpg image to compute a
classification of that image.

Please see the tutorial and website for a detailed description of how
to use this script to perform image recognition.

https://tensorflow.org/tutorials/image_recognition/
"""

from __future__ import absolute_import
from __future__ import division
from __future__ import print_function

import argparse
import os.path
import re
import sys
import tarfile
import os
import datetime
import math
import random, string
import base64
import json
import time
import picamera
from time import sleep
from time import gmtime, strftime

import numpy as np
from six.moves import urllib
import tensorflow as tf

tf.logging.set_verbosity(tf.logging.ERROR)

FLAGS = None

# pylint: disable=line-too-long
DATA_URL = 'http://download.tensorflow.org/models/image/imagenet/inception-2015-12-05.tgz'
# pylint: enable=line-too-long

# yyyy-mm-dd hh:mm:ss
currenttime = strftime("%Y-%m-%d %H:%M:%S", gmtime())

host = os.uname()[1]


def randomword(length):
  # string.ascii_lowercase works on both Python 2 and 3 (string.lowercase is Python 2 only).
  return ''.join(random.choice(string.ascii_lowercase) for i in range(length))


class NodeLookup(object):
  """Converts integer node ID's to human readable labels."""

  def __init__(self,
               label_lookup_path=None,
               uid_lookup_path=None):
    if not label_lookup_path:
      label_lookup_path = os.path.join(
          FLAGS.model_dir, 'imagenet_2012_challenge_label_map_proto.pbtxt')
    if not uid_lookup_path:
      uid_lookup_path = os.path.join(
          FLAGS.model_dir, 'imagenet_synset_to_human_label_map.txt')
    self.node_lookup = self.load(label_lookup_path, uid_lookup_path)

  def load(self, label_lookup_path, uid_lookup_path):
    """Loads a human readable English name for each softmax node.

    Args:
      label_lookup_path: string UID to integer node ID.
      uid_lookup_path: string UID to human-readable string.

    Returns:
      dict from integer node ID to human-readable string.
    """
    if not tf.gfile.Exists(uid_lookup_path):
      tf.logging.fatal('File does not exist %s', uid_lookup_path)
    if not tf.gfile.Exists(label_lookup_path):
      tf.logging.fatal('File does not exist %s', label_lookup_path)

    # Loads mapping from string UID to human-readable string
    proto_as_ascii_lines = tf.gfile.GFile(uid_lookup_path).readlines()
    uid_to_human = {}
    p = re.compile(r'[n\d]*[ \S,]*')
    for line in proto_as_ascii_lines:
      parsed_items = p.findall(line)
      uid = parsed_items[0]
      human_string = parsed_items[2]
      uid_to_human[uid] = human_string

    # Loads mapping from string UID to integer node ID.
    node_id_to_uid = {}
    proto_as_ascii = tf.gfile.GFile(label_lookup_path).readlines()
    for line in proto_as_ascii:
      if line.startswith('  target_class:'):
        target_class = int(line.split(': ')[1])
      if line.startswith('  target_class_string:'):
        target_class_string = line.split(': ')[1]
        node_id_to_uid[target_class] = target_class_string[1:-2]

    # Loads the final mapping of integer node ID to human-readable string
    node_id_to_name = {}
    for key, val in node_id_to_uid.items():
      if val not in uid_to_human:
        tf.logging.fatal('Failed to locate: %s', val)
      name = uid_to_human[val]
      node_id_to_name[key] = name

    return node_id_to_name

  def id_to_string(self, node_id):
    if node_id not in self.node_lookup:
      return ''
    return self.node_lookup[node_id]


def create_graph():
  """Creates a graph from saved GraphDef file and returns a saver."""
  # Creates graph from saved graph_def.pb.
  with tf.gfile.FastGFile(os.path.join(
      FLAGS.model_dir, 'classify_image_graph_def.pb'), 'rb') as f:
    graph_def = tf.GraphDef()
    graph_def.ParseFromString(f.read())
    _ = tf.import_graph_def(graph_def, name='')


def run_inference_on_image(image):
  """Runs inference on an image.

  Args:
    image: Image file name.

  Returns:
    Nothing
  """
  if not tf.gfile.Exists(image):
    tf.logging.fatal('File does not exist %s', image)
  image_data = tf.gfile.FastGFile(image, 'rb').read()

  # Creates graph from saved GraphDef.
  create_graph()

  with tf.Session() as sess:
    # Some useful tensors:
    # 'softmax:0': A tensor containing the normalized prediction across
    #   1000 labels.
    # 'pool_3:0': A tensor containing the next-to-last layer containing 2048
    #   float description of the image.
    # 'DecodeJpeg/contents:0': A tensor containing a string providing JPEG
    #   encoding of the image.
    # Runs the softmax tensor by feeding the image_data as input to the graph.
    softmax_tensor = sess.graph.get_tensor_by_name('softmax:0')
    predictions = sess.run(softmax_tensor,
                           {'DecodeJpeg/contents:0': image_data})
    predictions = np.squeeze(predictions)

    # Creates node ID --> English string lookup.
    node_lookup = NodeLookup()

    top_k = predictions.argsort()[-FLAGS.num_top_predictions:][::-1]
    row = []
    for node_id in top_k:
      human_string = node_lookup.id_to_string(node_id)
      score = predictions[node_id]
      # int() converts the NumPy integer so json.dumps can always serialize it.
      row.append({
          'node_id': int(node_id),
          'image': image,
          'host': host,
          'ts': currenttime,
          'human_string': str(human_string),
          'score': str(score)})
    # Emit all predictions for this image as one JSON array on stdout.
    json_string = json.dumps(row)
    print(json_string)


def maybe_download_and_extract():
  """Download and extract model tar file."""
  dest_directory = FLAGS.model_dir
  if not os.path.exists(dest_directory):
    os.makedirs(dest_directory)
  filename = DATA_URL.split('/')[-1]
  filepath = os.path.join(dest_directory, filename)
  if not os.path.exists(filepath):
    def _progress(count, block_size, total_size):
      sys.stdout.write('\r>> Downloading %s %.1f%%' % (
          filename, float(count * block_size) / float(total_size) * 100.0))
      sys.stdout.flush()
    filepath, _ = urllib.request.urlretrieve(DATA_URL, filepath, _progress)
    print()
    statinfo = os.stat(filepath)
    print('Successfully downloaded', filename, statinfo.st_size, 'bytes.')
  tarfile.open(filepath, 'r:gz').extractall(dest_directory)


def main(_):
  maybe_download_and_extract()

  # Create unique image name
  img_name = '/opt/demo/images/pi_image_{0}_{1}.jpg'.format(
      randomword(3), strftime("%Y%m%d%H%M%S", gmtime()))

  # Capture Image from Pi Camera.
  # The camera is created before the try block so that close() is only
  # called on a camera that was actually opened.
  camera = picamera.PiCamera()
  try:
    camera.resolution = (1024, 768)
    camera.annotate_text = " Stored with Apache NiFi "
    camera.capture(img_name, resize=(600, 400))
  finally:
    camera.close()

  # image = (FLAGS.image_file if FLAGS.image_file else
  #          os.path.join(FLAGS.model_dir, 'cropped_panda.jpg'))
  run_inference_on_image(img_name)


if __name__ == '__main__':
  parser = argparse.ArgumentParser()
  # classify_image_graph_def.pb:
  #   Binary representation of the GraphDef protocol buffer.
  # imagenet_synset_to_human_label_map.txt:
  #   Map from synset ID to a human readable string.
  # imagenet_2012_challenge_label_map_proto.pbtxt:
  #   Text representation of a protocol buffer mapping a label to synset ID.
  parser.add_argument(
      '--model_dir',
      type=str,
      default='/tmp/imagenet',
      help="""
      Path to classify_image_graph_def.pb,
      imagenet_synset_to_human_label_map.txt, and
      imagenet_2012_challenge_label_map_proto.pbtxt.
      """
  )
  parser.add_argument(
      '--image_file',
      type=str,
      default='',
      help='Absolute path to image file.'
  )
  parser.add_argument(
      '--num_top_predictions',
      type=int,
      default=5,
      help='Display this many predictions.'
  )
  FLAGS, unparsed = parser.parse_known_args()
  tf.app.run(main=main, argv=[sys.argv[0]] + unparsed)

Key Additions to Python

row.append( { 'node_id': node_id, 'image': image, 'host': host, 'ts': currenttime, 'human_string': str(human_string), 'score': str(score)} )

json_string = json.dumps(row)

print( json_string )

Image Captured by Camera (Also Added "Stored with Apache NiFi" via Python)

References: