Installing Docker Version of Sandbox on Mac

Created on 10-08-2016 04:17 PM

Objective

This tutorial walks you through installing the Docker version of the HDP 2.5 Hortonworks Sandbox on a Mac. It is part one of a two-part series. The second article can be found here: HCC Article

Prerequisites

- You should already have installed Docker for Mac. (Read more here: Docker for Mac)

- You should already have downloaded the Docker version of the Hortonworks Sandbox. (Read more here: Hortonworks Sandbox)

Scope

This tutorial was tested using the following environment and components:

- Mac OS X 10.11.6

- HDP 2.5 on Hortonworks Sandbox (Docker Version)

- Docker for Mac 1.12.1

NOTE: You should adjust your Docker configuration to provide at least 8GB of RAM. I personally find things are better with 10-12GB of RAM. You can follow this article for more information: https://hortonworks.com/tutorial/sandbox-deployment-and-install-guide/section/3/#for-mac

Steps

1. Ensure the Docker daemon is running. You can verify by typing:

$ docker images

You should see something similar to this:

$ docker images
REPOSITORY          TAG                 IMAGE ID            CREATED             SIZE

If your Docker daemon is not running, you may see the following:

$ docker images
Error response from daemon: Bad response from Docker engine
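For use in a script, the same check can be reduced to an exit status. The following is a minimal sketch and not part of the original tutorial: the function name docker_ready is hypothetical, and it assumes the docker CLI is on your PATH. It relies on docker info, which exits with a non-zero status when it cannot reach the daemon.

```shell
# Hypothetical helper (not from the tutorial): succeeds only if the
# Docker daemon answers. "docker info" exits non-zero when it cannot
# reach the daemon.
docker_ready() {
    docker info >/dev/null 2>&1
}
```

For example, docker_ready || echo "Start Docker for Mac first" prints the reminder only when the daemon is unreachable.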

2. Load the Hortonworks sandbox image into Docker:

$ docker load < HDP_2.5_docker.tar.gz

You should see something similar to this:

$ docker load < HDP_2.5_docker.tar.gz
b1b065555b8a: Loading layer [==================================================>] 202.2 MB/202.2 MB
0b547722f59f: Loading layer [==================================================>] 13.84 GB/13.84 GB
99d7327952e0: Loading layer [==================================================>] 234.8 MB/234.8 MB
294b1c0e07bd: Loading layer [==================================================>] 207.5 MB/207.5 MB
fd5c10f2f1a1: Loading layer [==================================================>] 387.6 kB/387.6 kB
6852ef70321d: Loading layer [==================================================>] 163 MB/163 MB
517f170bbf7f: Loading layer [==================================================>] 20.98 MB/20.98 MB
665edb80fc91: Loading layer [==================================================>] 337.4 kB/337.4 kB
Loaded image: sandbox:latest

3. Verify the image was successfully imported:

$ docker images

You should see something similar to this:

$ docker images
REPOSITORY          TAG                 IMAGE ID            CREATED             SIZE
sandbox             latest              fc813bdc4bdd        3 days ago          14.57 GB

4. Start the container:

The first time you start the container, you need to create it with the run command, which both creates and starts the container.

$ docker run -v hadoop:/hadoop --name sandbox --hostname "sandbox.hortonworks.com" --privileged -d \
-p 6080:6080 \
-p 9090:9090 \
-p 9000:9000 \
-p 8000:8000 \
-p 8020:8020 \
-p 42111:42111 \
-p 10500:10500 \
-p 16030:16030 \
-p 8042:8042 \
-p 8040:8040 \
-p 2100:2100 \
-p 4200:4200 \
-p 4040:4040 \
-p 8050:8050 \
-p 9996:9996 \
-p 9995:9995 \
-p 8080:8080 \
-p 8088:8088 \
-p 8886:8886 \
-p 8889:8889 \
-p 8443:8443 \
-p 8744:8744 \
-p 8888:8888 \
-p 8188:8188 \
-p 8983:8983 \
-p 1000:1000 \
-p 1100:1100 \
-p 11000:11000 \
-p 10001:10001 \
-p 15000:15000 \
-p 10000:10000 \
-p 8993:8993 \
-p 1988:1988 \
-p 5007:5007 \
-p 50070:50070 \
-p 19888:19888 \
-p 16010:16010 \
-p 50111:50111 \
-p 50075:50075 \
-p 50095:50095 \
-p 18080:18080 \
-p 60000:60000 \
-p 8090:8090 \
-p 8091:8091 \
-p 8005:8005 \
-p 8086:8086 \
-p 8082:8082 \
-p 60080:60080 \
-p 8765:8765 \
-p 5011:5011 \
-p 6001:6001 \
-p 6003:6003 \
-p 6008:6008 \
-p 1220:1220 \
-p 21000:21000 \
-p 6188:6188 \
-p 61888:61888 \
-p 2181:2181 \
-p 2222:22 \
sandbox /usr/sbin/sshd -D
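The long list of -p options follows one pattern: each port is published on the same port number on the host, except SSH, which maps host port 2222 to container port 22. As a minimal sketch (the helper name port_flags is hypothetical, and only a few of the ports are used in the example), the options could be generated from a list rather than typed by hand:

```shell
# Hypothetical helper: print one "-p PORT:PORT" option per line for
# every port passed as an argument.
port_flags() {
    for port in "$@"; do
        printf '%s %s:%s\n' -p "$port" "$port"
    done
}

# Example with a handful of the ports from the command above.
port_flags 8080 8888 50070
```

In bash, an unquoted $(port_flags 8080 8888 50070) placed inside the docker run command is split into separate arguments, so it can stand in for the corresponding -p lines.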

Note: Mounting local drives to the sandbox

If you would like to mount local drives on the host to your sandbox, you need to add another -v option to the command above. I typically recommend creating a working directory for each of your Docker containers, such as /Users/<username>/Development/sandbox or /Users/<username>/Development/hdp25-demo-sandbox. You can then copy the docker run command above into a script called create_container.sh in that directory and change the --name option to a unique value that matches the directory name.

Let's look at an example. In this scenario I'm going to create a directory called /Users/<username>/Development/hdp25-demo-sandbox, where I will create my create_container.sh script. The first line of that script is shown below; the remaining lines (the -p options, the image name, and /usr/sbin/sshd -D) are the same as in the command above:

$ docker run -v `pwd`:`pwd` -v hadoop:/hadoop --name hdp25-demo-sandbox --hostname "sandbox.hortonworks.com" --privileged -d \

Once the container is running you will notice the container has /Users/<username>/Development/hdp25-demo-sandbox as a mount. This is similar in nature/concept to the /vagrant mount when using Vagrant. This allows you to easily share data between the container and your host without having to copy the data around.
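A create_container.sh along these lines could also take the container name from the directory it runs in, so the script can be copied between project directories unchanged. This is a minimal sketch under that assumption: the function name container_name is hypothetical, and the docker run line is only echoed here (with the -p options omitted) rather than executed.

```shell
# Hypothetical helper: use the last component of a directory path as
# the container name.
container_name() {
    basename "$1"
}

name=$(container_name "$(pwd)")

# Print the start of the command that would be run. The -p options,
# the image name and "/usr/sbin/sshd -D" from the full command above
# would follow.
echo "docker run -v $(pwd):$(pwd) -v hadoop:/hadoop --name $name --hostname sandbox.hortonworks.com --privileged -d"
```

Run from /Users/<username>/Development/hdp25-demo-sandbox, the sketch would use hdp25-demo-sandbox as the container name.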

Once the container is created and running, Docker will display a CONTAINER ID for the container. You should see something similar to this:

fe57fe79f795905daa50191f92ad1f589c91043a30f7153899213a0cadaa5631

For all future container starts, you only need to run the docker start command:

$ docker start sandbox

Notice that sandbox is the name of the container in the run command used above.

If you name the container the same name as the container project directory, like hdp25-demo-sandbox above, it will make it easier to remember what the container name is. However, you can always create a start_container.sh script that includes the above start command. Similarly you can create a stop_container.sh script that stops the container.
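The start and stop scripts can be very small. As a minimal sketch, the hypothetical function below (container_ctl is not a name from this tutorial) covers both cases and defaults to the container name sandbox used earlier:

```shell
# Hypothetical wrapper combining start_container.sh and
# stop_container.sh: the first argument is the action, the second is
# the container name (defaults to "sandbox").
container_ctl() {
    action=$1
    name=${2:-sandbox}
    case "$action" in
        start|stop)
            docker "$action" "$name"
            ;;
        *)
            echo "usage: container_ctl start|stop [name]" >&2
            return 2
            ;;
    esac
}
```

For example, container_ctl start hdp25-demo-sandbox runs docker start hdp25-demo-sandbox.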

5. Verify the container is running:

$ docker ps

You should see something similar to this:

$ docker ps
CONTAINER ID        IMAGE               COMMAND               CREATED             STATUS              PORTS               NAMES
85d7ec7201d8        sandbox             "/usr/sbin/sshd -D"   31 seconds ago      Up 27 seconds       0.0.0.0:1000->1000/tcp, 0.0.0.0:1100->1100/tcp, 0.0.0.0:1220->1220/tcp, 0.0.0.0:1988->1988/tcp, 0.0.0.0:2100->2100/tcp, 0.0.0.0:4040->4040/tcp, 0.0.0.0:4200->4200/tcp, 0.0.0.0:5007->5007/tcp, 0.0.0.0:5011->5011/tcp, 0.0.0.0:6001->6001/tcp, 0.0.0.0:6003->6003/tcp, 0.0.0.0:6008->6008/tcp, 0.0.0.0:6080->6080/tcp, 0.0.0.0:6188->6188/tcp, 0.0.0.0:8000->8000/tcp, 0.0.0.0:8005->8005/tcp, 0.0.0.0:8020->8020/tcp, 0.0.0.0:8040->8040/tcp, 0.0.0.0:8042->8042/tcp, 0.0.0.0:8050->8050/tcp, 0.0.0.0:8080->8080/tcp, 0.0.0.0:8082->8082/tcp, 0.0.0.0:8086->8086/tcp, 0.0.0.0:8088->8088/tcp, 0.0.0.0:8090-8091->8090-8091/tcp, 0.0.0.0:8188->8188/tcp, 0.0.0.0:8443->8443/tcp, 0.0.0.0:8744->8744/tcp, 0.0.0.0:8765->8765/tcp, 0.0.0.0:8886->8886/tcp, 0.0.0.0:8888-8889->8888-8889/tcp, 0.0.0.0:8983->8983/tcp, 0.0.0.0:8993->8993/tcp, 0.0.0.0:9000->9000/tcp, 0.0.0.0:9090->9090/tcp, 0.0.0.0:9995-9996->9995-9996/tcp, 0.0.0.0:10000-10001->10000-10001/tcp, 0.0.0.0:10500->10500/tcp, 0.0.0.0:11000->11000/tcp, 0.0.0.0:15000->15000/tcp, 0.0.0.0:16010->16010/tcp, 0.0.0.0:16030->16030/tcp, 0.0.0.0:18080->18080/tcp, 0.0.0.0:19888->19888/tcp, 0.0.0.0:21000->21000/tcp, 0.0.0.0:42111->42111/tcp, 0.0.0.0:50070->50070/tcp, 0.0.0.0:50075->50075/tcp, 0.0.0.0:50095->50095/tcp, 0.0.0.0:50111->50111/tcp, 0.0.0.0:60000->60000/tcp, 0.0.0.0:60080->60080/tcp, 0.0.0.0:61888->61888/tcp, 0.0.0.0:2222->22/tcp   sandbox

Notice the CONTAINER ID is the shortened version of the ID displayed when you ran the run command.
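Because the docker ps output is wide, a script may prefer a yes/no answer. The following is a minimal sketch, assuming the container is named sandbox as above; the function name container_running is hypothetical. It uses docker inspect with a format template to read the container's running state.

```shell
# Hypothetical helper: succeeds if the named container exists and is
# running. "docker inspect -f" prints a field from the container's
# metadata using a Go template.
container_running() {
    state=$(docker inspect -f '{{.State.Running}}' "$1" 2>/dev/null)
    [ "$state" = "true" ]
}
```

For example, container_running sandbox && echo "sandbox is up" prints the message only while the container is running.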

6. To stop the container

Once the container is running, you can stop it using the following command:

$ docker stop sandbox

7. To connect to the container

You connect to the container via ssh using the following command:

$ ssh -p 2222 root@localhost

The initial root password for the container is hadoop. The first time you log into the container, you will be prompted to change it.

8. Start sandbox services

The Ambari and HDP services do not start automatically when you start the Docker container. You need to log in and start them with a script:

$ ssh -p 2222 root@localhost
$ /etc/init.d/startup_script start

You should see something similar to this (you can ignore any warnings):

# /etc/init.d/startup_script start
Starting tutorials... [ Ok ]
Starting startup_script...
Starting HDP ...
Starting mysql [ OK ]
Starting Flume [ OK ]
Starting Postgre SQL [ OK ]
Starting Ranger-admin [ OK ]
Starting name node [ OK ]
Starting Ranger-usersync [ OK ]
Starting data node [ OK ]
Starting Zookeeper nodes [ OK ]
Starting Oozie [ OK ]
Starting Ambari server [ OK ]
Starting NFS portmap [ OK ]
Starting Hdfs nfs [ OK ]
Starting Hive server [ OK ]
Starting Hiveserver2 [ OK ]
Starting Ambari agent [ OK ]
Starting Node manager [ OK ]
Starting Yarn history server [ OK ]
Starting Resource manager [ OK ]
Starting Webhcat server [ OK ]
Starting Spark [ OK ]
Starting Mapred history server [ OK ]
Starting Zeppelin [ OK ]
Safe mode is OFF
Starting sandbox...
./startup_script: line 97: /proc/sys/kernel/hung_task_timeout_secs: No such file or directory
Starting shellinaboxd: [ OK ]
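If you prefer not to keep an interactive session open, ssh can run the startup script as a single remote command. This is a minimal sketch, assuming the root password has already been changed during a first interactive login and that host port 2222 is mapped to the container's SSH port as in step 4; the function name start_sandbox_services is hypothetical.

```shell
# Hypothetical helper: run the sandbox startup script over ssh in one
# step. The optional argument is the host port mapped to the
# container's port 22 (defaults to 2222, as in step 4).
start_sandbox_services() {
    port=${1:-2222}
    ssh -p "$port" root@localhost /etc/init.d/startup_script start
}
```

Calling start_sandbox_services with no arguments is equivalent to typing the two commands from step 8 by hand.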

9. You can now connect to your HDP instance via a web browser at http://localhost:8888
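The services can take a while to come up, so the page may not respond immediately. As a minimal sketch, assuming curl is installed on the host, the hypothetical helper below (wait_for_url is not part of the sandbox) polls the URL until it answers or the attempts run out; the default of 30 attempts at 10-second intervals is an arbitrary choice.

```shell
# Hypothetical helper: poll a URL until it answers or the attempts run
# out. Arguments: URL, number of attempts, seconds between attempts.
wait_for_url() {
    url=$1
    attempts=${2:-30}
    delay=${3:-10}
    i=0
    while [ "$i" -lt "$attempts" ]; do
        # -s: silent, -f: treat HTTP error responses as failure,
        # -o /dev/null: discard the response body.
        if curl -sf -o /dev/null "$url"; then
            return 0
        fi
        i=$((i + 1))
        sleep "$delay"
    done
    return 1
}
```

For example, wait_for_url http://localhost:8888 && echo "Sandbox is reachable" prints the message once the page responds.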

Created on 10-08-2016 04:17 PM

Great step-by-step instruction. You can skip Step 2 and replace step 3 with

docker load < HDP_2.5_docker.tar.gz

Created on 10-08-2016 04:17 PM

Thank you for the feedback. I didn't realize that you could load the .tar.gz file directly.

Created on 01-24-2017 10:05 AM

I am trying to spin up the 2.5 sandbox on a VMware vSphere environment. I deploy the .ova with no issues but I need to set a static IP on it. Do I just modify the "docker run" command in the start_sandbox.sh? Thanks for any help.

Created on 01-27-2017 05:22 PM

Have trouble when I get to the part with

docker run -v `pwd`:`pwd` -v hadoop:/hadoop --name hdp25-demo-sandbox --hostname "sandbox.hortonworks.com" --privileged -d \

It says "docker run requires at least 1 argument"...

Created on 01-30-2017 03:43 PM

All of the lines in that code box have to be pasted together. The \ character at the end of each line is a continuation character and the shell appends the next line. I'm guessing you have to hit enter twice for it to give you the error. Just paste the whole window full of lines at the same time and it will run.

John

Created on 10-01-2017 06:19 PM - edited 08-17-2019 09:30 AM

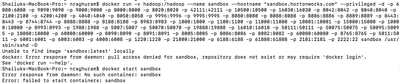

Hi, I was following this article to install the sandbox on Docker.

I was able to complete step 3 successfully. When I execute the command docker images, I can see sandbox-hdp.

I am stuck on step 4. I ran the command that you have in step 4 and I get this error.

Am I doing it correctly? Should I change the hostname? I am a beginner. Please help.

Created on 10-06-2017 01:56 AM

This was a big help to me in getting the Docker sandbox up and running. The Sandbox doc says that a bunch of services are started automatically, but alas, they are not. Once I read your instructions for starting the service processes, the mystery was solved. Thanks!

Created on 12-13-2017 03:30 PM

The image is now called sandbox-hdp, i.e.:

cloudusr@dev2:~/hdp$ sudo docker load < ./HDP_2.6.3_docker_10_11_2017.tar
e00c9229b481: Loading layer [==================================================>] 202.7MB/202.7MB
6d029676b777: Loading layer [==================================================>] 12.25GB/12.25GB
8f8f12de0582: Loading layer [==================================================>] 2.56kB/2.56kB
Loaded image: sandbox-hdp:latest

Therefore, if you use the command as documented (cut and pasted into my script), it fails:

cloudusr@dev2:~/hdp$ sudo ./runhdp.sh
Unable to find image 'sandbox:latest' locally
docker: Error response from daemon: pull access denied for sandbox, repository does not exist or may require 'docker login'.
See 'docker run --help'.

The fix is to use 'sandbox-hdp' as the name of the Docker image. This is the argument near the end of the docker run command, just before /usr/sbin/sshd -D (the --name option can be left as is).

Created on 12-15-2017 03:58 PM

Change 'sandbox' to whatever the image name is in your local image repository. Alternatively, you can specify the image ID shown in the IMAGE ID column of docker images.
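To find the name to use, you can list the local images whose repository name starts with sandbox. A minimal sketch, with a hypothetical function name (sandbox_images) and assuming the image name still begins with "sandbox" as in the releases mentioned in this thread:

```shell
# Hypothetical helper: print the repository names of local images that
# start with "sandbox" (for example "sandbox" or "sandbox-hdp").
sandbox_images() {
    docker images --format '{{.Repository}}' | grep '^sandbox'
}
```

The name it prints is what goes in place of sandbox just before /usr/sbin/sshd -D in the docker run command.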

Created on 07-16-2018 05:03 AM

I could not see the startup_script start script in /etc/init.d/ folder. Please help me!