Apache Deep Learning 101: Using Apache MXNet on Th...

Created on 03-05-2018 07:38 PM - edited 09-16-2022 01:42 AM

This is for people preparing to attend my talk on Deep Learning at DataWorks Summit Berlin 2018 (https://dataworkssummit.com/berlin-2018/#agenda) on Thursday, April 19, 2018 at 11:50 AM Berlin time.

This is for running Apache MXNet on a Raspberry Pi.

Let's get this installed!

    git clone https://github.com/apache/incubator-mxnet.git

The installation instructions on the Apache MXNet website (http://mxnet.incubator.apache.org/install/index.html) are amazing. Pick your platform and your style. I am taking the simplest route, the Linux path.

Installation:

This builds on previous builds, so see those articles. We installed the drivers for the Sense HAT, Intel Movidius and the USB webcam previously. Please note that versions for Raspberry Pi, Apache MXNet, Python and other drivers are updated every few months, so if you are reading this after DataWorks Summit 2018 you should check the relevant libraries and update to the latest versions.

You need Python, the Python development headers and pip installed, and you may need to run as root. You will also need OpenCV installed, as mentioned in the previous article.

In this combined Python script we read the Sense HAT sensors for temperature, humidity and more. We also run Movidius image analysis and Apache MXNet Inception on the image that we capture with our webcam. Apache MXNet is now at version 1.1, so you may want to upgrade.
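To make the shape of that combined script's output more concrete, here is a minimal sketch of how sensor readings and classifier results could be merged into one flat JSON record. It is not the author's script: the function name `build_record`, the field names, and the sample values are all hypothetical, and on the Pi the inputs would come from the Sense HAT and the image classifier rather than from literals.

```python
import json
import time
import uuid


def build_record(sensors, predictions, image_path):
    """Merge sensor readings and classifier output into one flat dict.

    sensors:     dict of reading name -> numeric value (hypothetical names)
    predictions: list of (label, probability) pairs from a classifier
    image_path:  path of the captured image the predictions refer to
    """
    record = {
        "uuid": str(uuid.uuid4()),
        "ts": time.strftime("%Y-%m-%d %H:%M:%S", time.gmtime()),
        "imagefilename": image_path,
    }
    # Round sensor values so the JSON stays compact.
    for name, value in sensors.items():
        record[name] = round(float(value), 2)
    # Keep only the most probable prediction, if there is one.
    if predictions:
        label, prob = max(predictions, key=lambda pair: pair[1])
        record["top_label"] = label
        record["top_prob"] = round(float(prob), 4)
    return record


if __name__ == "__main__":
    # Placeholder values for illustration only.
    sample = build_record(
        {"temperature": 23.5, "humidity": 41.25},
        [("tabby cat", 0.81), ("tiger cat", 0.11)],
        "/tmp/capture.jpg",
    )
    print(json.dumps(sample))
```

A flat record like this is easy to print to standard output, which is a convenient way to hand data from a Python script to a flow.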

    # Upgrade pip and install scikit-image
    pip install --upgrade pip
    pip install scikit-image

    # Clone the mxnet_rpi repository
    git clone https://github.com/tspannhw/mxnet_rpi.git

    # System packages and build tools
    sudo apt-get update -y
    sudo apt-get install python-pip python-opencv python-scipy python-picamera -y
    sudo apt-get -y install git cmake build-essential g++-4.8 c++-4.8 liblapack* libblas* libopencv*

    # Build Apache MXNet 1.0.0 from source; the clone target directory is "mxnet"
    git clone --recursive https://github.com/apache/incubator-mxnet.git mxnet --branch 1.0.0
    cd mxnet
    export USE_OPENCV=0
    make

    # Install the Python bindings from the source tree
    cd python
    pip install --upgrade pip
    pip install -e .
    pip install mxnet==1.0.0
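After the install steps above, a quick sanity check is to ask Python which of the expected modules it can actually find. The sketch below uses only the standard library. The module names in `wanted` are my assumption about what the packages above provide (`cv2` for OpenCV, `skimage` for scikit-image), and `missing_modules` is a hypothetical helper name.

```python
import importlib.util


def missing_modules(names):
    """Return the top-level module names that this interpreter cannot find."""
    return [name for name in names if importlib.util.find_spec(name) is None]


if __name__ == "__main__":
    # Assumed import names for the packages installed above.
    wanted = ["mxnet", "cv2", "skimage", "scipy"]
    missing = missing_modules(wanted)
    print("missing:", ", ".join(missing) if missing else "none")
```

This only looks the modules up without importing them, so it runs quickly even on a Raspberry Pi. It does not prove that a module imports cleanly.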

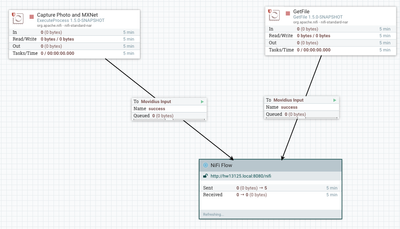

MiNiFi Flow to Run Python Script and Send Over Images (Running on Raspberry Pi)

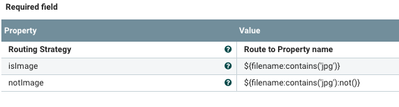

Routing on Server to Process Either an Image or a JSON

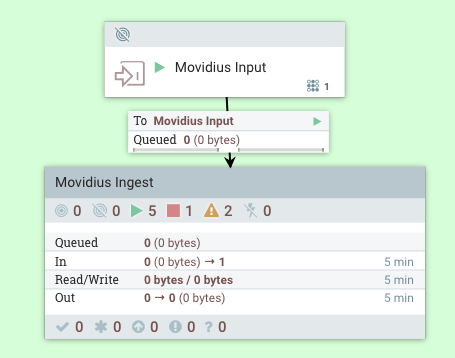

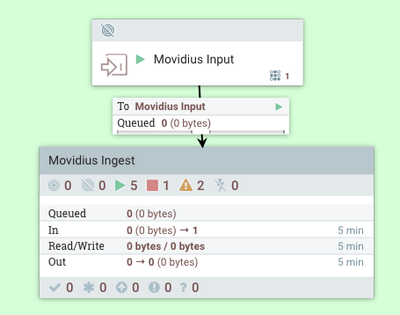

Our Apache NiFi Server Receiving Input from Raspberry Pi

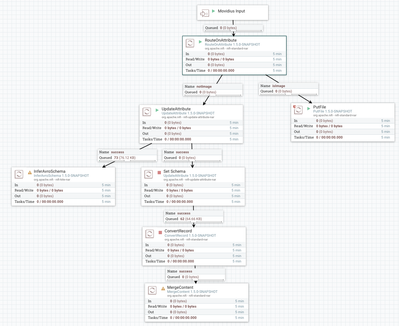

Apache NiFi Server Processing The Input

We route to two different processing flows: one saves images, and the other adds a schema and converts the JSON data into Apache Avro. The Avro content is merged and sent to a central HDF 3.1 cluster that can write to HDFS. We can either stream to an ACID Hive table, or convert the Avro to Apache ORC, store it in HDFS and autogenerate an external Hive table on top of it. You can find many examples of both of these processes in my links below. We could also insert into Apache HBase or into an Apache Phoenix table. Or do all of those and also send it to Slack or email, store it in an RDBMS like MySQL, or anything else you can think of.
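The routing decision described above happens inside Apache NiFi in this flow. Purely as an illustration of the idea, and not as the flow's actual configuration, here is a small sketch that classifies a raw payload as an image or as JSON. The function name `route` and the returned labels are hypothetical, and the image check covers only the JPEG and PNG signatures.

```python
import json

JPEG_MAGIC = b"\xff\xd8\xff"
PNG_MAGIC = b"\x89PNG\r\n\x1a\n"


def route(payload: bytes) -> str:
    """Return 'image', 'json', or 'unmatched' for a raw payload."""
    # Image formats are recognized by their leading signature bytes.
    if payload.startswith(JPEG_MAGIC) or payload.startswith(PNG_MAGIC):
        return "image"
    # Anything that decodes as UTF-8 and parses as JSON goes to the JSON flow.
    try:
        json.loads(payload.decode("utf-8"))
        return "json"
    except ValueError:
        # Covers both UnicodeDecodeError and json.JSONDecodeError.
        return "unmatched"


if __name__ == "__main__":
    print(route(b'{"temperature": 23.5}'))
    print(route(JPEG_MAGIC + b"\xe0rest-of-file"))
```

Keeping an explicit "unmatched" outcome mirrors the usual practice of giving unexpected input its own path, so that it is not silently dropped.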

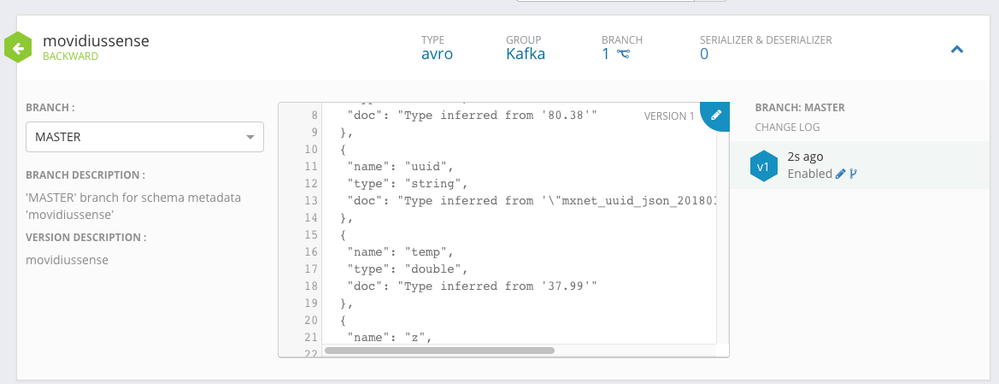

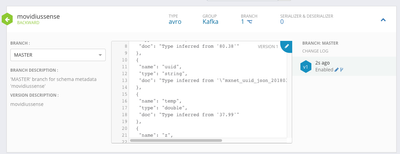

Generated Schema
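For readers who have not seen an Avro schema before, the sketch below shows one way a record schema could be derived from a single flat JSON object. It is an illustration under simplifying assumptions (no nested objects or arrays, whole numbers mapped to `long`, nulls mapped to a nullable string), not the schema inference that NiFi itself performs. The name `infer_avro_schema` and the default record name are hypothetical.

```python
import json


def infer_avro_schema(record, name="sensor_record"):
    """Build an Avro record schema (as a dict) from one flat JSON object."""

    def avro_type(value):
        # bool must be tested before int, because bool is a subclass of int.
        if isinstance(value, bool):
            return "boolean"
        if isinstance(value, int):
            return "long"
        if isinstance(value, float):
            return "double"
        if value is None:
            return ["null", "string"]
        return "string"

    fields = [{"name": key, "type": avro_type(value)} for key, value in record.items()]
    return {"type": "record", "name": name, "fields": fields}


if __name__ == "__main__":
    # Placeholder record for illustration only.
    sample = {"top_label": "tabby cat", "top_prob": 0.81, "temperature": 23.5}
    print(json.dumps(infer_avro_schema(sample), indent=2))
```

Inferring from one record is fragile, since a field that happens to hold a whole number once may hold a fraction later. A hand-reviewed schema is the safer choice for anything long-lived.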

Running:

We are using Apache MiNiFi Java Agent 0.3.0. I will be adding a follow-up that includes MiNiFi 0.4.0 with the native C++ TensorFlow and USB Cam. See this awesome article for TensorFlow: https://community.hortonworks.com/articles/174520/minifi-c-iot-cat-sensor.html

Source Code:

https://github.com/tspannhw/rpi-mxnet-movidius-minifi

This is too easy!

References:

- https://github.com/tspannhw/ApacheBigData101/

- https://community.hortonworks.com/articles/171960/using-apache-mxnet-on-an-apache-nifi-15-instance-w...

- https://community.hortonworks.com/articles/174227/apache-deep-learning-101-using-apache-mxnet-on-an....

- https://community.hortonworks.com/articles/174399/apache-deep-learning-101-using-apache-mxnet-on-apa...

- https://community.hortonworks.com/articles/176784/deep-learning-101-using-apache-mxnet-in-dsx-notebo...

- https://community.hortonworks.com/articles/176789/apache-deep-learning-101-using-apache-mxnet-in-apa...

- https://community.hortonworks.com/articles/174538/apache-deep-learning-101-using-apache-mxnet-with-h...

- https://community.hortonworks.com/articles/83100/deep-learning-iot-workflows-with-raspberry-pi-mqtt....

- https://community.hortonworks.com/articles/167193/building-and-running-minifi-cpp-in-orangepi-zero.h...

- https://community.hortonworks.com/articles/118132/minifi-capturing-converting-tensorflow-inception-t...

- https://community.hortonworks.com/articles/130814/sensors-and-image-capture-and-deep-learning-analys...

- https://community.hortonworks.com/articles/155475/powering-apache-minifi-flows-with-a-movidius-neura...

- http://mxnet.incubator.apache.org/install/index.html

- https://mxnet.incubator.apache.org/tutorials/embedded/wine_detector.html

- https://github.com/tspannhw/mxnet-in-notebooks

- https://github.com/tspannhw/nifi-mxnet-yarn/

- https://github.com/tspannhw/nvidiajetsontx1-mxnet

- https://github.com/tspannhw/mxnet_rpi

- https://github.com/tspannhw/rpi-sensehat-minifi-python/

- https://github.com/tspannhw/rpi-minifi-movidius-sensehat