Created on 07-14-2018 09:29 PM - edited 08-17-2019 07:11 AM

Scanning Documents into Data Lakes via Tesseract, Python, OpenCV and Apache NiFi

Source: https://github.com/tspannhw/nifi-tesseract-python

There are many awesome open source tools available to integrate with your Big Data Streaming flows.

Take a look at these articles for installation and why the new version of Tesseract is different.

I am officially recommending Python 3.6 or newer. Please don't use Python 2.7 if you don't have to. Friends don't let friends use old Python.

Tesseract 4 with Deep Learning

https://www.learnopencv.com/deep-learning-based-text-recognition-ocr-using-tesseract-and-opencv/

Github: https://github.com/spmallick/learnopencv/tree/master/OCR

For installation on a Mac Laptop:

brew install tesseract --HEAD

pip3.6 install pytesseract

brew install leptonica

Note: if you have tesseract already, you may need to uninstall and unlink it first with brew. If you don't use brew, you can install another way.

Summary

- Execute run.sh (https://github.com/tspannhw/nifi-tesseract-python/blob/master/pytesstest.py).

- It sends an MQTT message containing the text and some other attributes, in JSON format, to the tesseract topic on the specified MQTT broker.

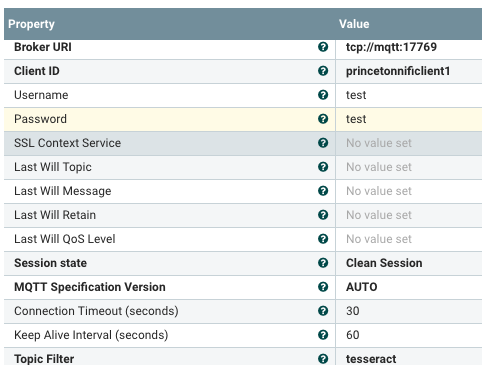

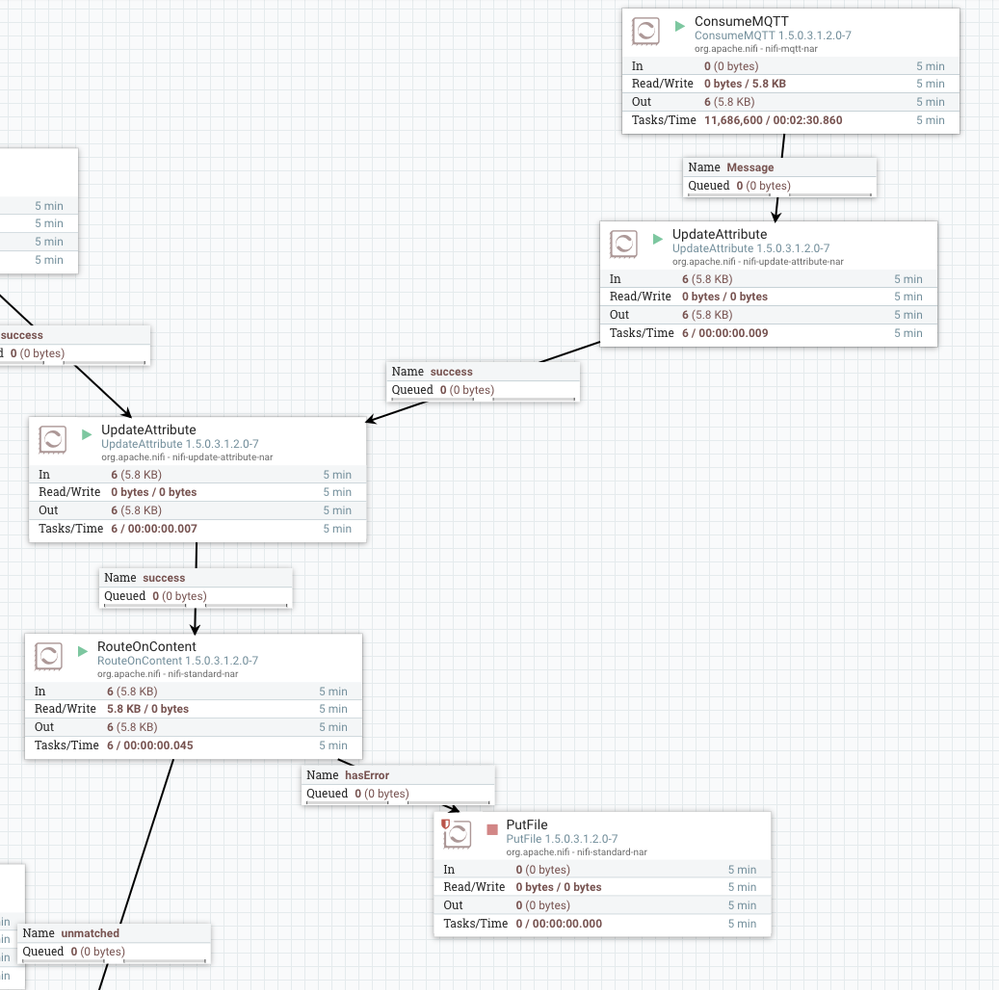

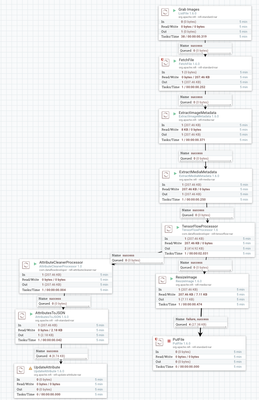

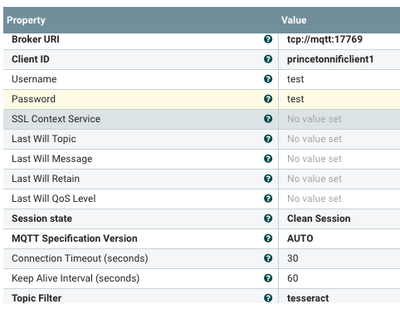

- Apache NiFi will read from this topic via ConsumeMQTT

- The flow checks to see if it's valid JSON via RouteOnContent.

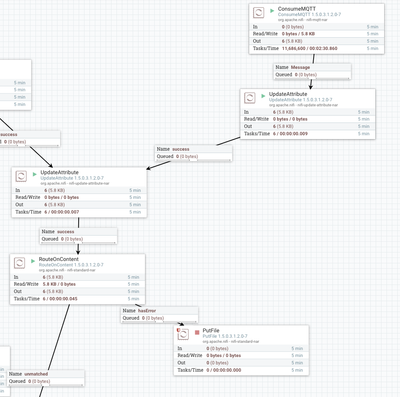

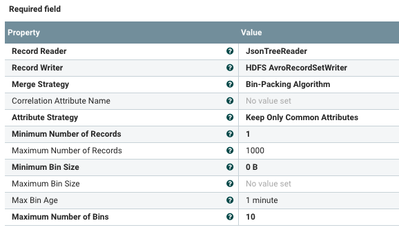

- We run MergeRecord to merge the individual JSON records into one big Apache Avro file

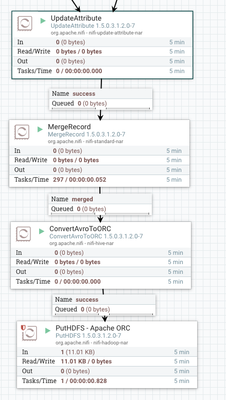

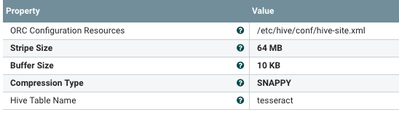

- Then we run ConvertAvroToORC to make a superfast Apache ORC file for storage

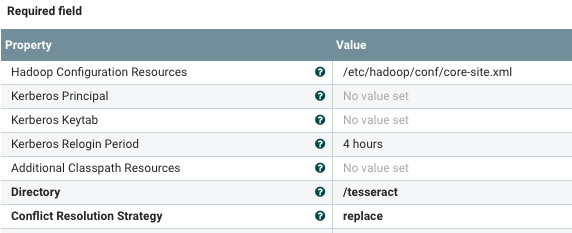

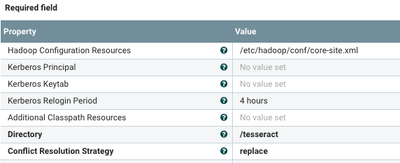

- Then we store it in HDFS via PutHDFS
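To make the logic of the first steps concrete, here is a minimal plain-Python sketch of what the JSON check and the merge step do conceptually. It is not NiFi code and not how the processors are implemented; the function names `route_on_valid_json` and `merge_records` and the `max_records` parameter are hypothetical, chosen only for illustration.

```python
import json


def route_on_valid_json(messages):
    """Split raw MQTT payloads into (valid_records, invalid_payloads).

    Illustrative stand-in for the validity check in the flow.
    """
    valid, invalid = [], []
    for raw in messages:
        try:
            record = json.loads(raw)
        except (TypeError, ValueError):
            # Not parseable as JSON at all.
            invalid.append(raw)
            continue
        # Only JSON objects can become rows in the merged file.
        if isinstance(record, dict):
            valid.append(record)
        else:
            invalid.append(raw)
    return valid, invalid


def merge_records(records, max_records=1000):
    """Group records into batches of at most max_records each."""
    return [records[i:i + max_records]
            for i in range(0, len(records), max_records)]
```

In the real flow, each batch would be written out as one Avro file and then converted to ORC; here the batches are just Python lists.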

Running The Python Script

You could also hook this up to a scanner or point it at a directory, and you could schedule it to run every 30 seconds or so. I had it hooked up to a local Apache NiFi instance to schedule runs. It can also be run by the MiNiFi Java agent or the MiNiFi C++ agent, or on demand if you wish.
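For orientation, a rough sketch of how one image could be turned into a JSON record is shown below. It is not the script from the repository: `build_record` and `default_ocr` are hypothetical names, the field names are borrowed from the schema later in this article, and the battery, cpu, diskusage and memory fields are left out because they need extra system libraries. The OCR call is passed in as a parameter so the record-building logic can be tried without Tesseract installed.

```python
import json
import socket
import time
import uuid
from datetime import datetime


def default_ocr(image_path):
    """Run Tesseract on one image; needs pytesseract and Pillow installed."""
    import pytesseract
    from PIL import Image
    return pytesseract.image_to_string(Image.open(image_path))


def build_record(image_path, ocr=default_ocr):
    """Build one record for an image (hypothetical helper)."""
    start = time.time()
    text = ocr(image_path)
    end = time.time()
    stamp = datetime.now().strftime("%Y%m%d%H%M%S")
    return {
        "text": text,
        "imgname": image_path,
        "host": socket.gethostname(),
        "end": str(end),                # finish time, seconds since epoch
        "te": str(end - start),         # elapsed OCR time in seconds
        "systemtime": datetime.now().strftime("%m/%d/%Y %H:%M:%S"),
        "id": "{0}_{1}".format(stamp, uuid.uuid4()),
    }
```

A record built this way can be serialized with `json_string = json.dumps(build_record("images/page.jpg"))` and then published over MQTT; the image path here is only a placeholder.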

Sending MQTT Messages From Python

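# MQTT

import paho.mqtt.client as mqtt

# Note: newer paho-mqtt releases (2.x) may require a callback API version here,

# e.g. mqtt.Client(mqtt.CallbackAPIVersion.VERSION2)

client = mqtt.Client()

client.username_pw_set("user","pass")

client.connect("server.server.com", 17769, 60)

# json_string holds the JSON-encoded OCR result

client.publish("tesseract", payload=json_string, qos=0, retain=True)

You will need to run: pip3 install paho-mqtt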

Create the HDFS Directory

hdfs dfs -mkdir -p /tesseract

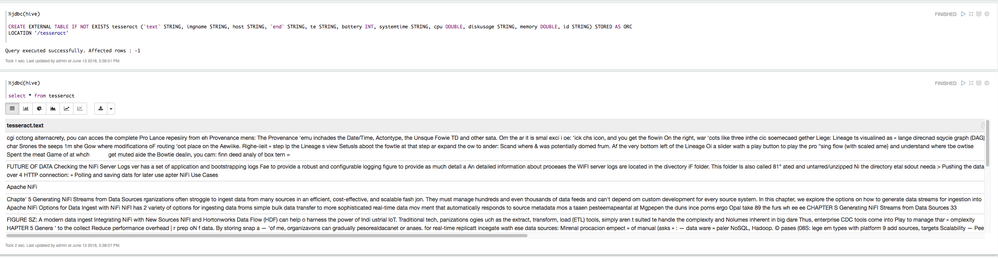

Create the External Hive Table (DDL Built by NiFi)

CREATE EXTERNAL TABLE IF NOT EXISTS tesseract (`text` STRING, imgname STRING, host STRING, `end` STRING, te STRING, battery INT, systemtime STRING, cpu DOUBLE, diskusage STRING, memory DOUBLE, id STRING) STORED AS ORC LOCATION '/tesseract';

This DDL is a side effect of the flow: it is built by our ORC conversion and HDFS storage steps.

You could run that create script in Hive View 2, Beeline or another Apache Hive JDBC/ODBC tool. I used Apache Zeppelin since I am going to be doing queries there anyway.
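To show where such a DDL statement comes from, here is a hand-rolled approximation that derives it from an Avro-style schema. It is not NiFi's generator; `avro_to_hive_ddl`, the type map, and the `QUOTED` set are assumptions made for illustration, and only flat records with primitive types are handled.

```python
# Assumed mapping from Avro primitive types to Hive column types.
AVRO_TO_HIVE = {
    "string": "STRING",
    "int": "INT",
    "long": "BIGINT",
    "float": "FLOAT",
    "double": "DOUBLE",
    "boolean": "BOOLEAN",
}

# Column names that are backticked in the DDL above (illustrative set).
QUOTED = {"text", "end"}


def avro_to_hive_ddl(schema, location):
    """Build a CREATE EXTERNAL TABLE statement from a flat Avro record schema."""
    columns = []
    for field in schema["fields"]:
        name = field["name"]
        if name in QUOTED:
            name = "`" + name + "`"
        columns.append("{0} {1}".format(name, AVRO_TO_HIVE[field["type"]]))
    return (
        "CREATE EXTERNAL TABLE IF NOT EXISTS {0} ({1}) "
        "STORED AS ORC LOCATION '{2}'"
    ).format(schema["name"], ", ".join(columns), location)
```

Calling `avro_to_hive_ddl(schema, "/tesseract")` with a schema dictionary shaped like the one in the Schema Registry section yields a statement of the same form as the DDL above.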

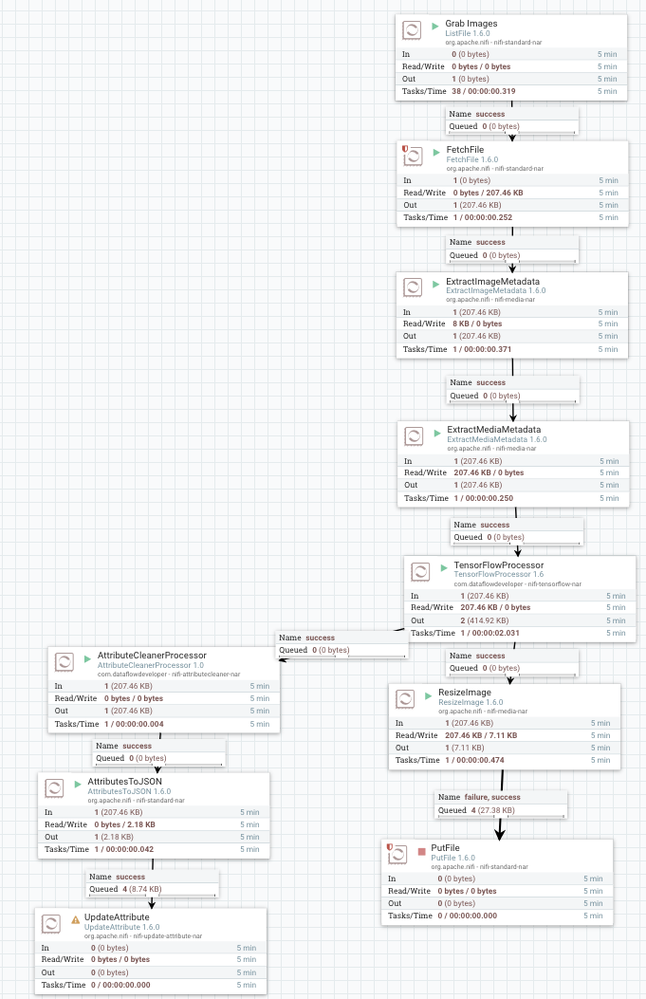

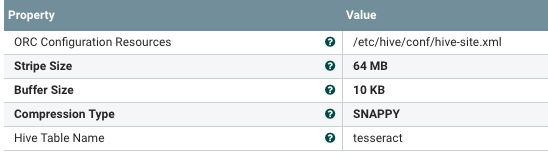

Let's Ingest Our Captured Images, Process Them with Apache Tika and TensorFlow, and Grab the Metadata

Consume MQTT Records and Store in Apache Hive

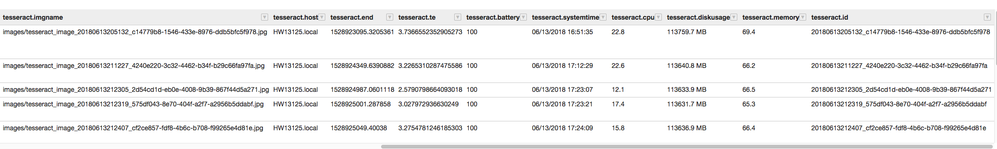

Let's look at other fields in Zeppelin

Let's Look at Our Records in Apache Zeppelin via a SQL Query (SELECT * FROM tesseract)

ConsumeMQTT: Gives us all the records from the tesseract topic on our MQTT broker. The broker isolates the flow from our ingest clients, which could be 100,000 devices.

MergeRecord: Merges all the JSON records sent via MQTT into one big Avro file.

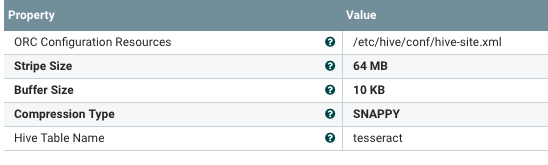

ConvertAvroToORC: Converts our merged Avro file to ORC.

PutHDFS: Stores the ORC file in HDFS.

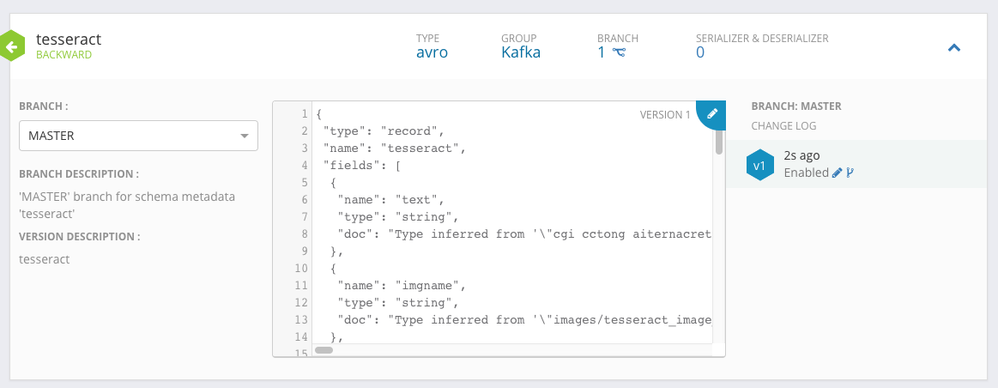

Tesseract Example Schema in Hortonworks Schema Registry

TIP: You can generate your schema with InferAvroSchema. Do that once, copy it and paste into Schema Registry. Then you can remove that step from your flow.
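As a rough illustration of what schema inference does, the sketch below maps the Python types of one sample JSON record to Avro primitive types. It is not the InferAvroSchema implementation; `avro_type` and `infer_schema` are hypothetical helpers, and nested objects, arrays and nulls are deliberately not handled.

```python
def avro_type(value):
    """Map a Python value to an Avro primitive type name."""
    # bool must be checked before int because bool is a subclass of int.
    if isinstance(value, bool):
        return "boolean"
    if isinstance(value, int):
        return "int"
    if isinstance(value, float):
        return "double"
    if isinstance(value, str):
        return "string"
    raise TypeError("unsupported value type: {0}".format(type(value).__name__))


def infer_schema(name, record):
    """Infer a flat Avro record schema from one sample record (a dict)."""
    return {
        "type": "record",
        "name": name,
        "fields": [
            {"name": key, "type": avro_type(value)}
            for key, value in record.items()
        ],
    }
```

Feeding it one sample message, for example `infer_schema("tesseract", {"text": "abc", "battery": 100, "cpu": 22.8})`, gives a schema with string, int and double fields, which can then be reviewed by hand before being pasted into a registry.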

The Schema Text

{

"type": "record",

"name": "tesseract",

"fields": [

{

"name": "text",

"type": "string",

"doc": "Type inferred from '\"cgi cctong aiternacrety, pou can acces the complete Pro\nLance repesiiry from eh Provenance mens: The Provenance\n‘emu inchades the Date/Time, Actontype, the Unsque Fowie\nTD and other sata. Om the ar it is smal exci i oe:\n‘ick chs icon, and you get the flowin On the right, war\n‘cots like three inthe cic soemecaed gether Liege:\n\nLineage ts visualined as « lange direcnad sqycie graph (DAG) char\nSrones the seeps 1m she Gow where modifications oF routing ‘oot\nplace on the Aewiike. Righe-iieit « step lp the Lineage s view\nSetusls aboot the fowtle at that step ar expand the ow to ander:\nScand where & was potentially domed frum. Af the very bottom\nleft of the Lineage Oi a slider wath a play button to play the pro\n“sing flow (with scaled ame} and understand where tbe owtise\nSpent the meat Game of at whch PORN get muted\n\naide the Bowtie dealin, you cam: finn deed analy of box\n\ntern\n=\"'"

},

{

"name": "imgname",

"type": "string",

"doc": "Type inferred from '\"images/tesseract_image_20180613205132_c14779b8-1546-433e-8976-ddb5bfc5f978.jpg\"'"

},

{

"name": "host",

"type": "string",

"doc": "Type inferred from '\"HW13125.local\"'"

},

{

"name": "end",

"type": "string",

"doc": "Type inferred from '\"1528923095.3205361\"'"

},

{

"name": "te",

"type": "string",

"doc": "Type inferred from '\"3.7366552352905273\"'"

},

{

"name": "battery",

"type": "int",

"doc": "Type inferred from '100'"

},

{

"name": "systemtime",

"type": "string",

"doc": "Type inferred from '\"06/13/2018 16:51:35\"'"

},

{

"name": "cpu",

"type": "double",

"doc": "Type inferred from '22.8'"

},

{

"name": "diskusage",

"type": "string",

"doc": "Type inferred from '\"113759.7 MB\"'"

},

{

"name": "memory",

"type": "double",

"doc": "Type inferred from '69.4'"

},

{

"name": "id",

"type": "string",

"doc": "Type inferred from '\"20180613205132_c14779b8-1546-433e-8976-ddb5bfc5f978\"'"

}

]

}

The above schema was generated by InferAvroSchema in Apache NiFi.

Image Analytics Results

{

"tiffImageWidth" : "1280",

"ContentType" : "image/jpeg",

"JPEGImageWidth" : "1280 pixels",

"FileTypeDetectedFileTypeName" : "JPEG",

"tiffBitsPerSample" : "8",

"ThumbnailHeightPixels" : "0",

"label4" : "book jacket",

"YResolution" : "1 dot",

"label5" : "pill bottle",

"ImageWidth" : "1280 pixels",

"JFIFYResolution" : "1 dot",

"JPEGImageHeight" : "720 pixels",

"filecreationTime" : "2018-06-13T17:24:07-0400",

"JFIFThumbnailHeightPixels" : "0",

"DataPrecision" : "8 bits",

"XResolution" : "1 dot",

"ImageHeight" : "720 pixels",

"JPEGNumberofComponents" : "3",

"JFIFXResolution" : "1 dot",

"FileTypeExpectedFileNameExtension" : "jpg",

"JPEGDataPrecision" : "8 bits",

"FileSize" : "223716 bytes",

"probability4" : "1.74%",

"tiffImageLength" : "720",

"probability3" : "3.29%",

"probability2" : "6.13%",

"probability1" : "81.23%",

"FileName" : "apache-tika-2858986094088526803.tmp",

"filelastAccessTime" : "2018-06-13T17:24:07-0400",

"JFIFThumbnailWidthPixels" : "0",

"JPEGCompressionType" : "Baseline",

"JFIFVersion" : "1.1",

"filesize" : "223716",

"FileModifiedDate" : "Wed Jun 13 17:24:27 -04:00 2018",

"Component3" : "Cr component: Quantization table 1, Sampling factors 1 horiz/1 vert",

"Component1" : "Y component: Quantization table 0, Sampling factors 2 horiz/2 vert",

"Component2" : "Cb component: Quantization table 1, Sampling factors 1 horiz/1 vert",

"NumberofTables" : "4 Huffman tables",

"FileTypeDetectedFileTypeLongName" : "Joint Photographic Experts Group",

"fileowner" : "tspann",

"filepermissions" : "rw-r--r--",

"JPEGComponent3" : "Cr component: Quantization table 1, Sampling factors 1 horiz/1 vert",

"JPEGComponent2" : "Cb component: Quantization table 1, Sampling factors 1 horiz/1 vert",

"JPEGComponent1" : "Y component: Quantization table 0, Sampling factors 2 horiz/2 vert",

"FileTypeDetectedMIMEType" : "image/jpeg",

"NumberofComponents" : "3",

"HuffmanNumberofTables" : "4 Huffman tables",

"label1" : "menu",

"XParsedBy" : "org.apache.tika.parser.DefaultParser, org.apache.tika.parser.ocr.TesseractOCRParser, org.apache.tika.parser.jpeg.JpegParser",

"label2" : "web site",

"label3" : "crossword puzzle",

"absolutepath" : "/Volumes/seagate/opensourcecomputervision/images/",

"filelastModifiedTime" : "2018-06-13T17:24:07-0400",

"ThumbnailWidthPixels" : "0",

"filegroup" : "staff",

"ResolutionUnits" : "none",

"JFIFResolutionUnits" : "none",

"CompressionType" : "Baseline",

"probability5" : "1.12%"

}

This is built using a combination of Apache Tika, TensorFlow and other metadata analysis processors.
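Because the classification results arrive as flat attributes (label1 to label5 and probability1 to probability5), it can help to pair them up before querying or displaying them. The sketch below is one way to do that in Python; `top_labels` is a hypothetical helper, and it assumes the attribute naming and percent formatting shown in the JSON above.

```python
def top_labels(attributes, count=5):
    """Pair labelN with probabilityN and sort by probability, highest first."""
    results = []
    for i in range(1, count + 1):
        label = attributes.get("label{0}".format(i))
        probability = attributes.get("probability{0}".format(i))
        if label is None or probability is None:
            # Skip slots that are missing either half of the pair.
            continue
        # Probabilities are strings such as "81.23%".
        results.append((label, float(probability.rstrip("%"))))
    return sorted(results, key=lambda pair: pair[1], reverse=True)
```

Applied to the attributes above, the first entry would pair the label "menu" with its probability of 81.23.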